Navigating Global Analytical Method Validation: A Comparative Framework for Regulatory Compliance

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to compare and navigate analytical method validation requirements across major global regulatory guidelines, including ICH, FDA, USP, EMA, and WHO. It covers foundational principles, practical application methodologies, common troubleshooting strategies, and a direct comparative analysis of regional requirements. By synthesizing the latest regulatory expectations—such as those in the modernized ICH Q2(R2) and Q14 guidelines—this guide aims to equip professionals with the knowledge to develop robust, compliant validation protocols, optimize resource allocation, and facilitate successful global market submissions.

Understanding the Pillars of Analytical Method Validation

Analytical method validation is the documented process of demonstrating that an analytical procedure is suitable for its intended purpose, ensuring that it consistently produces reliable, accurate, and reproducible results within specified parameters [1]. In the pharmaceutical and life sciences industries, method validation is a critical component of regulatory compliance and quality assurance. It ensures that testing methods used during drug development, manufacturing, and release meet stringent regulatory expectations and scientific standards, thereby guaranteeing the identity, strength, quality, purity, and potency of drug substances and products [1] [2].

The importance of analytical method validation extends beyond mere regulatory formality. It serves as a fundamental scientific requirement for ensuring the integrity of data generated during pharmaceutical development and manufacturing [3]. Validated methods support release and stability testing of pharmaceutical products, enable consistent and reproducible results across different laboratories and analysts, and ensure compliance with Good Manufacturing Practice (GMP) and other regulatory requirements [1]. For biotechnology companies conducting early-phase clinical trials, robust analytical method development is particularly crucial for commercial success, regulatory approval, and ultimately, patient safety [2].

Regulatory Framework and Guidelines

The regulatory landscape for analytical method validation is primarily shaped by harmonized international guidelines, with the International Council for Harmonisation (ICH) serving as the cornerstone for global standards. The ICH provides a harmonized framework that, once adopted by member regulators, serves as the common benchmark for analytical method guidelines, so that a method validated for one region is far more readily accepted in others [4]. This streamlined approach helps pharmaceutical companies navigate the complex patchwork of regional regulations, facilitating the path from development to market.

Key Regulatory Guidelines

Several key guidelines govern analytical method validation in the pharmaceutical industry, with ICH guidelines representing the global standard. The revised ICH Q2(R2) guideline, adopted in November 2023, modernizes the principles for validation of analytical procedures by expanding its scope to include modern technologies and emphasizing a science- and risk-based approach to validation [4] [5]. Adopted alongside it, the new ICH Q14 guideline introduces a comprehensive framework for analytical procedure development, emphasizing a systematic, risk-based approach and concepts like the Analytical Target Profile (ATP) [4].

The U.S. Food and Drug Administration (FDA), as a key member of ICH, adopts and implements these harmonized guidelines, making compliance with ICH standards essential for meeting FDA requirements [4]. The FDA's approach expands upon the ICH framework while addressing requirements unique to the U.S. regulatory landscape, with particular emphasis on method robustness and thorough documentation of analytical accuracy [6]. The United States Pharmacopeia general chapter <1225> establishes foundational guidance for validating analytical procedures used in pharmaceutical testing, outlining the data required for four categories of compendial procedures: Category I (quantitation of major components or active ingredients, i.e., assays), Category II (determination of impurities or degradation products, as either quantitative tests or limit tests), Category III (performance characteristics such as dissolution or drug release), and Category IV (identification tests) [6].

Other significant regulatory bodies with their own guidelines include the European Medicines Agency (EMA), which adopts ICH guidelines; the World Health Organization (WHO); and the Association of Southeast Asian Nations (ASEAN), each with specific regional requirements that, while showing notable variations in validation approaches, all emphasize product quality, safety, and efficacy [7].

Recent Developments: ICH Q2(R2) and Q14

The simultaneous release of ICH Q2(R2) and ICH Q14 represents a significant modernization of analytical method guidelines, marking a shift from a prescriptive, "check-the-box" approach to a more scientific, lifecycle-based model [4]. This evolution emphasizes that analytical procedure validation is not a one-time event but rather a continuous process that begins with method development and continues throughout the method's entire lifecycle [4]. Key enhancements in these updated guidelines include the inclusion of biological assays, expanded guidance for modern techniques such as multivariate analytical procedures, and the formalization of concepts like the Analytical Target Profile (ATP) as a prospective summary of a method's intended purpose and desired performance characteristics [5] [4].

Table: Key Regulatory Guidelines for Analytical Method Validation

| Regulatory Body | Guideline | Scope and Focus | Status/Effective Date |
|---|---|---|---|
| International Council for Harmonisation (ICH) | Q2(R2) | Validation of analytical procedures; covers chemical and biological drugs, modern analytical technologies | Adopted November 2023 [5] |
| International Council for Harmonisation (ICH) | Q14 | Analytical procedure development; introduces ATP and enhanced approach | Adopted November 2023, together with Q2(R2) [3] |
| U.S. Food and Drug Administration (FDA) | Analytical Procedures and Methods Validation | Implements ICH guidelines with additional U.S.-specific requirements; emphasizes robustness and documentation | Effective (references ICH Q2(R2)) [4] |
| European Medicines Agency (EMA) | ICH Q2(R2) | Adopts and implements ICH guidelines within the European Union | Legally effective June 2024 [8] |
| U.S. Pharmacopeia (USP) | <1225> | Validation of compendial procedures; categorizes methods and defines acceptance criteria | Continuously updated [6] |

Core Validation Parameters and Acceptance Criteria

According to ICH Q2(R2) guidelines, analytical method validation requires the evaluation of specific performance parameters to demonstrate that a method is fit for its intended purpose [8] [3]. The specific parameters tested depend on the type of analytical procedure (e.g., identification test, quantitative impurity test, limit test, or assay) [6]. For each parameter, predefined and justified acceptance criteria must be established based on the method's purpose and regulatory expectations [3].
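The dependence of the required parameters on procedure type can be captured as a simple lookup. The sketch below is illustrative only: it follows the familiar summary table from the earlier ICH Q2(R1) text, and the dictionary name, key names, and helper function are assumptions introduced here, so the mapping should be confirmed against the current guideline before it is used in a protocol.

```python
# Illustrative mapping of procedure type to the validation characteristics
# normally evaluated, following the summary table in ICH Q2(R1).
# Assumption: all names below are hypothetical; verify the content against
# the current guideline text before relying on it.
REQUIRED_CHARACTERISTICS = {
    "identification": {"specificity"},
    "impurity_quantitative": {
        "accuracy", "repeatability", "intermediate_precision",
        "specificity", "quantitation_limit", "linearity", "range",
    },
    "impurity_limit": {"specificity", "detection_limit"},
    "assay": {
        "accuracy", "repeatability", "intermediate_precision",
        "specificity", "linearity", "range",
    },
}


def missing_characteristics(procedure_type, studied):
    """Return the characteristics not yet covered, sorted by name."""
    required = REQUIRED_CHARACTERISTICS[procedure_type]
    return sorted(required - set(studied))


# Example: an assay validation plan that so far covers three characteristics.
print(missing_characteristics("assay", ["accuracy", "specificity", "linearity"]))
```

A lookup like this is mainly useful as a planning checklist; the acceptance criteria for each characteristic still have to be justified case by case.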

Table: Core Validation Parameters and Typical Acceptance Criteria

| Validation Parameter | Definition | Typical Acceptance Criteria Examples | Common Experimental Approach |
|---|---|---|---|
| Accuracy [1] | The closeness of test results to the true value [4]. | Percent recovery of 98-102% for API assay [3]. | Analyze samples of known concentration (e.g., spiked placebo) [4]. |
| Precision [1] | The degree of agreement among individual test results from repeated samplings [4]. Includes repeatability and intermediate precision. | %RSD ≤ 2% for assay methods [3]. | Multiple measurements under same (repeatability) and varied conditions (intermediate precision) [3]. |
| Specificity [1] | The ability to assess the analyte unequivocally in the presence of other components [4]. | No interference from impurities, degradants, or matrix [1]. | Chromatographic analysis of samples with and without potential interferents [3]. |
| Linearity [1] | The ability to obtain test results proportional to analyte concentration [4]. | Correlation coefficient (r) > 0.998 [3]. | Analyze a series of solutions at different concentrations across the specified range [1]. |
| Range [1] | The interval between upper and lower analyte concentrations demonstrating suitable linearity, accuracy, and precision [4]. | Typically 80-120% of test concentration for assay [1]. | Established from linearity data, confirming accuracy and precision at the extremes [1]. |
| Limit of Detection (LOD) [1] | The lowest amount of analyte that can be detected but not necessarily quantified [4]. | Signal-to-noise ratio ≥ 3:1 [3]. | Based on signal-to-noise ratio or standard deviation of the response [1]. |
| Limit of Quantitation (LOQ) [1] | The lowest amount of analyte that can be quantified with acceptable accuracy and precision [4]. | Signal-to-noise ratio ≥ 10:1, with accuracy and precision ±20% [3]. | Based on signal-to-noise ratio or standard deviation of the response and accuracy data [1]. |
| Robustness [1] | The method's capacity to remain unaffected by small, deliberate variations in method parameters [4]. | Consistent system suitability results under varied conditions. | Deliberate variations in parameters like pH, mobile phase composition, or temperature [3]. |
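For the LOD and LOQ rows, the approach based on the standard deviation of the response is commonly written as follows. The symbols are introduced here for illustration: σ is the standard deviation of the response (for example, the residual standard deviation of a calibration line or the standard deviation of blank responses) and S is the slope of the calibration curve.

```latex
\[
\mathrm{LOD} = \frac{3.3\,\sigma}{S},
\qquad
\mathrm{LOQ} = \frac{10\,\sigma}{S}
\]
```

As a worked example with hypothetical values, σ = 0.5 area units and S = 10 area units per µg/mL give LOD = 3.3 × 0.5 / 10 = 0.165 µg/mL and LOQ = 10 × 0.5 / 10 = 0.5 µg/mL. Estimates obtained this way are normally confirmed by analyzing samples prepared near the calculated limits.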

Detailed Experimental Protocols for Key Parameters

Protocol for Accuracy Assessment

Accuracy is typically assessed by applying the analytical method to samples of known concentration and comparing the measured value to the true value [3]. For drug product analysis, accuracy may be determined by spiking a placebo with known quantities of the active pharmaceutical ingredient (API) [4]. A minimum of nine determinations across a minimum of three concentration levels covering the specified range (e.g., 80%, 100%, 120%) is recommended [1]. Results are expressed as percent recovery of the known amount of analyte, or as the difference between the mean and the accepted true value (bias) [3].

Protocol for Precision Evaluation

Precision is evaluated at multiple levels, encompassing repeatability, intermediate precision, and reproducibility [1]. Repeatability (intra-assay precision) is assessed using a minimum of nine determinations covering the specified range for the procedure (e.g., three concentrations with three replicates each) or a minimum of six determinations at 100% of the test concentration [1]. Intermediate precision involves evaluating the influence of random events on the analysis, such as different days, different analysts, or different equipment within the same laboratory [3]. Results are expressed as the relative standard deviation (%RSD) for the series of measurements [3].

Protocol for Specificity Demonstration

For identity tests, specificity requires that the method can discriminate between compounds of closely related structure which are likely to be present [3]. For assays and impurity tests, specificity is demonstrated by showing that the response from the analyte is unaffected by the presence of impurities, excipients, or matrix components [1]. In chromatographic methods, this is typically achieved by injecting individually solutions of the analyte, potential impurities, degradation products (generated by stress testing), placebo, and the complete mixture to demonstrate resolution and absence of interference at the retention time of the analyte [3].

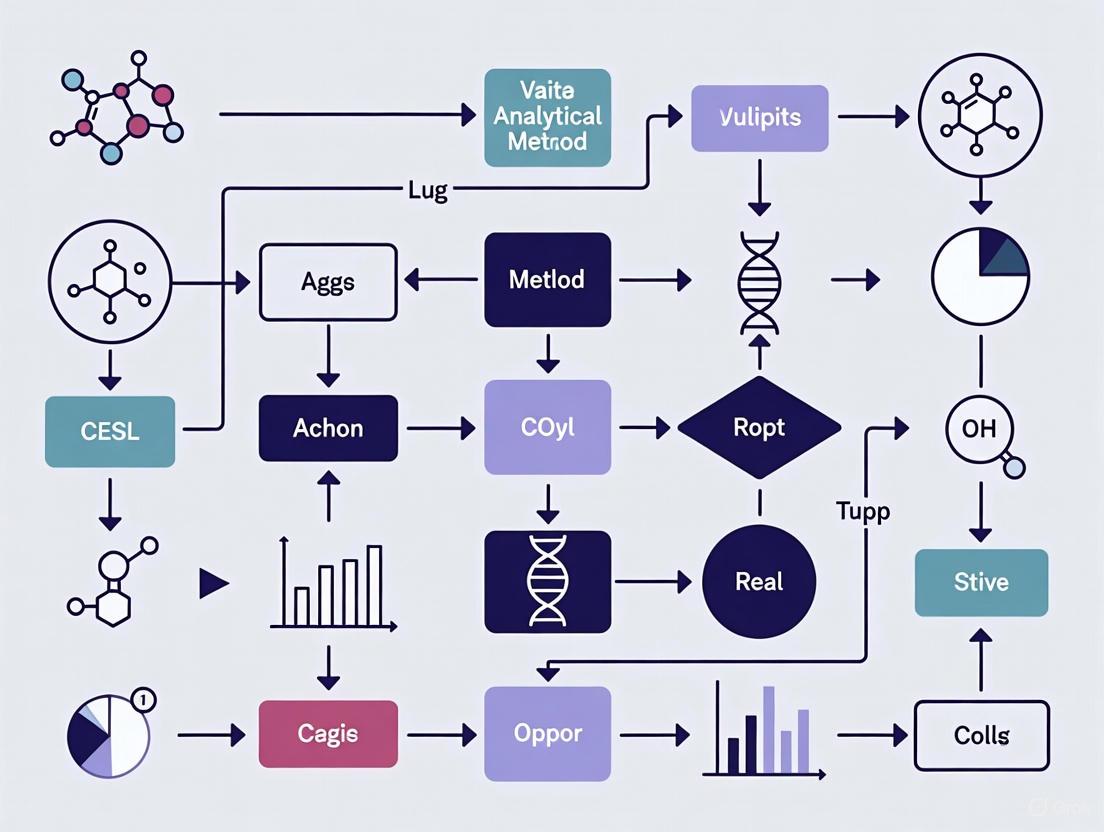

Analytical Method Lifecycle and Workflow

The modernized approach introduced by ICH Q2(R2) and Q14 emphasizes that analytical method validation is not a one-time event but part of a continuous lifecycle that begins with development and continues through to retirement [4]. This lifecycle management ensures methods remain fit-for-purpose throughout their use and allows for more flexible, science-based post-approval changes [4]. The following workflow diagram illustrates the key stages and decision points in the analytical method lifecycle, from initial requirement identification through routine use and eventual retirement or revalidation.

Method Transfer Protocol

A critical aspect of the method lifecycle is analytical method transfer, which qualifies a receiving laboratory to use an analytical procedure that originated in another laboratory [2]. This process is typically managed under a formal transfer protocol that details the parameters to be evaluated and the predetermined acceptance criteria [2]. Transfer studies usually involve two or more laboratories (originating and receiving) executing the pre-approved transfer protocol, which often includes comparative testing of homogeneous samples to demonstrate equivalent performance between laboratories [2]. Successful method transfer ensures that analytical methods produce consistent and reproducible results across different sites, which is essential for maintaining product quality when manufacturing or testing activities are relocated.
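Comparative testing between laboratories can be summarized with a few lines of arithmetic. The sketch below is a minimal illustration, not a prescribed procedure: the function name, the sample data, and both acceptance limits (difference in means and receiving-laboratory %RSD) are hypothetical, and a real transfer protocol would define and justify its own criteria in advance.

```python
from statistics import mean, stdev


def transfer_summary(originating, receiving, max_mean_diff=2.0, max_rsd=2.0):
    """Compare assay results (% label claim) from two laboratories.

    Assumption: both limits are hypothetical placeholders standing in for
    the predetermined criteria of an approved transfer protocol.
    """
    diff = abs(mean(originating) - mean(receiving))
    rsd_receiving = 100.0 * stdev(receiving) / mean(receiving)
    return {
        "mean_difference": diff,
        "receiving_rsd": rsd_receiving,
        "passes": diff <= max_mean_diff and rsd_receiving <= max_rsd,
    }


# Hypothetical results for six preparations of one homogeneous lot.
originating_lab = [99.8, 100.2, 100.1, 99.9, 100.0, 100.3]
receiving_lab = [99.5, 100.0, 99.7, 100.4, 99.9, 100.1]

result = transfer_summary(originating_lab, receiving_lab)
print(f"Difference in means: {result['mean_difference']:.2f}")
print(f"Receiving lab %RSD:  {result['receiving_rsd']:.2f}")
print("Transfer criteria met" if result["passes"] else "Transfer criteria not met")
```

Some protocols use a formal equivalence test instead of a fixed limit on the difference in means; the simple comparison here is only meant to show the shape of the calculation.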

Essential Research Reagent Solutions and Materials

The successful development and validation of analytical methods requires specific high-quality reagents, reference standards, and materials. The following table details key research reagent solutions essential for conducting validation experiments, particularly for chromatographic methods commonly used in pharmaceutical analysis.

Table: Essential Research Reagents and Materials for Analytical Method Validation

| Reagent/Material | Function and Role in Validation | Key Quality Attributes |
|---|---|---|
| Chemical Reference Standards [3] | Certified reference materials used to establish method accuracy, prepare calibration curves for linearity, and determine specificity. | High purity (>95%), well-characterized identity and structure, certified purity value with uncertainty. |
| Active Pharmaceutical Ingredient (API) | Serves as the primary analyte for method development and validation; used in accuracy (recovery) and precision studies. | Well-defined synthetic route, comprehensive characterization, known impurity profile, stability data. |
| Placebo/Formulation Excipients | Used in specificity testing to demonstrate no interference from non-active components in the drug product matrix. | Representative of commercial product composition, individually sourced for interference testing. |
| Forced Degradation Samples [3] | Artificially degraded samples (acid/base, oxidative, thermal, photolytic) used to demonstrate method specificity and stability-indicating properties. | Generated under controlled conditions, degradation level typically 5-20%, identified major degradation products. |
| HPLC-Grade Solvents | Used as mobile phase components and for sample preparation; critical for achieving consistent chromatographic performance and baseline stability. | Low UV absorbance, high purity, low particulate content, consistent lot-to-lot quality. |
| Buffer Salts and Additives | Used to adjust mobile phase pH and ionic strength; critical for achieving optimal separation, peak shape, and method robustness. | HPLC grade, controlled pH ±0.1 units, specified buffer capacity, filtered and degassed before use. |

Analytical method validation serves as an indispensable pillar of pharmaceutical quality assurance, providing the scientific evidence that analytical methods are fit for their intended purpose in ensuring the identity, strength, quality, purity, and potency of drug substances and products [1] [8]. The regulatory framework governing method validation, particularly the recently modernized ICH Q2(R2) and Q14 guidelines, has evolved from a prescriptive checklist approach to a more scientific, risk-based lifecycle model that emphasizes method understanding, robustness, and continuous improvement [4] [5]. This harmonized framework enables pharmaceutical companies to develop validated methods that meet global regulatory expectations, thereby facilitating market access while maintaining the highest standards of product quality and patient safety [7].

For researchers and drug development professionals engaged in comparative studies of validation requirements, understanding the core parameters, their experimental protocols, and acceptance criteria remains fundamental [6] [3]. The successful implementation of these validation principles requires not only technical competence but also a robust quality management system, appropriate reagent qualification, and adherence to good documentation practices [9]. As the pharmaceutical industry continues to evolve with increasingly complex modalities and advanced analytical technologies, the principles of method validation outlined in this article provide a stable foundation for ensuring data integrity and regulatory compliance throughout the drug development lifecycle.

In pharmaceutical development, the reliability of analytical data is the cornerstone of product quality, regulatory compliance, and patient safety. Analytical method validation provides documented evidence that a procedure is fit for its intended purpose, ensuring that test results are both trustworthy and meaningful [10] [4]. Among the various performance characteristics defined by guidelines such as the International Council for Harmonisation (ICH) Q2(R2), four parameters form the essential foundation of a robust analytical procedure: Accuracy, Precision, Specificity, and Linearity [11] [8] [4]. These core parameters collectively assure that a method correctly measures the analyte, yields consistent results, is unaffected by interfering components, and provides proportional responses across the required concentration range. This application note details the experimental protocols and acceptance criteria for demonstrating these foundational parameters, providing researchers and scientists with a structured framework for validation activities aligned with modern regulatory standards.

Core Parameter Definitions and Regulatory Significance

The ICH Q2(R2) guideline serves as the primary global standard for validating analytical procedures for pharmaceutical registration applications [8] [4]. The following table summarizes the formal definitions and critical importance of the four core parameters.

Table 1: Core Validation Parameters: Definitions and Significance

| Parameter | Formal Definition | Regulatory and Scientific Significance |
|---|---|---|
| Accuracy | "The closeness of agreement between the value which is accepted either as a conventional true value or an accepted reference value and the value found." [12] [13] [14] | Ensures that measured values are close to the true value, which is critical for correct potency assessment, purity evaluation, and ensuring patient safety and product efficacy [10] [14]. |
| Precision | "The closeness of agreement (degree of scatter) between a series of measurements obtained from multiple sampling of the same homogeneous sample under the prescribed conditions." [12] [13] [14] | Demonstrates the method's reliability and consistency, confirming that results are reproducible under normal operating variations (e.g., different analysts, days, equipment) [11] [4]. |
| Specificity | "The ability to assess unequivocally the analyte in the presence of components which may be expected to be present." [12] | Establishes that the method can accurately measure the target analyte amidst potential interferents like impurities, degradants, or sample matrix components, ensuring the result's identity and purity [11] [12] [13]. |
| Linearity | "The ability (within a given range) to obtain test results which are directly proportional to the concentration (amount) of analyte in the sample." [12] [14] | Provides the scientific basis for quantifying the analyte, demonstrating that the instrument response is directly proportional to concentration across the specified range, which is foundational for generating accurate quantitative results [4] [14]. |

Experimental Protocols and Methodologies

Accuracy

The protocol for Accuracy demonstrates that the method yields results that are close to the true value.

- Experimental Design: Accuracy is established by analyzing a minimum of nine determinations over a minimum of three concentration levels covering the specified range (e.g., 80%, 100%, 120% of the target concentration) [13] [14]. For a drug product, this is typically done by spiking a placebo or blank matrix with known quantities of the analyte; where a representative placebo is not available, known quantities of the analyte can instead be added to the drug product itself (standard addition) [13] [14]. For a drug substance, a reference standard of known purity can be used [14].

- Sample Preparation: Prepare synthetic mixtures of the drug product by adding known weights of the drug substance (analyte) to the corresponding placebo. Alternatively, prepare solutions of a certified reference material at the three specified concentration levels. For each level, prepare three independent samples.

- Data Analysis: Calculate the recovery (%) for each sample as (Measured Concentration / Known Concentration) × 100. Report the mean recovery and a measure of its variability (e.g., the standard deviation or a confidence interval) for each concentration level [13] [14].

- Acceptance Criteria: The mean recovery at each concentration level should be within established limits, commonly 98.0% - 102.0% for assay methods, with a predefined precision (e.g., %RSD) [14].

Table 2: Experimental Design for Assessing Accuracy

| Concentration Level | Number of Independent Samples | Data Reporting | Typical Acceptance Criteria (for Assay) |
|---|---|---|---|
| Low (e.g., 80% of target) | 3 | Mean Recovery (%) ± SD | 98.0% - 102.0% |
| Medium (e.g., 100% of target) | 3 | Mean Recovery (%) ± SD | 98.0% - 102.0% |
| High (e.g., 120% of target) | 3 | Mean Recovery (%) ± SD | 98.0% - 102.0% |
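The recovery calculation for a three-level, three-replicate design can be sketched as follows. The concentrations are invented for illustration, the function name is hypothetical, and the 98.0% to 102.0% window is simply the typical assay criterion quoted above rather than a universal limit.

```python
from statistics import mean, stdev

# Hypothetical spiked-placebo data. Each nominal level (% of target) maps to
# (known concentration, [measured concentrations]), all in mg/mL.
spiked = {
    80: (0.80, [0.796, 0.803, 0.799]),
    100: (1.00, [1.004, 0.995, 1.001]),
    120: (1.20, [1.193, 1.206, 1.199]),
}


def recovery_summary(known, measured, low=98.0, high=102.0):
    """Mean percent recovery, its SD, and a pass flag for one level."""
    recoveries = [100.0 * m / known for m in measured]
    avg = mean(recoveries)
    return {
        "mean_recovery": avg,
        "sd": stdev(recoveries),
        "passes": low <= avg <= high,
    }


for level, (known, measured) in spiked.items():
    s = recovery_summary(known, measured)
    verdict = "pass" if s["passes"] else "fail"
    print(f"{level}% level: mean recovery {s['mean_recovery']:.2f}% "
          f"(SD {s['sd']:.2f}) -> {verdict}")
```

Evaluating each level separately, rather than pooling all nine results, makes a concentration-dependent bias visible.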

Precision

Precision is evaluated at multiple levels, with Repeatability and Intermediate Precision being the minimum requirements for a validated method.

- Experimental Design - Repeatability (Intra-assay): Analyze a minimum of nine determinations covering the specified range (three concentrations/three replicates each) or a minimum of six determinations at 100% of the test concentration [13] [14]. All measurements should be performed under identical conditions, by the same analyst, in a short time interval.

- Experimental Design - Intermediate Precision: Demonstrate the method's performance under variations within the same laboratory. A common approach involves two different analysts, each preparing and analyzing replicate sample preparations (e.g., six each at 100% concentration) on different days and/or using different HPLC systems [13]. The experimental design should allow monitoring the effects of these individual variables.

- Data Analysis: Calculate the standard deviation (SD) and relative standard deviation (RSD or Coefficient of Variation) for the results from the repeatability and intermediate precision studies [13] [14]. For intermediate precision, the %-difference in the mean values between the two analysts' results can be subjected to statistical testing (e.g., Student's t-test) [13].

- Acceptance Criteria: The RSD for repeatability is typically required to be not more than 1-2% for a drug substance assay. For intermediate precision, the RSD should meet the same criteria, and no significant difference should be found between the results obtained by different analysts or instruments [13].
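A minimal sketch of the precision calculations is given below, using invented results for two analysts with six preparations each. The function names and data are hypothetical, and the t statistic is the classical pooled-variance (equal variance) form, which is only one of several reasonable ways to compare the two analysts.

```python
from math import sqrt
from statistics import mean, stdev


def percent_rsd(values):
    """Relative standard deviation (coefficient of variation) in percent."""
    return 100.0 * stdev(values) / mean(values)


def pooled_t_statistic(a, b):
    """Two-sample Student's t statistic assuming equal variances."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * stdev(a) ** 2 + (nb - 1) * stdev(b) ** 2) / (na + nb - 2)
    return (mean(a) - mean(b)) / sqrt(pooled_var * (1 / na + 1 / nb))


# Hypothetical assay results (% label claim), six preparations per analyst.
analyst_1 = [99.8, 100.2, 100.1, 99.9, 100.0, 100.3]
analyst_2 = [99.5, 100.0, 99.7, 100.4, 99.9, 100.1]

# Tabulated two-sided critical value for alpha = 0.05 and 10 degrees of freedom.
T_CRITICAL = 2.228

t_value = pooled_t_statistic(analyst_1, analyst_2)
print(f"Repeatability %RSD, analyst 1: {percent_rsd(analyst_1):.2f}")
print(f"Repeatability %RSD, analyst 2: {percent_rsd(analyst_2):.2f}")
print(f"Intermediate precision %RSD (all 12): {percent_rsd(analyst_1 + analyst_2):.2f}")
print(f"t = {t_value:.2f}; significant difference: {abs(t_value) > T_CRITICAL}")
```

The critical value is hard-coded for this sample size to keep the example dependency-free; with a different number of replicates it would need to be looked up again or computed with a statistics library.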

Specificity

The protocol for Specificity proves that the method can distinguish the analyte from all other components.

- Experimental Design: Inject and analyze the following solutions individually:

- Blank/Placebo: The sample matrix without the analyte.

- Analyte Standard: A pure reference standard of the analyte.

- Stressed or Spiked Samples: Samples containing potential interferents, such as known impurities, degradation products (generated by stressing the sample), or process-related intermediates [13].

- Sample Preparation: For forced degradation studies, stress the drug product or substance under appropriate conditions (e.g., acid/base, heat, light, oxidation). For spiking, add available impurities to the sample matrix.

- Data Analysis: In chromatographic methods, specificity is demonstrated by achieving baseline separation between the analyte peak and the closest eluting potential interferent, with a resolution of at least 1.5 and often 2.0 or higher as the target [13]. Peak identity and purity should be confirmed using techniques like photodiode-array (PDA) detection or mass spectrometry (MS) to ensure the analyte peak is homogeneous and free from co-eluting components [13].

- Acceptance Criteria: The blank/placebo chromatogram should show no interference at the retention time of the analyte. The analyte peak in the sample should be pure as confirmed by peak purity tools, and well-resolved from all other peaks [13].
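The resolution check can be sketched with the common baseline-width formula, Rs = 2(t2 - t1) / (w1 + w2). The peak table, names, and the 1.5 limit used here are illustrative assumptions; chromatography data systems usually report resolution directly, sometimes using the half-height variant of the formula.

```python
def resolution(t1, w1, t2, w2):
    """Resolution from retention times and baseline peak widths (same units)."""
    return 2.0 * (t2 - t1) / (w1 + w2)


# Hypothetical peak table: (name, retention time in min, baseline width in min).
peaks = [
    ("impurity A", 4.10, 0.30),
    ("analyte", 4.90, 0.34),
    ("degradant B", 5.80, 0.36),
]


def analyte_resolutions(peaks, analyte="analyte"):
    """Resolution between the analyte and each adjacent peak."""
    ordered = sorted(peaks, key=lambda p: p[1])
    results = {}
    for (name_a, t_a, w_a), (name_b, t_b, w_b) in zip(ordered, ordered[1:]):
        if analyte in (name_a, name_b):
            neighbour = name_b if name_a == analyte else name_a
            results[neighbour] = resolution(t_a, w_a, t_b, w_b)
    return results


MIN_RESOLUTION = 1.5  # assumed acceptance limit for this illustration

for neighbour, rs in analyte_resolutions(peaks).items():
    status = "ok" if rs >= MIN_RESOLUTION else "insufficient"
    print(f"analyte vs {neighbour}: Rs = {rs:.2f} ({status})")
```

Only the peaks adjacent to the analyte are evaluated, since the closest eluting interferent is the critical pair for specificity.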

Linearity and Range

Linearity establishes the proportional relationship between analyte concentration and instrument response, while the Range is the interval between the upper and lower concentrations for which this linearity, as well as acceptable accuracy and precision, has been demonstrated [12] [14].

- Experimental Design: Prepare a minimum of five concentrations of the analyte over the specified range (e.g., 50%, 75%, 100%, 125%, 150% for content uniformity; 80%, 90%, 100%, 110%, 120% for assay) [15] [14]. Analyze each concentration in duplicate or triplicate.

- Sample Preparation: Prepare solutions from independent weighings or stock solution dilutions. For drug product linearity, the solutions can be prepared by spiking the placebo with the drug substance or by using a synthetic sample mixture [14].

- Data Analysis: Plot the mean response (e.g., peak area) against the analyte concentration. Perform linear regression analysis using the method of least squares to calculate the correlation coefficient (r), coefficient of determination (r²), y-intercept, and slope [14]. The residuals (difference between the experimental and calculated points) should be randomly distributed.

- Acceptance Criteria: The correlation coefficient (r) is typically required to be greater than 0.998, or r² > 0.995 [14]. The y-intercept should not be significantly different from zero, and the residuals should show no systematic pattern.

Table 3: Experimental Design for Assessing Linearity

| Parameter | Recommended Practice | Data Analysis | Typical Acceptance Criteria |
|---|---|---|---|
| Linearity | A minimum of 5 concentration levels, analyzed in duplicate [15] [14]. | Linear regression by least-squares method. | r² > 0.995 (or r > 0.998) [14]. |
| Range | Derived from linearity, accuracy, and precision studies. | The interval where linearity, accuracy, and precision are all acceptable. | Assay: 80-120% of test concentration [14]. |
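The least-squares evaluation described above can be sketched in plain Python. The five-level data set is invented, the function name is hypothetical, and the thresholds are the typical values quoted in this section rather than fixed regulatory limits.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least-squares fit; returns slope, intercept, r, residuals."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    r = sxy / (sxx * syy) ** 0.5
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    return slope, intercept, r, residuals


# Hypothetical assay linearity data: concentration as % of target versus
# mean peak area at each level.
concentration = [80, 90, 100, 110, 120]
peak_area = [801.2, 899.1, 1001.5, 1098.7, 1201.0]

slope, intercept, r, residuals = fit_line(concentration, peak_area)
print(f"slope = {slope:.3f}, intercept = {intercept:.2f}")
print(f"r = {r:.5f}, r^2 = {r * r:.5f}")
print("residuals:", [round(e, 2) for e in residuals])
print("meets r > 0.998:", r > 0.998)
```

A high correlation coefficient alone does not prove linearity, which is why the residuals are returned as well; a curved pattern in them indicates a systematic deviation even when r is close to 1.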

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table lists key materials and solutions required for the successful execution of the validation protocols described above.

Table 4: Essential Research Reagents and Materials for Validation Studies

| Item | Function and Critical Specifications |
|---|---|
| Certified Reference Standard | High-purity analyte material with certified purity and identity, used to prepare calibration standards and accuracy spikes. It is the primary benchmark for trueness [14]. |
| Placebo/Blank Matrix | A mixture of all drug product components except the active analyte. Used in specificity testing to rule out matrix interference and in accuracy studies for spiking experiments [12] [14]. |
| Known Impurity Standards | Authentic samples of potential impurities and degradation products. Used to challenge method specificity by demonstrating resolution from the main analyte [13] [14]. |
| Chromatographic Mobile Phase | Solvent or buffer system used as the eluent in LC methods. Its composition, pH, and grade must be strictly controlled as per method specifications, as these are critical for robustness [12]. |
| Sample Preparation Solvents | High-purity solvents and reagents for dissolving and extracting samples. Must be compatible with the analyte and the analytical system to prevent interference or instability. |

| Sample Preparation Solvents | High-purity solvents and reagents for dissolving and extracting samples. Must be compatible with the analyte and the analytical system to prevent interference or instability. |

Method Validation Workflow and Parameter Relationships

The validation of an analytical method is a logical sequence of experiments where parameters are interdependent. The following diagram illustrates the typical workflow and key relationships between the four core validation parameters.

Figure 1: Core Parameter Validation Workflow. This diagram outlines the logical progression for establishing core validation parameters, highlighting their interdependencies. ATP = Analytical Target Profile.

A rigorous and science-based approach to validating Accuracy, Precision, Specificity, and Linearity is non-negotiable for generating reliable analytical data in pharmaceutical research and development. The experimental protocols detailed in this application note, aligned with ICH Q2(R2) principles, provide a solid foundation for demonstrating that an analytical procedure is fit for its intended purpose [8] [4]. By systematically executing these studies and adhering to predefined acceptance criteria, scientists can ensure the integrity of their data, support robust regulatory submissions, and ultimately safeguard product quality and patient safety.

The development, validation, and application of analytical methods are governed by a harmonized yet complex framework of regulatory guidelines. These guidelines, established by international and national bodies, ensure that analytical procedures used in the pharmaceutical industry are reliable, reproducible, and fit for their intended purpose. The International Council for Harmonisation of Technical Requirements for Pharmaceuticals for Human Use (ICH), the U.S. Food and Drug Administration (FDA), and the United States Pharmacopeia (USP) form the cornerstone of this framework. For researchers and drug development professionals, understanding the interplay between these guidelines is crucial for designing robust analytical procedures that meet regulatory expectations. This document, framed within broader research on comparing analytical method validation requirements, provides detailed application notes and experimental protocols for navigating this regulatory landscape.

The current regulatory environment is dynamic, with significant recent updates. The ICH Q2(R2) guideline on analytical procedure validation was recently finalized, and USP is actively revising its General Chapter <1225> to align with this new standard [16]. Simultaneously, the FDA has increased its focus on ensuring that both compendial and non-compendial methods are properly validated and verified, a trend observed in recent inspections [17]. These developments underscore the importance of using the most current information when designing validation studies.

ICH Guidelines

The ICH provides harmonized technical requirements for pharmaceutical registration across its member regions, which began with the EU, Japan, and the USA and have since expanded to include additional regulatory members. The recently updated ICH Q2(R2) guideline, titled "Validation of Analytical Procedures," serves as the primary international standard for validating analytical procedures used in the pharmaceutical industry [8] [16].

- Scope and Application: ICH Q2(R2) applies to analytical procedures used for the release and stability testing of commercial drug substances and products, including both chemical and biological/biotechnological entities. It covers the most common types of analytical procedures: assay/potency, purity, impurities, identity, and other quantitative or qualitative measurements [8]. The guideline is intended to be applied following a risk-based approach and can be extended to other analytical procedures used as part of a control strategy.

- Core Principles: The guideline emphasizes a life cycle approach to analytical procedures, connecting it with the concepts described in ICH Q14 on Analytical Procedure Development. It provides detailed guidance on evaluating validation performance characteristics, including specificity, accuracy, precision, detection limit, quantitation limit, linearity, and range [8]. The focus is on ensuring the procedure is suitable for its intended use and provides reliable reportable results for decision-making.

FDA Guidelines

The FDA issues guidance documents that represent the Agency's current thinking on a particular topic. For bioanalytical method validation, the critical document is the M10 guidance, an ICH-harmonized guideline that the FDA issued in final form in November 2022 [18].

- Scope and Application: The M10 guidance provides harmonized regulatory expectations for the validation of bioanalytical assays used in nonclinical and clinical studies that generate data to support regulatory submissions. It specifically addresses chromatographic and ligand-binding assays used to measure concentrations of the parent drug and its active metabolites in biological matrices [18]. For drug quality, the FDA also enforces compliance with other relevant guidelines, including ICH Q2(R2) and USP chapters.

- Regulatory Focus: Recent observations from the field indicate that the FDA is "hyper-focused" on the validation and verification of analytical test methods [17]. This includes requests for product-specific reports proving that products were tested using validated methods, whether they are official compendial methods (e.g., USP) or in-house developed methods. This heightened scrutiny applies to both prescription and over-the-counter (OTC) drug products.

USP Guidelines

The USP publishes legally recognized standards for medicines in the United States. Its general chapters provide guidelines for analytical procedures, with <1225> "Validation of Compendial Procedures" being the central document, currently under significant revision [16].

- Scope and Application: USP <1225> provides standards for the validation of both compendial and non-compendial analytical procedures. The ongoing revision aims to adapt the chapter for common usage in the validation of all analytical procedures and to create better connectivity with the Analytical Procedure Life Cycle (APLC) concepts described in USP <1220> [16].

- Core Principles and Revisions: The proposed revision of <1225> introduces and expands on several key concepts that align it more closely with ICH Q2(R2):

  - Reportable Result (RR): Emphasized as the definitive output supporting batch release and compliance decisions.

  - Fitness for Purpose: Positioned as the overarching goal of validation, focusing on the confidence in decision-making.

  - Replication Strategy: Now linked to controlling the uncertainty of the Reportable Result.

  - Statistical Intervals: Introduction of confidence, prediction, and tolerance intervals as tools for evaluating precision and accuracy in relation to decision risk [16].

- Role of USP Standards: USP public standards are widely recognized as essential tools that support the design, manufacture, testing, and regulation of drug substances and products. They play a critical role in ensuring medicine quality and safety, thereby increasing regulatory predictability [19].

Comparative Analysis of Key Guidelines

The following table summarizes the scope, core documents, and primary focus of the three major regulatory bodies concerning analytical method validation.

Table 1: Comparative Overview of Major Regulatory Guidelines for Analytical Method Validation

| Aspect | ICH | FDA | USP |
|---|---|---|---|
| Primary Scope | Harmonized global requirements for drug registration | Regulatory approval & compliance for US market | Public quality standards for medicines in the US |
| Key Document(s) | Q2(R2) - Validation of Analytical Procedures [8] | M10 - Bioanalytical Method Validation [18] | <1225> - Validation of Analytical Procedures (under revision) [16] |
| Document Status | Finalized | Final (Nov 2022) | Draft (Comment until Jan 2026) [16] |
| Primary Focus | Quality of drug substances & products (Chemical & Biological) | Bioanalysis of drugs & metabolites in biological matrices | Quality of drug substances & products (Compendial & Non-Compendial) |
| Regulatory Standing | Harmonized guideline adopted by members | Agency guidance (represents current thinking) | Legally recognized standard (Official compendium) |

Comparative Analysis of Validation Characteristics

While all guidelines aim to ensure data reliability, their emphasis on specific validation characteristics can differ based on the analytical context. The following table provides a high-level comparison.

Table 2: Comparison of Emphasis on Key Validation Characteristics

| Validation Characteristic | ICH Q2(R2) (Drug Substance/Product) | FDA M10 (Bioanalytical) | USP <1225> (Drug Substance/Product) |
|---|---|---|---|
| Accuracy | Core Requirement | Core Requirement | Core Requirement |
| Precision | Core Requirement | Core Requirement (incl. run-to-run variability) | Core Requirement |
| Specificity/Selectivity | Core Requirement | Critical (Selectivity) | Core Requirement (Specificity & Selectivity) [16] |
| Linearity & Range | Core Requirement | Core Requirement | Core Requirement |
| Detection Limit (LOD) | Defined | Not a stand-alone requirement (sensitivity is expressed through the LLOQ) | Defined |
| Quantitation Limit (LOQ) | Defined | Critical (as the lower limit of quantification, LLOQ) | Defined |
| Robustness | Recommended | Recommended | Recommended |
| Stability (in matrix) | Not primary focus | Critical | Not primary focus |

Integrated Experimental Protocol for Analytical Method Validation

This protocol is designed to satisfy the core requirements of ICH Q2(R2), FDA, and USP, leveraging the ongoing harmonization efforts. It is structured around the life cycle concept, from pre-validation planning to ongoing performance verification.

Pre-Validation Planning: The Analytical Target Profile (ATP)

1. Objective: To define the ATP, which specifies the required quality of the Reportable Result and its acceptable uncertainty, ensuring the method is "fit-for-purpose" [16].

2. Materials and Reagents:

   - Reference Standard: Certified, high-purity analyte for defining truth.

   - System Suitability Test (SST) Materials: Mixtures or standards for verifying instrument performance before analysis.

   - Specification Documents: Documents listing the acceptance limits for the drug substance or product.

3. Procedure:

   - Define the Purpose: Clearly state what the analytical procedure is intended to measure (e.g., assay of active ingredient, quantification of a specific impurity).

   - Define the Reportable Result: Specify the format of the final result (e.g., % w/w, ppm) [16].

   - Set the Target Acceptance Limits: Based on product specifications, define the maximum allowable uncertainty (e.g., the method must be able to distinguish between 98.0% and 102.0% with 95% confidence).

Protocol for Validation of Performance Characteristics

This phase involves a series of experiments to collect data on the key validation characteristics.

1. Objective: To experimentally demonstrate that the analytical method meets the criteria defined in the ATP for specificity, accuracy, precision, linearity, range, LOD, and LOQ.

2. Materials and Reagents:

   - Analytical Balance: Calibrated, with appropriate sensitivity.

   - HPLC/UPLC System (or other relevant instrument): Qualified and maintained.

   - Reference Standard, Placebo/Blank Matrix, and Forced Degradation Samples: For specificity testing.

   - Volumetric Glassware/Pipettes: Class A or calibrated.

3. Experimental Workflow:

The following diagram illustrates the logical workflow and key decision points in the analytical method validation lifecycle, integrating concepts from ICH Q2(R2) and the revised USP <1225>.

4. Detailed Methodologies for Key Experiments:

Specificity/Selectivity:

- Procedure: Inject each of the following solutions in triplicate: blank (placebo or biological matrix), analyte standard, and sample spiked with potential interferents (e.g., impurities, degradants, matrix components). For stability-indicating methods, also include forced-degradation samples.

- Acceptance Criterion: The analyte peak should be resolved from all other peaks (resolution > 2.0). The response from the blank should be less than the LOD.
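The resolution criterion above can be checked with the widely used baseline-width formula, Rs = 2(t2 - t1)/(w1 + w2). The following is a minimal sketch, not a validated calculation: the function names, retention times, peak widths, and blank/LOD responses are all hypothetical values chosen for illustration.

```python
def resolution(t1, w1, t2, w2):
    """Resolution between two peaks from retention times and
    baseline peak widths (all in the same time units)."""
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * abs(t2 - t1) / (w1 + w2)


def blank_is_clean(blank_response, lod_response):
    """True when the blank response is below the response at the LOD."""
    return blank_response < lod_response


# Hypothetical data: nearest degradant at 5.40 min, analyte at 6.20 min.
rs = resolution(t1=5.40, w1=0.30, t2=6.20, w2=0.34)

# Apply both acceptance criteria from the protocol (Rs > 2.0, blank < LOD).
specificity_ok = rs > 2.0 and blank_is_clean(blank_response=0.8, lod_response=2.5)

print(f"Rs = {rs:.2f}, specificity criteria met: {specificity_ok}")
```

In practice the chromatography data system reports resolution directly; a helper like this is mainly useful for scripted checks across many injections.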

Accuracy and Precision:

- Procedure: Prepare a minimum of three concentration levels (e.g., 80%, 100%, 120% of target) with a minimum of three replicates per level. Analyze all samples over at least three different days or by different analysts to determine intermediate precision.

- Data Analysis: Calculate the mean recovery (accuracy) and the standard deviation and relative standard deviation (precision) for each level. The revised USP <1225> encourages the use of statistical intervals (confidence, prediction) for evaluation [16].

- Acceptance Criterion: Accuracy should be within ±2.0% of the theoretical value for assay, and precision (RSD) should be ≤2.0% for the same. Criteria for impurities are wider.
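As an illustration of the data analysis described above, the sketch below computes mean recovery, RSD, and a two-sided 95% confidence interval for each concentration level, then applies the assay criteria (±2.0% accuracy, RSD ≤ 2.0%). The measured values are hypothetical, the function names are illustrative, and the small t-table holds standard Student t critical values for the few degrees of freedom needed here.

```python
from math import sqrt
from statistics import mean, stdev

# Two-sided 95% Student t critical values, keyed by degrees of freedom.
T_95 = {1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571}


def summarize_level(measured, nominal):
    """Mean recovery (%), RSD (%), and 95% CI of the mean recovery."""
    recoveries = [100.0 * m / nominal for m in measured]
    n = len(recoveries)
    avg = mean(recoveries)
    sd = stdev(recoveries)  # sample standard deviation (n - 1)
    half_width = T_95[n - 1] * sd / sqrt(n)
    return {
        "mean_recovery": avg,
        "rsd": 100.0 * sd / avg,
        "ci95": (avg - half_width, avg + half_width),
    }


def meets_assay_criteria(summary, max_bias=2.0, max_rsd=2.0):
    """Assay criteria from the protocol: accuracy within ±2.0%, RSD <= 2.0%."""
    bias = abs(summary["mean_recovery"] - 100.0)
    return bias <= max_bias and summary["rsd"] <= max_rsd


# Hypothetical results: nominal level (% of target) -> three replicate results.
data = {
    80.0: [79.6, 80.3, 80.1],
    100.0: [99.5, 100.4, 100.1],
    120.0: [119.2, 120.5, 120.3],
}

results = {level: summarize_level(vals, level) for level, vals in data.items()}
for level, s in results.items():
    low, high = s["ci95"]
    print(f"{level:.0f}%: recovery {s['mean_recovery']:.2f}%, "
          f"RSD {s['rsd']:.2f}%, 95% CI [{low:.2f}, {high:.2f}], "
          f"pass={meets_assay_criteria(s)}")
```

With only three replicates per level the confidence interval is wide, which is one reason the replication strategy is tied to the uncertainty of the reportable result.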

Linearity and Range:

- Procedure: Prepare a minimum of five concentration levels across the claimed range (e.g., 50%-150% of target). Inject each level in duplicate.

- Data Analysis: Perform linear regression analysis on the peak response vs. concentration data.

- Acceptance Criterion: Correlation coefficient (r) > 0.998, y-intercept not significantly different from zero, and residual plot shows random scatter.
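The regression analysis above can be sketched with an ordinary least-squares fit. The example below is illustrative only: the concentrations and responses are hypothetical (each response standing in for the mean of duplicate injections), and the LOD/LOQ estimates use the commonly applied 3.3σ/S and 10σ/S relationships, with σ taken as the residual standard deviation and S as the slope. That estimation approach is an assumption added here, not one prescribed by the protocol.

```python
from math import sqrt


def linear_fit(x, y):
    """Ordinary least-squares fit returning slope, intercept,
    correlation coefficient r, residuals, and residual SD."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    r = sxy / sqrt(sxx * syy)
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    resid_sd = sqrt(sum(e * e for e in residuals) / (n - 2))
    return slope, intercept, r, residuals, resid_sd


# Hypothetical data: five levels (% of target) and mean peak responses.
conc = [50.0, 75.0, 100.0, 125.0, 150.0]
response = [5020.0, 7490.0, 10010.0, 12540.0, 14980.0]

slope, intercept, r, residuals, resid_sd = linear_fit(conc, response)

# Assumed estimation approach: LOD = 3.3*sigma/S, LOQ = 10*sigma/S.
lod = 3.3 * resid_sd / slope
loq = 10.0 * resid_sd / slope

print(f"slope={slope:.2f}, intercept={intercept:.1f}, r={r:.5f}")
print(f"residuals={[round(e, 1) for e in residuals]}")
print(f"LOD ~ {lod:.2f}, LOQ ~ {loq:.2f} (same units as concentration)")
print(f"linearity criterion r > 0.998 met: {r > 0.998}")
```

The residuals still need to be inspected for random scatter, and the intercept tested against zero, since a high r alone does not demonstrate linearity.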

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and reagents essential for successfully executing the validation protocols outlined above.

Table 3: Essential Research Reagent Solutions for Analytical Method Validation

| Item | Function & Importance in Validation | Key Considerations |
|---|---|---|
| Certified Reference Standards | Serves as the primary benchmark for defining accuracy, preparing calibration standards, and determining linearity. | Must be of certified high purity and well-characterized. Source from a qualified supplier. Stability under storage conditions is critical. |
| System Suitability Test (SST) Mixtures | Verifies that the entire analytical system (instrument, reagents, columns, etc.) is performing adequately at the start of and during a run. | Typically a mixture of the analyte and key impurities, or a standard that tests critical parameters like resolution, efficiency, and tailing. |
| Placebo/Blank Matrix | Critical for demonstrating specificity/selectivity by proving the absence of interfering signals at the retention time of the analyte. | For drug products, this is the formulation without the active ingredient. For bioanalysis, this is the biological fluid (plasma, serum) from untreated subjects. |
| Forced Degradation Samples | Used in specificity testing to demonstrate that the method can accurately measure the analyte in the presence of its degradation products, proving it is "stability-indicating." | Generated by subjecting the analyte to stress conditions (acid, base, heat, light, oxidation). |
| High-Purity Solvents & Reagents | Form the mobile phase and sample diluents in chromatographic methods. Their quality directly impacts baseline noise, detection sensitivity, and reproducibility. | Use HPLC/MS grade or higher. Filter and degas mobile phases to prevent system damage and baseline drift. |

The simultaneous entry into effect of ICH Q2(R2) and ICH Q14 in 2024 marks a transformative period for pharmaceutical analytical science. These harmonized guidelines, effective since June 2024, represent a significant evolution from previous standards, moving toward a more integrated, science-based, and risk-managed approach to analytical procedure development and validation [20] [21]. ICH Q2(R2) "Validation of Analytical Procedures" provides an updated framework for validation principles that now encompasses modern analytical techniques, such as procedures based on spectroscopic data and chemometric models, while ICH Q14 "Analytical Procedure Development" establishes, for the first time, a standalone regulatory framework for systematic analytical procedure development [20] [22]. This complementary pairing is designed to facilitate more efficient regulatory evaluations and provide greater flexibility in post-approval change management when supported by scientific justification [20].

The revision of Q2(R2) was driven by the need to address advancements in analytical technology that were not covered in the previous Q2(R1) guideline, which had remained largely unchanged since 2005 [21]. Meanwhile, ICH Q14 represents a paradigm shift from traditional, static method development toward a dynamic, lifecycle-oriented model that embeds Quality by Design (QbD) principles directly into analytical practices [22]. Together, these guidelines encourage a holistic understanding of analytical procedures, from initial development through commercial monitoring and continuous improvement, aligning analytical science with the established ICH Q8-Q12 framework for pharmaceutical development and lifecycle management [21].

Core Principles and Synergistic Framework

Foundational Concepts of ICH Q2(R2) and ICH Q14

The enhanced approach introduced through ICH Q14 and supported by ICH Q2(R2) establishes a lifecycle-oriented framework for analytical procedures. This represents a fundamental shift from viewing method validation as a one-time event to embracing ongoing verification and improvement throughout a method's operational life [22] [23]. The core principles of this integrated framework include:

Analytical Target Profile (ATP): A foundational element that defines the required quality standards for analytical measurement performance. The ATP specifies what the method needs to achieve—in terms of accuracy, precision, specificity, and other relevant criteria—based on its pharmaceutical purpose, without constraining the methodological approach [22] [23].

Structured Method Development: Employs systematic, risk-based strategies to identify critical method parameters and their relationships to performance outcomes [22]. This replaces the traditional trial-and-error approach with scientifically rigorous development practices.

Method Operable Design Region (MODR): Also referred to as the analytical procedure design space, the MODR represents the established combination of analytical procedure parameter ranges within which the method performance criteria are consistently met [22]. Operating within this predefined space does not typically require regulatory re-approval [22].

Enhanced Validation Principles: ICH Q2(R2) expands traditional validation methodologies to include new analytical techniques and chemometric models, with greater emphasis on risk management throughout the analytical procedure lifecycle [21].

Lifecycle Management: Integration of development, validation, application, and continuous optimization throughout the method's operational life, aligned with ICH Q12 principles for pharmaceutical product lifecycle management [22] [23].

Synergy Between ICH Q14 and ICH Q2(R2)

The simultaneous implementation of ICH Q14 and ICH Q2(R2) creates a cohesive framework where development and validation activities are intrinsically linked [21]. ICH Q14 provides the structured approach for developing robust methods, while ICH Q2(R2) offers the updated validation methodologies to demonstrate their reliability [21]. This synergy enables a more holistic approach to analytical procedure lifecycle management, supporting continuous improvement and adaptation to new scientific findings and technological advances [22] [21].

Table: Comparison of Traditional vs. Enhanced Analytical Approaches

| Aspect | Traditional Approach | Enhanced Approach (Q14 & Q2(R2)) |
|---|---|---|
| Philosophy | Static, one-time validation | Dynamic, lifecycle-oriented |
| Development Basis | Prior knowledge, established methodologies | Systematic, risk-based, QbD principles |
| Regulatory Flexibility | Limited; changes often require prior approval | Greater flexibility within defined MODR |
| Method Selection | Technology-specific | ATP-driven, multiple technologies possible |
| Change Management | Reactive, often requiring revalidation | Proactive, based on risk assessment |
| Documentation | Limited to validation parameters | Extensive knowledge management |

Analytical Procedure Development Under ICH Q14

The Analytical Target Profile (ATP) Foundation

The Analytical Target Profile (ATP) serves as the cornerstone of the enhanced approach to analytical procedure development under ICH Q14 [23]. The ATP is a predefined objective that outlines the quality requirements for the analytical measurement, specifying what needs to be measured and the performance criteria the method must meet, but not how to achieve it [22] [23]. This fundamental shift in approach allows method developers to select the most appropriate technology based on scientific justification rather than historical precedent.

Derived from Critical Quality Attributes (CQAs) summarized in the Quality Target Product Profile (QTPP), the ATP ensures alignment between analytical measurements and product quality requirements [23]. Developing a robust ATP requires comprehensive understanding of:

- The analyte's physicochemical properties

- The matrix composition and potential interferences

- Required performance characteristics (accuracy, precision, specificity, etc.)

- The intended purpose of the method within the overall control strategy

The ATP-driven approach offers significant advantages, including flexibility in technology selection and the ability to adapt methods to advancing technologies without fundamentally changing the measurement objective [23]. However, establishing an effective ATP may require substantial initial experimentation when prior knowledge is limited [23].

Systematic Method Development Strategies

ICH Q14 advocates for a structured, science-based approach to method development that emphasizes understanding and controlling variability throughout the method's lifecycle [23]. The enhanced approach incorporates several key methodological strategies:

Design of Experiments (DoE): A central tool for systematically assessing multiple parameter effects and creating robust mathematical models that define the relationship between critical method parameters and performance attributes [22] [23]. Unlike one-factor-at-a-time approaches, DoE enables efficient identification of interactions between variables and establishes a statistical basis for parameter ranges.

Risk Assessment Tools: Formal risk assessment methodologies, such as Ishikawa diagrams and Failure Mode and Effects Analysis (FMEA), are employed throughout development to identify, assess, and control potential sources of variability [23]. These tools help prioritize development efforts on high-risk parameters and establish appropriate control strategies.

Knowledge Management: The development process under Q14 requires comprehensive documentation of decisions, experimental data, and scientific rationale [22] [23]. This creates a valuable knowledge repository that supports future method improvements, technology transfers, and regulatory submissions.
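To make the risk-assessment step concrete, the sketch below ranks candidate method parameters by a conventional FMEA risk priority number (RPN = severity × occurrence × detection). The 1-10 scoring scale, the parameter names, the scores, and the action threshold of 100 are all hypothetical; an organization would define its own scales and thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureMode:
    """One FMEA line item, scored on hypothetical 1-10 scales."""
    parameter: str
    severity: int    # impact on the reportable result
    occurrence: int  # likelihood of the deviation occurring
    detection: int   # 10 = hard to detect, 1 = easily detected

    @property
    def rpn(self) -> int:
        return self.severity * self.occurrence * self.detection


def rank_by_risk(modes):
    """Highest RPN first, so development effort targets top risks."""
    return sorted(modes, key=lambda m: m.rpn, reverse=True)


# Hypothetical scores for an HPLC assay method.
modes = [
    FailureMode("column temperature", severity=5, occurrence=4, detection=3),
    FailureMode("mobile phase pH", severity=8, occurrence=6, detection=5),
    FailureMode("injection volume", severity=3, occurrence=2, detection=2),
]

ranked = rank_by_risk(modes)
high_risk = [m.parameter for m in ranked if m.rpn >= 100]  # assumed threshold

for m in ranked:
    print(f"{m.parameter}: RPN = {m.rpn}")
print("Carry into DoE:", high_risk)
```

Parameters above the threshold are natural candidates for the DoE studies, while lower-ranked ones can often be fixed at a set point with documented justification.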

The following workflow illustrates the structured approach to analytical procedure development under ICH Q14:

Enhanced Validation Under ICH Q2(R2)

Key Updates in Validation Requirements

ICH Q2(R2) represents a substantial modernization of analytical validation principles, expanding beyond the traditional methods covered in Q2(R1) to include contemporary analytical techniques and validation methodologies [21]. The updated guideline incorporates principles of Pharmaceutical Development (ICH Q8), Quality Risk Management (ICH Q9), and the Pharmaceutical Quality System (ICH Q10), creating a more comprehensive and modern approach to validation [21].

Key improvements in ICH Q2(R2) include:

Expanded Scope: Explicit inclusion of modern analytical techniques such as multivariate methods, process analytical technology (PAT), and chemometric models based on Principal Component Analysis and other advanced statistical approaches [21].

Risk-Based Approach: Greater emphasis on risk management throughout the analytical procedure lifecycle, aligning validation activities with potential impact on product quality and patient safety [21].

New Validation Criteria: Updated validation methodologies and acceptance criteria strategies that accommodate a wider range of analytical technologies [21].

Alignment with Q14: The validation approach supports the enhanced development concepts introduced in ICH Q14, particularly the validation of methods developed within a defined MODR [21].

Validation Throughout the Method Lifecycle

Under the integrated Q14/Q2(R2) framework, validation is not a one-time event but an ongoing process that continues throughout the method's lifecycle [23]. This lifecycle approach to validation includes:

Initial Validation: Conducted based on the ATP to demonstrate the method meets predefined performance criteria under various conditions [23].

Continuous Verification: Ongoing monitoring of method performance during routine use to ensure it remains fit for purpose [23].

Revalidation Activities: Triggered by changes in raw materials, equipment, or process conditions that may impact method performance [23].

For methods developed using the enhanced approach, validation should demonstrate performance not only at the nominal operating conditions but also across the MODR, providing assurance of robustness throughout the defined parameter ranges [23].

Table: Analytical Procedure Validation Characteristics per ICH Q2(R2)

| Validation Characteristic | Traditional Methods | Multivariate/Chemometric Methods | Lifecycle Considerations |
|---|---|---|---|
| Accuracy | Recovery studies against reference standard | Validation against reference methods with uncertainty estimation | Ongoing verification through system suitability |
| Precision | Repeatability, intermediate precision | Cross-validation, bootstrap methods | Continuous monitoring through control charts |
| Specificity | Resolution from impurities/matrix | Selectivity ratio, variable importance | Periodic assessment against new impurities |
| Detection/Quantitation Limits | Signal-to-noise, visual evaluation | Multivariate detection limits, uncertainty-based approaches | Verification with new instrument platforms |
| Range | Demonstrated suitable precision, accuracy, linearity | Validated range of applicability | Extension studies for new sample types |
| Robustness | One-factor-at-a-time studies | MODR verification via DoE | Documented within established MODR |

Implementation Challenges and Strategic Solutions

Organizational and Technical Challenges

The implementation of ICH Q14 and Q2(R2) presents several significant challenges for pharmaceutical organizations:

Expertise Requirements: Successful implementation demands specialized knowledge in multivariate statistics, experimental design, and advanced software tools that may not be readily available in traditional quality control laboratories [22] [23].

Resource Intensity: The enhanced approach requires substantial initial investment in development activities, including extensive experimentation, data management, and documentation [22] [23].

Cultural Shift: Moving from a static, fixed-parameter approach to a dynamic, lifecycle-oriented model represents a significant cultural change that requires buy-in across multiple organizational levels [22].

Knowledge Management: Establishing robust systems for capturing, maintaining, and utilizing the extensive knowledge generated during enhanced method development poses both technical and organizational challenges [23].

Analytical Control Strategies and Lifecycle Management

A critical component of ICH Q14 implementation is the establishment of comprehensive analytical control strategies [23]. These strategies involve identifying potential sources of variability—whether related to the system, user, or environment—and implementing appropriate controls to mitigate their impact [23]. Key elements include:

System Suitability Tests (SST) and Sample Suitability Criteria: Designed to ensure the analytical system is functioning properly and the sample is appropriate for analysis at the time of testing [23].

Established Conditions (ECs): Legally binding parameters, including performance characteristics, procedure principles, and set points or ranges required for procedure parameters [23]. Understanding what constitutes an EC and establishing appropriate risk-based categorizations presents an initial challenge but offers significant regulatory flexibility once implemented [23].

Continuous Monitoring and Feedback Loops: Enable ongoing method performance assessment and facilitate rapid detection of out-of-trend (OOT) results, potentially reducing method-related investigations and batch release failures [23].
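One simple way to implement the continuous monitoring element is a Shewhart individuals control chart on a system suitability or control-sample result. The sketch below is an assumption about how such monitoring might be scripted, not a method prescribed by the guidelines: the baseline and routine values are hypothetical, and the limits use the standard moving-range estimate of sigma (mean moving range divided by 1.128).

```python
from statistics import mean


def individuals_limits(baseline):
    """Lower limit, center line, and upper limit for an individuals chart.
    Sigma is estimated from the mean moving range (d2 = 1.128 for n = 2)."""
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    sigma = mean(moving_ranges) / 1.128
    center = mean(baseline)
    return center - 3 * sigma, center, center + 3 * sigma


def flag_out_of_limits(values, lcl, ucl):
    """Indices of results falling outside the control limits."""
    return [i for i, v in enumerate(values) if not lcl <= v <= ucl]


# Hypothetical control-sample recoveries (%) from the qualification period.
baseline = [100.1, 99.8, 100.3, 99.9, 100.2, 100.0, 99.7, 100.1]
lcl, center, ucl = individuals_limits(baseline)

# Hypothetical results from routine use.
routine = [100.2, 99.9, 101.4, 100.0]
flagged = flag_out_of_limits(routine, lcl, ucl)

print(f"LCL={lcl:.2f}, center={center:.2f}, UCL={ucl:.2f}")
print("Runs needing review (index):", flagged)
```

A flagged run is a prompt for review rather than proof of failure; additional run rules (for example, consecutive points on one side of the center line) can be layered on to detect slower drifts.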

The following diagram illustrates the relationship between key elements of the analytical control strategy:

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing the enhanced approach under ICH Q14 and Q2(R2) requires specific tools and resources. The following table details essential research reagent solutions and their functions in method development and validation:

Table: Essential Research Reagent Solutions for ICH Q14/Q2(R2) Implementation

| Tool/Resource | Function | Application in Enhanced Approach |
|---|---|---|
| DoE Software | Enables design and analysis of multivariate experiments | Critical for MODR establishment and understanding parameter interactions |
| Multivariate Analysis Tools | Statistical analysis of complex data sets | Essential for chemometric method development and validation |
| Reference Standards | Qualified materials of known purity and identity | Required for ATP demonstration and method validation studies |
| Forced Degradation Materials | Stress-treated samples for specificity studies | Supports robustness claims and MODR boundary definition |
| Matrix Components | Individual components of sample matrix | Enables specificity demonstration and interference studies |
| Data Management Systems | Capture, store, and analyze development data | Supports knowledge management and regulatory submissions |
| Risk Assessment Tools | Formalized risk identification and ranking | FMEA, Ishikawa diagrams for systematic risk management |
| System Suitability Reference Materials | Qualified materials for system performance verification | Ongoing method performance verification per control strategy |

Experimental Protocols for Enhanced Method Development

Protocol 1: ATP Definition and Technology Selection

Objective: To systematically define the Analytical Target Profile and select appropriate analytical technology.

Methodology:

- Identify Critical Quality Attributes: Review QTPP to identify CQAs requiring analytical control.

- Define Performance Requirements: For each CQA, specify required measurement performance (accuracy, precision, specificity, range).

- Document ATP: Create formal ATP statement specifying quality standards without methodological constraints.

- Technology Screening: Evaluate multiple technologies against ATP requirements.

- Initial Risk Assessment: Conduct formal risk assessment of candidate technologies.

Deliverables: Formal ATP document, Technology assessment report, Initial risk assessment.
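The technology screening step in Protocol 1 can be pictured as comparing each candidate's expected performance with the ATP requirements. The following sketch uses a hypothetical `Performance` record and invented performance figures; real screening would draw on prior knowledge, literature, and feasibility experiments rather than fixed numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Performance:
    """Measurement performance: required (ATP) or expected (candidate)."""
    max_bias_pct: float
    max_rsd_pct: float
    range_pct: tuple  # (low, high) as % of target concentration


def meets_atp(required: Performance, expected: Performance) -> bool:
    """True when the candidate is at least as good as the ATP on every criterion."""
    covers_range = (expected.range_pct[0] <= required.range_pct[0]
                    and expected.range_pct[1] >= required.range_pct[1])
    return (expected.max_bias_pct <= required.max_bias_pct
            and expected.max_rsd_pct <= required.max_rsd_pct
            and covers_range)


# Hypothetical ATP for an assay: bias <= 2.0%, RSD <= 2.0%, 80-120% range.
atp = Performance(max_bias_pct=2.0, max_rsd_pct=2.0, range_pct=(80.0, 120.0))

# Hypothetical expected performance of candidate technologies.
candidates = {
    "HPLC-UV": Performance(1.0, 0.8, (50.0, 150.0)),
    "UV spectrophotometry": Performance(2.5, 1.5, (70.0, 130.0)),
    "Titration": Performance(1.5, 1.0, (90.0, 110.0)),
}

shortlist = [name for name, perf in candidates.items() if meets_atp(atp, perf)]
print("Technologies meeting the ATP:", shortlist)
```

Keeping the ATP separate from any one technology, as here, is what allows a later change of technique without redefining the measurement objective.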

Protocol 2: DoE for MODR Establishment

Objective: To systematically establish the Method Operable Design Region using Design of Experiments.

Methodology:

- Identify Critical Method Parameters: Through risk assessment and preliminary experiments.

- DoE Design: Create multivariate experimental design covering parameter ranges.

- Experimental Execution: Conduct experiments according to designed matrix.

- Response Measurement: Evaluate method performance against ATP criteria.

- Data Analysis: Build mathematical models relating parameters to responses.

- MODR Definition: Establish parameter ranges where ATP criteria are met.

Deliverables: DoE protocol and report, Mathematical models, MODR definition.
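The last two steps of Protocol 2 can be sketched as evaluating a fitted response model over a grid of parameter settings and keeping the region where the ATP criterion holds. In the example below the response model is a hypothetical stand-in for one fitted from DoE data, the two parameters (mobile phase pH and column temperature) and their levels are invented, and the criterion (resolution ≥ 2.0) is assumed for illustration.

```python
from itertools import product


def predicted_resolution(ph, temp_c):
    """Hypothetical fitted model; in practice this comes from DoE regression."""
    return 2.6 - 4.0 * (ph - 3.0) ** 2 - 0.02 * abs(temp_c - 30.0)


# Full-factorial grid over the studied parameter ranges.
ph_levels = [2.6, 2.8, 3.0, 3.2, 3.4]
temp_levels = [25.0, 30.0, 35.0, 40.0]
grid = list(product(ph_levels, temp_levels))

# Keep the settings where the assumed ATP criterion is met.
passing = [(ph, t) for ph, t in grid if predicted_resolution(ph, t) >= 2.0]

# Propose a rectangular MODR as the bounding box of the passing points.
modr = {
    "ph": (min(ph for ph, _ in passing), max(ph for ph, _ in passing)),
    "temp_c": (min(t for _, t in passing), max(t for _, t in passing)),
}

# A bounding box may enclose failing points, so confirm every grid point
# inside the proposed MODR actually meets the criterion.
inside = [(ph, t) for ph, t in grid
          if modr["ph"][0] <= ph <= modr["ph"][1]
          and modr["temp_c"][0] <= t <= modr["temp_c"][1]]
modr_confirmed = all(predicted_resolution(ph, t) >= 2.0 for ph, t in inside)

print("Proposed MODR:", modr, "| confirmed on grid:", modr_confirmed)
```

A model-based MODR like this still needs experimental verification at its boundaries, which is the purpose of the MODR verification step in Protocol 3.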

Protocol 3: Enhanced Method Validation per Q2(R2)

Objective: To validate analytical procedures according to enhanced Q2(R2) principles.

Methodology:

- Validation Master Plan: Define validation strategy based on ATP and risk assessment.

- Protocol Development: Create detailed validation protocol addressing all relevant characteristics.

- Experimental Validation: Execute validation studies including accuracy, precision, specificity, range, detection/quantitation limits, and robustness.

- MODR Verification: Confirm method performance across design space boundaries.

- Data Analysis and Reporting: Comprehensive documentation of validation results.

Deliverables: Validation protocol, Validation report, MODR verification data.

The implementation of ICH Q14 and Q2(R2) represents a transformative shift in pharmaceutical analytics, moving the industry from static, fixed-parameter methods toward dynamic, science-based, and lifecycle-oriented analytical procedures [22]. This paradigm change brings analytical science into alignment with the established QbD principles already applied to formulation and process development, creating a more integrated approach to pharmaceutical quality systems [22] [21].

While the enhanced approach requires significant initial investment in expertise, resources, and organizational change management, the long-term benefits include increased method robustness, regulatory flexibility, and improved operational efficiency [22] [23]. The ability to make changes within an established MODR without requiring regulatory re-approval represents a significant advancement in post-approval change management [22].

The synergy between ICH Q14 and Q2(R2) creates a comprehensive framework that supports the development of more reliable, adaptable, and future-proof analytical procedures [21]. As the pharmaceutical industry continues to evolve with increasing digitalization and advanced analytical technologies, these guidelines provide the foundation for analytical quality systems that can adapt to new scientific advancements while maintaining regulatory compliance and, most importantly, ensuring product quality and patient safety [22] [21].

Distinguishing Between Method Validation, Qualification, and Verification

In pharmaceutical development and quality control, demonstrating the reliability of analytical methods is a fundamental regulatory requirement. The terms validation, qualification, and verification are often used interchangeably, yet they represent distinct processes within the analytical method lifecycle. Confusing these terms can lead to non-compliance, failed audits, or patient safety issues [24] [25].

This article clarifies these critical concepts by defining their unique purposes, applications, and regulatory frameworks. It provides a structured comparison and detailed experimental protocols to guide researchers, scientists, and drug development professionals in applying the correct approach for their specific context, thereby supporting robust analytical method selection and regulatory success.

Defining the Concepts

Analytical Method Validation

Method validation is the comprehensive and documented process of proving that an analytical method is suitable for its intended purpose [26] [27]. It provides evidence that the method consistently produces results that are accurate, precise, and reliable across its specified range [24]. Validation is typically required for new methods developed in-house, methods that have been significantly modified, or methods used for a new product or formulation [27]. It is a mandatory requirement for regulatory submissions for commercial products [26] [28].

Analytical Method Qualification

Method qualification is an early-stage, often limited, evaluation of an analytical method's performance characteristics [24] [28]. It serves as a pre-validation assessment during early development phases, such as preclinical or Phase I/II clinical trials, when manufacturing processes are not yet locked [28] [29]. Qualification acknowledges that a method is still a "work in progress" while demonstrating that it can generate meaningful and consistent data to support development decisions [28]. It helps identify potential issues early and guides future optimization and full validation protocols [24].

Analytical Method Verification

Method verification is the process of confirming that a previously validated method performs as expected in a specific laboratory setting [26] [24]. It is less exhaustive than validation and is appropriate when adopting standard methods, such as those from a pharmacopoeia (USP, Ph. Eur.) or a method transferred from another site [26] [27]. Verification focuses on confirming critical performance parameters under the receiving laboratory's actual conditions, including its instruments, personnel, and sample matrices [27] [30].

Comparative Analysis

The table below summarizes the core differences between validation, qualification, and verification to help select the appropriate approach.

Table 1: Core Differences Between Validation, Qualification, and Verification

| Comparison Factor | Method Validation | Method Qualification | Method Verification |
|---|---|---|---|
| Primary Objective | Prove method suitability for intended use [26] [27] | Early-stage assessment of method performance [24] [28] | Confirm a validated method works in a new lab [26] [24] |
| When It Is Used | For new methods; before regulatory submission for commercial product [26] [27] | Early development (e.g., Phase I/II); prior to full validation [28] [29] | When adopting a compendial or previously validated method [26] [27] |
| Regulatory Status | Mandatory for marketing approval [26] [28] | Voluntary; a phase-appropriate exercise [28] [29] | Required for compendial methods [27] [30] |
| Scope & Complexity | Comprehensive assessment of all relevant performance characteristics [26] [31] | Limited, focused assessment; less complex than validation [24] [28] | Limited assessment of critical parameters only [26] [30] |
| Method Status | Method is fully developed and locked [28] | Method can be changed and optimized [28] | Method is established and fixed [26] |

Decision Workflow for Selection

The following diagram illustrates the decision-making process for selecting the appropriate approach based on the method's origin and development stage.
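The decision logic can also be sketched in code. The snippet below is a minimal illustration derived from Table 1, not a reproduction of the diagram; the function name `select_approach` and its two boolean inputs are assumptions introduced here for illustration, and real decisions will involve more factors than these two.

```python
from enum import Enum


class Approach(Enum):
    VALIDATION = "validation"
    QUALIFICATION = "qualification"
    VERIFICATION = "verification"


def select_approach(previously_validated: bool, early_development: bool) -> Approach:
    """Pick an approach from the method's origin and development stage.

    Both inputs are hypothetical simplifications of the criteria in Table 1.
    """
    # Compendial or transferred methods that were already validated elsewhere
    # only need confirmation in the receiving laboratory.
    if previously_validated:
        return Approach.VERIFICATION
    # New methods in early development (e.g., Phase I/II), where the method
    # can still change, get a limited, phase-appropriate qualification.
    if early_development:
        return Approach.QUALIFICATION
    # New or significantly modified methods that support a commercial
    # submission require full validation.
    return Approach.VALIDATION


# Example: adopting a USP monograph method in a QC laboratory.
print(select_approach(previously_validated=True, early_development=False).value)
```

Keeping the previously-validated check first reflects the fact that verification applies to methods whose validation already exists, regardless of where the adopting project sits in development.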

Experimental Protocols

Protocol for Analytical Method Validation

This protocol is aligned with ICH Q2(R2) guidelines and is designed for the validation of a new HPLC method for assay and impurity quantification [31] [8].

Table 2: Performance Characteristics and Acceptance Criteria for HPLC Method Validation

| Performance Characteristic | Experimental Procedure | Acceptance Criteria Example (Assay) |
|---|---|---|
| Accuracy | Analyze a minimum of 9 determinations over 3 concentration levels (e.g., 80%, 100%, 120% of target), spiking known amounts of analyte into a placebo matrix [31]. | Mean Recovery: 98.0–102.0% |
| Precision | 1. Repeatability: Inject 6 independent preparations at 100% test concentration [31]. 2. Intermediate Precision: Perform repeatability study on a different day, with a different analyst and instrument [31]. | RSD ≤ 1.0% for both studies |
| Specificity | Inject blank (placebo), standard, and stressed samples (e.g., exposed to heat, light, acid, base). Demonstrate baseline separation of analyte from impurities and excipients [31]. | Peak Purity: Passes; Resolution > 2.0 between analyte and nearest impurity |
| Linearity | Prepare and analyze a minimum of 5 concentration levels, from 50% to 150% of the target concentration. Plot response vs. concentration [31]. | Correlation Coefficient (r) > 0.999 |
| Range | Established from the linearity study, confirming that accuracy, precision, and linearity are met within the interval [31]. | e.g., 50-150% of test concentration |
| Robustness | Deliberately vary method parameters (e.g., column temperature ±2°C, flow rate ±0.1 mL/min, mobile phase pH ±0.1) and evaluate impact on system suitability [31]. | Method meets all system suitability criteria under all variations |
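To make the accuracy and precision criteria in Table 2 concrete, the sketch below computes percent recovery and relative standard deviation (RSD) and compares them with the example limits from the table. All numeric data are invented for illustration, and the helper names (`percent_recovery`, `percent_rsd`, `accuracy_passes`, `precision_passes`) are assumptions rather than part of any guideline or library.

```python
from statistics import mean, stdev


def percent_recovery(measured: float, spiked: float) -> float:
    """Recovery of a spiked amount, as a percentage."""
    return 100.0 * measured / spiked


def percent_rsd(values: list[float]) -> float:
    """Relative standard deviation (sample standard deviation / mean), in percent."""
    return 100.0 * stdev(values) / mean(values)


def accuracy_passes(recoveries: list[float], low: float = 98.0, high: float = 102.0) -> bool:
    """Mean recovery must fall within the example 98.0-102.0% window."""
    return low <= mean(recoveries) <= high


def precision_passes(values: list[float], max_rsd: float = 1.0) -> bool:
    """RSD must not exceed the example 1.0% limit."""
    return percent_rsd(values) <= max_rsd


# Hypothetical accuracy data: (spiked, measured) at 80%, 100%, 120%, in triplicate.
spike_results = [
    (80.0, 79.6), (80.0, 80.3), (80.0, 79.9),
    (100.0, 99.5), (100.0, 100.4), (100.0, 100.1),
    (120.0, 119.2), (120.0, 120.6), (120.0, 120.1),
]
recoveries = [percent_recovery(measured, spiked) for spiked, measured in spike_results]

# Hypothetical repeatability data: six independent preparations (% label claim).
repeatability = [99.8, 100.2, 100.1, 99.6, 100.4, 99.9]

print(f"Mean recovery: {mean(recoveries):.2f}%  pass={accuracy_passes(recoveries)}")
print(f"Repeatability RSD: {percent_rsd(repeatability):.2f}%  pass={precision_passes(repeatability)}")
```

The same `percent_rsd` helper can be applied to the intermediate precision data set, since Table 2 uses the same example limit for both studies.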

Protocol for Analytical Method Qualification

For a Phase I clinical trial where a method is needed for a drug substance whose process is not yet locked, a limited qualification is appropriate [29].

Objective: To demonstrate the method is suitable for generating preliminary stability and safety data.

Parameters to Assess: Specificity, Linearity, Accuracy, and Repeatability.

Experimental Design:

- Specificity: Analyze the drug substance standard and a stressed sample to confirm the main peak is pure and free from interfering peaks.

- Linearity & Range: Prepare a 5-point calibration curve from 50% to 150% of the target concentration. The correlation coefficient (r) should be >0.995.

- Accuracy & Repeatability: Prepare and analyze 3 samples at 100% concentration. The mean accuracy should be 95-105% with an RSD ≤ 2.0%.
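The linearity criterion can be checked with an ordinary least-squares fit. The following is a minimal sketch, assuming five hypothetical concentration levels and invented peak areas; the function `linear_fit` is an illustrative helper, not a reference implementation.

```python
from math import sqrt


def linear_fit(x: list[float], y: list[float]) -> tuple[float, float, float]:
    """Return (slope, intercept, r) for an ordinary least-squares line."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / sqrt(sxx * syy)  # Pearson correlation coefficient
    return slope, intercept, r


# Hypothetical 5-point calibration: % of target concentration vs. peak area.
levels = [50.0, 75.0, 100.0, 125.0, 150.0]
areas = [5020.0, 7480.0, 10010.0, 12490.0, 15030.0]

slope, intercept, r = linear_fit(levels, areas)
print(f"slope={slope:.2f}, intercept={intercept:.1f}, r={r:.5f}")
print("Meets qualification criterion (r > 0.995):", r > 0.995)
```

A high correlation coefficient alone does not prove linearity, so reviewing the residuals and the intercept alongside r is a sensible complement to this check.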

Protocol for Analytical Method Verification

This protocol applies when implementing a USP monograph method for a finished product in a quality control laboratory for the first time [27] [30].

Objective: To verify that the compendial method performs as expected for the specific product under the laboratory's actual conditions.

Parameters to Assess: Typically includes Specificity, Accuracy, and Precision [30].

Experimental Design:

- Specificity: Demonstrate that the analyte peak is pure and resolved from any excipients or known impurities in the specific product formulation.

- Accuracy: Perform a spike recovery study using the product placebo (if available) or by standard addition to the sample. Analyze in triplicate at 100% concentration. Recovery should be within 98.0-102.0%.

- Precision (Repeatability): Analyze six independent sample preparations from a homogeneous batch. The RSD for the assay results should not exceed 2.0%.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials and solutions required for executing the validation and verification protocols described above.

Table 3: Essential Reagents and Materials for Analytical Method Validation and Verification

| Item | Function / Purpose | Critical Quality Attributes |
|---|---|---|
| Drug Substance/Active Pharmaceutical Ingredient (API) Reference Standard | Serves as the primary benchmark for identifying the analyte and establishing method accuracy and linearity [31]. | Certified purity and identity; high stability; sourced from a qualified supplier. |
| Placebo/Blank Matrix | Used in specificity testing to prove no interference from excipients, and in accuracy/recovery studies [31]. | Must be representative of the final product formulation, minus the active ingredient. |
| Known Impurity Standards | Used to challenge method specificity, establish resolution, and determine Limit of Detection (LOD)/Quantitation (LOQ) [31]. | Certified purity; should include potential process impurities and degradation products. |
| HPLC-Grade Solvents & Reagents | Used for mobile phase and sample preparation to ensure reproducibility and minimize baseline noise and ghost peaks. | Low UV absorbance; high purity; consistent lot-to-lot quality. |
| Qualified HPLC System & Column | The instrumental platform for method execution. System and column performance underpin all validation parameters. | System suitability tests must be met (e.g., plate count, tailing factor, RSD of replicate injections) [27]. |
| Certified Volumetric Glassware & Balances | Ensures accurate and precise preparation of standard and sample solutions, which is fundamental to all quantitative results. | Must be within calibration limits and used within their operational range. |

Regulatory Framework and Guidelines

Adherence to established regulatory guidelines is non-negotiable for market approval. The key guidelines governing these processes include:

- ICH Q2(R2): Provides the international standard for the validation of analytical procedures for drug substances and products, detailing the validation characteristics to be evaluated [8].

- USP General Chapters <1225> and <1226>: <1225> covers "Validation of Compendial Procedures," while <1226> is dedicated to "Verification of Compendial Procedures," providing detailed U.S. pharmacopeial requirements [30].

- EU GMP Annex 15: Covers qualification and validation, emphasizing a life cycle approach and requiring that equipment is qualified before processes are validated [31].

Understanding and correctly applying the distinctions between validation, qualification, and verification is critical for efficient drug development and regulatory compliance. By following the structured protocols and leveraging the essential tools outlined in this article, scientists can ensure their analytical methodologies are robust, defensible, and ultimately capable of ensuring product quality and patient safety.

A Step-by-Step Guide to Validation Protocol Development and Lifecycle Management

Establishing the Analytical Target Profile (ATP) for Intended Use

The Analytical Target Profile (ATP) is a foundational concept in modern pharmaceutical development, defined as a prospective summary of the requirements that an analytical procedure must fulfill to be fit for its intended purpose [32] [33]. It outlines the necessary quality characteristics of a measurement for a specific quality attribute, ensuring that the reportable result delivers the right level of confidence for quality decisions [32] [34]. The ATP is analogous to the Quality Target Product Profile (QTPP) for a drug product but is specifically applied to the analytical procedure itself [33]. Its primary role is to drive the entire analytical method lifecycle, from initial development and validation through technology selection and ongoing lifecycle management, ensuring the procedure remains suitable despite changes [32] [33] [34].

Establishing the ATP early in the analytical procedure development process is critical. It provides a clear roadmap and aligns stakeholders on the performance criteria necessary for the method to support the evaluation of drug substance and drug product quality [33] [35]. This is particularly important within the framework of ICH Q14, which describes a systematic, science- and risk-based enhanced approach for analytical procedure development [33].

Core Components of an Effective ATP

An effective ATP is constructed from several key components that collectively define the analytical needs. The table below outlines these essential elements and their descriptions.

Table 1: Key Components of an Analytical Target Profile

| Component | Description |
|---|---|
| Intended Purpose | A clear description of what the analytical procedure is meant to measure (e.g., quantitation of an active ingredient, impurity level, or biological activity) [33] [34]. |
| Technology Selection | The specific analytical technology chosen (e.g., HPLC, cell-based assay, ELISA) and the rationale for its selection [33]. |
| Link to CQAs | A summary of how the procedure provides reliable results for the Critical Quality Attributes (CQAs) it assesses [33]. |
| Performance Characteristics | The specific performance criteria the method must meet, such as accuracy, precision, specificity, and range [33] [35] [34]. |
| Acceptance Criteria | The predefined, justified limits for each performance characteristic that define the minimum acceptable performance [33] [34]. |
| Reportable Range | The range of analyte concentrations over which the method must meet the accuracy and precision criteria [33] [35]. |

The characteristics of the reportable result—namely the performance characteristics and their acceptance criteria—form the core of the ATP. These criteria relate to the maximum uncertainty associated with the reportable result that is acceptable for making confident quality decisions [32]. The ATP should define the required level for characteristics such as:

- Specificity/Selectivity: The ability to distinguish the analyte from other components [35] [34].

- Accuracy: The closeness of agreement between the measured value and a true or accepted reference value [35] [34].

- Precision: The degree of agreement among individual measurements (repeatability, intermediate precision) [35].

- Linearity and Range: The ability to obtain results directly proportional to analyte concentration within a given range [35].

- Robustness: A measure of the method's capacity to remain unaffected by small, deliberate variations in procedural parameters [35].
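One way to keep these ATP components together is to capture them as a small structured record that observed results can be checked against. The sketch below is illustrative only: the class names, field names, and every numeric limit are assumptions made for this example and are not prescribed by ICH Q14 or any other guideline.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Criterion:
    """An acceptance criterion for one performance characteristic."""
    characteristic: str
    lower: Optional[float]  # None means no lower limit
    upper: Optional[float]  # None means no upper limit
    unit: str

    def is_met(self, observed: float) -> bool:
        if self.lower is not None and observed < self.lower:
            return False
        if self.upper is not None and observed > self.upper:
            return False
        return True


@dataclass
class AnalyticalTargetProfile:
    """Hypothetical container mirroring the ATP components in the table above."""
    intended_purpose: str
    technology: str
    linked_cqa: str
    reportable_range: tuple[float, float]  # e.g., % of target concentration
    criteria: list[Criterion] = field(default_factory=list)

    def evaluate(self, observed: dict[str, float]) -> dict[str, bool]:
        """Check each criterion for which an observed value was supplied."""
        return {
            c.characteristic: c.is_met(observed[c.characteristic])
            for c in self.criteria
            if c.characteristic in observed
        }


# Example ATP for an assay method; all values are invented for illustration.
example_atp = AnalyticalTargetProfile(
    intended_purpose="Quantitation of active ingredient in drug product",
    technology="HPLC",
    linked_cqa="Assay (strength)",
    reportable_range=(80.0, 120.0),
    criteria=[
        Criterion("accuracy", lower=98.0, upper=102.0, unit="% recovery"),
        Criterion("precision", lower=None, upper=2.0, unit="% RSD"),
    ],
)

print(example_atp.evaluate({"accuracy": 99.6, "precision": 0.8}))
```

Because the criteria are stored as data rather than hard-coded checks, the same record can be reused when the procedure is re-evaluated later in its lifecycle.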

Protocol for Establishing an ATP

The process of developing and implementing an ATP is systematic and can be broken down into a series of key steps. The following workflow diagram illustrates the lifecycle of an ATP from initiation to post-implementation monitoring.

Diagram Title: ATP Establishment and Lifecycle Workflow

Step-by-Step Experimental Protocol

Define the Intended Purpose and Link to CQAs

- Objective: Clearly articulate what the analytical procedure is intended to measure and how it connects to the product's Critical Quality Attributes (CQAs) [33].