Navigating Regulatory Pathways: A Strategic Guide to Biomarker Endpoint Validation in Drug Development

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive guide to the validation of biomarker endpoints across major regulatory frameworks. It explores foundational concepts from the FDA-NIH BEST Resource, details the fit-for-purpose validation methodology, and analyzes regulatory pathways including the FDA's Biomarker Qualification Program (BQP) and early engagement strategies. Through troubleshooting of common challenges like protracted timelines and evolving requirements, and a comparative look at global standards, the article offers actionable strategies for successfully integrating biomarkers into drug development to accelerate regulatory approval and advance patient-centric therapies.

Biomarker Endpoints 101: Definitions, Categories, and Regulatory Significance

In the pursuit of efficient drug development and regulatory approval, the use of precise biological measures is paramount. The FDA-NIH Biomarker Working Group established the BEST (Biomarkers, EndpointS, and other Tools) Resource to harmonize and clarify the terms used in translational science and medical product development [1]. This glossary provides a common language for communication between researchers, drug developers, and regulatory agencies, forming the foundation for a modern, evidence-based approach to therapy development [1] [2].

A biomarker is defined as "a defined characteristic that is measured as an indicator of normal biological processes, pathogenic processes, or responses to an exposure or intervention, including therapeutic interventions" [3] [1] [2]. Molecular, histologic, radiographic, or physiologic characteristics can all serve as biomarkers [1].

A surrogate endpoint is a specific type of biomarker used in clinical trials. It is "a clinical trial endpoint used as a substitute for a direct measure of how a patient feels, functions, or survives" [4]. A surrogate endpoint does not measure the clinical benefit of primary interest itself but is instead expected to predict that clinical benefit based on epidemiologic, therapeutic, pathophysiologic, or other scientific evidence [4] [5].

Table 1: Core Definitions from the BEST Resource

| Term | Definition | Key Differentiator |

|---|---|---|

| Biomarker [1] [2] | A defined characteristic measured as an indicator of normal biological processes, pathogenic processes, or responses to an exposure or intervention. | A broad category of measurable indicators. |

| Surrogate Endpoint [4] | A biomarker used as a substitute for a direct measure of how a patient feels, functions, or survives. | A specific application of a biomarker as a trial endpoint to predict clinical benefit. |

| Clinical Outcome Assessment (COA) [4] | A measure describing or reflecting how an individual feels, functions, or survives. | Based on a report from a patient, clinician, or observer; not a biological measure. |

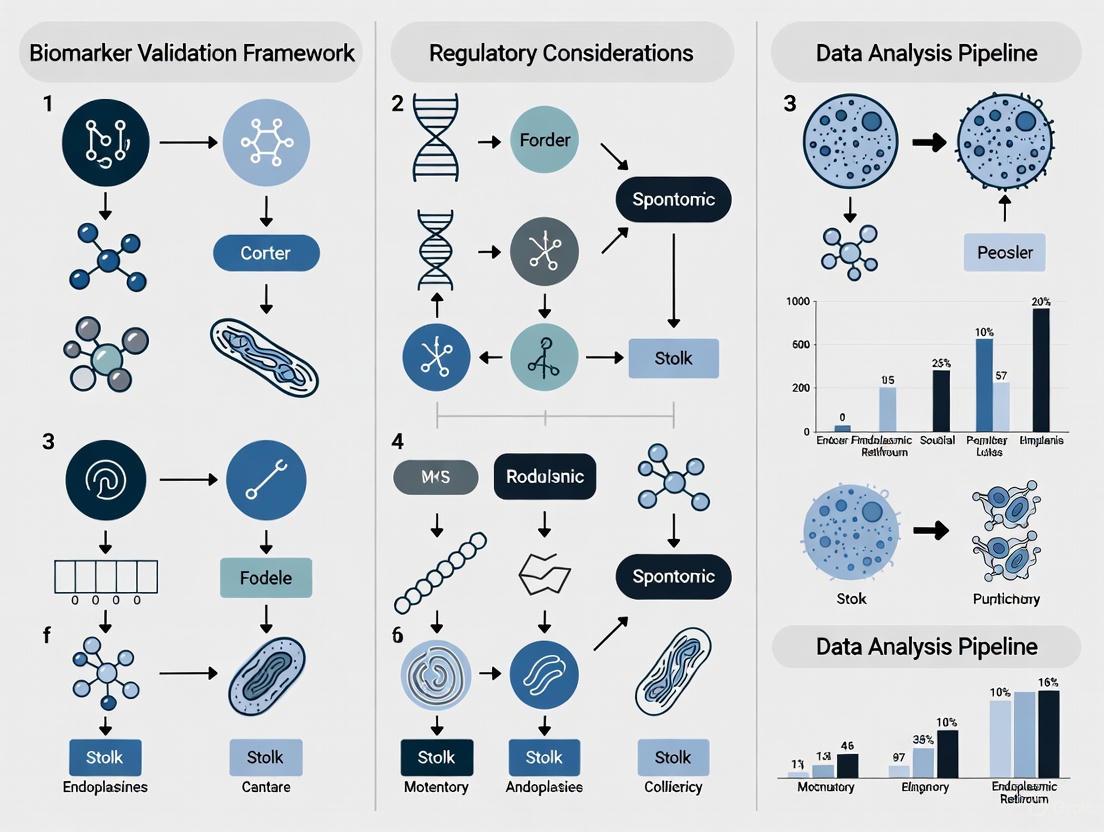

Diagram 1: Conceptual relationship between a biomarker, a surrogate endpoint, and the final clinical outcome within the BEST framework.

Biomarker Categories within the BEST Framework

The BEST Resource categorizes biomarkers based on their specific application in the disease and treatment continuum [1] [2]. Understanding these categories is critical for selecting the right tool for a given drug development challenge. An individual biomarker can fall into more than one category depending on its use [2].

Table 2: Biomarker Categories and Applications in Drug Development

| Biomarker Category | Definition | Example |

|---|---|---|

| Susceptibility/Risk | Indicates the potential for developing a disease or condition in an individual without clinically apparent disease [1]. | BRCA1/2 mutations for breast/ovarian cancer risk [2]. |

| Diagnostic | Used to detect or confirm the presence of a disease or condition, or to identify individuals with a disease subtype [1]. | Hemoglobin A1c for diagnosing diabetes; IDH1/2 mutations for glioma classification [2] [1]. |

| Monitoring | Measured serially to assess the status of a disease or medical condition, or for evidence of exposure to a medical product [1]. | Contrast-enhanced MRI for brain tumors; HCV RNA viral load [1] [2]. |

| Prognostic | Used to identify the likelihood of a clinical event, disease recurrence, or progression in patients with the disease of interest [1]. | MGMT promoter methylation in glioma; total kidney volume for polycystic kidney disease [1] [2]. |

| Predictive | Used to identify individuals who are more likely to experience a favorable or unfavorable effect from a medical product [1]. | EGFR mutation status for response to tyrosine kinase inhibitors in NSCLC [2]. |

| Pharmacodynamic/Response | Shows that a biological response has occurred in an individual exposed to a medical product [1]. | HIV RNA viral load reduction in HIV treatment; blood pressure response to anti-hypertensives [2] [6]. |

| Safety | Measured before or after exposure to indicate the likelihood, presence, or extent of toxicity as an adverse effect [1]. | Serum creatinine for acute kidney injury [2]. |

The Role of Surrogate Endpoints

Surrogate endpoints are a critical tool for accelerating drug development, particularly when measuring the true clinical outcome (like survival) would take many years or is otherwise impractical [4]. Their use is grounded in the premise that a change in the surrogate reliably predicts a change in a meaningful clinical outcome.

Validation Levels for Surrogate Endpoints

Regulatory acceptance of a surrogate endpoint depends on the level of evidence supporting its predictive value. The FDA recognizes different levels of clinical validation [4]:

- Candidate Surrogate Endpoint: An endpoint still under evaluation for its ability to predict clinical benefit.

- Reasonably Likely Surrogate Endpoint: Supported by strong mechanistic and/or epidemiologic rationale, but with insufficient clinical data for full validation. This level can support the Accelerated Approval program, which provides patients with serious diseases more rapid access to promising therapies [4].

- Validated Surrogate Endpoint: Supported by a clear mechanistic rationale and clinical data providing strong evidence that an effect on the surrogate endpoint predicts a specific clinical benefit. This level can support traditional approval [4].
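The three levels above can be restated as a small lookup that ties each level to the approval pathway the text says it can support. This is only an illustrative sketch: the names `SurrogateLevel`, `APPROVAL_PATHWAY`, and `supported_pathway` are hypothetical and do not come from any FDA resource.

```python
from enum import Enum


class SurrogateLevel(Enum):
    """Levels of clinical validation for a surrogate endpoint, as listed above."""
    CANDIDATE = "candidate"
    REASONABLY_LIKELY = "reasonably likely"
    VALIDATED = "validated"


# Pathway each level can support according to the list above.
# None means the endpoint is still under evaluation.
APPROVAL_PATHWAY = {
    SurrogateLevel.CANDIDATE: None,
    SurrogateLevel.REASONABLY_LIKELY: "Accelerated Approval",
    SurrogateLevel.VALIDATED: "Traditional approval",
}


def supported_pathway(level: SurrogateLevel):
    """Return the approval pathway a surrogate endpoint at this level can support."""
    return APPROVAL_PATHWAY[level]


print(supported_pathway(SurrogateLevel.REASONABLY_LIKELY))
```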

Table 3: Examples of Validated and "Reasonably Likely" Surrogate Endpoints

| Surrogate Endpoint | Clinical Outcome | Level of Validation / Regulatory Context |

|---|---|---|

| Reduction in LDL Cholesterol [5] | Reduction in cardiovascular events | Validated Surrogate Endpoint |

| Reduction in Blood Pressure [5] | Reduction in stroke risk | Validated Surrogate Endpoint |

| Reduction in HIV Viral Load [2] | Increased survival and reduced AIDS-defining events | Validated Surrogate Endpoint |

| Tumor Response Rate [4] | Improved overall survival or quality of life | Can be a "Reasonably Likely" endpoint supporting Accelerated Approval in oncology |

Validation Frameworks and Regulatory Pathways

The journey from biomarker discovery to regulatory acceptance is a rigorous process. The level of evidence required for a biomarker depends on its Context of Use (COU), which is a concise description of the biomarker's specified use in drug development [2]. The principle of "fit-for-purpose" validation is central to this process, meaning the validation effort should be tailored to the specific application and the consequences of being wrong [2].

The Two Pillars of Biomarker Validation

For any biomarker to be considered reliable, it must undergo two distinct but complementary validation processes:

Analytical Validation: This assesses the performance characteristics of the biomarker assay itself. It is the proof that the test is technically robust and reliably measures what it is supposed to measure [6]. Key parameters include [7] [6]:

- Accuracy: Does the assay measure the true value?

- Precision: Does the assay give the same result for the same sample every time?

- Analytical Sensitivity: What is the minimum amount of analyte that can be reliably detected?

- Analytical Specificity: Does the assay only measure the intended analyte?

Clinical Validation: This demonstrates that the biomarker can be used for the clinical purpose for which it has been designed. It is the proof that the biomarker result is associated with the clinical outcome of interest (e.g., disease presence, future progression, or response to therapy) [6]. This involves assessing metrics like sensitivity, specificity, and positive/negative predictive value in a patient population distinct from the one used for discovery to avoid "overfitting" [3] [6].
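As a minimal sketch of the clinical validation metrics named above, the following computes sensitivity, specificity, and predictive values from a 2x2 table of biomarker result against true clinical status. The counts are invented for illustration and the names `ConfusionCounts` and `clinical_metrics` are hypothetical. In practice the counts would come from a validation cohort separate from the discovery cohort.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Counts from comparing biomarker result with true clinical status."""
    tp: int  # biomarker positive, condition present
    fp: int  # biomarker positive, condition absent
    fn: int  # biomarker negative, condition present
    tn: int  # biomarker negative, condition absent


def clinical_metrics(c: ConfusionCounts) -> dict:
    """Compute standard clinical performance metrics from a 2x2 table."""
    return {
        "sensitivity": c.tp / (c.tp + c.fn),
        "specificity": c.tn / (c.tn + c.fp),
        "ppv": c.tp / (c.tp + c.fp),
        "npv": c.tn / (c.tn + c.fn),
    }


# Hypothetical validation-cohort counts, for illustration only.
counts = ConfusionCounts(tp=80, fp=10, fn=20, tn=90)
metrics = clinical_metrics(counts)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Note that, unlike sensitivity and specificity, the predictive values depend on disease prevalence in the cohort, so they do not transfer directly to populations with a different prevalence.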

Diagram 2: The sequential, interdependent workflow for biomarker validation, culminating in regulatory acceptance.

Pathways to Regulatory Acceptance

There are several pathways for achieving regulatory acceptance of a biomarker for use in drug development [2]:

- Early Engagement: Drug developers can engage with the FDA early via pathways like Critical Path Innovation Meetings (CPIM) or the pre-Investigational New Drug (IND) process to discuss biomarker validation plans [2].

- IND Process: This is a common pathway for biomarkers used within a specific drug development program. A Type C meeting can be used specifically to discuss novel surrogate endpoints [4].

- Biomarker Qualification Program (BQP): The FDA's BQP provides a structured framework for the development and regulatory acceptance of biomarkers for a specific COU [8] [2]. This pathway is more resource-intensive, but once a biomarker is qualified, any drug developer can use it within the specified COU without re-review [2].

Essential Research Toolkit and Experimental Protocols

Successfully discovering and validating biomarkers requires a suite of sophisticated tools and carefully designed experiments.

Research Reagent Solutions and Key Materials

Table 4: Essential Research Reagents and Materials for Biomarker Research

| Item / Technology | Function in Biomarker Research |

|---|---|

| Next-Generation Sequencing (NGS) [3] | Enables high-throughput discovery of genomic, transcriptomic, and epigenomic biomarkers from tissue or liquid biopsy samples. |

| Liquid Biopsy (ctDNA analysis) [3] [9] | Provides a non-invasive method for cancer detection, genotyping, and monitoring treatment response via circulating tumor DNA. |

| Patient-Derived Xenografts (PDX) & Organoids [10] | Preclinical models that closely mimic human disease biology, used for validating biomarker responses to therapeutic candidates. |

| Immunohistochemistry (IHC) / Immunoassays | Detects and quantifies protein-level biomarkers in tissue sections (IHC) or body fluids (immunoassays). |

| Artificial Intelligence / Machine Learning Platforms [9] | Analyzes complex, high-dimensional datasets (e.g., from multi-omics) to identify novel biomarker signatures and predict outcomes. |

Representative Experimental Protocol: Predictive Biomarker Identification in an RCT

The most statistically rigorous way to identify a predictive biomarker is a secondary analysis of data from a randomized clinical trial (RCT) [3].

Objective: To determine if a biomarker (e.g., EGFR mutation status) can identify patients who benefit from a new targeted therapy (e.g., gefitinib) compared to standard chemotherapy.

Methodology:

- Trial Design: Conduct a randomized controlled trial where patients are assigned to either the new therapy or the standard control therapy. Patient biomarker status may not be known at the time of enrollment [3].

- Specimen Collection: Collect and archive tumor specimens from consenting patients prior to treatment.

- Biomarker Analysis: After trial completion, analyze the archived specimens using a previously analytically validated assay to determine the biomarker status for each patient.

- Statistical Analysis:

  - The primary analysis tests for a significant interaction between treatment assignment and biomarker status in a statistical model (e.g., a Cox proportional hazards model for a time-to-event endpoint like survival) [3].

  - A statistically significant interaction term (e.g., p < 0.05) indicates that the treatment effect differs between biomarker-positive and biomarker-negative subgroups.

  - The treatment effect (e.g., Hazard Ratio for progression-free survival) is then estimated separately within the biomarker-positive and biomarker-negative groups [3].

Key Consideration: This design avoids the bias inherent in non-randomized comparisons and provides the highest level of evidence for a predictive biomarker [3]. The IPASS study, which established EGFR mutation status as a predictive biomarker for gefitinib in NSCLC, is a classic example of this protocol [3].
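The interaction logic in the statistical analysis step can be approximated from subgroup summaries. The sketch below is not the Cox-model interaction term described in the protocol. It is a simpler stand-in that compares the log hazard ratios estimated separately in the biomarker-positive and biomarker-negative subgroups with a z-test, assuming the two subgroups are independent. The function name `interaction_z_test` and all numeric inputs are hypothetical and are not taken from IPASS or any other trial.

```python
import math
from statistics import NormalDist


def interaction_z_test(hr_pos, se_log_hr_pos, hr_neg, se_log_hr_neg):
    """Compare treatment effects between two independent subgroups.

    Takes the hazard ratio and the standard error of the log hazard ratio
    for each subgroup. Returns (z, two_sided_p) for the difference in log HRs.
    """
    diff = math.log(hr_pos) - math.log(hr_neg)
    se_diff = math.sqrt(se_log_hr_pos ** 2 + se_log_hr_neg ** 2)
    z = diff / se_diff
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p


# Hypothetical subgroup estimates, for illustration only:
# treatment helps biomarker-positive patients (HR 0.5) but not
# biomarker-negative patients (HR 1.2).
z, p = interaction_z_test(hr_pos=0.5, se_log_hr_pos=0.15,
                          hr_neg=1.2, se_log_hr_neg=0.20)
print(f"z = {z:.2f}, two-sided p = {p:.5f}")
```

A small p-value here plays the same role as a significant interaction term in the full model: it suggests the treatment effect differs by biomarker status. A model fitted to patient-level data remains the preferred analysis because it can adjust for covariates.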

A biomarker is "a defined characteristic that is measured as an indicator of normal biological processes, pathogenic processes, or responses to an exposure or intervention, including therapeutic interventions" [11]. These measurable indicators can include molecular, histologic, radiographic, or physiologic characteristics [12] [11]. In modern drug development and clinical practice, biomarkers serve as critical tools for bridging the gap between laboratory discovery and patient bedside application, ultimately accelerating the development of new therapeutics and improving their benefit-risk profile [13].

The regulatory landscape for biomarkers has evolved significantly, with the U.S. Food and Drug Administration (FDA) and European Medicines Agency (EMA) establishing formal qualification processes [14] [15] [13]. The Biomarkers, EndpointS, and other Tools (BEST) resource, developed jointly by the FDA and National Institutes of Health (NIH), provides standardized definitions and a framework for biomarker application [12]. A fundamental distinction in regulatory science separates biomarkers from Clinical Outcome Assessments (COAs), which directly measure how a patient feels, functions, or survives [12]. This distinction is crucial because COAs typically form the basis for regulatory approval of therapeutics, while biomarkers serve various supporting purposes throughout drug development [12].

The validation of biomarker endpoints across different regulatory frameworks requires a rigorous, fit-for-purpose approach where the level of validation is tailored to the biomarker's intended clinical use [15] [16]. This process involves both analytical validation (establishing that the test accurately and reliably measures the biomarker) and clinical validation (demonstrating that the biomarker measurement correctly corresponds to the clinical endpoint for a specific context of use) [16]. Understanding the precise taxonomy and application of different biomarker classes is fundamental to their successful implementation in both drug development and clinical practice.

Biomarker Taxonomy and Comparative Analysis

The BEST resource defines seven primary biomarker categories based on their application [12] [11]. This guide focuses on five core types most frequently encountered in therapeutic development: diagnostic, prognostic, predictive, pharmacodynamic/response, and safety biomarkers. Each category serves a distinct purpose in the drug development continuum, from early discovery through clinical trials and post-market monitoring.

Table 1: Comparative Analysis of Core Biomarker Types

| Biomarker Type | Primary Function | Representative Examples | Regulatory Considerations |

|---|---|---|---|

| Diagnostic | Detects or confirms the presence of a disease or condition of interest, or identifies a disease subtype [12] [16]. | Prostate-Specific Antigen (PSA) for prostate cancer [17] [18]; C-Reactive Protein (CRP) for inflammation [17]. | Requires high specificity and sensitivity; context of use is critical for test interpretation [12]. |

| Prognostic | Identifies the likelihood of a clinical event, disease recurrence, or progression in patients with a diagnosed disease [12] [13]. | Ki-67 (MKI67) for tumor proliferation in breast cancer [17]; BRAF mutation status in melanoma [17]. | Must provide information independent of treatment; often used for patient stratification in trials [13]. |

| Predictive | Identifies individuals more likely than similar patients without the biomarker to experience a favorable or unfavorable effect from a specific therapeutic exposure [12] [13]. | HER2/neu status for trastuzumab response in breast cancer [17]; EGFR mutation status for erlotinib/gefitinib in non-small cell lung cancer [17] [19]. | Often linked to companion diagnostics (CDx); evidence must link biomarker to drug response [13]. |

| Pharmacodynamic/ Response | Shows that a biological response has occurred in an individual exposed to a medical product or environmental agent [12] [13]. | Reduction of LDL cholesterol with statin treatment [17]; reduction of blood pressure with antihypertensives [17]; phosphorylated AKT (pAKT) levels with PI3K inhibitor treatment [19]. | Demonstrates biological activity and mechanism of action; used for dose selection in early-phase trials [19]. |

| Safety | Indicates the likelihood, presence, or extent of toxicity as an adverse event [12] [13]. | Liver function tests (ALT, AST) for drug-induced liver injury [17]; Creatinine clearance for nephrotoxicity [17]. | Monitored before and after treatment; used to manage patient risk during development and clinical use [17]. |

Key Distinctions and Overlapping Applications

A single biomarker can fulfill multiple roles depending on its context of use. For example, BRAF mutation status serves as a prognostic biomarker in melanoma, indicating likely disease course, and also as a predictive biomarker for response to BRAF inhibitor therapies [17]. Similarly, PSA functions as a diagnostic biomarker for prostate cancer detection and a monitoring biomarker to track disease recurrence or treatment response [17] [18].

The critical distinction between prognostic and predictive biomarkers warrants emphasis. A prognostic biomarker provides information about the patient's overall disease outcome regardless of therapy, while a predictive biomarker provides information about the effect of a specific therapeutic intervention [13] [19]. This distinction is vital for clinical trial design and interpretation, as predictive biomarkers enable enrichment strategies by identifying patient populations most likely to respond to an investigational therapy.

Table 2: Methodological and Validation Requirements by Biomarker Type

| Biomarker Type | Common Detection Platforms | Key Analytical Validation Parameters | Typical Clinical Validation Endpoints |

|---|---|---|---|

| Diagnostic | Immunoassays (ELISA, MSD), PCR, NGS, Imaging [15] [18]. | Sensitivity, Specificity, Positive/ Negative Predictive Value [18] [16]. | Correlation with clinical diagnosis; disease prevalence; net reclassification index [12]. |

| Prognostic | Immunohistochemistry, PCR, NGS, Flow Cytometry [17] [18]. | Reproducibility, Precision, Dynamic Range [15]. | Hazard ratios for event-free survival, overall survival, or disease progression [13]. |

| Predictive | NGS, IHC, FISH, RT-PCR [17] [13]. | Robustness, Inter-laboratory concordance [13]. | Differential treatment effect (e.g., p-value for interaction) in biomarker-defined subgroups [13]. |

| Pharmacodynamic/ Response | LC-MS/MS, Multiplex Immunoassays (MSD), ELISA [15] [19]. | Precision at relevant effect sizes, Dynamic Range [15]. | Dose-response relationship, temporal response pattern, correlation with mechanism [19]. |

| Safety | Clinical Chemistry Analyzers, Immunoassays [17] [15]. | Accuracy at decision thresholds, Reference ranges [15]. | Correlation with clinically adverse events; positive/negative likelihood ratios [17]. |

Experimental Design and Validation Workflows

Analytical Validation Methodologies

Robust analytical validation is the foundation for any reliable biomarker application. This process establishes that the measurement assay performs acceptably in terms of key parameters including sensitivity, specificity, accuracy, and precision [16]. The choice of technology platform significantly impacts validation strategies, with a trend toward advanced methods that offer superior performance characteristics compared to traditional approaches.

Liquid Chromatography Tandem Mass Spectrometry (LC-MS/MS) provides exceptional specificity and sensitivity for quantifying low-abundance proteins and metabolites. The methodology involves sample preparation (e.g., protein precipitation, solid-phase extraction), chromatographic separation to reduce matrix effects, and mass spectrometric detection using multiple reaction monitoring (MRM) for precise quantification [15]. Key validation parameters for LC-MS/MS include linearity (across the expected concentration range), intra- and inter-assay precision (typically <15% coefficient of variation), and accuracy (85-115% of known values) [15].
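The precision and accuracy criteria quoted above can be checked with a few lines of arithmetic. This is a minimal sketch assuming replicate measurements of a quality-control sample with a known nominal concentration. The function names and the replicate values are hypothetical, and the default thresholds simply restate the typical limits given in the text.

```python
from statistics import mean, stdev


def percent_cv(replicates):
    """Coefficient of variation (%) = 100 * sample SD / mean."""
    return 100 * stdev(replicates) / mean(replicates)


def percent_accuracy(replicates, nominal):
    """Mean measured value as a percentage of the known nominal value."""
    return 100 * mean(replicates) / nominal


def passes_acceptance(replicates, nominal,
                      max_cv=15.0, acc_low=85.0, acc_high=115.0):
    """Check precision (<15% CV) and accuracy (85-115%) as stated above."""
    cv = percent_cv(replicates)
    acc = percent_accuracy(replicates, nominal)
    return cv < max_cv and acc_low <= acc <= acc_high


# Hypothetical QC replicates (ng/mL) at a nominal concentration of 10 ng/mL.
qc_replicates = [9.6, 10.4, 10.1, 9.9, 10.0]
print(f"CV: {percent_cv(qc_replicates):.2f}%")
print(f"Accuracy: {percent_accuracy(qc_replicates, 10.0):.1f}%")
print("Pass:", passes_acceptance(qc_replicates, 10.0))
```

The same functions apply to intra-assay precision (replicates within one run) and inter-assay precision (replicates across runs). Only the grouping of the input values changes.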

Multiplex Immunoassay Platforms, such as Meso Scale Discovery (MSD), enable simultaneous quantification of multiple analytes from a single small-volume sample. These assays utilize electrochemiluminescence detection, which offers up to 100-fold greater sensitivity than traditional ELISA with a broader dynamic range [15]. The experimental workflow involves coating plates with capture antibodies, incubating with samples and detection antibodies, and measuring light emission upon electrochemical stimulation. Validation requires demonstration of minimal cross-talk between analytes, parallelism (similar dilution curves in biological matrix and standard diluent), and recovery (80-120% of spiked analyte) [15].
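Spike recovery and parallelism are both simple calculations once the plate has been read. The sketch below assumes a common formulation of recovery (spiked result minus unspiked result, divided by the amount spiked) and assesses parallelism by back-calculating each diluted sample to its neat concentration. The function names and all concentrations are hypothetical. The 80-120% window restates the range quoted above.

```python
def percent_recovery(spiked_measured, unspiked_measured, spike_added):
    """Recovery (%) of a known amount of analyte spiked into matrix."""
    return 100 * (spiked_measured - unspiked_measured) / spike_added


def recovery_acceptable(recovery, low=80.0, high=120.0):
    """Check recovery against the 80-120% window stated above."""
    return low <= recovery <= high


def dilution_corrected(measured_by_dilution):
    """Back-calculate neat concentrations from diluted measurements.

    Takes a mapping of dilution factor -> measured concentration.
    For a parallel assay the corrected values should agree closely.
    """
    return {factor: measured * factor
            for factor, measured in measured_by_dilution.items()}


# Hypothetical values in pg/mL, for illustration only.
recovery = percent_recovery(spiked_measured=148.0,
                            unspiked_measured=50.0,
                            spike_added=100.0)
print(f"Recovery: {recovery:.1f}%  acceptable: {recovery_acceptable(recovery)}")

corrected = dilution_corrected({2: 60.0, 4: 31.0, 8: 15.0})
print("Dilution-corrected concentrations:", corrected)
```

How closely the dilution-corrected values must agree is assay-specific and is not stated in the text, so no parallelism threshold is applied here.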

Biomarker Qualification Pathways

The regulatory qualification process for biomarkers has been formally established by both the FDA and EMA to provide a pathway for biomarkers to be accepted for specific contexts of use (COU) in drug development [11] [14]. The FDA's Biomarker Qualification Program follows a collaborative, multi-stage submission process as outlined in the 21st Century Cures Act [11] [14].

The following diagram illustrates the key stages and decision points in the biomarker qualification journey, highlighting the iterative nature of biomarker development and regulatory interaction:

Diagram 1: Biomarker Qualification Pathway. This diagram outlines the iterative, multi-stage process for regulatory qualification of biomarkers, as established by the FDA's Biomarker Qualification Program under the 21st Century Cures Act [11] [14].

The qualification process begins with submission of a Letter of Intent (LOI) that describes the biomarker, its proposed context of use, and the unmet drug development need it addresses [11]. If accepted, sponsors submit a detailed Qualification Plan (QP) outlining the development strategy, including analytical validation data and plans to address knowledge gaps [11]. The final stage involves submission of a Full Qualification Package (FQP) containing comprehensive evidence supporting the biomarker's qualification for the specified COU [11]. Throughout this process, regulatory agencies provide iterative feedback, and qualification decisions are based on the strength of evidence demonstrating that the biomarker can be reliably measured and interpreted for its intended use [11] [14].
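The three submission stages can be pictured as an ordered sequence in which a sponsor advances only after the current submission is accepted. The following is a deliberately simplified model of that sequence, not a representation of any FDA system. The names `QualificationStage`, `STAGE_ORDER`, and `next_stage` are hypothetical, and the iterative feedback is reduced to "stay at the current stage and revise."

```python
from enum import Enum


class QualificationStage(Enum):
    LOI = "Letter of Intent"
    QP = "Qualification Plan"
    FQP = "Full Qualification Package"
    QUALIFIED = "Qualified for the specified COU"


STAGE_ORDER = [
    QualificationStage.LOI,
    QualificationStage.QP,
    QualificationStage.FQP,
    QualificationStage.QUALIFIED,
]


def next_stage(current: QualificationStage, accepted: bool) -> QualificationStage:
    """Advance one stage if the submission was accepted, else stay and revise."""
    if not accepted or current is QualificationStage.QUALIFIED:
        return current
    return STAGE_ORDER[STAGE_ORDER.index(current) + 1]


# Walk a hypothetical submission history: the QP needs one revision cycle.
stage = QualificationStage.LOI
for decision in [True, False, True, True]:
    stage = next_stage(stage, decision)
    print(stage.value)
```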

Visualization of Biomarker Applications in Drug Development

Biomarkers serve distinct but complementary functions throughout the drug development continuum. The following diagram illustrates how different biomarker types are strategically employed across discovery, preclinical testing, clinical trials, and post-market monitoring to inform critical development decisions:

Diagram 2: Biomarker Applications Across the Drug Development Continuum. Different biomarker types provide critical decision-making support at specific stages of therapeutic development, from early discovery through post-market surveillance [12] [13] [19].

Essential Research Reagents and Technologies

The successful development and validation of biomarkers relies on a comprehensive toolkit of research reagents and analytical technologies. The selection of appropriate tools is critical for generating robust, reproducible data that meets regulatory standards for analytical validity.

Table 3: Research Reagent Solutions for Biomarker Development

| Reagent/Technology | Primary Function | Key Applications | Performance Considerations |

|---|---|---|---|

| High-Specificity Antibodies | Selective binding and detection of target proteins in complex biological matrices [17] [18]. | Immunoassays (ELISA, MSD), Immunohistochemistry (IHC), Western Blot [17]. | Specificity (minimal cross-reactivity), affinity, lot-to-lot consistency, validation in intended application [18]. |

| PCR & NGS Assays | Detection and quantification of nucleic acid biomarkers (DNA, RNA) including mutations and expression levels [17] [18]. | Genetic, prognostic, and predictive biomarker analysis; gene expression profiling [17]. | Sensitivity (detection limit), specificity, reproducibility, coverage of relevant variants [18]. |

| LC-MS/MS Platforms | Highly specific and sensitive quantification of small molecules, metabolites, and proteins [15]. | Pharmacodynamic biomarker analysis, therapeutic drug monitoring, metabolic profiling [15]. | Linear dynamic range, sensitivity (lower limit of quantitation), sample throughput, matrix effect minimization [15]. |

| Multiplex Immunoassay Platforms (e.g., MSD) | Simultaneous measurement of multiple protein biomarkers from a single small-volume sample [15]. | Cytokine profiling, signaling pathway analysis, biomarker signature validation [15]. | Multiplexing capability (number of analytes), dynamic range, sensitivity compared to ELISA, minimal cross-talk [15]. |

| Reference Standards & Controls | Calibration of assays and monitoring of performance over time and across laboratories [16]. | All quantitative biomarker assays requiring standardization [16]. | Well-characterized composition, stability, commutability with clinical samples [16]. |

The evolution of biomarker technologies has progressively shifted from single-analyte approaches to multiplexed platforms that can measure dozens to hundreds of biomarkers simultaneously. While ELISA remains widely used for single protein biomarker quantification due to its established workflow and relatively low cost, advanced platforms like MSD and LC-MS/MS offer significant advantages in sensitivity, dynamic range, and multiplexing capability [15]. For genetic biomarkers, next-generation sequencing (NGS) has largely replaced older technologies by enabling comprehensive profiling of multiple genomic alterations in a single assay [18].

The selection of appropriate research reagents must align with the intended context of use and regulatory requirements. For biomarkers advancing toward clinical implementation, reagents must undergo rigorous validation to ensure consistency, reliability, and reproducibility across multiple laboratories and over time [16]. This is particularly critical for predictive biomarkers used to guide treatment decisions, where analytical performance directly impacts patient care [13].

The precise classification of biomarkers into diagnostic, prognostic, predictive, pharmacodynamic/response, and safety categories provides a critical framework for their appropriate application throughout therapeutic development. Each category serves distinct purposes and carries specific requirements for analytical validation, clinical evidence generation, and regulatory qualification. The evolving landscape of biomarker science continues to be shaped by technological advancements in detection platforms, with multiplexed assays and highly sensitive mass spectrometry methods increasingly supplanting traditional approaches.

The validation of biomarker endpoints across different regulatory frameworks demands a deliberate, context-driven strategy that prioritizes both analytical robustness and clinical relevance. As regulatory agencies continue to refine qualification pathways through programs like the FDA's Biomarker Qualification Program and the EMA's Qualification of Novel Methodologies, the importance of early engagement and collaborative evidence generation cannot be overstated. By understanding the distinct taxonomy, applications, and validation requirements for different biomarker types, researchers and drug developers can more effectively leverage these powerful tools to accelerate the development of safe, effective, and targeted therapies.

The Critical Role of Context of Use (COU) in Defining Validation Requirements

In the realm of drug development and regulatory science, the Context of Use (COU) serves as a foundational framework that dictates the validation requirements for biomarker endpoints. The U.S. Food and Drug Administration (FDA) defines COU as a "concise description of the biomarker's specified use in drug development" that includes both the BEST (Biomarkers, EndpointS, and other Tools) biomarker category and the biomarker's intended application within the development process [20]. This conceptual framework is not merely administrative but represents a critical strategic tool that aligns biomarker development with regulatory expectations, ensuring that validation efforts are appropriately scaled to the biomarker's decision-making role.

The COU framework operates on the principle of "fit-for-purpose" validation, where the level of evidence required to support a biomarker's use depends entirely on its specific context and application [2] [21]. This approach recognizes that different biomarker types and intended uses demand distinct validation strategies, focusing on specific evidence characteristics based on their proposed role in drug development or clinical care. A biomarker's journey from discovery to regulatory acceptance hinges on precisely defining this COU early in development, as it directly influences the design of analytical and clinical validation studies, the extent of documentation required, and ultimately, regulatory acceptance [22] [21].

Biomarker Categories and Their Contexts of Use

The BEST Biomarker Classification System

The FDA-NIH BEST Resource establishes a standardized taxonomy for biomarkers, categorizing them based on their specific applications in drug development [2]. Understanding these categories is essential for properly defining a biomarker's COU, as each category carries distinct validation requirements and regulatory considerations.

Table: Biomarker Categories and Their Contexts of Use

| Biomarker Category | Primary Function | Example Context of Use | Key Validation Focus |
|---|---|---|---|
| Diagnostic | Identify or confirm presence of a disease or condition | Diagnose diabetes and pre-diabetes in adults using Hemoglobin A1c [2] | Sensitivity, specificity, and accurate disease identification across diverse populations [2] |
| Monitoring | Assess disease status or response to treatment over time | Monitor response to antiviral therapy in patients with chronic Hepatitis C using HCV RNA viral load [2] | Ability to reflect disease status changes over time with reliability [2] |
| Pharmacodynamic/Response | Show biological response to therapeutic intervention | Use of HIV RNA viral load as a surrogate for clinical benefit in HIV drug trials [2] | Evidence of direct relationship between drug action and biomarker changes; biological plausibility [2] |
| Predictive | Identify likelihood of response to specific treatment | Predict response to EGFR tyrosine kinase inhibitors in patients with NSCLC using EGFR mutation status [2] | Sensitivity, specificity, and mechanistic link to treatment response; often requires causality [2] |
| Prognostic | Forecast disease course or outcome regardless of treatment | Define higher risk disease population using total kidney volume for autosomal dominant polycystic kidney disease [2] | Robust clinical data showing consistent correlation with disease outcomes [2] |
| Safety | Detect or monitor drug-induced toxicity | Monitor renal function and potential nephrotoxicity during drug treatment using serum creatinine [2] | Consistent indication of potential adverse effects across different populations and drug classes [2] |
| Susceptibility/Risk | Identify individuals with increased probability of developing a condition | Identify individuals with increased risk of developing breast or ovarian cancer using BRCA1 and BRCA2 genetic mutations [2] | Epidemiological evidence, genetic evidence, biological plausibility, and establishing causality [2] |

Complexities in Biomarker Categorization

A single biomarker may fall into multiple categories depending on its specific application, illustrating the critical importance of precisely defining the COU. For example, Hemoglobin A1c serves as both a diagnostic biomarker for identifying patients with diabetes and a monitoring biomarker for assessing long-term glycemic control in diagnosed individuals [2]. This duality necessitates different validation approaches for each distinct context of use. The validation requirements for diagnosing disease differ significantly from those for monitoring treatment response, particularly in the stringency of analytical performance characteristics and the clinical evidence needed to support the intended use [2] [23].

How COU Dictates Validation Strategies

Fit-for-Purpose Validation Framework

The fit-for-purpose validation approach recognizes that the level of evidence required to support a biomarker's use depends entirely on its COU and the consequences of potential decision errors [2] [21]. This principle forms the cornerstone of efficient biomarker development, ensuring resources are allocated appropriately based on the biomarker's role in the drug development process. For example, a biomarker used for early internal decision-making may require less extensive validation than one used as a primary endpoint in a pivotal trial or to support regulatory claims [21].

The analytical and clinical validation requirements vary significantly across biomarker categories. Analytical validation assesses the performance characteristics of the biomarker measurement tool, including accuracy, precision, analytical sensitivity, analytical specificity, reportable range, and reference range [2]. Clinical validation, in contrast, demonstrates that the biomarker accurately identifies or predicts the clinical outcome of interest, often involving assessments of sensitivity, specificity, and predictive values in the intended population [2]. The FDA also considers the benefit/risk assessment of using a biomarker, including consequences of false positive or false negative results and availability of alternative tools [2].
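The clinical validation metrics named above can be computed from a 2x2 comparison of biomarker results against a clinical reference standard. The following is a minimal sketch, not a prescribed regulatory method; the class name, function name, and all counts are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """2x2 counts of biomarker result versus the clinical reference standard."""
    true_pos: int
    false_pos: int
    false_neg: int
    true_neg: int


def diagnostic_metrics(c: ConfusionCounts) -> dict:
    """Return sensitivity, specificity, PPV, and NPV for a 2x2 table."""
    return {
        "sensitivity": c.true_pos / (c.true_pos + c.false_neg),
        "specificity": c.true_neg / (c.true_neg + c.false_pos),
        "ppv": c.true_pos / (c.true_pos + c.false_pos),
        "npv": c.true_neg / (c.true_neg + c.false_neg),
    }


# Hypothetical counts, for illustration only (not data from any study).
counts = ConfusionCounts(true_pos=90, false_pos=20, false_neg=10, true_neg=180)
metrics = diagnostic_metrics(counts)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Note that the predictive values depend on disease prevalence in the sampled population, which is one reason the intended population is part of the COU.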

Case Study: Same Biomarker, Different Validation Requirements

A compelling example from industry illustrates how the same biomarker requires completely different validation approaches based on its COU [21]. In two separate Phase I trials evaluating different investigational drugs, the same complement factor protein was used as a biomarker but with distinct contexts of use:

Table: Case Study - Same Biomarker with Different Contexts of Use

| Aspect | Case Study A: PD Response Biomarker | Case Study B: Predictive Stratification Biomarker |
|---|---|---|
| Context of Use | Measure pharmacodynamic response to a drug designed to suppress complement activity | Stratify patients based on baseline levels to identify those more likely to respond to treatment |
| Key Analytical Requirement | Accurate and reproducible baseline measurements to calculate percent change from pre-dose | High precision across a narrow spectrum related to clinical decision points |
| Consequence of Error | Minor impact on response quantification due to expected large effect size | False positives/negatives in patient selection, potentially excluding responsive patients or including non-responsive ones |
| Validation Focus | Reliability at pre-dose point; dynamic variability across range is acceptable | Precision and reproducibility around specific clinical thresholds |

This case study demonstrates that the identical biomarker demands tailored validation strategies based solely on its context of use. In Case A, where large fold-changes were expected, the focus was on baseline measurement reliability, while post-dose variability was acceptable. In Case B, where the biomarker determined patient eligibility, precise measurement around specific thresholds became critical [21].
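The contrast between the two cases can be made concrete with a small calculation. The sketch below is an illustration under stated assumptions, not the method used in the cited case study: it assumes normally distributed measurement error with a standard deviation equal to the assay CV times the true value, and every number (threshold, concentrations, CVs) is hypothetical.

```python
import math


def normal_cdf(z: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def misclassification_probability(true_value: float, threshold: float, cv: float) -> float:
    """Probability that a single measurement lands on the wrong side of a threshold.

    Assumes normal measurement error with SD = cv * true_value (cv as a
    fraction, e.g. 0.10 for 10%). Requires true_value > 0 and cv > 0.
    """
    sd = cv * true_value
    p_below = normal_cdf((threshold - true_value) / sd)  # P(measured < threshold)
    return p_below if true_value >= threshold else 1.0 - p_below


def percent_change(baseline: float, post_dose: float) -> float:
    """Percent change from the pre-dose baseline."""
    return 100.0 * (post_dose - baseline) / baseline


# Case B style question: a patient whose true level sits just above a
# hypothetical eligibility threshold of 100 units.
for cv in (0.05, 0.10, 0.20):
    p = misclassification_probability(true_value=110.0, threshold=100.0, cv=cv)
    print(f"CV {cv:.0%}: probability of wrong-side result = {p:.3f}")

# Case A style question: a large expected suppression is still evident even
# if the baseline reading is off by 15% (hypothetical values).
print(percent_change(baseline=100.0, post_dose=20.0))
print(percent_change(baseline=115.0, post_dose=20.0))
```

Under these assumptions, tightening the CV sharply reduces wrong-side results near the threshold, while the large percent change in the PD setting is comparatively insensitive to baseline error.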

Regulatory Pathways and COU Considerations

Biomarker Qualification Program (BQP)

The FDA's Biomarker Qualification Program (BQP) provides a structured framework for the development and regulatory acceptance of biomarkers for a specific COU [2] [24]. This program involves three stages: Letter of Intent, Qualification Plan, and Full Qualification Package, offering a pathway for broader acceptance of biomarkers across multiple drug development programs rather than just within a single drug application [2].

Recent analyses of the BQP reveal important patterns in regulatory acceptance. As of 2025, safety biomarkers (30%), diagnostic biomarkers (21%), and pharmacodynamic response biomarkers (20%) represent the most common categories in accepted qualification projects [24]. However, the program has demonstrated limited success for biomarkers intended as surrogate endpoints, with only five such projects accepted and none reaching qualification [24]. This highlights the particularly challenging evidence requirements for surrogate endpoint biomarkers, which must demonstrate not only correlation with clinical outcomes but also that treatment effects on the biomarker reliably predict effects on the ultimate clinical outcome [25].

The BQP process involves substantial timelines, particularly for complex biomarkers. Development of a Qualification Plan takes a median of 32 months, with surrogate endpoints requiring even longer at 47 months [24]. These extended timelines underscore the importance of early planning and engagement with regulatory agencies for biomarkers intended to support significant regulatory decisions.

Alternative Regulatory Pathways

Beyond the BQP, several pathways exist for regulatory acceptance of biomarkers. Early engagement mechanisms such as Critical Path Innovation Meetings (CPIM) allow developers to discuss biomarker validation plans before substantial investment [2]. The IND application process provides another avenue for pursuing clinical validation within specific drug development programs, which may be more efficient for well-established biomarkers with existing supporting data [2].

For digital health technology-derived biomarkers, additional considerations apply. Regulatory acceptance requires demonstration that the DHT is "fit-for-purpose" for its intended use, with the evidentiary burden varying depending on whether the biomarker will be used for exploratory purposes or to support primary endpoints in pivotal trials [26]. The recent qualification of stride velocity 95th centile as a primary endpoint for ambulatory Duchenne Muscular Dystrophy studies by the European Medicines Agency demonstrates the evolving regulatory landscape for novel biomarker modalities [26].

Experimental Protocols for Biomarker Validation

Analytical Validation Methodology

The analytical validation process establishes that a biomarker assay reliably measures the intended analyte with appropriate precision, accuracy, and reproducibility. The following protocol outlines key experiments required for analytical validation:

1. Precision and Accuracy Assessment:

- Within-run precision: Analyze at least 5 replicates of 3 different analyte concentrations (low, medium, high) in a single run

- Between-run precision: Analyze the same samples across 3 different runs, 3 different days, with 2 analysts

- Acceptance criteria: Coefficient of variation (CV) typically ≤15-20% for biomarkers, though this should be fit-for-purpose based on COU [21]

2. Sensitivity Determination:

- Lower Limit of Quantification (LLOQ): Determine the lowest concentration measurable with ≤20% CV and 80-120% accuracy using serial dilutions

- Upper Limit of Quantification (ULOQ): Establish the highest concentration measurable with acceptable precision and accuracy

- Limit of Detection (LOD): Identify the lowest concentration detectable but not necessarily quantifiable

3. Specificity and Selectivity Evaluation:

- Interference testing: Spike potential interferents (hemolyzed, lipemic, icteric matrices) at clinically relevant concentrations

- Cross-reactivity: Test structurally similar compounds or metabolites

- Recovery: Assess by comparing measured versus expected values of spiked samples

4. Stability Studies:

- Conduct bench-top, freeze-thaw, and long-term stability testing under anticipated storage conditions

- Establish stability in matrix and after sample processing

5. Reference Range Establishment:

- Analyze samples from appropriate healthy and disease populations

- Use statistical methods to establish reference intervals (typically 2.5th to 97.5th percentiles) [23]

This analytical validation protocol must be tailored to the biomarker's COU. For example, a biomarker used for patient stratification requires more rigorous precision around clinical decision points compared to one used for monitoring large pharmacodynamic effects [21].
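The precision and LLOQ criteria in steps 1 and 2 can be sketched in a few lines. This is a minimal illustration rather than a validated procedure: the function names are hypothetical, the replicate values are invented, and the default limits simply mirror the ≤20% CV and 80-120% accuracy figures quoted above, which should be adjusted to the COU.

```python
from statistics import mean, stdev


def percent_cv(values):
    """Coefficient of variation (%) = 100 * sample SD / mean."""
    return 100.0 * stdev(values) / mean(values)


def percent_accuracy(values, nominal):
    """Mean measured concentration expressed as a percentage of nominal."""
    return 100.0 * mean(values) / nominal


def find_lloq(replicates_by_nominal, max_cv=20.0, accuracy_range=(80.0, 120.0)):
    """Return the lowest nominal concentration that passes both criteria.

    Levels are checked from highest to lowest and the search stops at the
    first failing level, so every level at or above the returned value passes.
    Returns None if even the highest level fails.
    """
    lloq = None
    for nominal in sorted(replicates_by_nominal, reverse=True):
        values = replicates_by_nominal[nominal]
        cv_ok = percent_cv(values) <= max_cv
        acc = percent_accuracy(values, nominal)
        acc_ok = accuracy_range[0] <= acc <= accuracy_range[1]
        if not (cv_ok and acc_ok):
            break
        lloq = nominal
    return lloq


# Hypothetical serial-dilution data: nominal concentration -> 5 replicates.
dilution_series = {
    0.5: [0.30, 0.62, 0.45, 0.71, 0.38],
    1.0: [0.92, 1.10, 0.85, 1.05, 0.98],
    2.0: [1.95, 2.10, 1.88, 2.05, 2.02],
    4.0: [3.90, 4.10, 4.05, 3.95, 4.00],
}

for nominal in sorted(dilution_series):
    reps = dilution_series[nominal]
    print(f"{nominal}: CV {percent_cv(reps):.1f}%, accuracy {percent_accuracy(reps, nominal):.1f}%")
print("LLOQ:", find_lloq(dilution_series))
```

The same `percent_cv` helper applies to within-run and between-run precision; only the grouping of replicates changes.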

Clinical Validation Methodologies

Clinical validation establishes the relationship between the biomarker and clinical endpoints. Key methodological approaches include:

1. Retrospective Sample Analysis:

- Utilize archived samples from previous clinical trials or biobanks

- Establish correlation between biomarker levels and clinical outcomes

- Assess sensitivity, specificity, and predictive values

2. Prospective Cohort Studies:

- Enroll appropriate patient populations followed over time

- Collect biomarker measurements at predefined intervals

- Document clinical outcomes of interest

- Use statistical models to establish predictive value

3. Blinded Comparison Studies:

- Compare biomarker performance against gold standard measures

- Calculate concordance statistics (kappa statistics for categorical biomarkers)

- Determine area under the ROC curve for continuous biomarkers [22]

4. Reliability and Reproducibility Assessment:

- Test-retest studies to establish intraclass correlation coefficients (ICC)

- Multi-site reproducibility studies for central laboratory tests

- Inter-rater reliability assessments for subjective biomarkers [22]

The sample size requirements for clinical validation studies are often substantial, particularly for reliability studies and evaluation of biomarkers as prodromes, and must be determined with those specific objectives in mind [22].
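Two of the statistics mentioned in the blinded comparison step, Cohen's kappa and the area under the ROC curve, can be computed directly from paired results. The sketch below uses only the standard library; the function names and all data are hypothetical, and the AUC is computed with the rank-based (Mann-Whitney) formulation rather than by tracing the curve.

```python
def cohens_kappa(calls_a, calls_b):
    """Cohen's kappa for two paired sequences of categorical calls.

    Undefined (division by zero) if chance agreement equals 1, e.g. when
    both sequences contain a single identical category.
    """
    n = len(calls_a)
    categories = set(calls_a) | set(calls_b)
    observed = sum(a == b for a, b in zip(calls_a, calls_b)) / n
    expected = sum(
        (calls_a.count(c) / n) * (calls_b.count(c) / n) for c in categories
    )
    return (observed - expected) / (1.0 - expected)


def roc_auc(scores_pos, scores_neg):
    """AUC as the probability that a random positive case scores higher than
    a random negative case, counting ties as one half."""
    wins = 0.0
    for p in scores_pos:
        for q in scores_neg:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(scores_pos) * len(scores_neg))


# Hypothetical categorical calls (1 = positive) from the candidate assay
# and from the gold standard on the same ten samples.
assay_calls = [1, 1, 1, 0, 0, 0, 1, 0, 1, 0]
reference_calls = [1, 1, 0, 0, 0, 0, 1, 0, 1, 1]
print(f"kappa = {cohens_kappa(assay_calls, reference_calls):.2f}")

# Hypothetical continuous biomarker values in diseased and non-diseased groups.
diseased = [0.9, 0.8, 0.7, 0.4]
non_diseased = [0.6, 0.3, 0.2, 0.1]
print(f"AUC = {roc_auc(diseased, non_diseased):.4f}")
```

The pairwise AUC loop is quadratic in sample size, which is acceptable for a sketch; a production analysis would normally rely on a validated statistical package and report confidence intervals.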

Research Reagent Solutions for Biomarker Validation

Table: Essential Research Reagents and Materials for Biomarker Validation Studies

| Reagent/Material | Function in Validation | Key Considerations |
|---|---|---|
| Reference Standards | Establish calibration curves and quantify analyte levels | Purity, stability, commutability with native forms [21] |
| Quality Control Materials | Monitor assay performance across runs | Three levels (low, medium, high) covering reportable range [23] |
| Biological Matrices | Diluent for standards and validation samples | Match to study samples; assess matrix effects [21] |
| Assay Kits/Platforms | Biomarker measurement systems | Analytical performance characteristics, throughput, ease of use [27] |
| Antibodies/Binding Reagents | Detection and capture elements for immunoassays | Specificity, affinity, lot-to-lot consistency [23] |
| Nucleic Acid Probes/Primers | Detection for molecular biomarkers | Specificity, optimization, validation [23] |
| Cell Lines/Tissue Samples | Controls for IHC and cellular assays | Well-characterized, appropriate positive/negative controls [23] |
| Data Analysis Software | Statistical analysis and result interpretation | Validation of algorithms, especially for machine learning approaches [26] |

Visualizing the Relationship Between COU and Validation Requirements

The following diagram illustrates how Context of Use drives the stringency of biomarker validation requirements throughout the development process:

Diagram: Relationship Between COU and Validation Requirements

The Context of Use serves as the cornerstone of efficient and effective biomarker development, providing the critical framework that aligns validation efforts with regulatory expectations and clinical applications. The fit-for-purpose validation paradigm recognizes that different biomarker applications demand distinct levels of evidence, with the stringency of requirements driven by the consequences of decision errors and the regulatory impact of the proposed use. As the field evolves with emerging technologies including digital health technologies and novel biomarker modalities, the disciplined application of COU principles becomes increasingly vital for successful biomarker implementation across drug development programs. By precisely defining the Context of Use early in development and maintaining a science-based, fit-for-purpose approach to validation, researchers can navigate the complex regulatory landscape while advancing biomarkers that meaningfully contribute to drug development and patient care.

Biomarkers have transformed from useful biological indicators into essential decision-making tools that accelerate pharmaceutical innovation and enhance regulatory decision-making. The FDA defines a biomarker as "a defined characteristic that is measured as an indicator of normal biological processes, pathogenic processes, or responses to an exposure or intervention", and such measures provide a critical window into the body's inner workings [28]. In both drug development and regulatory submissions, biomarkers serve as quantifiable proxies that bridge the gap between laboratory discoveries and patient outcomes, enabling more efficient and targeted therapeutic development [29].

The strategic application of biomarkers addresses fundamental challenges in the drug development pipeline, including high failure rates, prolonged timelines, and escalating costs. By providing early indicators of therapeutic efficacy and safety, biomarkers enable better candidate selection, dose optimization, and patient stratification [30] [10]. In regulatory contexts, appropriately validated biomarkers can support various claims, from informing dose selection to serving as surrogate endpoints that may form the basis for drug approval, particularly in diseases with significant unmet medical needs [2] [5].

Biomarker Categories and Their Applications in Drug Development

Classification of Biomarkers by Function

The FDA-NIH BEST (Biomarkers, EndpointS, and other Tools) Resource provides a standardized framework for categorizing biomarkers based on their specific application in drug development [2] [28]. Understanding these categories is essential for their appropriate implementation throughout the drug development continuum.

Table 1: Biomarker Categories and Their Applications in Drug Development

| Biomarker Category | Primary Function | Representative Examples |
|---|---|---|
| Diagnostic | Identify or confirm presence of a disease or subtype | Hemoglobin A1c for diabetes mellitus [2] |
| Monitoring | Track disease status or response to treatment over time | HCV RNA viral load for Hepatitis C infection [2] |
| Pharmacodynamic/Response | Indicate biological response to therapeutic intervention | Reduced glucose levels after antidiabetic therapy [29] |
| Predictive | Identify patients more likely to respond to a specific treatment | EGFR mutation status in non-small cell lung cancer [2] |
| Prognostic | Identify probability of a clinical event, recurrence, or progression | Total kidney volume for autosomal dominant polycystic kidney disease [2] |
| Safety | Monitor potential adverse effects or toxicity | Serum creatinine for acute kidney injury [2] |
| Susceptibility/Risk | Assess potential for developing a medical condition | BRCA1/2 mutations for breast and ovarian cancer risk [2] |

The Importance of Context of Use

A fundamental principle in biomarker application is establishing the Context of Use (COU), which the FDA defines as "a concise description of the biomarker's specified use in drug development" [2]. The COU precisely specifies the circumstances under which the biomarker data will be applied for regulatory decision-making, including the population, intervention, and purpose. The same biomarker may serve different functions across various COUs, necessitating distinct validation approaches for each application [2]. For instance, Hemoglobin A1c serves as a diagnostic biomarker for identifying patients with diabetes and as a monitoring biomarker for tracking long-term glycemic control [2].

Biomarkers as Accelerators in the Drug Development Pipeline

Enhancing Efficiency Across Development Stages

Biomarkers provide critical decision-making support throughout the drug development continuum, from early discovery to late-stage trials. In preclinical stages, biomarkers help assess drug metabolism, identify potential toxicities, and predict efficacy in disease models, enabling more informed candidate selection before human testing [10]. During clinical development, biomarkers facilitate patient stratification, dose optimization, and early efficacy and safety assessments, potentially reducing trial durations and costs [30] [29].

The growing impact of biomarkers is evidenced by their increasing incorporation into regulatory submissions. Analysis of New Molecular Entity (NME) applications for neurological diseases between 2008 and 2024 revealed that 37 of 67 submissions included biomarker data, with 29 incorporating biomarkers into their labeling [30]. This trend underscores the expanding role of biomarkers in demonstrating therapeutic value to regulatory agencies.

Biomarkers as Surrogate Endpoints

Perhaps the most significant application of biomarkers in accelerating drug development is their use as surrogate endpoints: biomarkers used in trials in place of a direct measure of clinical benefit. A surrogate that is reasonably likely to predict clinical benefit can support regulatory approval through the accelerated approval pathway [30] [5]. This approach has been particularly valuable in diseases with slow progression or significant unmet need, where traditional clinical endpoints would require prolonged follow-up.

Notable examples include:

- Dystrophin protein production for Duchenne Muscular Dystrophy therapies (eteplirsen, golodirsen, casimersen, viltolarsen) [30]

- Reduction in plasma neurofilament light chain (NfL) for tofersen in SOD1-amyotrophic lateral sclerosis [30]

- Reduction of brain amyloid beta (Aβ) plaque measured by PET imaging for lecanemab in Alzheimer's Disease [30]

Surrogate endpoints can significantly shorten development timelines by providing earlier indicators of treatment effect. However, their interpretation requires caution, as surrogates may not always reliably reflect true clinical benefit without proper validation [5].

Regulatory Frameworks and Biomarker Validation Standards

Pathways to Regulatory Acceptance

Regulatory agencies provide multiple pathways for biomarker acceptance, each with distinct advantages and considerations for drug developers.

Table 2: Comparison of Regulatory Pathways for Biomarker Acceptance

| Pathway | Key Characteristics | Best Suited For |
|---|---|---|
| IND Integration | Biomarker validated within specific drug development program; reviewed as part of IND/NDA/BLA | Well-established biomarkers with data supporting use in a specific drug program [2] |
| Biomarker Qualification Program (BQP) | Structured, collaborative process for qualification for specific Context of Use; once qualified, can be used across multiple programs without re-review [2] [31] | Biomarkers with broad applicability across multiple drug development programs [2] |
| Early Engagement (CPIM/pre-IND) | Early discussions with FDA to align on biomarker validation strategy and evidence needs | Novel biomarkers or new applications of existing biomarkers [2] |

The Biomarker Qualification Program (BQP), formally established under the 21st Century Cures Act of 2016, provides a transparent framework for qualifying biomarkers for specific Contexts of Use [31] [24]. This program employs a three-stage process: Letter of Intent, Qualification Plan, and Full Qualification Package [24]. While this pathway offers the advantage of broader applicability beyond a single drug program, analyses indicate challenges with its efficiency, with only eight biomarkers fully qualified as of 2025 and review timelines frequently exceeding targets [31] [24].

The Validation Process: Fit-for-Purpose Approach

Biomarker validation follows a fit-for-purpose principle, where the level of evidence required is determined by the specific Context of Use [2]. This approach encompasses two fundamental components:

Analytical Validation

Analytical validation establishes that the biomarker measurement method is reliable and reproducible for its intended use [2] [28]. This process assesses key performance characteristics including:

- Accuracy (closeness to true value)

- Precision (reproducibility across measurements)

- Analytical sensitivity and specificity

- Reportable range and reference ranges [2]

For bioanalytical methods, regulatory guidelines from FDA and EMA require demonstrating that assays consistently produce accurate and reproducible results across different laboratories and conditions [29]. This often involves following established protocols from organizations such as the Clinical and Laboratory Standards Institute (CLSI) [28].

Clinical Validation

Clinical validation demonstrates that the biomarker accurately identifies or predicts the clinical outcome of interest in the intended population [2]. This process varies by biomarker category:

- Diagnostic biomarkers require proof of accurate disease identification across diverse populations [2]

- Prognostic biomarkers need robust clinical data showing consistent correlation with disease outcomes [2]

- Predictive biomarkers prioritize sensitivity, specificity, and mechanistic link to treatment response [2]

- Safety biomarkers must demonstrate consistent indication of potential adverse effects across populations [2]

The evidence required for clinical validation depends on the intended use, with more substantial evidence needed for biomarkers supporting primary efficacy endpoints or serving as surrogate endpoints [2].

Diagram 1: BQP regulatory pathway with timelines. Source: [31] [24]

Experimental Protocols and Methodologies for Biomarker Research

Biomarker Discovery and Analytical Workflows

The biomarker analysis pipeline involves standardized methodologies to ensure reliable and reproducible results:

Sample Collection and Pre-analytical Processing: Proper collection, stabilization, and storage of biological samples (plasma, serum, tissue, etc.) is crucial to prevent degradation or signal loss [29]. Standardized protocols must be established for each sample type.

Detection Platforms and Methodologies:

- Immunoassays (ELISA, multiplex arrays): For protein biomarker quantification [29]

- Molecular techniques (qPCR, sequencing): For RNA and DNA biomarkers [29]

- Liquid Chromatography-Mass Spectrometry (LC-MS): For proteomic and metabolomic profiling [29]

- Advanced imaging (PET, MRI): For radiographic biomarkers [30] [10]

- Liquid biopsy platforms: For circulating tumor DNA and other blood-based biomarkers [10]

Data Processing and Quantification: Implementation of robust computational tools for signal normalization, calibration, and quality control, particularly for high-throughput platforms [29].
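As one illustration of the calibration step, the sketch below fits a straight-line calibration curve to reference standards and back-calculates an unknown concentration from its signal. The linear model is an assumption made for brevity; many ligand-binding assays use a four- or five-parameter logistic fit instead. Function names and all values are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def back_calculate(signal, slope, intercept):
    """Convert an instrument signal to concentration using the fitted line."""
    return (signal - intercept) / slope


# Hypothetical reference standards: nominal concentration and measured signal.
standard_conc = [0.0, 1.0, 2.0, 4.0, 8.0]
standard_signal = [0.05, 0.30, 0.55, 1.05, 2.05]

slope, intercept = fit_line(standard_conc, standard_signal)

# Back-calculate a hypothetical quality control sample from its signal.
qc_conc = back_calculate(0.80, slope, intercept)
print(f"slope={slope:.3f}, intercept={intercept:.3f}, QC concentration={qc_conc:.2f}")
```

Back-calculating the standards themselves and comparing them with their nominal values is a common way to check that the chosen curve model fits across the reportable range.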

Validation Methodologies

Analytical Validation Experiments:

- Precision Studies: Repeatability (within-run) and intermediate precision (between-run, between-day, between-operator) assessments

- Accuracy and Recovery: Spike-and-recovery experiments using known biomarker concentrations

- Linearity and Range: Determination of the quantitative measurement range

- Specificity/Selectivity: Assessment of interference from similar molecules or matrix components

- Stability Studies: Evaluation of biomarker stability under various storage conditions [29]
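The spike-and-recovery experiment listed above reduces to a simple calculation, sketched here. The function names are hypothetical, the concentrations are invented, and the 80-120% acceptance window is an assumed placeholder rather than a limit stated in the source; the appropriate window should follow from the COU.

```python
def percent_recovery(measured_spiked, measured_unspiked, spike_added):
    """Recovery (%) of a known amount of analyte spiked into the sample matrix."""
    return 100.0 * (measured_spiked - measured_unspiked) / spike_added


def recovery_acceptable(recovery, low=80.0, high=120.0):
    """Check recovery against an acceptance window (assumed limits)."""
    return low <= recovery <= high


# Hypothetical experiment: endogenous level 2.0 units, 5.0 units spiked in,
# 6.8 units measured after spiking.
recovery = percent_recovery(measured_spiked=6.8, measured_unspiked=2.0, spike_added=5.0)
print(f"recovery = {recovery:.1f}%, acceptable = {recovery_acceptable(recovery)}")
```

Subtracting the unspiked result matters for biomarkers because, unlike most drug analytes, the endogenous analyte is usually already present in the matrix.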

Clinical Validation Study Designs:

- Retrospective analyses of archived samples from previous clinical trials

- Prospective observational studies in well-characterized patient cohorts

- Blinded validation studies comparing biomarker performance to reference standards

- Longitudinal studies to establish relationship between biomarker changes and clinical outcomes

Table 3: Essential Research Reagent Solutions for Biomarker Validation

| Research Tool | Primary Function | Key Applications |
|---|---|---|
| Patient-Derived Organoids | 3D culture systems replicating human tissue biology | Study patient-specific drug responses, model complex disease mechanisms [10] |
| Patient-Derived Xenografts (PDX) | Tumor models from patient tissues in immunocompromised mice | Validate cancer biomarkers, assess drug resistance mechanisms [10] |
| Multiplex Immunoassay Platforms | Simultaneous measurement of multiple protein biomarkers | Comprehensive proteomic profiling, biomarker signature identification [29] |
| LC-MS/MS Systems | High-sensitivity quantification of proteins and metabolites | Targeted biomarker quantification, proteomic discovery [29] |
| Digital PCR Systems | Absolute quantification of nucleic acid biomarkers | Liquid biopsy applications, minimal residual disease detection [10] |
| Single-Cell RNA Sequencing | Resolution of cellular heterogeneity within samples | Identification of cell subtype-specific biomarker signatures [10] |

Diagram 2: Biomarker validation workflow with fit-for-purpose approach. Source: [2] [29]

Comparative Analysis of Biomarker Utilization Across Therapeutic Areas

Trends in Neurology Drug Development

The application of biomarkers in neurological drug development demonstrates their growing importance in addressing unique challenges in this therapeutic area. Analysis of FDA approvals for neurological diseases from 2008 to 2024 reveals three primary roles of biomarkers in regulatory decision-making:

- Surrogate Endpoints (9% of submissions): Supporting accelerated approval based on biomarkers reasonably likely to predict clinical benefit [30]

- Confirmatory Evidence (24% of submissions): Providing mechanistic support for efficacy claims [30]

- Dose Selection (67% of submissions): Informing optimal dosing strategies based on pharmacodynamic responses [30]

This analysis demonstrates that biomarkers most frequently contribute to dose selection, while also playing critical roles in establishing efficacy. The approval of therapies for neurological conditions such as Alzheimer's Disease (amyloid beta reduction), Amyotrophic Lateral Sclerosis (neurofilament light chain), and Duchenne Muscular Dystrophy (dystrophin production) highlights how biomarkers enable drug development in diseases with progressive, irreversible damage where traditional endpoints would require extended follow-up [30].

Emerging Biomarker Technologies

Innovative technologies are expanding the potential applications of biomarkers in drug development:

Digital Biomarkers: Data from wearable sensors and devices enable continuous, real-world monitoring of physiological and behavioral parameters [26] [29]. The qualification of stride velocity 95th centile as a primary endpoint for ambulatory Duchenne Muscular Dystrophy by EMA demonstrates the regulatory utility of digital biomarkers [26].

Liquid Biopsy Platforms: Circulating tumor DNA (ctDNA) and other blood-based biomarkers enable non-invasive disease monitoring and assessment of treatment response [10].

Artificial Intelligence and Machine Learning: AI-driven analysis of multi-omics datasets identifies novel biomarker signatures and enhances predictive accuracy [10] [29].

Multi-Omics Integration: Combined analysis of genomic, transcriptomic, proteomic, and metabolomic data provides comprehensive insights into disease mechanisms and treatment responses [10] [29].

Biomarkers have evolved from supportive tools to fundamental components of efficient drug development and regulatory strategy. Their strategic implementation requires careful consideration of context of use, fit-for-purpose validation, and appropriate regulatory pathways. The growing regulatory acceptance of biomarkers across multiple roles – from dose selection to surrogate endpoints – demonstrates their value in accelerating the development of safe and effective therapies.

As biomarker science continues to advance, emerging technologies including digital biomarkers, liquid biopsy, and AI-driven analytics promise to further transform drug development. However, realizing the full potential of these innovations will require addressing ongoing challenges in validation standards, regulatory alignment, and evidence generation. Through strategic application of well-validated biomarkers across the development continuum, researchers and drug developers can enhance decision-making, reduce late-stage failures, and ultimately deliver better therapies to patients more efficiently.

The Validation Blueprint: Fit-for-Purpose Strategies and Regulatory Pathways

In the landscape of modern drug development, the "fit-for-purpose" validation paradigm represents a fundamental shift from one-size-fits-all approaches to a more nuanced, context-driven framework. This methodology recognizes that the level of evidence required to support a biomarker's use must be tailored to its specific role in drug development and regulatory decision-making [2]. Fit-for-purpose validation ensures that the evaluation of a biomarker is proportional to its intended application, with more consequential uses requiring more rigorous evidence [2] [32]. This principle underpins regulatory acceptance across global health authorities and has become increasingly important with the emergence of novel biomarker types, including digital biomarkers derived from wearable devices and sensors [33] [26].

The framework is built upon the foundational concept of "Context of Use" (COU), defined by the FDA as a concise description of the biomarker's specified application in drug development [2]. The COU explicitly states how the biomarker will be implemented, in which patient populations, and for what specific decision-making purpose [2] [26]. This clarity is essential for determining the appropriate validation strategy, as the same biomarker may require substantially different evidence depending on whether it is used for early research decisions or to support regulatory endpoints for drug approval [2]. The fit-for-purpose approach thus provides a strategic pathway for biomarker development that efficiently allocates resources while ensuring scientific rigor appropriate to the decision-making context.

Biomarker Categories and Contexts of Use

Biomarker Classification System

Biomarkers are categorized based on their specific applications in drug development and clinical practice, with each category serving distinct purposes and requiring specialized validation approaches. The BEST (Biomarkers, EndpointS, and other Tools) Resource, developed through an FDA-NIH collaborative effort, provides a standardized glossary for biomarker classification [2]. This systematic categorization is essential for establishing clear Context of Use statements and ensuring appropriate validation strategies for each biomarker type. Understanding these categories allows researchers to align validation requirements with the biomarker's intended function, following the fit-for-purpose principle that different applications demand distinct evidence characteristics [2].

Table 1: Biomarker Categories and Their Applications

| Biomarker Category | Primary Function | Representative Examples | Key Validation Focus |
|---|---|---|---|
| Susceptibility/Risk | Identifies likelihood of developing disease | BRCA1/2 mutations for breast/ovarian cancer [2] | Epidemiological evidence, biological plausibility [2] |
| Diagnostic | Detects or confirms disease presence | Hemoglobin A1c for diabetes [2] | Sensitivity/specificity, accurate disease identification [2] |
| Monitoring | Tracks disease status or treatment response | HCV RNA viral load for Hepatitis C [2] | Ability to reflect disease changes over time [2] |
| Prognostic | Predicts disease course or outcome | Total kidney volume for polycystic kidney disease [2] | Consistent correlation with disease outcomes [2] |
| Predictive | Identifies responders to specific treatments | EGFR mutation status in lung cancer [2] | Sensitivity/specificity, mechanistic link to treatment response [2] |
| Pharmacodynamic/Response | Measures biological response to treatment | HIV RNA viral load in HIV treatment [2] | Evidence of direct relationship to drug action [2] |
| Safety | Monitors potential adverse drug effects | Serum creatinine for acute kidney injury [2] | Consistent indication of adverse effects across populations [2] |

Context of Use Determination

The Context of Use (COU) statement is the cornerstone of fit-for-purpose validation, providing a comprehensive description of how a biomarker will be implemented within a specific drug development program [2]. A well-defined COU includes the biomarker category, intended application, patient population, analytical methodology, and the specific decision the biomarker will support [2] [26]. For example, a biomarker used for early internal decision-making about compound selection requires substantially less validation than one used as a primary endpoint in a pivotal Phase 3 trial or for patient stratification in registrational studies [2].

Establishing the COU begins with identifying a significant drug development challenge that the biomarker can address [2]. Developers must then determine whether the biomarker provides meaningful improvement over existing assessment methods and what specific studies or data are needed to validate it for the proposed context [2]. This process requires careful consideration of practical implementation factors, including measurement feasibility within a drug development program, assessment frequency, and whether the biomarker will eventually be used in routine clinical care if the drug is approved [2]. The COU ultimately guides the entire validation strategy, ensuring that the generated evidence matches the regulatory and scientific requirements for the biomarker's specific application.
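
The COU elements listed above can be captured in a small structured record so that gaps are visible before a validation plan is drafted. The following is a minimal sketch only: the `ContextOfUse` class, its field names, and the example values are hypothetical illustrations, not an FDA template or submission format.

```python
from dataclasses import dataclass, fields
from enum import Enum
from typing import List


class BiomarkerCategory(Enum):
    """The seven biomarker categories listed in Table 1."""
    SUSCEPTIBILITY_RISK = "susceptibility/risk"
    DIAGNOSTIC = "diagnostic"
    MONITORING = "monitoring"
    PROGNOSTIC = "prognostic"
    PREDICTIVE = "predictive"
    PHARMACODYNAMIC_RESPONSE = "pharmacodynamic/response"
    SAFETY = "safety"


@dataclass(frozen=True)
class ContextOfUse:
    """Hypothetical container for the COU elements named in the text."""
    category: BiomarkerCategory
    intended_application: str
    patient_population: str
    analytical_methodology: str
    decision_supported: str

    def missing_elements(self) -> List[str]:
        """Return the names of free-text elements that were left blank."""
        return [
            f.name
            for f in fields(self)
            if isinstance(getattr(self, f.name), str)
            and not getattr(self, f.name).strip()
        ]


# Illustrative values only; not taken from any real submission.
cou = ContextOfUse(
    category=BiomarkerCategory.PREDICTIVE,
    intended_application="Enrich enrollment with likely responders",
    patient_population="Adults with non-small cell lung cancer",
    analytical_methodology="",  # deliberately left blank
    decision_supported="Patient selection in a registrational study",
)
print(cou.missing_elements())  # ['analytical_methodology']
```

A record like this does not replace the narrative COU statement; it only makes it easier to check that each element has been addressed.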

Methodological Framework for Fit-for-Purpose Validation

Analytical Validation Approaches

Analytical validation forms the foundation of biomarker development, assessing the performance characteristics of the measurement assay itself [2]. The specific parameters evaluated depend on the detection method and the analyte of interest, but typically include accuracy, precision, analytical sensitivity, analytical specificity, reportable range, and reference range [2] [34]. For sensor-based digital health technologies (sDHTs), a hierarchical framework has been developed to guide the selection of appropriate reference measures for analytical validation [34]. This framework prioritizes reference methods based on their scientific rigor, with defining reference measures (those that establish the medical definition of a physiological process) representing the highest standard [34].

Table 2: Hierarchical Framework for Reference Measures in Analytical Validation

| Reference Category | Definition | Key Attributes | Examples |
|---|---|---|---|
| Defining Reference | Sets medical definition for a physiological process or behavioral construct [34] | Objective data capture, ability to retain source data [34] | Polysomnography for sleep staging [34] |
| Principal Reference | Directly and objectively measures the physiologic process or construct of interest [34] | Objective data capture, not prone to observer bias [34] | Capnography for respiratory rate [34] |
| Manual Reference | Relies on measurement by trained healthcare professional [34] | Can be seen, heard, or felt; potential for standardization [34] | Auscultation for respiratory rate [34] |
| Reported Reference | Based on patient or observer reports [34] | Subjective identification or quantification of measures [34] | Sleep diaries for time in bed [34] |
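
As a rough illustration of how the hierarchy in Table 2 might be applied, the sketch below picks the highest-ranked reference measure from those available to a study. The numeric ranks follow the table's row order and are an assumption made for this example, as are the `ReferenceCategory` and `select_reference` names.

```python
from enum import IntEnum
from typing import Dict, Optional


class ReferenceCategory(IntEnum):
    """Reference categories from Table 2; a lower value means a higher rank.

    The numeric ranks are an assumption based on the table's row order.
    """
    DEFINING = 1
    PRINCIPAL = 2
    MANUAL = 3
    REPORTED = 4


def select_reference(available: Dict[str, ReferenceCategory]) -> Optional[str]:
    """Return the name of the highest-ranked available reference measure."""
    if not available:
        return None
    return min(available, key=lambda name: available[name])


# Candidate references for respiratory rate, using the examples in Table 2.
candidates = {
    "auscultation": ReferenceCategory.MANUAL,
    "capnography": ReferenceCategory.PRINCIPAL,
}
print(select_reference(candidates))  # capnography
```

In practice the choice also depends on feasibility and participant burden, which this sketch does not model.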

The validation requirements vary significantly depending on the biomarker's context of use. For instance, a pharmacodynamic biomarker used for internal dose selection decisions may require less extensive analytical validation than a diagnostic biomarker used for patient stratification or a surrogate endpoint supporting regulatory approval [2]. The stringency of analytical validation should reflect the consequences of potential measurement errors, with particular attention to the risks associated with false positive or false negative results in the specific context of use [2].
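
To make the idea of context-dependent stringency concrete, the sketch below computes two common assay performance summaries, percent bias (accuracy) and percent coefficient of variation (precision), and compares them against limits that tighten for more consequential uses. The limits in `ACCEPTANCE_LIMITS` and the replicate values are hypothetical placeholders chosen for illustration; they are not regulatory thresholds.

```python
from statistics import mean, stdev
from typing import Sequence

# Hypothetical acceptance limits (percent) keyed by context of use.
# Placeholders for illustration only, not regulatory criteria.
ACCEPTANCE_LIMITS = {
    "internal_decision": 30.0,
    "regulatory_endpoint": 15.0,
}


def percent_bias(measured: Sequence[float], nominal: float) -> float:
    """Accuracy: deviation of the mean from the nominal value, in percent."""
    return 100.0 * (mean(measured) - nominal) / nominal


def percent_cv(measured: Sequence[float]) -> float:
    """Precision: sample standard deviation relative to the mean, in percent."""
    return 100.0 * stdev(measured) / mean(measured)


def meets_limits(measured: Sequence[float], nominal: float, context: str) -> bool:
    """Check absolute bias and CV against the limit for the given context."""
    limit = ACCEPTANCE_LIMITS[context]
    return abs(percent_bias(measured, nominal)) <= limit and percent_cv(measured) <= limit


# Made-up replicate measurements of a sample with nominal concentration 10.0.
replicates = [7.0, 10.0, 13.0, 12.0]
print(round(percent_bias(replicates, 10.0), 1))               # 5.0
print(round(percent_cv(replicates), 1))                       # 25.2
print(meets_limits(replicates, 10.0, "internal_decision"))    # True
print(meets_limits(replicates, 10.0, "regulatory_endpoint"))  # False
```

The same data pass under the looser limit and fail under the tighter one, which is the practical meaning of scaling analytical rigor to the consequences of measurement error.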

Clinical Validation Requirements

Clinical validation demonstrates that a biomarker accurately identifies or predicts the clinical outcome of interest for its specific context of use [2]. This process establishes the relationship between the biomarker measurement and the relevant biological process, pathological state, or response to therapeutic intervention [2]. Clinical validation typically involves assessing sensitivity and specificity, determining positive and negative predictive values, and evaluating the biomarker's performance in the intended population [2]. The evidentiary requirements vary substantially across biomarker categories, with predictive biomarkers emphasizing mechanistic links to treatment response, while prognostic biomarkers require robust clinical data showing consistent correlation with disease outcomes [2].
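
The four performance measures named above follow directly from a 2x2 table of biomarker result against true clinical status. The sketch below uses the standard definitions; the `ConfusionCounts` class and the counts themselves are made up for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class ConfusionCounts:
    """Counts from a 2x2 table of biomarker result vs. true clinical status."""
    tp: int  # biomarker positive, condition present
    fp: int  # biomarker positive, condition absent
    fn: int  # biomarker negative, condition present
    tn: int  # biomarker negative, condition absent


def _ratio(numerator: int, denominator: int) -> Optional[float]:
    """Return numerator/denominator, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def diagnostic_metrics(c: ConfusionCounts) -> Dict[str, Optional[float]]:
    """Compute sensitivity, specificity, PPV, and NPV."""
    return {
        "sensitivity": _ratio(c.tp, c.tp + c.fn),
        "specificity": _ratio(c.tn, c.tn + c.fp),
        "ppv": _ratio(c.tp, c.tp + c.fp),
        "npv": _ratio(c.tn, c.tn + c.fn),
    }


# Made-up counts: 100 participants with the condition, 100 without.
metrics = diagnostic_metrics(ConfusionCounts(tp=80, fp=10, fn=20, tn=90))
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Unlike sensitivity and specificity, the predictive values depend on how common the condition is in the sample, which is one reason performance should be evaluated in the intended population.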

For novel digital biomarkers, clinical validation must also establish the clinical relevance of the measured parameter to the concept of interest [26]. This is particularly important when digital biomarkers capture novel aspects of disease not previously measured through conventional approaches. The validation process should demonstrate that the digital biomarker provides meaningful information about the patient's health status or treatment response that is relevant to both clinicians and patients [26]. This often requires multiple prospective studies to establish validity, reliability, and clinical utility [26].

Diagram 1: Fit-for-Purpose Validation Framework. This diagram illustrates how the Context of Use drives the analytical validation, clinical validation, and regulatory strategy for biomarker development.

Regulatory Pathways and Considerations

Framework for Regulatory Acceptance

Regulatory acceptance of biomarkers follows structured pathways that emphasize early engagement and evidence-based qualification. The U.S. Food and Drug Administration (FDA) provides several mechanisms for biomarker qualification, including the Biomarker Qualification Program (BQP), which offers a formal framework for regulatory acceptance of biomarkers for specific contexts of use across multiple drug development programs [2]. The BQP involves three distinct stages: submission of a Letter of Intent, development of a detailed Qualification Plan, and preparation of a Full Qualification Package with comprehensive supporting evidence [2]. This pathway, while potentially lengthy, provides broad regulatory acceptance that any drug developer can leverage for the qualified context of use [2].
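
For project tracking, the three BQP stages described above can be modeled as an ordered sequence. This is a hypothetical planning aid only: the `BQPStage` and `next_stage` names are invented here and do not correspond to any FDA system or interface.

```python
from enum import Enum
from typing import Optional


class BQPStage(Enum):
    """The three BQP submission stages, in the order described in the text."""
    LETTER_OF_INTENT = 1
    QUALIFICATION_PLAN = 2
    FULL_QUALIFICATION_PACKAGE = 3


def next_stage(current: BQPStage) -> Optional[BQPStage]:
    """Return the stage that follows `current`, or None after the final stage."""
    stages = list(BQPStage)  # Enum members iterate in definition order
    position = stages.index(current)
    return stages[position + 1] if position + 1 < len(stages) else None


print(next_stage(BQPStage.LETTER_OF_INTENT).name)       # QUALIFICATION_PLAN
print(next_stage(BQPStage.FULL_QUALIFICATION_PACKAGE))  # None
```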

For biomarkers intended for use within specific drug development programs, engagement through the Investigational New Drug (IND) application process often represents a more efficient pathway [2]. This approach allows developers to pursue clinical validation and regulatory acceptance within the context of a particular drug's development timeline [2]. The FDA also encourages early engagement through mechanisms such as Critical Path Innovation Meetings (CPIM) and pre-IND consultations to discuss biomarker validation plans before significant resources are invested [35]. For digital health technologies, the FDA has established additional frameworks, including the DHT Steering Committee and the Digital Health Center of Excellence, to provide specialized expertise and guidance on the use of digital biomarkers in drug development [26].

Global Regulatory Landscape

The regulatory landscape for biomarker acceptance continues to evolve globally, with increasing harmonization through initiatives such as the International Council for Harmonisation (ICH) [32] [33]. The recent ICH E6(R3) guideline on Good Clinical Practice emphasizes flexibility, risk-based quality management, and integration of digital technologies, which aligns closely with the capabilities of digital biomarkers and fit-for-purpose validation approaches [33]. The European Medicines Agency (EMA) has demonstrated openness to innovative biomarker endpoints through landmark qualifications such as the stride velocity 95th centile as a primary endpoint for ambulatory Duchenne Muscular Dystrophy studies [26].

Regulatory agencies generally adopt a risk-based approach to biomarker evaluation, with the level of evidence required proportional to the biomarker's role in regulatory decision-making [2] [26]. Biomarkers used as primary endpoints in pivotal trials or to support key label claims face the most stringent requirements, while those used for early internal decision-making or exploratory analyses have lower evidence thresholds [2]. Health authorities consistently emphasize that the validation burden should reflect the consequences of potential false positive or false negative results in the specific context of use [2]. This risk-based approach ensures patient safety while facilitating efficient drug development.

Comparative Experimental Data and Validation Protocols

Validation Requirements Across Biomarker Types

The experimental protocols and evidence requirements for biomarker validation vary significantly across categories and contexts of use. This variability reflects the fit-for-purpose principle that different biomarker applications demand distinct validation approaches [2]. The evidence generation process must be tailored to the specific scientific and regulatory questions relevant to each biomarker type, with consideration of the biological plausibility, epidemiological support, and clinical utility required for the intended context [2]. The following comparative analysis illustrates how validation requirements differ across key biomarker categories, highlighting the need for customized experimental approaches.

Table 3: Comparative Validation Requirements Across Biomarker Categories

| Biomarker Category | Typical Experimental Protocols | Key Evidence Requirements | Common Methodologies |
|---|---|---|---|
| Predictive Biomarkers | Randomized controlled trials with biomarker-stratified design [2] | Mechanistic link to drug response, sensitivity, specificity, causality [2] | Genetic sequencing, IHC, PCR, companion diagnostic assays [2] [10] |
| Safety Biomarkers | Longitudinal studies assessing organ function, toxicology studies [2] | Consistent indication of adverse effects across populations [2] | Serum biomarkers, imaging, physiological monitoring [2] [10] |
| Digital Biomarkers | Observational studies with reference standards, usability testing [34] [26] | Technical verification, analytical validation, clinical relevance [34] [26] | Wearable sensors, algorithm development, signal processing [33] [34] |
| Diagnostic Biomarkers | Cross-sectional studies comparing affected and control populations [2] | Proof of accurate disease identification, sensitivity/specificity [2] | Imaging, laboratory tests, pathological examination [2] [10] |

Research Reagent Solutions for Biomarker Validation

The successful implementation of fit-for-purpose biomarker validation requires specialized research tools and technologies appropriate for different stages of development. These solutions range from preclinical models that enhance translational predictability to analytical platforms that ensure accurate biomarker measurement. The selection of appropriate research reagents and platforms is critical for generating robust, reproducible data that meets regulatory standards for the intended context of use [10]. The following table outlines key research solutions utilized in biomarker development and their specific applications in validation studies.

Table 4: Essential Research Reagent Solutions for Biomarker Validation

| Research Solution | Function in Validation | Representative Applications |
|---|---|---|
| Patient-Derived Organoids | 3D culture systems replicating human tissue biology for biomarker discovery [10] | Study patient-specific drug responses, model complex disease mechanisms [10] |
| Patient-Derived Xenografts (PDX) | Tumor models from patient tissues providing clinically relevant insights [10] | Validate cancer biomarkers, assess drug resistance mechanisms [10] |
| Liquid Biopsy Platforms | Non-invasive detection of circulating biomarkers [10] | Cancer detection via circulating tumor DNA, treatment monitoring [10] |
| Multi-Omics Integration | Combined genomic, transcriptomic, proteomic, and metabolomic analysis [10] | Comprehensive biomarker validation, understanding disease biology [10] |