Navigating the Maze: A Researcher's Guide to Solving Data Collection Challenges in Regulatory Frameworks

Abstract

This article provides a comprehensive roadmap for researchers and drug development professionals grappling with data collection amidst complex and evolving regulatory landscapes. It addresses the foundational challenges of regulatory divergence and data privacy laws, offers methodological strategies for ensuring data quality and ethical compliance, presents troubleshooting techniques for common pitfalls like data silos and bias, and explores validation frameworks for Automated Compliance Checking (ACC). The guide synthesizes practical steps to build robust, efficient, and compliant data collection processes that accelerate biomedical research and ensure regulatory adherence.

Understanding the 2025 Regulatory Landscape and Its Data Challenges

The Growing Challenge of Regulatory Divergence and Fragmentation

Technical Support Center

This support center provides troubleshooting guides and FAQs to help researchers, scientists, and drug development professionals navigate data collection challenges within complex and fragmented regulatory frameworks.

Frequently Asked Questions (FAQs)

1. What is regulatory divergence and how does it impact multi-jurisdictional clinical trials? Regulatory divergence refers to the growing phenomenon where different countries, states, or regions enact and enforce differing, sometimes conflicting, rules and standards [1]. For multi-jurisdictional clinical trials, this creates significant complexity. You may face incompatible requirements for data sharing, informed consent, and privacy protection between, for example, U.S. FDA guidelines and the European Medicines Agency (EMA) regulations [2]. This divergence can mandate complex study designs, increase compliance costs, and risk delays if not managed proactively.

2. Our data collection protocol was approved in the U.S.; why was it rejected for the same trial in Europe? Even if the core science is identical, regional regulatory frameworks have distinct requirements. A common point of failure is data privacy and sharing. Your protocol might comply with U.S. standards but fall short of the stricter informed consent mandates for data sharing required by some European authorities or institutional review boards [2]. Always investigate local data-sharing policies and consent requirements during the initial planning phase, not after a rejection.

3. How can we troubleshoot a clinical trial data-sharing plan that is being blocked by intellectual property concerns? Resistance from sponsors or investigators due to intellectual property (IP) and data exclusivity is a frequent challenge [2]. To troubleshoot:

- Root Cause Analysis: Determine if the concern is about sharing raw data, analyzed results, or both.

- Implement a Controlled-Access Model: Instead of open access, propose a managed system where data is shared under a strict data-sharing agreement that protects IP. Most clinical trial agencies (65%) mandate such agreements [2].

- Leverage Policy: Reference guidelines from international bodies like the International Committee of Medical Journal Editors, which often require data sharing as a condition of publication [2].

4. What is the best way to design a data collection strategy that remains compliant amid shifting state-level AI and privacy laws? As federal initiatives pull back in some areas and states take on a larger regulatory role, you need an agile strategy [1] [3].

- Define Clear Goals: Start by understanding the specific problem you are trying to solve and what data is most valuable to your stakeholders [4].

- Choose Flexible Methods: Utilize methods that can adapt to new consent or data anonymization requirements, such as surveys with configurable consent modules [4].

- Centralize Compliance Tracking: Replace siloed systems with a single source of truth that can track and apply regulatory changes from multiple states in real-time [5].

5. We are encountering inconsistent quality control results between our U.S. and Asian manufacturing sites. How should we investigate? Inconsistent quality results often stem from regulatory fragmentation in Good Manufacturing Practice (GMP) interpretation and enforcement.

- Initiate a Root Cause Analysis: Follow a structured approach to determine the "what, when, who, where, how, and why" of the quality defect [6].

- Standardize Analytical Methods: Ensure both sites use the same validated analytical techniques (e.g., SEM-EDX for inorganic contaminants, Raman spectroscopy for organic particles) and reference standards [6].

- Audit the Quality Management System: Investigate differences in local procedures, personnel training, equipment qualification, or raw material sourcing that may be influenced by local regulatory focus areas [6].

Troubleshooting Guides

Guide 1: Troubleshooting Data Sharing and Privacy Compliance

This guide helps resolve issues related to sharing clinical trial data across borders with different privacy laws.

- Problem: Inability to share or combine clinical trial data from different countries for a pooled analysis.

- Required Materials:

- Data Sharing Agreements (DSAs) from all relevant jurisdictions.

- Original informed consent forms from all trial participants.

- List of all data elements to be shared, with classification (e.g., anonymized, pseudonymized).

- Step-by-Step Resolution:

- Verify Participant Consent: Check if the original informed consent from all study sites explicitly permits the future sharing of anonymized data for secondary research. If not, this is a primary blocker [2].

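- Anonymize Data: Apply a robust anonymization technique (e.g., removal of all 18 HIPAA identifiers) so that the dataset is no longer treated as personal data [2]. Verify the result against the strictest law that applies, because HIPAA de-identification does not by itself guarantee that data counts as anonymous under stricter regimes such as GDPR.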

- Execute Data Sharing Agreements: Draft and execute DSAs with all involved parties. These agreements should outline the purpose of data use, security protocols, and prohibitions against re-identification [2].

- Submit to Review Committee: Prepare and submit a data-sharing proposal to the overseeing committee (e.g., an independent review board), which is required by 71% of clinical trial agencies [2].
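
The consent check and identifier removal steps above could be sketched roughly as follows. This is a minimal illustration, not a validated de-identification procedure: the field names (`consent_secondary_use`, `name`, `email`, and so on) are hypothetical, and `DIRECT_IDENTIFIERS` is a small illustrative subset rather than the full list of 18 HIPAA identifiers.

```python
# Hypothetical field names; this subset is NOT the full list of 18 HIPAA identifiers.
DIRECT_IDENTIFIERS = {"name", "email", "phone", "ssn", "address", "medical_record_number"}


def prepare_for_sharing(records):
    """Keep only records with consent for secondary use and drop identifier fields."""
    shareable = []
    for record in records:
        # Step 1: records without explicit consent are excluded, not shared.
        if not record.get("consent_secondary_use", False):
            continue
        # Step 2: remove direct identifiers and the consent flag itself.
        cleaned = {
            key: value
            for key, value in record.items()
            if key not in DIRECT_IDENTIFIERS and key != "consent_secondary_use"
        }
        shareable.append(cleaned)
    return shareable


participants = [
    {"name": "A. Example", "email": "a@example.org", "site": "US-01",
     "hba1c": 6.1, "consent_secondary_use": True},
    {"name": "B. Example", "email": "b@example.org", "site": "DE-02",
     "hba1c": 7.4, "consent_secondary_use": False},
]

shared = prepare_for_sharing(participants)
print(shared)
```

Excluding records by default when the consent flag is missing mirrors the guide's point that absent consent is a primary blocker. Quasi-identifiers (dates, small geographic units, rare conditions) are not handled here and would need separate review.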

Table: Key Elements of a Data-Sharing Agreement

| Element | Description | Function in Compliance |

|---|---|---|

| Data Use Purpose | Clearly defined research objectives for the shared data. | Limits data use to pre-approved purposes, aligning with consent and privacy laws. |

| Security Protocols | Encryption standards, access controls, and data storage specifications. | Ensures technical safeguards meet the requirements of all involved regulatory jurisdictions. |

| Publication Terms | Agreements on authorship, acknowledgment, and data citation. | Manages intellectual property concerns and promotes collaborative transparency. |

| Audit Rights | Provisions for verifying compliance with the DSA. | Provides a mechanism for regulators and sponsors to ensure ongoing adherence. |

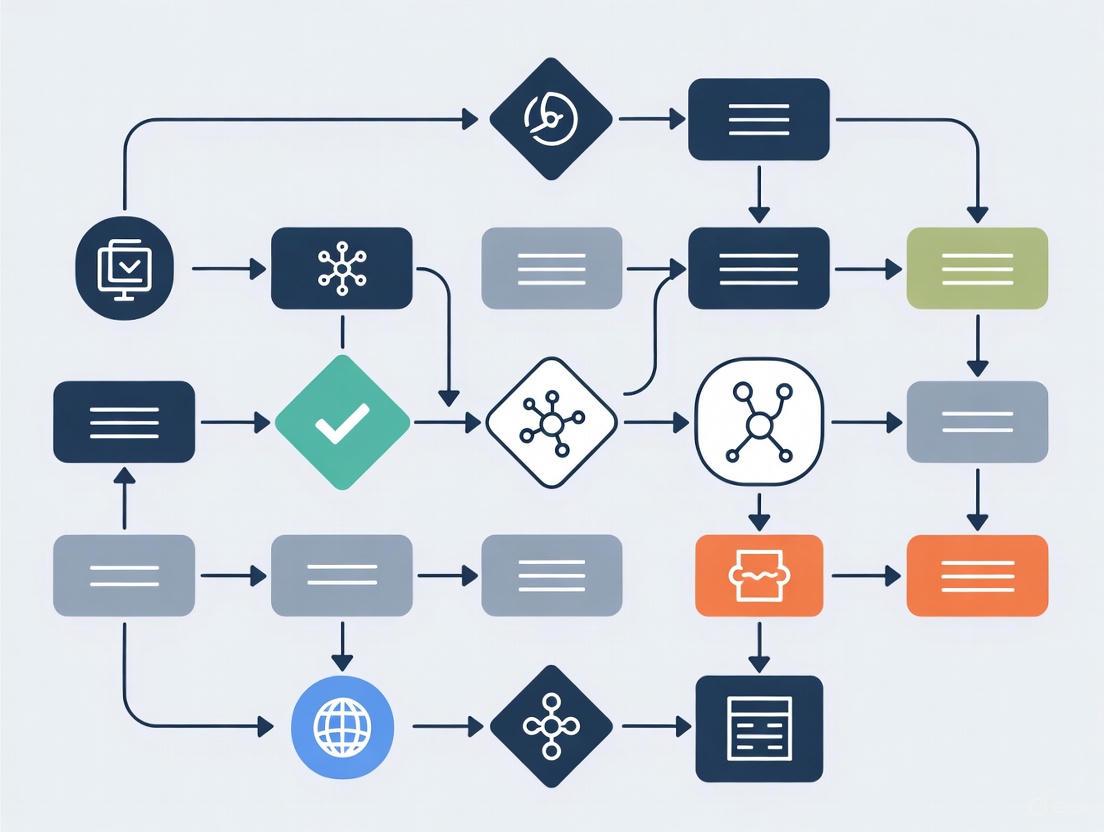

The following workflow diagram outlines the key stages of data collection and regulatory compliance verification in a multi-jurisdictional research project.

Guide 2: Troubleshooting Regulatory Fragmentation in Drug Development

This guide addresses operational challenges when regulatory requirements diverge during the drug development and manufacturing process.

- Problem: A quality control (QC) method validated for a drug product in one region is rejected by a regulatory agency in another region.

- Required Materials:

- Original validation protocol and report.

- Regulatory guidance documents (e.g., ICH, FDA, EMA) pertaining to analytical method validation from both regions.

- Complete data from the method validation study.

- Step-by-Step Resolution:

- Perform a Gap Analysis: Compare your current validation data against the specific requirements outlined in the guidelines of the rejecting agency. Pay close attention to acceptance criteria for parameters like specificity, accuracy, and precision [6].

- Identify Root Cause: The discrepancy is often due to differences in required validation parameters, sample matrix considerations, or acceptance criteria thresholds [1] [6].

- Design Bridging Studies: Develop a supplemental validation (or "bridging") study to generate data that specifically addresses the gaps identified. This may involve testing additional sample types or demonstrating robustness under different conditions [7].

- Compile and Submit: Integrate the new data from the bridging studies with your original validation report into a comprehensive submission for the reviewing agency [7].
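
The gap analysis step lends itself to a simple table comparison. The sketch below assumes the validation results and the rejecting agency's acceptance criteria have been transcribed into dictionaries; all parameter names and numeric limits are hypothetical placeholders, and real limits must come from the applicable guidance documents.

```python
# Hypothetical results and criteria; real values come from the validation report
# and the rejecting agency's guidance.
ORIGINAL_VALIDATION = {"accuracy_recovery_pct": 98.2, "precision_rsd_pct": 1.8}

REJECTING_AGENCY_CRITERIA = {
    "accuracy_recovery_pct": ("min", 98.0),
    "precision_rsd_pct": ("max", 1.5),
    "robustness_tested": ("required", True),
}


def gap_analysis(results, criteria):
    """Return (parameter, reason) pairs that a bridging study would need to address."""
    gaps = []
    for parameter, (kind, limit) in criteria.items():
        if parameter not in results:
            gaps.append((parameter, "not covered by the original validation"))
            continue
        value = results[parameter]
        if kind == "min" and value < limit:
            gaps.append((parameter, f"{value} is below the minimum {limit}"))
        elif kind == "max" and value > limit:
            gaps.append((parameter, f"{value} exceeds the maximum {limit}"))
        elif kind == "required" and value != limit:
            gaps.append((parameter, f"expected {limit}, found {value}"))
    return gaps


gaps = gap_analysis(ORIGINAL_VALIDATION, REJECTING_AGENCY_CRITERIA)
for parameter, reason in gaps:
    print(f"{parameter}: {reason}")
```

Each reported gap maps to one question the bridging study has to answer, which keeps the supplemental work scoped to what the rejecting agency actually asked for.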

Table: Research Reagent Solutions for Compliance and Quality Assurance

| Reagent/Solution | Function | Application in Troubleshooting |

|---|---|---|

| Positive Control Probes (e.g., PPIB, POLR2A) | Verify sample RNA integrity and assay performance. | Essential for qualifying sample quality in RNA-based assays, ensuring data reliability across different labs [8]. |

| Negative Control Probes (e.g., dapB) | Assess background noise and non-specific signal. | Critical for validating the specificity of your assay, a key parameter for regulatory acceptance [8]. |

| Reference Standards | Provide a benchmark for identifying and quantifying compounds. | Used to troubleshoot and validate analytical methods (e.g., HPLC, GC-MS) across different manufacturing sites to ensure consistency [6]. |

| Protease Solution | Permeabilizes tissue to allow probe access to RNA. | Requires precise optimization for different tissue types and fixation protocols to ensure consistent results, a common variable in multi-site studies [8]. |

The following diagram illustrates a systematic approach to troubleshooting quality defects in pharmaceutical manufacturing, a common challenge in a fragmented regulatory environment.

Troubleshooting Common Compliance Challenges

This guide addresses frequent technical and operational issues encountered when implementing key data privacy regulations in a research environment.

How do we resolve incomplete data subject rights handling under GDPR?

The Problem: Researchers cannot efficiently address requests from data subjects (e.g., EU research participants) for access, rectification, or erasure of their personal data, leading to non-compliance.

The Solution:

- Create a Clear DSAR Process: Establish a formal, documented workflow for receiving, tracking, and fulfilling Data Subject Access Requests (DSARs) within the GDPR-mandated timeline of one month [9].

- Implement Management Tools: Utilize specialized software to manage and document these requests, creating a verifiable audit trail [9].

- Map Data Flows: Develop and maintain a data processing register that details what personal data is collected, why it is processed, and where it is stored. This is essential for locating data to fulfill requests [10].
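
A DSAR tracker mainly needs to know when each request falls due. The sketch below is one simplified reading of the one-month timeline: it adds one calendar month to the receipt date and clamps to the end of shorter months. It ignores permitted extensions, weekends, and holidays, and the request records and their field names are hypothetical.

```python
import calendar
from datetime import date


def dsar_due_date(received: date) -> date:
    """Add one calendar month, clamping to the last day of shorter months."""
    year = received.year + (1 if received.month == 12 else 0)
    month = 1 if received.month == 12 else received.month + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(received.day, last_day))


def open_requests_by_urgency(requests, today):
    """Return open requests sorted by days remaining (negative means overdue)."""
    rows = []
    for request in requests:
        if request["status"] == "open":
            remaining = (dsar_due_date(request["received"]) - today).days
            rows.append((request["id"], remaining))
    return sorted(rows, key=lambda row: row[1])


# Hypothetical request log.
requests = [
    {"id": "DSAR-001", "received": date(2025, 1, 31), "status": "open"},
    {"id": "DSAR-002", "received": date(2025, 2, 10), "status": "open"},
    {"id": "DSAR-003", "received": date(2025, 1, 5), "status": "closed"},
]

queue = open_requests_by_urgency(requests, today=date(2025, 2, 20))
for request_id, days_left in queue:
    print(f"{request_id}: {days_left} days remaining")
```

How the deadline is counted in practice should be confirmed with your data protection officer or legal counsel; the function is only a starting point for the audit trail.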

How can we manage third-party and vendor risks under GDPR and HIPAA?

The Problem: Research collaborators, cloud providers, or contract research organizations (CROs) that process personal data or protected health information (PHI) introduce compliance vulnerabilities.

The Solution:

- Perform Vendor Due Diligence: Conduct thorough risk assessments on all vendors before sharing data. For HIPAA, this involves sending vendors a security risk analysis and ensuring their security controls are adequate [11].

- Execute Compliant Agreements: Always have a signed Business Associate Agreement (BAA) in place for HIPAA compliance before sharing PHI [12] [11]. For GDPR, create detailed data processing agreements with vendors that outline their data protection responsibilities [9].

How do we address the failure to conduct a required Risk Analysis for HIPAA?

The Problem: An organization-wide security risk analysis, which should be repeated regularly (commonly annually) and whenever operational changes occur, has not been performed, leaving Protected Health Information (PHI) vulnerable.

The Solution:

- Conduct an Enterprise-Wide Risk Analysis: Perform a formal risk analysis to identify and document vulnerabilities in your security practices related to electronic PHI (ePHI). This is not optional and is one of the most common violations penalized by regulators [12] [11].

- Implement a Risk Management Process: The risk analysis must be actionable. Identified risks must be prioritized and addressed in a reasonable time frame. Knowing about risks and failing to manage them is a major violation [12].

How do we fix inadequate access controls for sensitive data under HIPAA and SOX?

The Problem: Lack of proper controls allows unauthorized personnel to access sensitive financial data (SOX) or electronic Protected Health Information (HIPAA).

The Solution:

- Implement Strict Access Controls: Enforce role-based access controls to ensure individuals can only access data necessary for their job functions [11].

- Ensure Segregation of Duties (SoD): For SOX compliance, design controls so that no single individual has control over all aspects of a critical financial transaction, preventing fraud and errors [13].

- Apply Encryption: Encrypt ePHI on portable devices like laptops and USBs. If encryption is not used, an equivalent security measure must be implemented and documented [12].
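
Role-based access control can be illustrated with a deny-by-default permission lookup that also records every decision. The roles, permissions, and user names below are hypothetical; a production system would rely on the access control features of the data platform rather than application code like this.

```python
# Hypothetical roles and permissions for illustration only.
ROLE_PERMISSIONS = {
    "study_coordinator": {"read_ephi", "write_ephi"},
    "biostatistician": {"read_deidentified"},
    "finance_analyst": {"read_financials"},
}

access_log = []


def is_allowed(role, permission):
    """Deny by default: unknown roles and unlisted permissions are refused."""
    return permission in ROLE_PERMISSIONS.get(role, set())


def request_access(user, role, permission):
    """Check a permission and record the decision for later audit."""
    allowed = is_allowed(role, permission)
    access_log.append(
        {"user": user, "role": role, "permission": permission, "allowed": allowed}
    )
    return allowed


print(request_access("coordinator_01", "study_coordinator", "read_ephi"))
print(request_access("stats_02", "biostatistician", "read_ephi"))
print(request_access("temp_03", "contractor", "read_financials"))
```

Logging denied requests as well as granted ones gives auditors evidence that the control is operating, not just that it exists.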

How do we avoid delays in providing patients access to their health records under HIPAA?

The Problem: Research participants or patients are denied timely access to their medical records or are overcharged for copies, violating the HIPAA Right of Access rule.

The Solution:

- Adhere to the 30-Day Rule: Provide patients with access to their health records within 30 days of their request. The Office for Civil Rights (OCR) has made this a key enforcement priority [12].

- Avoid Overcharging: Fees for providing copies of records must be reasonable and based on permissible cost factors. Excessive charges are a common violation [12].

How do we prevent a "one-and-done" risk assessment approach under SOX?

The Problem: A single risk assessment is performed, but the internal controls are not updated to reflect business changes, new accounting guidance, or acquisitions.

The Solution:

- Conduct Annual Risk Assessments: Perform a formal risk assessment at least annually. For larger or more complex research organizations, supplement this with quarterly reviews [13].

- Adapt to Change: Continuously ask, "How do business changes affect our risk assessment?" This is critical when acquiring new entities, changing business operations, or experiencing significant staff turnover [13].

Frequently Asked Questions (FAQs)

Q1: What is the most common and costly mistake organizations make with GDPR compliance? A1: A frequent and complex challenge is underestimating the full scope of GDPR, particularly the difficulty of data discovery and mapping. Organizations often discover 3-5 times more third-party data processing relationships than initially documented and struggle with hidden data repositories and complex data flows, leading to a 50-70% scope underestimation [10].

Q2: We are a newly public company. What is a common SOX pitfall related to staff? A2: A major pitfall is gaps in staffing and competencies. This occurs when staff overseeing key controls are spread too thin or lack the training to understand the underlying risks, or when management fails to prioritize governance, which leads the team to treat compliance as a low priority [13].

Q3: What is a simple but critical control often missed for HIPAA compliance? A3: Failing to implement a robust data backup and disaster recovery plan is a common issue. With the rise of ransomware attacks in healthcare, this matters more than ever: HIPAA requires a backup plan that maintains retrievable exact copies of ePHI, and keeping copies in both local and offsite locations helps ensure data can be recovered and is accessible in an emergency [11].

Q4: How does the CCPA/CPRA impact research involving California residents? A4: These laws grant California residents the right to know, delete, and correct their personal information, and to opt-out of its "sale" or "sharing." Researchers must have mechanisms to honor these requests. Note that PHI collected by a HIPAA-covered entity may be exempt, but health data from other sources (e.g., wellness apps used in trials) likely falls under CCPA/CPRA [14].

Comparison of Key Regulatory Provisions

The table below summarizes the core requirements and penalties for the four regulations to aid in experimental design and compliance planning.

| Regulation | Primary Scope | Key Data Rights / Provisions | Penalties for Non-Compliance |

|---|---|---|---|

| GDPR [15] [14] | All organizations processing personal data of individuals in the EU, regardless of citizenship. | Right to access, rectification, erasure ("right to be forgotten"), data portability, and object to processing. | Up to €20 million or 4% of annual global turnover, whichever is higher [14]. |

| CCPA/CPRA [15] [14] | For-profit businesses operating in California meeting specific revenue/data thresholds. | Right to know, delete, and correct personal information; right to opt-out of sale/sharing of data; non-discrimination. | Fines of up to $7,500 per intentional violation [14]. |

| HIPAA [15] [12] | Healthcare providers, health plans, healthcare clearinghouses, and their Business Associates. | Safeguards for Protected Health Information (PHI); patient rights to access and amend their health records; breach notification. | Fines range from $100 to $50,000 per violation, with an annual maximum of $1.5 million [12] [14]. |

| SOX [15] [14] | Publicly traded companies in the U.S. and their auditors. | Accuracy and reliability of corporate financial disclosures; secure storage of financial records for at least 5 years; internal controls over financial reporting. | Steep fines and potential imprisonment for executives [14]. |

Experimental Protocol: Conducting a Regulatory Risk Assessment

This protocol provides a methodology for identifying and mitigating data privacy risks within a research project, addressing core requirements of HIPAA and GDPR.

1. Objective: To systematically identify, assess, and document risks to the confidentiality, integrity, and availability of sensitive research data (e.g., PHI, personal data) and establish a treatment plan.

2. Materials:

- Risk Register Database: A centralized system (e.g., SQL database, specialized GRC software) for logging and tracking risks.

- Data Flow Mapping Tool: Software capable of creating visual data flow diagrams (e.g., Lucidchart, Draw.io) to identify all data touchpoints.

- Vendor Assessment Questionnaire: A standardized tool for evaluating third-party data processor security controls [11].

3. Methodology:

- Step 1: Scoping & Pre-planning. Define the boundaries of the assessment (e.g., a specific clinical trial, research department). Secure executive sponsorship to ensure resource allocation [10].

- Step 2: Data Discovery & Mapping. Identify all data repositories, including shadow IT and legacy systems. Document the flow of data from collection through analysis, storage, and sharing/disposal, noting all third-party transfers [10].

- Step 3: Threat & Vulnerability Identification. Using the data map, identify potential threats (e.g., unauthorized access, data corruption) and system vulnerabilities (e.g., lack of encryption, weak access controls) [12] [11].

- Step 4: Risk Analysis & Likelihood Impact Matrix. Analyze each risk by estimating its likelihood and potential impact on the research project and participants. Prioritize risks (e.g., High, Medium, Low) for treatment. This step is mandatory for HIPAA [12] [11].

- Step 5: Risk Treatment. Define action plans to mitigate, accept, avoid, or transfer each high-priority risk. Assign an owner and a deadline for each mitigation action.

- Step 6: Documentation & Reporting. Document the entire process, findings, and treatment plans. This documentation is critical evidence for auditors and regulators [12] [13].

- Step 7: Schedule Review. This is not a one-time activity. Schedule the next assessment, typically within one year or after any major change in the research process [13].
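
Steps 4 and 5 of the methodology can be sketched as a small risk register with a likelihood-impact score. The 1 to 5 scales, the priority cut-offs, and the example risks are assumptions chosen for illustration; the protocol itself does not prescribe a scoring scheme.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    description: str
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (negligible) to 5 (severe)
    owner: str = "unassigned"

    @property
    def score(self) -> int:
        # Simple multiplicative likelihood-impact score.
        return self.likelihood * self.impact

    @property
    def priority(self) -> str:
        # Hypothetical cut-offs; define your own in the assessment plan.
        if self.score >= 15:
            return "High"
        if self.score >= 6:
            return "Medium"
        return "Low"


register = [
    Risk("Unencrypted laptop holding ePHI", likelihood=3, impact=5, owner="IT security"),
    Risk("Vendor without a signed BAA", likelihood=2, impact=4, owner="Compliance"),
    Risk("Outdated consent form template", likelihood=1, impact=3),
]

# Highest-scoring risks first, so treatment planning starts at the top.
for risk in sorted(register, key=lambda r: r.score, reverse=True):
    print(f"{risk.priority:<6} {risk.score:>2}  {risk.description} ({risk.owner})")
```

An "unassigned" owner in the output is itself a finding, since Step 5 calls for an owner and a deadline on each mitigation action.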

Compliance Workflow Diagram

Compliance Implementation Workflow

| Tool / Resource | Function in Compliance Process |

|---|---|

| Data Processing Register | A centralized record of all data processing activities, required under GDPR, to document what data is collected, why, and how it flows through the organization [9]. |

| Security Risk Analysis Software | Tools to systematically identify and assess risks to the confidentiality, integrity, and availability of sensitive data, fulfilling a core requirement of HIPAA and NIST [12] [15]. |

| Access Control Management System | Software that enforces role-based access to ensure only authorized personnel can access sensitive data, a key control for both HIPAA and SOX [11] [13]. |

| Business Associate Agreement (BAA) / Data Processing Agreement (DPA) | Legally required contracts under HIPAA and GDPR to ensure third-party vendors protect data to the required standard [12] [9]. |

| Data Subject Access Request (DSAR) Portal | A system to efficiently receive, track, and fulfill requests from individuals exercising their data rights under GDPR and CCPA [9]. |

Regulatory Intersection and Data Flow Logic

Data Type to Regulation Mapping

Technical Support Center: Troubleshooting Data Governance in Research

This support center provides practical guidance for researchers, scientists, and drug development professionals navigating data governance challenges at the intersection of AI, IoT, Cloud, and regulatory frameworks.

Troubleshooting Guides

Problem: AI Model Produces Biased or Inaccurate Results

A machine learning model for patient stratification is showing signs of performance decay and potential bias, leading to unreliable predictions.

Diagnosis Checklist:

- Data Drift: Have the statistical properties of the live, incoming data changed compared to the model's original training data? [16]

- Bias in Training Data: Was the training dataset representative of the entire target population, including diverse racial, ethnic, and gender groups? [17]

- Poor Data Lineage: Can you trace the origin of the data and the transformations it underwent before training? A lack of lineage often makes bias untraceable. [17]

- Data Quality: Are there issues with the accuracy, completeness, or consistency of the input data? [18]

Resolution Protocol:

- Audit for Fairness: Use fairness audit tools to quantify bias across different demographic segments in your dataset. [17]

- Retrain with Representative Data: Curate a new, diverse, and representative training dataset. Apply pre-processing de-biasing techniques like reweighting or resampling. [17]

- Establish a Feedback Loop: Implement continuous monitoring to detect data and concept drift, triggering automatic model retraining. [16]

- Document with Model Cards: Create detailed documentation (model cards) that capture the model's intended use, training data, and known limitations to ensure transparency. [17]
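
The data drift check in the diagnosis list can be approximated with a Population Stability Index (PSI), one of several statistics used for this purpose. The sketch below is a plain-Python version for a single numeric feature; PSI is a choice made here for illustration, not a method named by the cited sources, and the 0.25 cut-off is a commonly quoted rule of thumb rather than a regulatory threshold.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a training sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range live values into the first or last bin.
            index = min(max(int((v - lo) / width), 0), bins - 1)
            counts[index] += 1
        # eps avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic example: the live data has shifted toward higher values.
training = [i / 100 for i in range(100)]
live_shifted = [0.5 + i / 200 for i in range(100)]

print(f"PSI (same data):    {psi(training, training):.3f}")
print(f"PSI (shifted data): {psi(training, live_shifted):.3f}")
if psi(training, live_shifted) > 0.25:
    print("Significant drift: review the model before relying on its predictions.")
```

A drift signal says the inputs have changed, not that the model is biased; fairness audits across demographic segments remain a separate step.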

Problem: Data Silos Impeding Cross-Functional Research

Critical research data is trapped in isolated systems (e.g., separate CRMs, IoT sensor databases, lab systems), preventing a unified view.

Diagnosis Checklist:

Resolution Protocol:

- Implement a Centralized Architecture: Invest in a centralized data platform like a data lakehouse or fabric architecture to consolidate structured and unstructured data. [17]

- Use ETL/ELT Pipelines: Create automated pipelines to extract, transform, and load data from various sources into the centralized platform. [17]

- Appoint Data Stewards: Designate data stewards for cross-functional coordination and to enforce enterprise-wide governance policies on integrated sources. [17] [19]

- Deploy a Data Catalog: Use a data catalog to inventory all data assets, making them discoverable and understandable to authorized users across the organization. [19]

Problem: Ensuring Regulatory Compliance in a Multi-Cloud Environment

A clinical trial spans multiple cloud regions, raising concerns about compliance with data sovereignty laws (like GDPR) and specific regulations (like ICH E6(R3) GCP).

Diagnosis Checklist:

- Unclear Data Residency: Do you know the physical geographic location of every server storing your regulated clinical data? [19]

- Lacking Data Lifecycle Policies: Are clear policies for data retention, archiving, and purging missing, even though regulations require them? [19]

- Inconsistent Access Controls: Are access controls weak or inconsistent across different cloud platforms, increasing the risk of data leakage? [19]

Resolution Protocol:

- Classify and Catalog Data: Use an automated data catalog to classify data assets, tagging sensitive and regulated data (e.g., patient PII). [19]

- Enforce Data Residency Policies: Configure cloud storage policies to automatically enforce data sovereignty rules, preventing data from being stored in non-compliant jurisdictions. [19]

- Automate Compliance Workflows: Implement automated workflows for managing data subject requests (e.g., right to be forgotten) and consent. [17]

- Maintain Audit Trails: Ensure your cloud governance tools provide detailed audit trails for all data access and modifications, which are essential for regulatory audits. [17] [19]
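
Before residency rules are enforced in cloud configuration, it can help to audit the current inventory against a written policy. The sketch below assumes a catalog export with a classification tag and a storage region per asset; the classification names, region labels, and asset names are all hypothetical.

```python
# Hypothetical policy: which regions may hold each data classification.
RESIDENCY_POLICY = {
    "eu_personal_data": {"eu-west-1", "eu-central-1"},
    "us_phi": {"us-east-1", "us-west-2"},
    "public": None,  # None means no residency restriction
}


def residency_violations(assets, policy):
    """Return assets stored in a region their classification does not allow."""
    violations = []
    for asset in assets:
        classification = asset["classification"]
        if classification not in policy:
            # Unknown or missing tags are flagged rather than silently allowed.
            violations.append((asset["name"], "unknown classification"))
            continue
        allowed = policy[classification]
        if allowed is not None and asset["region"] not in allowed:
            violations.append((asset["name"], f"{asset['region']} not allowed"))
    return violations


# Hypothetical catalog export.
assets = [
    {"name": "trial-eu-raw", "classification": "eu_personal_data", "region": "eu-west-1"},
    {"name": "trial-eu-backup", "classification": "eu_personal_data", "region": "us-east-1"},
    {"name": "scratch", "classification": "untagged", "region": "us-east-1"},
]

violations = residency_violations(assets, RESIDENCY_POLICY)
for name, reason in violations:
    print(f"{name}: {reason}")
```

Treating unclassified assets as violations ties this check back to the first resolution step: residency rules cannot be applied to data that has not been classified.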

Frequently Asked Questions (FAQs)

Q1: What is the most critical first step in governing data for an AI-based research project? The most critical first step is data classification. Before using data to train any model, you must identify and tag sensitive elements like Personally Identifiable Information (PII), protected health information (PHI), and intellectual property. This process is foundational for applying appropriate security controls, ensuring compliance, and avoiding the use of copyrighted or harmful content in your training sets. [17] [19]
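
As a rough illustration of automated classification, the sketch below tags dataset columns using two regular expressions and a few column-name hints. The patterns and hints are assumptions for demonstration and will miss many real identifiers; dedicated cataloging tools use far richer detection.

```python
import re

# Illustrative patterns only; they will miss many real identifiers.
PATTERNS = {
    "PII:email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PII:us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}
# Hypothetical column-name hints for health data.
PHI_COLUMN_HINTS = ("diagnosis", "medication", "lab_result")


def classify_column(name, sample_values):
    """Return sorted tags for a column based on its name and sampled values."""
    tags = set()
    if any(hint in name.lower() for hint in PHI_COLUMN_HINTS):
        tags.add("PHI")
    for value in sample_values:
        for tag, pattern in PATTERNS.items():
            if pattern.search(str(value)):
                tags.add(tag)
    return sorted(tags) or ["unclassified"]


# Hypothetical dataset sample.
columns = {
    "contact": ["jane@example.org", "n/a"],
    "primary_diagnosis": ["E11.9", "I10"],
    "visit_number": [1, 2, 3],
}
for column, values in columns.items():
    print(column, classify_column(column, values))
```

An "unclassified" result should prompt manual review rather than be read as "safe to use", since pattern matching only catches what it was written to find.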

Q2: How does 'model drift' impact our research, and how can we monitor for it? Model drift occurs when an AI model's predictions become less accurate over time because the live data it processes has changed from the data it was trained on. [16] In research, this can lead to flawed conclusions, invalidated results, and compliance risks. Monitoring involves:

- Technical Monitoring: Continuously tracking performance metrics (accuracy, precision, recall) and using statistical techniques to detect data drift and concept drift. [16]

- Operational Monitoring: Setting up dashboards with alerts for when metrics deviate from predefined thresholds. [16]
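
The two monitoring layers can be combined in a few lines: compute performance metrics on a labeled batch, then compare them with predefined thresholds. The threshold values and the example labels below are hypothetical; in practice the thresholds would be agreed before the study starts.

```python
# Hypothetical thresholds; agree on real values before the study starts.
THRESHOLDS = {"accuracy": 0.85, "recall": 0.80}


def confusion_metrics(y_true, y_pred):
    """Accuracy, precision, and recall for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


def threshold_alerts(metrics, thresholds):
    """List every monitored metric that fell below its threshold."""
    return [
        f"{name} {metrics[name]:.2f} is below threshold {limit:.2f}"
        for name, limit in thresholds.items()
        if metrics[name] < limit
    ]


# Hypothetical labeled batch from live data.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 0, 0, 0, 0, 0, 1]

metrics = confusion_metrics(y_true, y_pred)
alerts = threshold_alerts(metrics, THRESHOLDS)
for alert in alerts:
    print("ALERT:", alert)
```

Performance metrics need ground-truth labels, which often arrive late in clinical settings; statistical drift checks on the inputs can fill the gap until labels are available.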

Q3: Our research uses IoT medical sensors. How do we ensure the quality and trustworthiness of this streaming data? Governance for IoT data requires a focus on the entire data pipeline:

- At the Edge: Implement data validation checks where possible to filter out corrupt or anomalous readings at the source.

- In Transit: Ensure data is encrypted during transmission from the sensor to the cloud platform. [19]

- At Rest: Upon ingestion into your cloud data lake or platform, run automated data quality checks to profile, validate, and cleanse the data. Establish data quality metrics for accuracy and consistency and monitor them continuously. [19] [18]
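
Validation at the edge can be as simple as checking required fields and plausibility ranges before a reading is forwarded. In the sketch below, the field names, metric names, and ranges are hypothetical; clinically meaningful limits would have to come from the device specification and the study protocol.

```python
# Hypothetical plausibility ranges per metric.
VALID_RANGES = {"heart_rate_bpm": (20, 250), "spo2_pct": (50, 100)}
REQUIRED_FIELDS = ("device_id", "timestamp", "metric", "value")


def validate_reading(reading):
    """Return a list of problems; an empty list means the reading passes."""
    problems = [f"missing {f}" for f in REQUIRED_FIELDS if reading.get(f) is None]
    if problems:
        return problems
    bounds = VALID_RANGES.get(reading["metric"])
    if bounds is None:
        problems.append(f"unknown metric {reading['metric']}")
    elif not bounds[0] <= reading["value"] <= bounds[1]:
        problems.append(f"value {reading['value']} outside {bounds}")
    return problems


def split_stream(readings):
    """Separate readings into accepted ones and quarantined ones with reasons."""
    accepted, quarantined = [], []
    for reading in readings:
        problems = validate_reading(reading)
        if problems:
            quarantined.append((reading, problems))
        else:
            accepted.append(reading)
    return accepted, quarantined


# Hypothetical sensor readings.
stream = [
    {"device_id": "s1", "timestamp": "2025-03-01T10:00:00Z", "metric": "heart_rate_bpm", "value": 72},
    {"device_id": "s1", "timestamp": "2025-03-01T10:00:05Z", "metric": "heart_rate_bpm", "value": 900},
    {"device_id": "s2", "timestamp": None, "metric": "spo2_pct", "value": 97},
]

accepted, quarantined = split_stream(stream)
print(len(accepted), "accepted;", len(quarantined), "quarantined")
```

Quarantining rejected readings with their reasons, instead of discarding them, preserves the evidence needed to explain later why a data point was excluded.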

Q4: We are preparing a Diversity Action Plan for an FDA submission. How can technology aid in governance here? Technology is crucial for executing and demonstrating the effectiveness of your Diversity Action Plan.

- Recruitment & Engagement: Use Clinical Trial Management Systems (CTMS) and participant engagement platforms to simplify recruitment and enrollment across diverse populations, offering user-friendly digital interfaces. [20]

- Data Collection & Monitoring: Leverage eClinical tools like eSource and eConsent to capture data directly and monitor recruitment metrics in real-time against your diversity goals. [20]

- Data Analysis: Use analytics to track enrollment rates by demographic, allowing you to identify gaps and adjust your outreach strategies proactively. [20]
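
Tracking enrollment against diversity goals reduces to comparing observed shares with target shares. The group labels and goal percentages below are placeholders, not values from any Diversity Action Plan.

```python
from collections import Counter

# Hypothetical enrollment goals as shares of total enrollment.
GOALS = {"group_a": 0.40, "group_b": 0.30, "group_c": 0.30}


def enrollment_gaps(participants, goals):
    """Goal share minus observed share per group; positive means under-enrolled."""
    counts = Counter(p["group"] for p in participants)
    total = sum(counts.values())
    gaps = {}
    for group, goal in goals.items():
        share = counts.get(group, 0) / total if total else 0.0
        gaps[group] = round(goal - share, 3)
    return gaps


# Hypothetical enrollment snapshot: 6, 3, and 1 participants per group.
enrolled = (
    [{"group": "group_a"}] * 6
    + [{"group": "group_b"}] * 3
    + [{"group": "group_c"}] * 1
)

gaps = enrollment_gaps(enrolled, GOALS)
for group, gap in gaps.items():
    status = "under-enrolled" if gap > 0 else "on or above goal"
    print(f"{group}: gap {gap:+.3f} ({status})")
```

Running a comparison like this on each recruitment snapshot makes it possible to adjust outreach while enrollment is still open, which is the proactive use the answer above describes.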

Quantitative Data on Data Governance Challenges

Table 1: Cost and Organizational Impact of Poor Data Governance

| Metric | Statistic | Source |

|---|---|---|

| Average Annual Cost of Bad Data | $12.9 million | Gartner (via [17]) |

| Reduction in Workforce Productivity | Up to 20% | Harvard Business Review (via [17]) |

| Increase in Operational Costs | Up to 30% | Harvard Business Review (via [17]) |

| Organizations Viewing Lack of Data Governance as Primary AI Inhibitor | 62% | KPMG (via [21]) |

Table 2: AI Adoption and Governance Maturity Landscape

| Metric | Statistic | Source |

|---|---|---|

| Global Organizations Using or Planning to Adopt AI | 84% | Quinnox (via [17]) |

| Companies That Have Integrated AI into at Least One Function | 79% | McKinsey (via [17]) |

| Organizations Lacking a Clear AI Strategy/Roadmap | ~50% (Nearly 1 in 2) | BCG x MIT Sloan Report (via [17]) |

| Generative AI Initiatives Described as "Fully Mature" | 1% | BCG x MIT Sloan Report (via [17]) |

Experimental Protocol: Implementing a 5-Step Data Governance Framework for an AI Research Project

This protocol provides a step-by-step methodology for establishing foundational data governance, following the five-step framework described in [17].

1. Charter: Establish Governance with AI in Mind

- Objective: Define a clear governance charter assigning accountability for data integrity and ethical use.

- Procedure:

- Form a cross-functional team with members from data science, legal, compliance, and senior leadership (C-suite involvement is critical). [17] [21]

- Draft a charter that explicitly addresses AI-specific risks like model bias, hallucinations, and prompt injection attacks. [17]

- Define and document roles: Data Owners, Data Stewards, and Data Scientists, with clear responsibilities. [19] [18]

2. Classify: Know Your Data Before You Use It

- Objective: Identify and tag sensitive and regulated data within your research datasets.

- Procedure:

- Use automated data cataloging and scanning tools to discover and profile data. [19]

- Apply metadata tags to classify data (e.g., "PII," "PHI," "Confidential Intellectual Property"). [17]

- Vet and document all third-party and public data sources used for training to avoid copyright or quality issues. [17]

3. Control: Apply Guardrails to Who Uses What and How

- Objective: Implement security and access controls to prevent data misuse.

- Procedure:

- Implement role-based access control (RBAC) policies in your cloud data platform. [17] [19]

- For Generative AI projects, deploy prompt filters and input sanitization techniques. [17]

- Apply principles of data minimization, ensuring users only have access to the data strictly necessary for their research tasks. [17]

4. Monitor: Make AI Data Transparent and Traceable

- Objective: Establish ongoing monitoring for data quality, model performance, and bias.

- Procedure:

- Implement automated data lineage tools to track the origin and movement of data throughout its lifecycle. [17] [19]

- Set up dashboards to monitor key metrics for data quality (accuracy, completeness) and model performance (accuracy, drift). [16]

- Log all model inputs and outputs for auditability, essential for regulatory compliance under frameworks like the EU AI Act. [17]

5. Improve: Adapt as Risks and Regulations Evolve

- Objective: Create a feedback loop for continuous improvement of the governance framework.

- Procedure:

Data Governance Workflow Visualization

The Researcher's Toolkit: Essential Data Governance Solutions

Table 3: Key Research Reagent Solutions for Data Governance

| Item / Solution | Function in Data Governance |

|---|---|

| Data Catalog | A centralized tool for inventorying, classifying, and making data discoverable. It automatically scans data sources to build a searchable inventory, which is foundational for data classification and lineage. [19] |

| Automated Lineage Tools | Track the origin, movement, and transformation of data throughout its lifecycle. This is critical for troubleshooting AI models, ensuring reproducibility, and passing regulatory audits. [17] [19] |

| Model Card | A documentation framework for providing context and transparency into an AI model. It details the model's intended use, training data, performance metrics, and ethical considerations. [17] |

| eClinical Suite (eSource, CTMS, eConsent) | A set of specialized software tools for clinical research. They streamline data capture (eSource), manage trial operations and recruitment (CTMS), and ensure a compliant informed consent process (eConsent), directly supporting data integrity and regulatory adherence. [20] |

| Fairness Audit Tools | Software libraries and applications used to detect and quantify bias in datasets and AI models. They help researchers ensure their models are fair and do not discriminate against protected groups. [17] |

Defining Research Goals and Target Population for Regulatory Submissions

Troubleshooting Guides

Guide 1: Troubleshooting Target Population Definition

Table: Common Target Population Challenges and Solutions

| Challenge | Root Cause | Solution | Preventive Action |

|---|---|---|---|

| Enrollment Delays [22] | Long, unpredictable regulatory and ethics review timelines across countries. | Build realistic timelines (e.g., a mean of 17.84 months was observed) [22]. Engage local regulators early in protocol development [22]. | Develop a harmonized regulatory strategy with pre-emptive country-specific consultations [22]. |

| Lack of Population Diversity [23] | Failure to enroll historically underrepresented populations. | Select trial sites in demographically diverse locations and engage community health workers [23]. | Submit a formal Diversity Action Plan (DAP) to the FDA as required [23]. |

| Data Standardization Issues [24] | Lack of standardized data collection methods; only submission standards exist. | Implement robust internal data management practices and use predefined templates [25]. | Foster collaboration among pharma companies and vendors to establish collection standards [24]. |

| Protocol Non-Compliance [23] | Staff unfamiliarity with protocol or eagerness to enroll ineligible patients. | Immediate staff retraining and suspension of enrollment until compliance is confirmed [23]. | Implement rigorous pre-enrollment checklists and ongoing protocol training [23]. |

Guide 2: Troubleshooting Research Goal Alignment

Table: Aligning Research Goals with Regulatory Requirements

| Symptoms of Misalignment | Diagnostic Checks | Corrective Actions |

|---|---|---|

| Regulatory questions about product's market context or unmet need [25]. | Review submission documents: Is there a clear, cohesive narrative on product positioning? [25] | Thread key messaging throughout the eCTD. Use a project manager to ensure narrative consistency [25]. |

| FDA rejection for lacking Investigational New Drug (IND) application [23]. | Determine if the study is an "experiment" (regulated) or "medical practice" (generally not) [23]. | Consult FDA guidance: Randomized trials of unapproved drug uses typically require an IND [23]. |

| Delays due to shifting regulatory requirements [26]. | Regularly monitor official FDA guidance and policy updates [27]. | Proactively engage the FDA early for feedback and consider parallel submissions with other agencies (e.g., EMA) [26]. |

| Inability to leverage Real-World Evidence (RWE). | Assess if RWE could complement trials for safety or effectiveness data [28]. | Align RWE study design with FDA's RWE Accelerate initiative and use fit-for-purpose data sources [28]. |

Frequently Asked Questions (FAQs)

Q1: What is the single most common mistake in defining a target population, and how can I avoid it?

The most frequent and critical mistake is failing to ensure subjects meet all inclusion/exclusion criteria before enrollment, which is a top citation in FDA Warning Letters [23]. This often stems from staff's desire to help patients access investigational treatments. To avoid this, implement rigorous pre-screening checklists and continuous training that emphasizes the difference between the practice of medicine and the strict, protocol-driven nature of clinical research [23].
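
A pre-screening checklist can be expressed as data so that every criterion is evaluated before enrollment and every failure is recorded. The sketch below is illustrative only: the three criteria and the subject fields are invented for the example and would come from the study protocol in practice.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Criterion:
    name: str
    kind: str  # "inclusion" or "exclusion"
    test: Callable[[dict], bool]


# Hypothetical criteria; a real checklist mirrors the protocol's wording exactly.
CRITERIA = [
    Criterion("Age 18-75", "inclusion", lambda s: 18 <= s["age"] <= 75),
    Criterion("Signed consent on file", "inclusion", lambda s: s["consent_signed"]),
    Criterion("Pregnant", "exclusion", lambda s: s["pregnant"]),
]


def screen(subject, criteria=CRITERIA):
    """Return (eligible, failures); eligible only if every criterion is satisfied."""
    failures = []
    for criterion in criteria:
        result = criterion.test(subject)
        if criterion.kind == "inclusion" and not result:
            failures.append(f"inclusion not met: {criterion.name}")
        elif criterion.kind == "exclusion" and result:
            failures.append(f"exclusion met: {criterion.name}")
    return (not failures, failures)


if __name__ == "__main__":
    print(screen({"age": 17, "consent_signed": True, "pregnant": False}))
```

Returning the full list of failures, rather than stopping at the first, gives site staff a complete record of why a candidate was not enrolled.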

Q2: How can I use real-world evidence (RWE) to support the definition of my target population and research goals?

RWE allows you to study large, diverse datasets from real-world settings to understand treatment patterns, safety signals, and gaps in care [28]. You can use RWE to:

- Harness Big Data: Understand the natural history of a disease and identify disparities in care within different patient sub-groups [28].

- Support Accelerated Development: Complement clinical trials by providing additional evidence on how a drug works in broader, more representative populations outside the strict controls of a trial [28]. Engage with the FDA's RWE Accelerate initiative early to ensure your approach to using RWE is aligned with agency expectations [28].

Q3: What are the key differences between HIPAA "Authorization" and informed consent?

These are two separate regulatory requirements. Informed consent is required by federal human subject protection regulations and focuses on the risks and benefits of the research procedures themselves. HIPAA Authorization is required by the Privacy Rule and specifically governs how a covered entity may use and disclose a patient's Protected Health Information (PHI) for research [29]. While the requirements are different, the two documents are often combined into a single form for patient comprehension and administrative ease [29].

Q4: Our multi-country trial is facing significant regulatory delays. What strategic steps can we take?

Significant delays in multi-country trials, especially in resource-limited settings, are common, with mean regulatory timelines sometimes exceeding 17 months [22]. To mitigate this:

- Engage Early: Negotiate with international drug regulators and ethics committees during the protocol development phase, not after it is finalized [22].

- Harmonize Processes: Advocate for and participate in efforts to harmonize regulatory review processes across regions. This includes supporting training exchanges between in-country regulators and established agencies like the FDA or EMA [22].

- Plan for Variability: Allocate resources and build timelines that account for unpredictable regulatory environments in different countries [22].

Q5: What should we do if we receive an FDA Form 483 after a BIMO inspection?

Remain cooperative and acknowledge the issues during the closeout meeting. The most critical step is to provide a timely, robust written response within 15 business days [23]. Your response must detail a comprehensive corrective and preventive action plan (CAPA) and confirm any actions already completed. Demonstrating a clear commitment to addressing the findings can help prevent the issuance of a more severe Warning Letter [23].

Experimental Protocol: Defining a Target Population for a Regulatory Submission

Objective: To systematically define and justify a target population for a clinical study that will meet regulatory standards for approval.

Methodology:

Disease Natural History & Unmet Need Analysis:

- Utilize real-world data (RWD) from electronic health records (EHRs) or claims databases to characterize the patient population, including demographics, disease progression, and current treatment patterns [28].

- Define the unmet medical need that the investigational product aims to address.

Competitive Landscape & Clinical Trial History Review:

- Analyze previously approved products and clinical trials for the same or similar indications.

- Identify gaps in knowledge or populations that have been underrepresented (e.g., specific racial groups, elderly patients) to inform the development of a Diversity Action Plan [23].

Stakeholder Alignment and Regulatory Strategy:

- Hold an internal cross-functional team meeting to align on the target population and research goals.

- Schedule a pre-submission meeting with the regulatory agency (e.g., FDA) to present and refine the proposed target population and overall study design [25].

Protocol Finalization and Documentation:

- Translate the agreed-upon strategy into a precise protocol with unambiguous inclusion/exclusion criteria.

- Prepare a cohesive regulatory submission that tells a clear story, justifying the target population based on the analyses from the three preceding steps and threading this rationale throughout the submission documents [25].

Workflow Diagram

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Regulatory-Focused Research

| Item/Tool | Function in Research | Regulatory Consideration |

|---|---|---|

| HIPAA Authorization Form | Legally permits the use/disclosure of Protected Health Information (PHI) for research [29]. | Must be specific and can be combined with informed consent. An IRB can waive this requirement under certain conditions [29]. |

| Data Use Agreement (DUA) | Governs the sharing of a "Limited Data Set" (data with some indirect identifiers) with parties not named in the original IRB application [29]. | Required by HIPAA before a Limited Data Set is shared with external collaborators who are not part of the core research team [29]. |

| Diversity Action Plan (DAP) | A formal plan to enroll a representative study population from historically underrepresented groups [23]. | Soon to be mandatory for certain clinical studies per FDA guidance to improve enrollment diversity [23]. |

| Standardized Data Templates (e.g., CDISC) | Provides a common structure and format for data submitted to regulatory agencies [24]. | While submission standards are mandated, internal collection standards are not, making internal templates vital for efficiency and accuracy [24]. |

| Real-World Data (RWD) Sources | Provides evidence on disease status and healthcare delivery from sources outside traditional clinical trials (e.g., EHRs, claims data) [28]. | Must be fit-for-purpose. The FDA's RWE Accelerate initiative provides a framework for using this data in regulatory decisions [28]. |

Troubleshooting Guides & FAQs

Data Management & Regulatory Compliance

Q: Our clinical trial data collection is often flagged by regulators as being non-compliant with GDPR and HIPAA. How can we ensure we collect necessary research data while respecting data minimization principles?

A: Implement a tiered data collection strategy and leverage privacy-enhancing technologies (PETs). Start by collecting only essential baseline data, then collect additional data points as the study progresses and justifies their need. Utilize technologies like federated learning, which enables collaborative research without transferring raw data between institutions, ensuring sensitive information remains localized. Always conduct a Data Protection Impact Assessment (DPIA) to outline what data is necessary and identify risks in processing activities [30].

Q: What are the most common data-related site challenges in clinical trials, and how can we address them?

A: According to a 2025 survey of clinical research sites worldwide, the top challenges are clinical trial complexity (35%), study start-up issues (31%), and site staffing (30%). To address these, focus on enhancing operational efficiency by streamlining and standardizing routine workflows while actively tracking key metrics against industry benchmarks. Additionally, invest in comprehensive staff training and implement strategies to enhance retention through ongoing educational opportunities [31].

Table: Top Clinical Research Site Challenges (2025)

| Challenge Area | Percentage of Sites Reporting | Key Mitigation Strategies |

|---|---|---|

| Complexity of Clinical Trials | 35% | Simplify protocol designs, reduce endpoints, streamline technology requirements |

| Study Start-up | 31% | Specialize in coverage analysis, budgets, and contracts; strategically outsource |

| Site Staffing | 30% | Invest in training, enhance retention, provide professional development |

| Recruitment & Retention | 28% | Implement DE&I strategies, harness technology to optimize participant experience |

| Long Study Initiation Timelines | 26% | Enhance communication with sponsors/CROs, standardize processes |

Q: How can we ensure our data management practices meet both FDA 21 CFR Part 11 requirements and support robust research outcomes?

A: Implement Clinical Data Management Systems (CDMS) that are compliant with regulatory standards while maintaining data integrity. Key steps include: maintaining secure, computer-generated, time-stamped audit trails; using validated systems to ensure accuracy, reliability, and consistency of data; and following Clinical Data Interchange Standards Consortium (CDISC) standards for data acquisition, exchange, and submission. Ensure your system provides adequate procedures and controls to guarantee data integrity, authenticity, and confidentiality [32].

Q: What strategies can help balance comprehensive data collection for complex trials with regulatory data minimization requirements?

A: Adopt these key strategies: First, implement pseudonymization and anonymization practices to reduce risk while retaining data utility. Second, utilize tiered data collection, starting with essential data and progressively collecting more as justified by study progression. Third, employ Privacy-Enhancing Technologies (PETs) like synthetic data and differential privacy. Fourth, conduct regular audits to ensure data collection aligns with minimization principles. Finally, maintain clear documentation of all data processing activities [30].
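
Pseudonymization, the first strategy above, is often implemented with a keyed hash so that the same subject always maps to the same pseudonym without exposing the original identifier. The sketch below uses HMAC-SHA-256 from the Python standard library; the `SUBJ-` prefix, the 16-character truncation, and the key handling are assumptions for illustration. Note that pseudonymized data is generally still treated as personal data under GDPR, because whoever holds the key can re-link it.

```python
import hashlib
import hmac


def pseudonymize(subject_id: str, secret_key: bytes) -> str:
    """Derive a stable pseudonym from an identifier using a keyed hash (HMAC-SHA-256)."""
    digest = hmac.new(secret_key, subject_id.encode("utf-8"), hashlib.sha256).hexdigest()
    # Truncation keeps the pseudonym readable; the length chosen here is arbitrary.
    return f"SUBJ-{digest[:16]}"


if __name__ == "__main__":
    # The key is hard-coded only for demonstration; store real keys in a secrets manager.
    demo_key = b"replace-with-a-managed-secret"
    print(pseudonymize("MRN-0012345", demo_key))
```

Using a keyed hash rather than a plain hash matters here: without the key, an attacker cannot rebuild the mapping by hashing a list of known identifiers.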

Data Integrity & Quality Assurance

Q: We're experiencing inconsistencies in our research data quality despite following protocols. What fundamental guidelines can improve data integrity?

A: Implement the Guidelines for Research Data Integrity (GRDI) which emphasize six core principles: accuracy, completeness, reproducibility, understandability, interpretability, and transferability. Key practical steps include: always keeping raw data in its original, unprocessed form; creating a comprehensive data dictionary that explains all variable names, coding categories, and units; saving data in accessible, general-purpose file formats like CSV; and avoiding combining information in single fields that cannot be easily separated later [33].

Q: How should we handle raw versus processed data to maintain scientific integrity?

A: Raw data should be preserved in its original, unprocessed form as equipment-generated physical records or data files with timestamps and write-protection. Export raw data into write-protected open formats (CSV, JSON) for long-term accessibility. For processed data, carefully document all cleaning procedures, transformations, and normalization techniques. Be aware that aggressive data cleaning may inadvertently eliminate valid data points or introduce bias, so thorough documentation is essential to minimize information loss and maintain dataset integrity [34].
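
A minimal sketch of the raw-data handling described above: record a checksum when the file is first stored, mark it read-only, and re-check the checksum before each analysis. The function names are hypothetical, and a read-only file permission is only an advisory safeguard; it does not replace dedicated write-protected storage.

```python
import hashlib
import os
import stat
from pathlib import Path


def preserve_raw_file(path):
    """Compute a SHA-256 checksum for a raw data file, then mark the file read-only."""
    path = Path(path)
    checksum = hashlib.sha256(path.read_bytes()).hexdigest()
    # Remove all write permission bits (equivalent to mode 0o444 on POSIX systems).
    os.chmod(path, stat.S_IRUSR | stat.S_IRGRP | stat.S_IROTH)
    return checksum


def is_unchanged(path, expected_checksum):
    """Return True if the file's current contents still match the recorded checksum."""
    return hashlib.sha256(Path(path).read_bytes()).hexdigest() == expected_checksum
```

Storing the checksum alongside the dataset's documentation lets anyone confirm later that the processed data was derived from the untouched original.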

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Data Management Resources for Regulatory Compliance

| Tool/Resource | Function/Purpose | Key Features/Benefits |

|---|---|---|

| Clinical Data Management Systems (CDMS) | Collection, cleaning, and management of subject data in compliance with regulatory standards | Audit trail maintenance, discrepancy management, 21 CFR Part 11 compliance [32] |

| Privacy-Enhancing Technologies (PETs) | Safeguard participant data while maximizing utility for research | Includes synthetic data, federated learning, differential privacy [30] |

| Data Protection Impact Assessment (DPIA) | Outline necessary data and identify processing risks | Ensures GDPR compliance, balances research needs with privacy requirements [30] |

| Clinical Data Interchange Standards Consortium (CDISC) Standards | Acquisition, exchange, submission, and archival of clinical research data | Includes SDTMIG and CDASH standards; supports regulatory submission [32] |

| eConsent Platforms | Facilitate informed consent processes across study sites | Streamline enrollment, automate routing and signature management, ensure version control [20] |

| Data Management Plan (DMP) | Roadmap for handling data under foreseeable circumstances | Describes database design, quality control, discrepancy management, database locking [32] |

Experimental Protocols & Workflows

Protocol: Implementing Tiered Data Collection for Regulatory Compliance

Objective: To systematically collect necessary research data while adhering to GDPR data minimization principles and maintaining research integrity.

Materials:

- Data Protection Impact Assessment (DPIA) framework

- Privacy-Enhancing Technologies (federated learning platforms, differential privacy tools)

- Anonymization and pseudonymization software

- Clinical Data Management System (CDMS) with audit trail capability

- Data validation and edit check programs

Methodology:

Pre-Collection Planning Phase

- Conduct comprehensive DPIA to identify essential data requirements

- Define data collection objectives aligned with research endpoints

- Establish data minimization thresholds and justification criteria

- Develop tiered data collection protocol with clear escalation triggers

Baseline Data Collection

- Collect only essential demographic and baseline characteristics

- Implement pseudonymization at point of collection

- Apply data validation checks in real-time

- Document all collection processes in audit trail

Progressive Data Tier Activation

- Activate additional data collection tiers only as study progression justifies need

- Require protocol amendment and ethics approval for each tier activation

- Re-assess data minimization principles at each tier transition

- Maintain comprehensive documentation of tier activation rationale

Quality Assurance & Compliance Monitoring

- Conduct regular audits of data collection against minimization principles

- Verify appropriateness of data points collected at each tier

- Ensure continued alignment with research objectives

- Document all compliance verification activities
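
The tier-activation logic in this protocol can be sketched as a small gatekeeper that drops any field belonging to a tier that has not been activated. The `Tier` and `TieredCollector` classes, the tier names, and the field names are all hypothetical; the point is only to show activation being blocked without ethics approval and logged with its rationale.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Tier:
    name: str
    fields: frozenset
    needs_ethics_approval: bool = True


@dataclass
class TieredCollector:
    tiers: dict  # tier name -> Tier
    active: set = field(default_factory=set)
    activation_log: list = field(default_factory=list)

    def activate(self, tier_name, rationale, ethics_approved=False):
        """Activate a tier, refusing if the required ethics approval is missing."""
        tier = self.tiers[tier_name]
        if tier.needs_ethics_approval and not ethics_approved:
            raise PermissionError(f"Tier '{tier_name}' requires ethics approval")
        self.active.add(tier_name)
        # Keep the rationale so each activation can be justified during an audit.
        self.activation_log.append({"tier": tier_name, "rationale": rationale})

    def filter_record(self, record):
        """Keep only the fields that belong to currently active tiers."""
        allowed = set()
        for name in self.active:
            allowed |= self.tiers[name].fields
        return {k: v for k, v in record.items() if k in allowed}
```

In this design the baseline tier would be declared with `needs_ethics_approval=False` (it is covered by the original protocol approval), while every later tier needs an explicit approval flag before its fields are accepted.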

Protocol: Ensuring Data Integrity Throughout Research Workflow

Objective: To maintain data accuracy, completeness, and reproducibility from collection through analysis while meeting regulatory standards.

Materials:

- Raw data preservation system (write-protected storage)

- Data dictionary template

- Standardized file formats (CSV, JSON, XML)

- Version control system

- Audit trail software

- Metadata documentation tools

Methodology:

Pre-Collection Preparation

- Develop comprehensive data dictionary with variable definitions, coding schemes, and units

- Establish standardized file naming conventions and version control protocol

- Define raw data preservation procedures and storage locations

- Select appropriate, sustainable file formats for long-term accessibility

Data Collection & Documentation

- Collect data directly into standardized formats

- Implement real-time data validation and edit checks

- Preserve raw data in write-protected, timestamped formats

- Document all collection methodologies, instrument calibrations, and environmental factors

Data Processing & Transformation

- Maintain clear separation between raw and processed data

- Document all data cleaning procedures, transformations, and normalization techniques

- Preserve processing scripts and algorithms with version control

- Implement reproducible data processing workflows

Quality Assurance & Metadata Management

- Conduct regular quality checks against GRDI principles

- Ensure metadata comprehensively describes dataset context and processing history

- Verify data reproducibility through periodic replication tests

- Prepare data for sharing and preservation according to FAIR principles
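
The data dictionary from the preparation step can double as a machine-readable validation rule set. The sketch below checks a single record against a small dictionary; the variables, ranges, and the `validate_record` helper are invented for illustration and would be replaced by the study's own definitions.

```python
# Hypothetical data dictionary: variable name -> type, requirement, and constraints.
DATA_DICTIONARY = {
    "subject_id": {"type": str, "required": True},
    "age_years": {"type": int, "required": True, "min": 0, "max": 120},
    "sex": {"type": str, "required": True, "allowed": {"F", "M", "U"}},
    "weight_kg": {"type": float, "required": False, "min": 0.0},
}


def validate_record(record, dictionary=DATA_DICTIONARY):
    """Return a list of human-readable problems; an empty list means the record passes."""
    errors = []
    for name, rule in dictionary.items():
        value = record.get(name)
        if value is None:
            if rule["required"]:
                errors.append(f"{name}: missing required value")
            continue
        if not isinstance(value, rule["type"]):
            errors.append(f"{name}: expected {rule['type'].__name__}")
            continue
        if "allowed" in rule and value not in rule["allowed"]:
            errors.append(f"{name}: value not in allowed set")
        if "min" in rule and value < rule["min"]:
            errors.append(f"{name}: below minimum {rule['min']}")
        if "max" in rule and value > rule["max"]:
            errors.append(f"{name}: above maximum {rule['max']}")
    # Fields absent from the dictionary are reported so that nothing is collected undocumented.
    for name in sorted(record.keys() - dictionary.keys()):
        errors.append(f"{name}: not defined in data dictionary")
    return errors
```

Running the same check at entry time and again before analysis gives a simple, repeatable edit check tied directly to the documented definitions.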

Regulatory Alignment Framework

Navigating 2025 Regulatory Challenges

The regulatory landscape in 2025 is characterized by significant shifts requiring adaptive data management strategies. Key trends include growing regulatory divergence and fragmentation, increased focus on Trusted AI systems, and evolving cybersecurity requirements [1]. Specific clinical trial updates include the FDA's movement toward single IRB reviews for multicenter studies, finalized ICH E6(R3) Good Clinical Practice guidelines emphasizing flexibility and digital technology integration, and reinforced commitments to diversity in clinical trials through Diversity Action Plans [20].

Table: 2025 Regulatory Priorities and Data Implications

| Regulatory Area | Key Requirements | Data Management Implications |

|---|---|---|

| AI Regulation | Trusted AI frameworks, ethical implementation | Enhanced data governance, algorithm transparency, bias monitoring [1] |

| Data Privacy | GDPR minimization, cross-border transfer rules | Tiered data collection, privacy-enhancing technologies, anonymization protocols [30] |

| Clinical Trial Modernization | ICH E6(R3) adoption, single IRB reviews | Risk-based quality management, centralized data systems, streamlined documentation [20] |

| Diversity & Inclusion | Diversity Action Plans, representative participation | Demographic data collection, barrier analysis, inclusive recruitment strategies [20] |

| Cybersecurity & Information Protection | Enhanced data protection, state-level regulations | Secure data storage, encryption protocols, access controls [1] |

Successful navigation of these regulatory requirements demands a proactive approach that integrates compliance considerations into research design from the outset, rather than as an afterthought. By implementing the protocols and strategies outlined in this technical support center, researchers can confidently pursue their scientific objectives while maintaining rigorous regulatory compliance.

Building a Methodologically Sound and Compliant Data Collection Process

Technical Support Center

Frequently Asked Questions

Q1: Our survey response rates are low, and we are concerned about non-response bias affecting our study's validity. What steps can we take?

A1: Low response rates are a common challenge that can compromise data representativeness. Check that your selected sampling method accurately reflects all relevant subgroups (e.g., age, gender) within your target population, and address any barriers to participation [4]. Furthermore, ensure your survey design is accessible and user-friendly. Tools like SurveyCTO offer secure, scalable mobile data collection that can be deployed even in areas with limited connectivity, widening your reach [4].

Q2: We have collected EHR data, but it is messy and inconsistent. How can we define a reliable patient cohort for our analysis?

A2: Defining a clean cohort from EHR data is a critical first step. We recommend you:

- Create a Source Registry: Document every data source, its origin, and a quality rating. This helps you understand the reliability of the data you are working with [35].

- Implement Data Validation: Establish systematic processes to verify information at the point of entry and throughout its lifecycle. This can include field-level validation in forms and automated post-collection cleaning to detect and handle duplicates or incomplete records [35].

- Collaborate with Extraction Engineers: Work closely with data extraction engineers to understand the origin of data quality issues. The extraction process itself can introduce artifacts, and an iterative collaboration is crucial for ensuring the final dataset is representative [36] [37].

Q3: During clinical observations, how can we minimize the effect of the observer on the subject's behavior (the Hawthorne Effect)?

A3: Reducing participants' reactivity to being observed, and keeping observers' own recording consistent, is key to collecting authentic data.

- Use Non-Participant Observation: The researcher should observe without direct interaction with the participant whenever possible [38].

- Conduct Covert Observation (with ethical approval): In some study designs where participants are unaware they are being observed, you can capture more natural behavior. However, this must be approached with extreme caution and full compliance with ethical and regulatory standards, including informed consent requirements where applicable [38].

- Standardize Procedures: Develop a strict observational framework or checklist so that all researchers record behaviors systematically, which reduces observer bias [38].

Q4: Our sensor data streams are large and complex. How can we ensure the data is of high quality and integrated properly with our other data sources?

A4: Handling high-volume sensor data requires modern engineering approaches.

- Implement Automated Quality Assurance: Use frameworks with machine learning models to identify anomalies, duplicates, and inconsistencies in the data stream before it reaches your analytical systems [39].

- Adopt Cloud-Native and DataOps Practices: Utilize serverless data processing platforms that auto-scale and employ DataOps principles. This involves continuous integration and delivery pipelines that automate the testing, deployment, and monitoring of your data collection workflows [39].

- Ensure Compliance-by-Design: Embed regulatory requirements directly into your collection workflows. This includes automated policy enforcement for data masking, retention, and access controls based on the classified sensitivity of the data [39].
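
To make the automated quality-assurance idea concrete, the sketch below flags outlying sensor readings with a rolling z-score. This is a deliberately simple statistical stand-in for the machine learning models mentioned above, not an implementation of them; the window size and threshold are arbitrary assumptions.

```python
from collections import deque
from statistics import mean, stdev


def flag_anomalies(readings, window=5, threshold=3.0):
    """Flag readings that deviate strongly from the recent, non-anomalous history.

    Returns a list of booleans aligned with `readings`. The first `window`
    readings are never flagged because there is not yet enough history.
    """
    history = deque(maxlen=window)
    flags = []
    for value in readings:
        is_anomaly = False
        if len(history) == window:
            mu, sigma = mean(history), stdev(history)
            # A perfectly flat history (sigma == 0) is not scored; handle it separately if needed.
            is_anomaly = sigma > 0 and abs(value - mu) / sigma > threshold
        flags.append(is_anomaly)
        if not is_anomaly:
            # Anomalous values are kept out of the baseline so they do not mask later ones.
            history.append(value)
    return flags


if __name__ == "__main__":
    heart_rate = [70, 71, 69, 70, 72, 140, 71]
    print(flag_anomalies(heart_rate))
```

Flagged readings would typically be routed to review rather than deleted, so the raw stream stays intact.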

Q5: How can we ensure our data collection methods are compliant with regulations like GDPR or HIPAA?

A5: Privacy compliance is a fundamental responsibility.

- Obtain Informed Consent: Use clear, simple language—not legal jargon—to state what data you are collecting, how it will be used, and who it will be shared with. Offer granular choices, allowing individuals to opt into different types of communication separately [35].

- Maintain Consent Records: Keep a secure, auditable trail of when and how each individual gave their consent. This is a key requirement for demonstrating compliance [35].

- Implement Privacy by Design: Build privacy and security considerations into your technology from the ground up. Ensure systems that store or process personal data have robust security measures and access controls [35].

Troubleshooting Guides

Issue: Inconsistent Data Formats Across Sources

Problem: Data flowing from multiple sources (e.g., ticketing platforms, mobile apps, CRM systems) arrives in incompatible formats (e.g., dates as "MM/DD/YY," "DD-MM-YYYY," and "Month Day, Year"), making merging and analysis impossible.

Solution:

- Create a Data Dictionary: Develop a central document that defines every data field you collect, specifying its name, format (text, number, date), and accepted values [35].

- Enforce Standardized Formats: Adopt industry standards where possible. For example, require dates to follow the ISO 8601 standard (YYYY-MM-DD) and use two-letter country codes (ISO 3166) instead of free-text country names [35].

- Use Field Validation: Implement validation rules in your data capture tools and forms to enforce correct formatting upon entry [35].
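
For data that has already been collected in mixed formats, a small normalization step can convert the three date styles named in the problem statement to ISO 8601. The sketch below uses `datetime.strptime` from the Python standard library; the format list and the `to_iso_date` name are assumptions. Because a string such as "03/04/25" is inherently ambiguous, the safer design is for each source system to declare its format rather than rely on guessing.

```python
from datetime import datetime

# Formats tried in order: ISO 8601, MM/DD/YY, DD-MM-YYYY, "Month Day, Year".
KNOWN_FORMATS = ("%Y-%m-%d", "%m/%d/%y", "%d-%m-%Y", "%B %d, %Y")


def to_iso_date(raw):
    """Convert a date string in one of the known formats to YYYY-MM-DD.

    Raises ValueError for anything unrecognized so bad values are not silently passed on.
    """
    text = raw.strip()
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date format: {raw!r}")


if __name__ == "__main__":
    for sample in ["03/04/25", "04-03-2025", "March 4, 2025"]:
        print(sample, "->", to_iso_date(sample))
```

Note that month names are parsed according to the active locale, so "%B" assumes English month names in a default environment.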

Issue: Electronic Health Record (EHR) Data is Not a Perfect Reflection of the Patient

Problem: EHR data suffers from incompleteness, as not all possible observations are collected for all patients at all times. The data that is collected is highly dependent on clinical decisions and hospital procedures, which can introduce bias [36] [37].

Solution:

- Assess Data Fitness: Before model development, carefully consider if the EHR data is fit for your specific prediction goal. Acknowledge that tabular data from routine clinical practice will always have a level of incompleteness [36].

- Understand Clinical Context: Collaborate with clinicians to understand why data was collected. A missing lab test value could be because it was not clinically indicated, which is informative in itself [37].

- Document Data Lineage: Use tools or processes to track the flow of data from its source in the EHR to its destination in your model. This helps quickly identify the origin of data quality issues [35].
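
Because a missing lab value can itself carry information, one common approach is to record an explicit "was it measured" indicator next to each variable instead of silently imputing. The sketch below shows this under assumed field names; `add_missing_indicators` is a hypothetical helper, and whether such indicators are appropriate for a given model should be decided with clinical input.

```python
def add_missing_indicators(records, fields):
    """Return copies of the records with a boolean '<field>_measured' flag per field."""
    enriched_records = []
    for record in records:
        enriched = dict(record)  # copy so the source records are left untouched
        for name in fields:
            enriched[f"{name}_measured"] = record.get(name) is not None
        enriched_records.append(enriched)
    return enriched_records


if __name__ == "__main__":
    visits = [
        {"patient": "P1", "lactate": 2.4},
        {"patient": "P2", "lactate": None},
    ]
    print(add_missing_indicators(visits, ["lactate"]))
```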

Issue: Sampling Bias in Collected Data

Problem: The collected data does not accurately represent the entire target population, leading to flawed conclusions.

Solution:

- Define Your Target Population: Conduct thorough research to understand the characteristics and subgroups of your target population [4].

- Choose an Appropriate Sampling Method: Anticipate and address potential biases by selecting a sampling method that allows for a representative sample of the entire population, not just an easily accessible segment [35] [4].

- Minimize Bias Proactively: Use methodological techniques to reduce systematic errors. This ensures the insights you gather are a true representation of the entire group you're studying [35].

Data Collection Methods at a Glance

The table below summarizes the core data collection methods, helping you choose the right approach for your regulatory research.

Table 1: Comparison of Primary Data Collection Methods

| Method | Primary Data Type | Key Strengths | Common Challenges | Best Use Cases in Regulatory Research |

|---|---|---|---|---|

| Surveys & Questionnaires [4] [39] | Quantitative & Qualitative | Reaches many participants quickly and cost-effectively; Structured analysis [4]. | Response bias; May not capture complex nuances [4]. | Collecting patient-reported outcomes (PROs), healthcare professional opinions on a new therapy. |

| EHR Data Extraction [36] [37] | Quantitative (Structured Data) | Provides detailed, longitudinal real-world patient data from clinical settings [37]. | Data incompleteness; Artifacts from extraction; Requires extensive cleaning [36] [37]. | Real-world evidence (RWE) generation; Pharmacovigilance; Dynamic prediction modeling for disease risk. |

| Clinical Observations [4] [38] | Qualitative & Quantitative | Captures authentic behavior and contextual information in a natural setting [4] [38]. | Observer bias; The Hawthorne Effect; Time-consuming [4] [38]. | Studying clinical workflow adherence; Understanding user interaction with a medical device in a hospital. |

| Sensor Data Collection [39] | Quantitative | Continuous, automated data; Eliminates manual recording errors; Real-time insights [39]. | High data volume and complexity; Requires robust data pipelines [39]. | Remote Patient Monitoring (RPM); Clinical trial endpoint capture (e.g., activity levels); IoT device performance. |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Tools and Platforms for Data Collection

| Item | Function | Example Tools & Standards |

|---|---|---|

| Electronic Data Capture (EDC) System | Securely captures and manages clinical trial data collected from participants at investigative sites. | REDCap, SurveyCTO [4] |

| EHR Data Standard | Facilitates structure and terminology consistency for extracted health data, enabling reproducible research. | OMOP Common Data Model (CDM) [36] [37] |

| Streaming Data Platform | Enables real-time ingestion and processing of high-volume data from sensors and other continuous sources. | Apache Kafka [39] |

| Data Integration & API Tool | Connects different software systems to automatically exchange and synchronize data between platforms in real-time. | GraphQL, REST APIs [39] |

| Statistical Software Package | Provides the environment for data preparation, statistical analysis, and predictive model building. | R (tidyverse, tidymodels), Python (pandas, scikit-learn) [37] |

Experimental Workflow for Data Collection

The following diagram outlines a robust, iterative workflow for data collection in regulatory research, from planning to implementation, emphasizing quality and compliance.

Sampling Strategies to Ensure Representative and Unbiased Data

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between probability and non-probability sampling?

- A: Probability sampling is a method in which every member of the target population has a known, non-zero chance of being selected. The chances are equal in simple random sampling but may differ by design, as in disproportionate stratified sampling. This method is crucial for producing unbiased, representative samples and is primarily used in quantitative research to ensure generalizability [40] [41] [42]. In contrast, non-probability sampling selects participants in a non-random way, so individual selection probabilities are unknown. It is often used in qualitative research, exploratory studies, or when researching hard-to-reach populations [40] [41] [43].

Q2: My resources are limited. Can I use a convenience sample for my preliminary research?

- A: Convenience sampling, which involves selecting readily available participants, can be a quick and cost-effective method for exploratory research or pilot studies [41] [43]. However, you must be cautious. This method is highly susceptible to selection bias and may not represent the broader population, thus limiting the generalizability of your findings [41] [42]. Its use in a regulatory context would require strong justification, and it is generally not suitable for definitive design validation studies [44].

Q3: How does my research goal influence the choice of sampling technique?

- A: The research goal is a primary determinant for selecting a sampling method.

- If the goal is to generalize findings to a larger population (e.g., in a clinical trial or a survey), a probability-based method like simple random, stratified, or cluster sampling is necessary [45] [46].

- If the goal is exploratory research or to gain deep, qualitative insights into a specific phenomenon, non-probability methods like purposive or theoretical sampling are more appropriate [40] [47] [43].

- For studying hard-to-reach or hidden populations (e.g., specific patient support groups), snowball sampling is often the most practical technique [41] [42] [46].

Q4: What is data saturation in qualitative research and how does it relate to sample size?

- A: Data saturation is the guiding principle for determining sample size in qualitative research. It is the point at which collecting new data no longer yields new analytical information or insights but instead becomes redundant [47]. Sample size is not predetermined by a statistical formula but emerges during the study. The researcher continues to collect data—through interviews or observations—until saturation is achieved, ensuring the findings are rich and comprehensive [47] [43].
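One way to make the saturation judgment auditable is to log the codes (themes) identified in each interview and track how many of them are new. The sketch below is illustrative only: the function name `saturation_point`, the theme labels, and the stopping rule (three consecutive interviews with no new codes) are assumptions, not a standard taken from the cited sources.

```python
def saturation_point(codes_per_interview, window=3):
    """Return the 1-based interview index at which saturation is reached.

    Saturation is declared (by assumption) once `window` consecutive
    interviews contribute no previously unseen codes. Returns None if
    that never happens in the data supplied.
    """
    seen = set()
    run = 0  # consecutive interviews that added no new codes
    for index, codes in enumerate(codes_per_interview, start=1):
        new_codes = set(codes) - seen
        seen |= new_codes
        run = 0 if new_codes else run + 1
        if run >= window:
            return index
    return None


# Hypothetical coded interviews (theme labels are made up).
interviews = [
    {"cost", "access"},
    {"access", "trust"},
    {"trust", "privacy"},
    {"cost"},
    {"privacy", "access"},
    {"trust"},
]
print(saturation_point(interviews))
```

A count like this supports, but does not replace, the researcher's judgment: the choice of window and the granularity of the codes both affect when the rule fires.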

Troubleshooting Guides

Issue 1: Sampling Bias in Data Collection

Problem: The collected data does not accurately represent the target population, leading to skewed results and incorrect conclusions. A classic example is the 1948 U.S. presidential election telephone survey, which disproportionately sampled wealthy individuals and led to an incorrect prediction [41].

Solution:

- Use Probability Sampling: Employ methods like simple random or stratified sampling to ensure every population member has a known chance of selection, thereby minimizing selection bias [45] [48].

- Ensure a Robust Sampling Frame: Work from a complete and accurate list of all individuals in your target population. An incomplete frame automatically introduces bias [45] [46].

- Minimize Non-Response Bias: Actively encourage participation from all selected members. If certain groups are consistently non-responsive, their perspectives will be missing from your data [45].

- Justify Non-Probability Methods: If using non-probability sampling is unavoidable, transparently document the rationale and acknowledge the potential limitations on generalizability in your research report [44] [43].

Issue 2: Determining a Statistically Justified Sample Size

Problem: A sample size that is too small may lack the power to detect a meaningful effect, while an overly large sample wastes resources. Regulatory bodies like the FDA require a written statistical rationale for the sample size used [44] [49].

Solution:

- For Quantitative Studies: Use established statistical formulas that incorporate key parameters. For a simple random sample, the required size can be calculated as [45]:

$$n = \frac{Z^2 \, p \, (1 - p)}{E^2}$$

Where:

- $n$ = required sample size

- $Z$ = Z-value for your desired confidence level (e.g., 1.96 for 95%)

- $p$ = estimated proportion in the population (use 0.5 for maximum variability)

- $E$ = acceptable margin of error (e.g., 0.05 for ±5%)

- Link to Risk Assessment: For design verification and validation in regulatory research, align your sample size with your risk management file. Higher-risk scenarios typically demand higher confidence levels and reliability, which in turn require larger sample sizes [44].

- For Qualitative Studies: Plan to collect data until you reach data saturation, where no new themes or information emerge from additional interviews or observations [47].
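For the quantitative case, the simple random sample formula given above can be scripted so the calculation is reproducible in a written statistical rationale. This is a minimal sketch using only the Python standard library; the function name `sample_size_for_proportion` is hypothetical, and the calculation assumes a large population (no finite-population correction).

```python
import math
from statistics import NormalDist


def sample_size_for_proportion(confidence=0.95, p=0.5, margin=0.05):
    """Minimum n for estimating a proportion from a simple random sample.

    Implements n = Z^2 * p * (1 - p) / E^2, rounded up to a whole unit.
    """
    if not (0 < confidence < 1 and 0 < p < 1 and margin > 0):
        raise ValueError("confidence and p must be in (0, 1); margin must be > 0")
    # Two-sided Z-value, e.g. about 1.96 for 95% confidence.
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return math.ceil(z ** 2 * p * (1 - p) / margin ** 2)


print(sample_size_for_proportion())             # 95% confidence, p = 0.5, E = 0.05
print(sample_size_for_proportion(margin=0.03))  # a tighter margin needs more units
```

With the defaults (95% confidence, p = 0.5, E = 0.05) the formula gives 384.1, which rounds up to 385 participants.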

Issue 3: Choosing Between Different Probability Sampling Methods

Problem: Uncertainty about which probability sampling method is most appropriate for a specific study context.

Solution: Refer to the following decision workflow to guide your selection:

Experimental Protocols & Methodologies

Protocol 1: Implementing a Stratified Random Sample

Objective: To obtain a sample that accurately represents key subgroups (strata) within a population.

Materials: A defined sampling frame (complete list of the population), data on the stratifying variable(s) for all units in the frame, random number generator.

Procedure:

- Define Strata: Identify the key characteristics (e.g., age groups, disease severity, clinical sites) that are critical to your research question. These will form your strata [42] [45].

- Divide the Population: Separate every unit in your sampling frame into the predefined strata [46].

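- Determine Allocation: Decide on the number of units to select from each stratum. This can be proportionate (each stratum is sampled in proportion to its share of the population) or disproportionate (for example, oversampling a small stratum so it can be analyzed on its own, with weights applied at analysis).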

- Random Selection: Within each stratum, use a simple random sampling method (e.g., computer-generated random numbers) to select the predetermined number of units [42] [48].

- Combine: Combine the selected units from all strata to form your final research sample.
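The procedure above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a validated tool: the function name `stratified_sample`, the `stratum_of` callback, and the example frame are hypothetical; allocation is proportionate; and because each stratum's share is rounded, the final total can differ slightly from the requested size.

```python
import random
from collections import defaultdict


def stratified_sample(frame, stratum_of, total_n, seed=2025):
    """Draw a proportionately allocated stratified random sample.

    frame      -- list of all units in the sampling frame
    stratum_of -- function mapping a unit to its stratum label
    total_n    -- target overall sample size (approximate after rounding)
    seed       -- recorded seed so the draw can be reproduced
    """
    rng = random.Random(seed)

    # Divide the population into strata.
    strata = defaultdict(list)
    for unit in frame:
        strata[stratum_of(unit)].append(unit)

    sample = []
    for label in sorted(strata):
        units = strata[label]
        # Proportionate allocation, with at least one unit per stratum.
        k = max(1, round(total_n * len(units) / len(frame)))
        k = min(k, len(units))
        # Simple random selection without replacement within the stratum.
        sample.extend(rng.sample(units, k))
    return sample


# Hypothetical frame: 100 participants across three clinical sites.
frame = [("site_A", i) for i in range(60)]
frame += [("site_B", i) for i in range(30)]
frame += [("site_C", i) for i in range(10)]

chosen = stratified_sample(frame, stratum_of=lambda unit: unit[0], total_n=20)
print(len(chosen))
```

Recording the seed alongside the sampling frame version lets an auditor regenerate exactly the same selection.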

Protocol 2: Implementing a Purposive Sample for a Qualitative Study

Objective: To intentionally select individuals or cases that are information-rich due to their specific knowledge or experience with the phenomenon of interest [47] [43].

Materials: Predefined inclusion criteria based on research objectives, a method for identifying and accessing potential participants.

Procedure:

- Define Criteria: Clearly articulate the specific experiences, characteristics, or knowledge that a participant must possess to be included in the study [43].

- Identify Potential Participants: Use your network, institutional records, or preliminary surveys to locate individuals who meet the criteria [47].

- Select Participants: Use your judgment to choose participants who best fit the criteria and are likely to provide rich, relevant data. This may involve seeking maximum variation in experiences or focusing on typical cases [47].

- Document Rationale: Keep a clear record of why each participant was selected, linking them to the research question and inclusion criteria. This transparency is crucial for the study's credibility [43].

- Iterate if Necessary: In approaches like grounded theory, the sampling continues iteratively alongside data analysis (theoretical sampling), where new participants are selected to help develop emerging theoretical concepts [47] [43].

Data Presentation: Sampling Plan Tables

The U.S. Food and Drug Administration (FDA) provides sampling tables for inspections, which illustrate the relationship between sample size, confidence level, and the maximum number of allowable defects. These principles can be adapted for quality review in research.

Table 1: Sampling Plan for 95% Confidence Level (Adapted from FDA Guidance) [49]

| Plan | Maximum Allowable Defect Rate | Sample Size for 0 Defects | Sample Size for 1 Defect | Sample Size for 2 Defects |
|---|---|---|---|---|
| A | 30% | 11 | 17 | 22 |
| B | 25% | 13 | 20 | 27 |
| C | 20% | 17 | 26 | 34 |
| D | 15% | 23 | 35 | 46 |
| E | 10% | 35 | 52 | 72 |
| F | 5% | 72 | 115 | 157 |

Table 2: Sampling Plan for 99% Confidence Level (Adapted from FDA Guidance) [49]

| Plan | Maximum Allowable Defect Rate | Sample Size for 0 Defects | Sample Size for 1 Defect | Sample Size for 2 Defects |
|---|---|---|---|---|
| A | 30% | 15 | 22 | 27 |
| B | 25% | 19 | 27 | 34 |
| C | 20% | 24 | 34 | 43 |
| D | 15% | 35 | 47 | 59 |
| E | 10% | 51 | 73 | 90 |
| F | 5% | 107 | 161 | 190 |
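To see how sample size, confidence level, and allowable defects interact, it can help to compute the underlying binomial probabilities directly. The sketch below is not the method used to build the FDA tables; it assumes a simple single-stage binomial model, and the function names are hypothetical. `acceptance_probability` gives the chance of seeing no more than the allowed number of defects when the true defect rate sits at the stated limit, and `min_zero_defect_sample` gives the smallest single-stage, zero-defect sample for a given confidence level.

```python
import math


def acceptance_probability(n, max_defects, defect_rate):
    """P(observing <= max_defects defects among n records) at a true defect rate."""
    return sum(
        math.comb(n, k) * defect_rate ** k * (1 - defect_rate) ** (n - k)
        for k in range(max_defects + 1)
    )


def min_zero_defect_sample(confidence, defect_rate):
    """Smallest n with (1 - defect_rate)^n <= 1 - confidence (single-stage plan)."""
    return math.ceil(math.log(1 - confidence) / math.log(1 - defect_rate))


# Plan A at 95% confidence: 11 records, 0 defects, defect-rate limit of 30%.
print(round(acceptance_probability(11, 0, 0.30), 4))
print(min_zero_defect_sample(0.95, 0.30))
```

For Plan A at 95% confidence, the probability of passing with zero defects when the true rate is 30% is about 0.02, comfortably below the 0.05 implied by the confidence level. The single-stage minimum under this model is 9 records, so the tabulated value of 11 is larger than this simple calculation requires; treat the sketch as a way to reason about the tables, not to regenerate them.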

Table 3: Recommended Qualitative Sample Size Estimates by Methodology [47]

| Qualitative Methodology | Typical Data Collection Estimate | Key Determinant of Final Size |
|---|---|---|
| Ethnography | 25-50 interviews & observations | Data Saturation |
| Phenomenology | Fewer than 10 interviews | Data Saturation |
| Grounded Theory | 20-30 interviews | Data Saturation & Theoretical Saturation |
| Content Analysis | 15-20 interviews or 3-4 focus groups | Data Saturation |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools for Sampling and Sample Size Determination

| Tool / Resource | Function in Research | Example / Note |
|---|---|---|
| Random Number Generator | Selects participants without bias for simple random and systematic sampling. | Use seeded, computer-based pseudo-random generators (e.g., in R, SPSS) and record the seed so the selection can be reproduced; avoid manual methods. |
| Sampling Frame | A complete list of all units in the target population from which a sample is drawn. | A patient registry, a list of all manufacturing lots, a university's student directory [45] [46]. |
| Sample Size Calculator | Software or formulas to determine the minimum number of participants needed. | G*Power, R, or online calculators that use inputs like effect size, power, and alpha [45]. |
| Statistical Software (e.g., R, SPSS) | Performs complex sample size calculations and analyzes data from complex sampling designs. | Essential for calculating power for advanced designs and for analyzing stratified or cluster sample data. |
| Confidence & Reliability Table | Provides a statistically valid sample size for verification/validation studies, often with zero-failure plans. | FDA sampling tables are a key example; used extensively in medical device and manufacturing research [44] [49]. |

This technical support center provides practical guidance for researchers, scientists, and drug development professionals navigating data ethics within regulatory frameworks. The following FAQs and troubleshooting guides address implementation challenges for the 5Cs of Data Ethics—Consent, Collection, Control, Confidentiality, and Compliance—to ensure your research meets ethical standards while advancing scientific discovery [50].

Frequently Asked Questions (FAQs)

1. What constitutes valid informed consent for retrospective data use in regulatory research? Valid informed consent requires clarity about data usage purposes. For retrospective studies using existing datasets, consent is valid if individuals were initially informed that their data could be used for future research and provided voluntary agreement. If the new research purpose differs significantly, re-consent may be necessary unless the data is fully anonymized and ethics board approval is obtained [51] [52].

2. How can we ensure data collection practices are ethically sound? Apply the principle of data minimization: collect only what is strictly necessary for your specific research purpose [50]. Implement transparent protocols explaining what data is collected and why [53]. Secure data through encryption and access controls from the point of collection, and conduct regular audits to maintain standards [54] [50].

3. What technical methods effectively give subjects control over their data? Implement technical systems that allow data subjects to access, review, correct, and request deletion of their information [50]. Create granular privacy preferences rather than all-or-nothing choices, and establish automated workflows to process deletion requests across all data stores while maintaining comprehensive audit trails [51].

4. How do we maintain confidentiality when sharing data with regulators? Use robust de-identification techniques that minimize re-identification risk [55]. Apply differential privacy or synthetic data generation for analysis, and establish clear data sharing agreements that define usage boundaries. Implement strong encryption for data in transit and at rest, particularly for sensitive information like genetic data [56] [54].
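As an illustration of the differential privacy option mentioned above, the sketch below adds Laplace noise to a single count before it is shared. It is a teaching example under stated assumptions: the function name `noisy_count` is hypothetical, each participant is assumed to change the count by at most 1 (sensitivity 1), and Python's `random` module is not cryptographically secure, so a real submission would more likely rely on a vetted differential-privacy library.

```python
import random


def noisy_count(true_count, epsilon, rng=None):
    """Release a count with Laplace noise of scale 1/epsilon.

    Smaller epsilon means more noise and stronger privacy protection.
    Assumes the query has sensitivity 1 (one person shifts the count by <= 1).
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    # The difference of two independent exponential draws with rate epsilon
    # follows a Laplace distribution with scale 1/epsilon.
    noise = rng.expovariate(epsilon) - rng.expovariate(epsilon)
    return true_count + noise


rng = random.Random(42)  # fixed seed only so the demonstration is repeatable
print(noisy_count(128, epsilon=0.5, rng=rng))
```

Each released statistic consumes part of the privacy budget, so the number of queries answered from the same dataset should be tracked alongside the chosen epsilon.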