The V3 Framework: A Comprehensive Guide to Verification and Validation for Digital Medicine Products


Abstract

This article provides researchers, scientists, and drug development professionals with a detailed exploration of the Verification, Analytical Validation, and Clinical Validation (V3) framework for digital medicine products. It covers foundational principles, from distinguishing key terminology to establishing fit-for-purpose, and guides readers through methodological application across preclinical and clinical contexts. The content addresses common troubleshooting challenges, including cybersecurity, regulatory compliance, and audit readiness, while also examining advanced validation strategies for AI-driven tools and comparative analysis with traditional biomarkers. By synthesizing current best practices and emerging 2025 trends, this guide aims to equip professionals with the knowledge to build robust evidence for digital measures, enhance regulatory submissions, and accelerate the development of reliable digital health technologies.

Building the Bedrock: Core Principles of the V3 Framework

In the rapidly evolving field of digital medicine, the Verification, Analytical Validation, and Clinical Validation (V3) framework has emerged as the foundational model for evaluating sensor-based digital health technologies (sDHTs). Established by the Digital Medicine Society (DiMe), this modular approach provides a structured methodology for assessing whether digital clinical measures are "fit-for-purpose" across technical, scientific, and clinical dimensions [1]. Since its dissemination in 2020, the V3 framework has been accessed over 30,000 times, cited more than 250 times in peer-reviewed literature, and leveraged by more than 140 teams, including agencies such as the NIH, FDA, and EMA [1]. The framework lays out a systematic process for evaluating the quality of sensors (verification), the performance of algorithms (analytical validation), and the clinical relevance of the outcome measures (clinical validation) generated by digital health tools [1].

The framework's significance has grown alongside the expanding adoption of digital health technologies in clinical research and care. Between 2019 and 2024, the industry witnessed a 10-fold increase in sDHT-derived measures adopted in industry-sponsored interventional trials [2]. The first pivotal trial using a digital measure as an FDA-endorsed primary endpoint was reported in 2023, marking a critical milestone in the field's maturation [2]. More recently, the V3 framework has been adapted for new applications including digital twins for precision medicine and preclinical research, demonstrating its versatility and enduring relevance [3] [4] [5].

Core Components of the V3 Framework

Detailed Definitions and Comparisons

The V3 framework decomposes the evaluation of digital health technologies into three distinct but interconnected processes. The table below summarizes the key focus areas and methodological approaches for each component.

Table 1: The Three Core Components of the V3 Framework

| Component | Primary Focus | Key Questions Answered | Common Methodologies |
|---|---|---|---|
| Verification | Technical performance of sensors and hardware | Does the technology reliably capture and store high-quality raw data? | Engineering tests, performance characterization, sensor calibration [1] [5] |
| Analytical Validation | Performance of data processing algorithms | Does the algorithm accurately transform raw data into meaningful metrics? | Precision/repeatability tests, comparison against reference standards, triangulation approaches [1] [5] |
| Clinical Validation | Clinical relevance of the derived measures | Does the measure meaningfully reflect the targeted biological or clinical state? | Clinical trials, observational studies, correlation with clinical outcomes [1] [4] |

Verification

Verification establishes the integrity of the raw data collection process, confirming that sensors correctly capture and store source data without corruption or significant technical error [5]. In practice, verification involves a series of technical checks throughout data collection. For example, in computer vision systems, verification would include assuring proper illumination, maintaining contrast between subjects and backgrounds, and confirming that sensors record events from the correct sources with precise timestamps [5]. This process serves as a fundamental quality assurance step, ensuring consistent, uncorrupted data collection within the intended period and conditions [5]. It matches the standard quality-management definition of verification: confirmation, through the provision of objective evidence, that specified characteristics have been fulfilled [6].
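One of the checks described above, confirming that samples arrive with precise timestamps and without gaps, can be sketched in a few lines. This is a minimal illustration rather than a method from the cited sources: the function name `verify_timestamps`, the `gap_factor` threshold, and the report fields are all assumptions chosen for the example.

```python
from statistics import median


def verify_timestamps(timestamps_s, expected_hz, gap_factor=1.5):
    """Compare recorded sample times with a sensor's nominal sampling rate.

    timestamps_s: sample times in seconds, in recording order.
    expected_hz:  nominal sampling frequency from the device specification.
    gap_factor:   hypothetical threshold; an interval longer than
                  gap_factor nominal periods is reported as a dropout.
    """
    if len(timestamps_s) < 2:
        raise ValueError("need at least two samples")

    period = 1.0 / expected_hz
    intervals = [later - earlier
                 for earlier, later in zip(timestamps_s, timestamps_s[1:])]

    # Timestamps that repeat or run backwards point to clock or logging faults.
    out_of_order = sum(1 for dt in intervals if dt <= 0)

    # Each dropout is reported as (time of last good sample, gap length).
    dropouts = [(timestamps_s[i], dt)
                for i, dt in enumerate(intervals) if dt > gap_factor * period]

    # Completeness: samples received versus samples expected over the span.
    duration = timestamps_s[-1] - timestamps_s[0]
    expected_samples = max(1, round(duration * expected_hz) + 1)
    typical_interval = median(intervals)

    return {
        "observed_hz": 1.0 / typical_interval if typical_interval > 0 else None,
        "out_of_order": out_of_order,
        "dropouts": dropouts,
        "completeness": min(1.0, len(timestamps_s) / expected_samples),
    }


# A 10 Hz stream with four samples missing between 0.3 s and 0.8 s.
report = verify_timestamps([i / 10 for i in (0, 1, 2, 3, 8, 9, 10)], expected_hz=10)
print(report)
```

A real verification plan would take its acceptance thresholds from the device specification rather than from defaults like these.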

Analytical Validation

Analytical validation assesses whether the quantitative metrics generated by algorithms accurately represent the captured events with appropriate precision and resolution [5]. This stage often presents unique challenges, as digital technologies frequently measure biological events with greater temporal precision than traditional "gold standard" methods, and in some cases, no direct comparator exists for novel endpoints [5]. To address this, researchers employ triangulation approaches that integrate multiple lines of evidence: biological plausibility, comparison to available reference standards, and direct observation of measurable outputs [5]. For instance, analytical validation might involve comparing computer vision-derived respiratory rates with plethysmography data or assessing digital locomotion measures against manual observations [5]. Successful analytical validation requires collaboration between machine learning scientists and domain experts to establish clear definitions ensuring digital measures accurately reflect biological phenomena [5].
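When a reference method is available, such as plethysmography for respiratory rate, paired measurements can be summarized with an agreement analysis. The sketch below uses Bland-Altman bias and 95% limits of agreement, which is one common choice and not one prescribed by the sources cited here. The function name and the sample values are illustrative assumptions.

```python
from statistics import mean, stdev


def bland_altman(digital, reference):
    """Return (bias, lower limit, upper limit) for paired measurements.

    bias is the mean of (digital - reference); the limits of agreement
    are bias +/- 1.96 sample standard deviations of the differences.
    """
    if len(digital) != len(reference):
        raise ValueError("measurements must be paired")
    if len(digital) < 2:
        raise ValueError("need at least two pairs")

    differences = [d - r for d, r in zip(digital, reference)]
    bias = mean(differences)
    spread = 1.96 * stdev(differences)
    return bias, bias - spread, bias + spread


# Hypothetical breaths-per-minute readings, invented for illustration only.
camera_rate = [10, 12, 14, 16]
plethysmography_rate = [9, 12, 13, 17]
bias, lower, upper = bland_altman(camera_rate, plethysmography_rate)
print(f"bias={bias:.2f}, limits of agreement=({lower:.2f}, {upper:.2f})")
```

Whether limits of agreement this wide are acceptable depends on the context of use, so the acceptance criterion should be fixed before the data are collected.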

Clinical Validation

Clinical validation determines whether a digital measure is biologically meaningful and relevant to health or disease states within a specific research context [4] [5]. This component confirms that the measure adequately identifies, measures, or predicts a meaningful clinical, biological, physical, or functional state in the specified context of use, including the specific patient population [2]. For example, locomotor activity data in a toxicology study may serve as a relevant biomarker for assessing drug-induced central nervous system effects [5]. Clinical validation builds upon analytical validation by demonstrating that digital measures provide insights that are both interpretable and actionable within the intended research or clinical setting [5]. It confirms through objective evidence that requirements for a specific intended purpose have been fulfilled [6].

The V3 Workflow and Logical Relationships

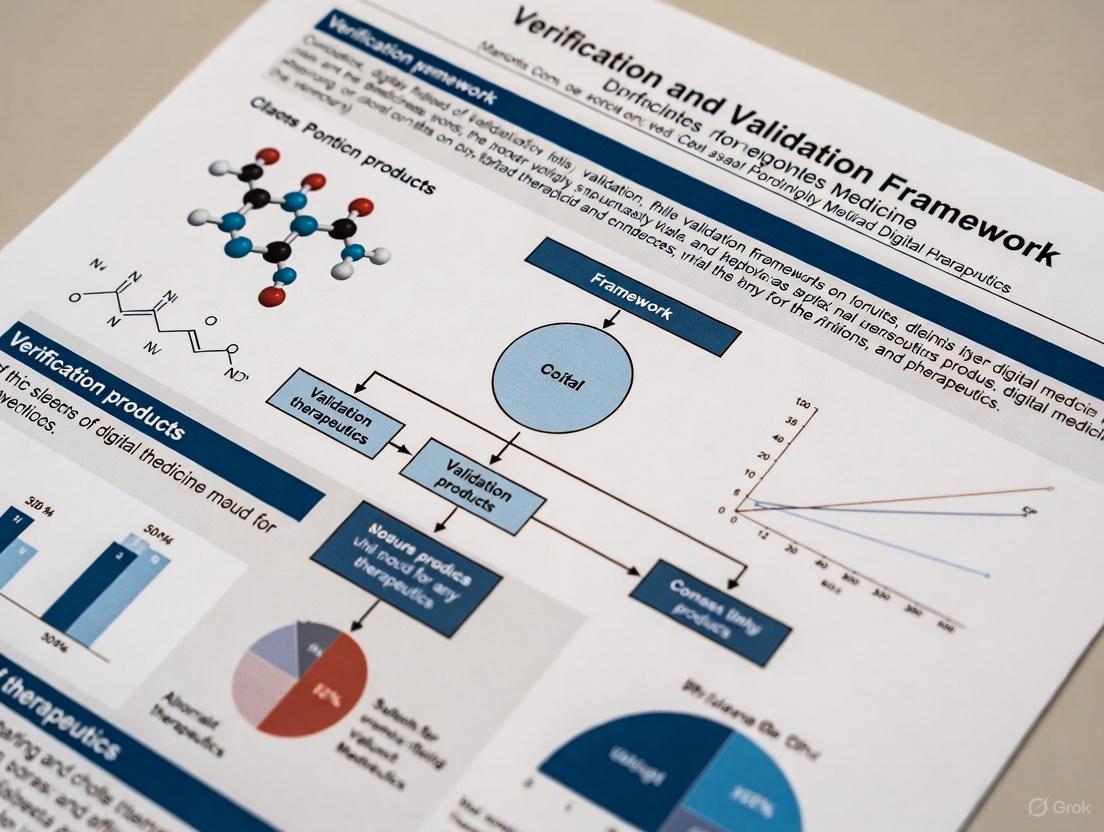

The following diagram illustrates the sequential relationship between the three V3 components and their role in establishing confidence in digital measures.

V3 Framework Validation Workflow

Evolution and Extensions of the V3 Framework

The V3+ Framework: Incorporating Usability Validation

As digital health technologies have matured, the original V3 framework has been extended to address implementation challenges at scale. The V3+ framework introduces a fourth critical component: usability validation [2]. This addition addresses the challenges of implementing sDHTs across diverse populations, settings, and methodological approaches, which have become pressing concerns as these technologies scale [2]. For example, one study reported that tremor classification data were missing for 50% of participants due to the inadvertent deactivation of device permissions, a problem that might have been prevented with more extensive usability testing [2].

The usability validation component consists of four key activities: (1) developing a use specification describing user groups and interaction patterns; (2) conducting a use-related risk analysis; (3) performing iterative formative evaluation of sDHT prototypes; and (4) executing a summative usability evaluation to confirm that intended users can safely and effectively use the sDHT [2]. This extension recognizes that even technically perfect digital measures fail if users cannot or will not implement them correctly in real-world settings.

Adaptation for Preclinical Research: The In Vivo V3 Framework

The V3 framework has also been adapted for preclinical contexts through the In Vivo V3 Framework, which tailors the original concepts to the unique requirements of animal research [4] [5]. This adaptation specifically addresses challenges unique to preclinical research, such as the need for sensor verification in variable environments and analytical validation that ensures data outputs accurately reflect intended physiological or behavioral constructs in animal models [4]. The framework emphasizes replicability across species and experimental setups—an aspect critical due to the inherent variability in animal models [4].

This adaptation strengthens the line of sight between preclinical and clinical drug development efforts by applying consistent validation principles across both domains [4]. For example, in Jackson Laboratory's Envision platform, the preclinical V3 framework ensures confidence in digital measures of animal behavior and physiology through rigorous verification of computer vision sensors, analytical validation of behavioral algorithms, and clinical validation establishing the biological relevance of these measures [5].

Expansion to Digital Twins: The VVUQ Framework

For digital twins in precision medicine, the framework has been expanded to Verification, Validation, and Uncertainty Quantification (VVUQ) [3]. This extension emphasizes the formal process of tracking uncertainties throughout model calibration, simulation, and prediction—a critical consideration for dynamic computational models that are regularly updated with new patient data [3]. These uncertainties can be epistemic (e.g., incomplete knowledge of how specific genetic mutations affect drug effectiveness) or aleatoric (e.g., natural variabilities not captured by the model) [3].
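The distinction between epistemic and aleatoric uncertainty can be made concrete with a Monte Carlo sketch. The model below is a toy, not one from the cited work: the exponential dose-response form, the parameter distribution for `sensitivity`, and the noise level are all invented for illustration.

```python
import math
import random
from statistics import mean, quantiles


def propagate_uncertainty(dose, n_draws=5000, seed=0):
    """Monte Carlo propagation through a toy dose-response model.

    Returns (mean prediction, 2.5th percentile, 97.5th percentile).
    All numerical values are hypothetical.
    """
    rng = random.Random(seed)  # fixed seed keeps the run reproducible
    predictions = []
    for _ in range(n_draws):
        # Epistemic: the drug-sensitivity parameter is not known exactly.
        sensitivity = rng.gauss(0.30, 0.05)
        # Aleatoric: natural variability the model does not capture.
        noise = rng.gauss(0.0, 2.0)
        predictions.append(100.0 * math.exp(-sensitivity * dose) + noise)

    # n=40 yields 39 cut points in 2.5% steps; the first and last
    # bound the central 95% of the simulated predictions.
    cuts = quantiles(predictions, n=40)
    return mean(predictions), cuts[0], cuts[-1]


center, low, high = propagate_uncertainty(dose=2.0)
print(f"prediction {center:.1f} (95% interval {low:.1f} to {high:.1f})")
```

Setting the noise term to zero and rerunning shows how much of the interval comes from parameter uncertainty alone, which is the part that additional patient data could in principle reduce.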

The VVUQ framework is particularly relevant for digital twins in cardiology and oncology, where computational models simulate patient-specific trajectories and interventions [3]. For instance, cardiac electrophysiological models incorporating CT scans enable simulations of heart electrical behavior at the individual level, aiding in diagnosing arrhythmias such as atrial fibrillation [3]. The continuous updates and bidirectional data flow in digital twins raise new validation challenges, as these systems require more flexible and iterative temporal validation approaches compared to traditional modeling [3].

Table 2: Evolution of the V3 Framework Across Applications

| Framework Version | Core Components | Primary Context | Key Innovations |
|---|---|---|---|
| Original V3 | Verification, Analytical Validation, Clinical Validation | Clinical sDHTs | Foundational modular approach for evaluating digital measures [1] |
| V3+ | Adds Usability Validation | Clinical sDHTs at scale | Addresses human factors and implementation challenges [2] |
| In Vivo V3 | Adaptation of V3 components | Preclinical animal research | Tailored for unique challenges of animal models and translational research [4] [5] |
| VVUQ | Verification, Validation, Uncertainty Quantification | Digital twins for precision medicine | Adds formal uncertainty quantification for dynamic computational models [3] |

Experimental Protocols and Methodologies

Standardized Experimental Approaches for V3 Validation

Implementing the V3 framework requires specific methodological approaches for each component. The table below summarizes common experimental protocols employed at each validation stage.

Table 3: Experimental Protocols for V3 Framework Implementation

| V3 Component | Experimental Protocols | Key Metrics | Data Collection Methods |
|---|---|---|---|
| Verification | Sensor calibration tests, Environmental stress testing, Data integrity checks | Signal-to-noise ratio, Sampling frequency accuracy, Data completeness, Dropout rates | Engineering bench tests, Controlled environment testing, Data logging verification [5] [6] |
| Analytical Validation | Algorithm precision/repeatability tests, Comparison against reference standards, Cross-validation approaches | Precision, Recall, F1 scores, AUC-ROC, Agreement statistics (ICC, Kappa) | Paired measurements with reference standards, Split-sample validation, Computational simulations [5] |
| Clinical Validation | Prospective observational studies, Clinical trials, Correlation with clinical outcomes | Sensitivity, Specificity, PPV/NPV, Effect sizes, Correlation coefficients | Clinical grade assessments, Patient-reported outcomes, Longitudinal monitoring [2] [4] |
| Usability Validation (V3+) | Formative evaluations, Summative usability testing, Use-related risk analysis | Task success rates, Error rates, Time on task, SUS scores | Expert heuristic reviews, User testing with representative participants, Simulated use studies [2] |
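Of the usability metrics listed in the table, the System Usability Scale (SUS) score has a fixed scoring rule that is easy to get wrong by hand. The sketch below applies the standard rule for the ten-item questionnaire; the function name and the example responses are illustrative.

```python
def sus_score(responses):
    """Score one participant's System Usability Scale questionnaire.

    responses: ten integers from 1 (strongly disagree) to 5 (strongly
    agree), in questionnaire order. Odd-numbered items are positively
    worded and contribute (response - 1); even-numbered items are
    negatively worded and contribute (5 - response). The sum is
    scaled by 2.5 to give a score between 0 and 100.
    """
    if len(responses) != 10:
        raise ValueError("SUS has exactly ten items")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("responses must be integers from 1 to 5")

    total = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += response - 1
        else:
            total += 5 - response
    return total * 2.5


print(sus_score([5, 1, 5, 1, 5, 1, 5, 1, 5, 1]))  # 100.0, the best possible score
print(sus_score([3] * 10))                        # 50.0, all-neutral answers
```

Scores are usually averaged across participants, and a single SUS figure says nothing about which tasks caused difficulty, so it complements rather than replaces task success and error rates.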

Methodological Details for Key Experiments

Verification Protocols for Sensor Systems

Verification of sensor systems involves a comprehensive testing protocol to ensure reliable data capture across anticipated operating conditions. For computer vision-based systems like those used in digital phenotyping, this includes illumination testing to verify performance across different lighting conditions, contrast validation to ensure adequate distinction between subjects and background, and temporal synchronization checks to confirm accurate timestamping across distributed sensor networks [5]. Additional verification steps include spatial calibration using standardized reference objects and data integrity checks to detect corruption or loss during transmission and storage [5]. These protocols establish objective evidence that the sensors fulfill their specified technical requirements before progressing to analytical validation [6].
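The data integrity checks mentioned above are often implemented by recording a cryptographic digest at capture time and recomputing it after transmission and storage. The following is a minimal sketch of that idea using SHA-256 from the Python standard library. The file names, the in-memory dictionaries standing in for storage, and the function names are assumptions made for the example.

```python
import hashlib


def build_manifest(captured_files):
    """Record a SHA-256 digest for each file at the point of capture.

    captured_files: mapping of file name to raw bytes.
    """
    return {name: hashlib.sha256(data).hexdigest()
            for name, data in captured_files.items()}


def find_integrity_failures(manifest, stored_files):
    """Compare stored files with the capture-time manifest.

    Returns a mapping of file name to "missing" or "corrupted";
    an empty mapping means every file arrived intact.
    """
    failures = {}
    for name, expected_digest in manifest.items():
        if name not in stored_files:
            failures[name] = "missing"
        elif hashlib.sha256(stored_files[name]).hexdigest() != expected_digest:
            failures[name] = "corrupted"
    return failures


# Hypothetical recording chunks; one is altered and one is lost in transit.
captured = {"cam01_0001.bin": b"\x00\x01", "cam01_0002.bin": b"\x02\x03",
            "cam01_0003.bin": b"\x04\x05"}
manifest = build_manifest(captured)
stored = {"cam01_0001.bin": b"\x00\x01", "cam01_0002.bin": b"\x02\xff"}
print(find_integrity_failures(manifest, stored))
```

Keeping the manifest separate from the data it describes matters in practice, since a manifest stored alongside a corrupted file may be damaged in the same event.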

Analytical Validation for AI/ML Algorithms

For AI/ML algorithms processing sensor data, analytical validation employs a tiered approach. Precision and repeatability testing involves measuring the same phenomenon multiple times under identical conditions to quantify variability [5]. Comparison against reference standards benchmarks algorithm outputs against established measurement approaches, though this presents challenges when digital measures capture phenomena with greater resolution than traditional methods [5]. When no direct reference standard exists, researchers employ triangulation approaches using multiple indirect comparators to build confidence in algorithm performance [5]. For instance, validation of a novel locomotion measure might involve comparison against manual scoring, agreement with alternative sensor modalities, and demonstration of expected biological responses to known stimuli [5].
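Comparison against manual scoring, as in the locomotion example above, is commonly summarized with a chance-corrected agreement statistic. The sketch below computes Cohen's kappa for two sets of categorical labels. The behavior labels and the function name are illustrative assumptions, and a real study would also report a confidence interval.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two label sequences.

    kappa = (p_observed - p_expected) / (1 - p_expected), where
    p_expected is the agreement expected if both raters assigned
    labels independently at their own observed rates.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty, equally long label lists")

    n = len(labels_a)
    p_observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    all_labels = set(counts_a) | set(counts_b)
    p_expected = sum(counts_a[label] * counts_b[label]
                     for label in all_labels) / (n * n)

    if p_expected == 1.0:
        # Both raters used one identical label throughout; kappa is
        # undefined, so perfect agreement is reported by convention.
        return 1.0
    return (p_observed - p_expected) / (1.0 - p_expected)


# Hypothetical per-epoch labels from an algorithm and a human scorer.
algorithm = ["walk", "walk", "rest", "rest"]
manual = ["walk", "rest", "rest", "rest"]
print(cohens_kappa(algorithm, manual))  # 0.5
```

Raw percent agreement here would be 75%, which overstates performance because two raters guessing at these label rates would already agree half the time.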

Clinical Validation Study Designs

Clinical validation requires study designs that establish the relationship between digital measures and meaningful clinical states. Target population definition precisely specifies the intended patient cohort and context of use [4]. Clinical reference standard application involves blinded assessment using accepted clinical measures or diagnostic criteria [2]. Longitudinal tracking demonstrates that digital measures capture clinically relevant changes over time, such as disease progression or treatment response [4]. For regulatory endorsement as clinical trial endpoints, digital measures must additionally demonstrate reliability, responsiveness to change, and interpretability in the context of therapeutic decision-making [2]. Successful clinical validation provides the evidence that the digital measure adequately identifies or predicts the targeted clinical state in the specified context of use [4].
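When the clinical reference standard is binary, the relationship between a digital measure and the clinical state is usually reported as sensitivity, specificity, and predictive values. The sketch below derives them from paired classifications. The function name and the example data are assumptions for illustration only.

```python
def diagnostic_accuracy(predicted, reference):
    """Sensitivity, specificity, PPV and NPV from paired binary labels.

    predicted: digital-measure classifications (True/1 = condition present).
    reference: blinded clinical reference standard for the same participants.
    A metric whose denominator is zero is returned as None.
    """
    if len(predicted) != len(reference):
        raise ValueError("classifications must be paired")

    pairs = [(bool(p), bool(r)) for p, r in zip(predicted, reference)]
    tp = sum(1 for p, r in pairs if p and r)
    fp = sum(1 for p, r in pairs if p and not r)
    tn = sum(1 for p, r in pairs if not p and not r)
    fn = sum(1 for p, r in pairs if not p and r)

    def ratio(numerator, denominator):
        return numerator / denominator if denominator else None

    return {
        "sensitivity": ratio(tp, tp + fn),  # true cases that were detected
        "specificity": ratio(tn, tn + fp),  # non-cases correctly cleared
        "ppv": ratio(tp, tp + fp),          # positive calls that were right
        "npv": ratio(tn, tn + fn),          # negative calls that were right
    }


# Hypothetical results for eight participants.
digital_calls = [1, 1, 0, 0, 1, 0, 0, 0]
clinician_calls = [1, 0, 0, 0, 1, 1, 0, 0]
print(diagnostic_accuracy(digital_calls, clinician_calls))
```

PPV and NPV depend on how common the condition is in the study sample, so they transfer to a new population only if its prevalence is similar.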

Experimental Workflow for Comprehensive V3 Evaluation

The following diagram illustrates the integrated experimental workflow for implementing the complete V3 framework, including key decision points and iterative processes.

V3 Experimental Validation Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Implementing the V3 framework requires specific methodological tools and approaches at each validation stage. The table below details essential "research reagent solutions" for digital medicine validation studies.

Table 4: Essential Research Reagents and Materials for V3 Implementation

| Tool Category | Specific Tools/Approaches | Primary Function | Application in V3 |
|---|---|---|---|
| Reference Standards | Certified measurement devices, Manual annotation by experts, Established clinical scales | Provide benchmark for comparison | Analytical validation (algorithm performance) and clinical validation (clinical relevance) [5] |
| Data Simulation Tools | Computational phantoms, Synthetic data generators, Model-based simulations | Create controlled test scenarios | Verification (sensor testing) and analytical validation (algorithm stress testing) [3] |
| Statistical Packages | Agreement statistics (ICC, Kappa), Classification metrics, Mixed-effects models | Quantify performance and relationships | All stages (quantitative assessment of verification, analytical, and clinical validation) [5] |
| Usability Assessment Tools | Heuristic evaluation frameworks, Task analysis protocols, System Usability Scale (SUS) | Evaluate human-technology interaction | Usability validation in V3+ framework [2] |
| Uncertainty Quantification Methods | Bayesian inference, Sensitivity analysis, Monte Carlo methods | Characterize and propagate uncertainties | VVUQ framework for digital twins [3] |

Comparative Analysis of Validation Frameworks Across Applications

The core V3 principles have been successfully adapted across diverse applications from clinical sDHTs to preclinical research and digital twins. The table below provides a comparative analysis of framework implementations across these domains.

Table 5: Framework Implementation Comparison Across Digital Medicine Applications

| Application Domain | Verification Focus | Analytical Validation Challenges | Clinical Validation Endpoints |
|---|---|---|---|
| Clinical sDHTs | Sensor performance in real-world environments, Data integrity during remote use | Comparison against clinical gold standards, Generalization across diverse populations | Clinical outcomes, Patient-reported outcomes, Functional status measures [1] [2] |
| Preclinical Digital Biomarkers | Sensor function in home-cage environments, Minimizing human interference | Developing appropriate reference standards for novel measures, Species-specific adaptations | Biological relevance, Translation to human conditions, Drug efficacy and safety [4] [5] |
| Digital Twins | Code verification, Mathematical model implementation | Validation across different patient subgroups, Temporal validation of updated models | Predictive accuracy for individual patients, Intervention outcome prediction [3] |

The V3 framework provides an essential structured approach for establishing confidence in digital medicine products, offering researchers and drug development professionals a systematic methodology for evaluating technologies across technical and clinical dimensions. Its core components—verification, analytical validation, and clinical validation—create a comprehensive evidence generation process that has become the de facto standard across the industry [1]. The framework's ongoing evolution through V3+ (adding usability validation) [2], preclinical adaptations [4] [5], and expansion to VVUQ for digital twins [3] demonstrates its flexibility and enduring relevance in a rapidly advancing field.

For researchers implementing digital measures in clinical trials or drug development pipelines, the V3 framework offers a rigorous yet practical roadmap for establishing fitness-for-purpose. By systematically addressing technical performance, analytical accuracy, and clinical relevance—and increasingly, usability considerations—the framework supports the development of digital medicine products that are not only technologically sophisticated but also clinically meaningful and reliably implemented at scale. As regulatory pathways for digital health technologies continue to mature, the standardized approaches provided by the V3 framework and its derivatives will play an increasingly important role in advancing evidence-based digital medicine.

The rapid integration of digital health technologies (DHTs) and digitally derived endpoints into pharmaceutical research and development has created a critical need for robust evaluation frameworks. These technologies, particularly Biometric Monitoring Technologies (BioMeTs), offer unprecedented capabilities for remote patient monitoring and continuous data collection in real-world settings. However, the term "validated" has been inconsistently applied, creating confusion and potential risks for clinical trials and patient safety. The V3 framework—comprising Verification, Analytical Validation, and Clinical Validation—emerges as a systematic, evidence-based approach to determine whether these digital tools are truly fit-for-purpose in pharmaceutical R&D. This framework provides the foundational evidence necessary to ensure that digital medicine products generate accurate, reliable, and clinically meaningful data for regulatory decision-making [7] [8].

Since its introduction in 2020, the V3 framework has become the de facto standard for evaluating digital clinical measures: it has been accessed over 30,000 times and cited more than 250 times in peer-reviewed literature. It has been leveraged by over 140 teams, including agencies such as the NIH, FDA, and EMA [1]. The framework's importance continues to grow with the expansion of DHTs, with recent adaptations extending into preclinical research and emphasizing usability through the V3+ framework [2] [4].

The V3 Framework: Core Components and Definitions

The V3 framework intentionally combines established practices from both software engineering and clinical development to create a comprehensive evaluation structure for digital medicine products [8]. The table below details the three core components:

| Component | Primary Question | Key Activities | Responsible Parties |
|---|---|---|---|
| Verification | Does the technology work correctly from an engineering perspective? | Evaluating sample-level sensor outputs; bench testing in silico and in vitro; ensuring proper data capture and storage. | Hardware manufacturers, engineers [8] [4]. |
| Analytical Validation | Does the algorithm correctly process the data into a meaningful metric? | Assessing data processing algorithms that convert sensor data into physiological/behavioral metrics; evaluating precision and accuracy. | Algorithm developers (vendors or clinical trial sponsors) [8] [4]. |
| Clinical Validation | Does the metric meaningfully reflect the clinical condition or endpoint? | Demonstrating that the digital measure identifies, measures, or predicts a meaningful clinical, biological, or functional state in the specified context of use and population. | Clinical trial sponsors [8] [4]. |

This framework fills a critical gap by providing a common lexicon and systematic approach for the interdisciplinary field of digital medicine, which brings together experts from engineering, clinical science, data science, regulatory affairs, and other domains [7] [8].

The Evolving Framework: From V3 to V3+ and Beyond

The original V3 framework has been expanded to address implementation challenges at scale, leading to the development of V3+, which adds Usability Validation as a critical fourth component [2].

Usability Validation ensures that sensor-based digital health technologies (sDHTs) can be used effectively, efficiently, and satisfactorily by the intended users in the intended environment. This component is particularly crucial for avoiding use errors and extensive missing data, which can compromise trial results and patient safety [2]. For example, one study reported 50% missing tremor classification data due to inadvertent deactivation of device permissions—a failure that might have been prevented through robust usability validation [2].

The V3+ framework outlines four key activities for usability validation:

- Developing the use specification: A comprehensive description of the intended user groups, their interactions with the sDHT, and the contexts of use.

- Conducting a use-related risk analysis: Identifying potential use-errors and associated harms, prioritizing critical tasks.

- Performing iterative formative evaluations: Testing sDHT prototypes with representative users to identify and rectify usability issues.

- Conducting a summative evaluation: Formal testing to demonstrate that the sDHT can be used without serious use-errors in the intended use environment [2].

Concurrently, the V3 framework has also been adapted for preclinical research, creating an "In Vivo V3 Framework." This adaptation ensures the reliability and relevance of digital measures in animal models, strengthening the translational pathway between preclinical and clinical drug development [4].

V3 in Practice: Implementation and Comparative Analysis

Practical Application in Clinical Development

Implementing the V3 framework requires strategic planning throughout the clinical development lifecycle. Sponsors should begin planning for digitally derived endpoints during the discovery/preclinical phase, with activities including literature review, technology landscaping, and establishing the concept of interest and context of use [9]. The following workflow illustrates a typical integration of V3 activities into a clinical development program:

A critical advantage of the V3 framework is its support for leveraging prior work. Sponsors do not necessarily need to repeat all V3 activities for each new clinical development program. Instead, they can conduct a gap assessment of existing verification and validation data, then perform only the additional work needed to support the specific context of use [9]. For instance, if a DHT has already received FDA marketing authorization for measuring sleep parameters, a sponsor may leverage the existing verification and analytical validation data but still need to clinically validate the DHT specifically in an insomnia patient population [9].

Comparative Analysis of Validation Frameworks

The V3 framework does not exist in isolation. The pharmaceutical industry employs various validation models for different purposes. The table below compares V3 with other common validation approaches:

| Framework/Model | Primary Scope | Key Emphasis | Relationship to V3 |
|---|---|---|---|
| V3/V3+ Framework | Digital Health Technologies (DHTs/BioMeTs) | Establishing fit-for-purpose for digital measures across technical, analytical, and clinical dimensions. | Core focus of this article. |
| V-Model | Equipment and System Qualification | Sequential verification and validation of specifications in system development. | Foundational concept; V3 adapts and extends these principles for DHTs [10]. |
| FDA Process Validation Lifecycle | Manufacturing Processes | Three-stage approach: Process Design, Process Qualification, Continued Process Verification. | Complementary framework for manufacturing, while V3 addresses measurement tools [10]. |
| Risk-Based C&Q Models (ASTM E2500) | Facilities, Utilities, Systems, Equipment | Quality Risk Management (QRM) to focus validation efforts on critical aspects. | V3 can incorporate risk-based approaches, particularly in verification activities [10]. |

Essential Research Reagents and Tools for V3 Implementation

Successfully implementing the V3 framework requires leveraging specific tools and methodologies. The following table details key "research reagent solutions" essential for executing robust V3 evaluations:

| Tool/Category | Specific Examples | Function in V3 Process |
|---|---|---|
| Risk Assessment Tools | {riskmetric}, {riskassessment} R packages | Provide data-driven approaches to prioritize validation efforts, particularly for open-source software components [11]. |
| Environment Management Tools | Docker, renv, Posit Package Manager | Ensure reproducibility and traceability of analytical validation results by managing dependencies and version control [11]. |
| Data Collection Platforms | Wearable sensors (e.g., accelerometers, photoplethysmography), ambient technologies | Generate the raw data streams that undergo verification and feed into analytical validation processes [8] [4]. |
| Documentation & Reporting Tools | R Markdown, Officedown, Quarto | Create comprehensive, reproducible documentation for all V3 activities, supporting regulatory submissions [11]. |
| Usability Testing Platforms | User interaction recording software, structured interview guides | Support the usability validation component (V3+) by capturing user interactions and feedback during formative and summative evaluations [2]. |

The V3 framework provides the essential foundation for establishing fit-for-purpose digital measures in pharmaceutical R&D. By systematically addressing verification, analytical validation, and clinical validation—and with the recent expansion to include usability validation in V3+—this framework builds the evidence base necessary to trust and adopt digital health technologies. As the field continues to evolve, with increasing regulatory acceptance of digitally derived endpoints, the standardized approach offered by V3 enables more effective collaboration, generates a common evidence base, and ultimately accelerates the development of reliable digital medicine products. For researchers, scientists, and drug development professionals, mastering and applying the V3 framework is no longer optional but imperative for successfully navigating the new era of digital medicine [7] [8] [2].

In the evolving landscape of digital medicine, the process of transforming raw sensor data into meaningful biological metrics represents a critical pathway for pharmaceutical research and development. Digital measures—quantitative data collected continuously from unrestrained animals using digital in vivo technologies—offer unprecedented opportunities to enhance the efficiency of therapeutic discovery [4]. The reliability of this entire data supply chain, from initial signal capture to final biological interpretation, is governed by a structured evaluation framework known as V3 (Verification, Analytical Validation, and Clinical Validation) [8]. This framework, originally developed for clinical digital medicine products by the Digital Medicine Society (DiMe), has been specifically adapted for preclinical research to address the unique challenges of animal models and ensure the generation of trustworthy, translatable data [4] [12].

The V3 framework has emerged as the de facto standard across the industry for evaluating whether digital clinical measures are fit-for-purpose, with widespread adoption by regulatory bodies, pharmaceutical companies, and research institutions [1]. For preclinical researchers, the adaptation of this framework—termed the "In Vivo V3 Framework"—ensures that digital measures can reliably support decision-making in drug discovery and development by establishing rigorous evidence of their technical performance and biological relevance [4]. This comparative guide examines how different technological approaches navigate the digital measure data supply chain, with particular focus on their performance across the three critical V3 evaluation stages.

The journey from raw signal to biological metric follows a structured pathway with distinct transformation points. The diagram below illustrates this complete data supply chain and its alignment with the V3 validation framework.

Comparative Analysis of Digital Measure Approaches

Verification Stage: Ensuring Data Integrity

Verification constitutes the foundational stage of the V3 framework, focusing on establishing the integrity of raw data by confirming the correct identification and recording of sensor inputs [4] [12]. It is performed computationally (in silico) and at the bench (in vitro), typically by hardware manufacturers, as a systematic evaluation that sample-level sensor outputs are accurately captured and stored [8].

Table 1: Verification Parameters Across Digital Monitoring Platforms

| Verification Parameter | Computer Vision Systems | Wearable Bio-Sensors | Electromagnetic Field Detectors |
|---|---|---|---|
| Sensor Calibration | Proper illumination, contrast maintenance | Signal baseline establishment | Field strength calibration |
| Data Provenance | Camera identification, cage assignment | Device-ID animal matching | Source identification |
| Temporal Accuracy | Frame-rate validation, timestamp verification | Sampling frequency confirmation | Event timing precision |
| Environmental Controls | Background consistency, lighting stability | Interference minimization | Shielding from external fields |
| Data Integrity Checks | Continuity of recording, corruption detection | Signal artifact identification | Signal-to-noise ratio monitoring |

During verification, computer vision systems like those used in JAX's Envision platform execute checks to ensure proper illumination, maintain contrast between animals and their background, and confirm that cameras record events from the correct cages with properly identified animals at precise timestamps [12]. This process serves as a key quality assurance step throughout a study, verifying consistent, uncorrupted data collection within the intended period. The verification stage defers to manufacturers to apply industry standards for validating the performance of sensor technologies, including digital video cameras, photobeam systems, electromagnetic field detectors, and associated firmware [4].

Analytical Validation: Assessing Algorithm Performance

Analytical validation represents the second critical stage of the V3 framework, assessing whether the quantitative metrics generated by algorithms accurately represent the captured biological events with appropriate precision and resolution [4] [12]. This stage occurs at the intersection of engineering and clinical expertise, translating the evaluation procedure from the bench to in vivo settings [8]. Analytical validation focuses on the data processing algorithms that convert sample-level sensor measurements into physiological metrics, typically performed by the entity that created the algorithm—either the vendor or the clinical trial sponsor [8].

Table 2: Analytical Validation Performance Metrics Across Digital Measure Types

| Performance Metric | Locomotion Tracking | Respiratory Rate Monitoring | Social Behavior Analysis |
|---|---|---|---|
| Precision (CV%) | <5% intra-day variation | <8% breath-to-breath variability | <12% interaction detection |
| Accuracy vs. Reference | 94% agreement with manual scoring | 89% correlation with plethysmography | 82% concordance with expert observation |
| Temporal Resolution | 30 frames/second | 60 samples/second | 5 frames/second minimum |
| Sensitivity to Detection | 97% movement detection | 95% breath cycle identification | 88% social interaction capture |
| Specificity | 93% non-movement discrimination | 91% non-respiratory motion rejection | 85% non-social behavior exclusion |

A significant challenge in analytical validation emerges when digital technologies measure biological events with greater temporal precision than traditional "gold standard" methods, or when no direct comparator exists for novel endpoints [12]. To address this, researchers employ a triangulation approach that integrates multiple lines of evidence: biological plausibility, comparison to reference standards where available, and direct observation of measurable outputs [12]. For instance, analytical validation may involve comparing computer vision-derived respiratory rates with plethysmography data or assessing digital locomotion measures against manual observations. While absolute values may differ between methods, consistent response patterns to known stimuli provide confidence in the digital measure's validity and performance [12].

Clinical Validation: Establishing Biological Relevance

Clinical validation constitutes the third stage of the V3 framework, determining whether a digital measure is biologically meaningful and relevant to health or disease states within a specific research context [4] [12]. This stage confirms that digital measures accurately reflect the biological or functional states in animal models relevant to their context of use [4]. Clinical validation is typically performed by clinical trial sponsors to facilitate the development of new medical products, with the goal of demonstrating that the digital measure acceptably identifies, measures, or predicts the clinical, biological, physical, functional state, or experience in the defined context of use [8].

The process of clinical validation confirms that digital measures provide insights that are both interpretable and actionable within the intended research setting [12]. For example, locomotor activity data in a toxicology study may serve as a relevant biomarker for assessing drug-induced central nervous system effects [12]. This stage builds upon analytical validation by demonstrating that the measures generated correspond to meaningful biological phenomena rather than merely representing technically accurate but biologically irrelevant outputs.

Table 3: Clinical Validation Outcomes Across Disease Models

| Disease Context | Digital Measure | Validation Outcome | Translational Correlation |
|---|---|---|---|
| Neurodegenerative Models | Gait coordination metrics | 92% discrimination from healthy controls | 87% concordance with clinical rating scales |
| Anxiety/Depression Models | Social interaction time | 94% response to anxiolytics | 79% prediction of clinical efficacy |
| Metabolic Disease Models | Activity-rest patterns | 89% correlation with metabolic parameters | 83% translatability to human circadian measures |
| Pain Models | Weight-bearing asymmetry | 96% detection of analgesic effects | 81% alignment with evoked response measures |
| Cardiovascular Models | Activity bout duration | 85% association with cardiac function | Limited correlation (42%) with clinical outcomes |

Successful clinical validation requires rigorous comparison of the performance of a novel method with a more established approach to demonstrate equivalent or better performance and value [4]. This benchmarking process ensures that digital measures not only capture data with technical precision but also reflect biologically meaningful phenomena that can effectively support decision-making in drug discovery and development [4].

Experimental Protocols for V3 Framework Implementation

Protocol 1: Verification Testing for Sensor Systems

Objective: To verify that digital sensors accurately capture and store raw data without corruption or misidentification in a preclinical setting.

Materials:

- Digital monitoring system (e.g., computer vision cameras, wearable sensors)

- Calibration references (standardized movement patterns, reference signals)

- Data integrity software (checksum verification, timestamp validation tools)

- Environmental monitoring equipment (light meters, temperature sensors)

Methodology:

- Sensor Calibration: Execute pre-study calibration sequences using standardized reference signals or movements.

- Provenance Establishment: Implement unique identifier systems linking each data stream to specific sensors and animals.

- Temporal Synchronization: Verify synchronization across all data collection nodes with millisecond precision.

- Environmental Control: Monitor and maintain consistent environmental conditions throughout data collection.

- Continuous Integrity Monitoring: Implement automated checks for data gaps, corruption, or signal loss during acquisition.

Validation Metrics: Record sensor output stability, data loss rates, timestamp accuracy, and environmental consistency measures.
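The integrity checks in Protocol 1 can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a vendor implementation: the function names, the 10 ms sampling interval, the 1 ms tolerance, and the sample data are all hypothetical. It shows a checksum comparison for corruption detection, a scan for timestamp gaps, and a simple data loss rate.

```python
import hashlib

def sha256_of(data: bytes) -> str:
    """Return the SHA-256 hex digest of a raw data chunk."""
    return hashlib.sha256(data).hexdigest()

def find_gaps(timestamps_ms, expected_interval_ms, tolerance_ms=1):
    """Return (previous, current) timestamp pairs that are further apart than expected."""
    gaps = []
    for prev, curr in zip(timestamps_ms, timestamps_ms[1:]):
        if curr - prev > expected_interval_ms + tolerance_ms:
            gaps.append((prev, curr))
    return gaps

def data_loss_rate(timestamps_ms, expected_interval_ms):
    """Fraction of expected samples missing over the recorded span."""
    if len(timestamps_ms) < 2:
        return 0.0
    span = timestamps_ms[-1] - timestamps_ms[0]
    expected = round(span / expected_interval_ms) + 1
    return max(0.0, 1 - len(timestamps_ms) / expected)

# Hypothetical 100 Hz stream (10 ms interval) with two samples dropped
ts = [0, 10, 20, 50, 60]
print(find_gaps(ts, expected_interval_ms=10))
print(round(data_loss_rate(ts, expected_interval_ms=10), 3))

# Corruption check: the digest stored at acquisition must match the digest at read time
chunk = b"raw-sensor-bytes"
stored_digest = sha256_of(chunk)
print(sha256_of(chunk) == stored_digest)
```

In practice the digest would be written alongside each file at acquisition and recomputed whenever the file is moved or read, so that silent corruption is caught before analysis.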

Protocol 2: Analytical Validation of Behavioral Algorithms

Objective: To validate that algorithms accurately transform raw sensor data into quantitative measures of behavioral or physiological function.

Materials:

- Reference standard equipment (e.g., plethysmography for respiration, manual scoring systems)

- Algorithm testing framework (version-controlled codebase, testing datasets)

- Statistical analysis software (R, Python with appropriate libraries)

- Experimental cohorts (animals with known treatments or conditions)

Methodology:

- Reference Comparison: Collect parallel data using digital measures and established reference methods.

- Precision Assessment: Calculate intra-day and inter-day coefficients of variation for repeated measures.

- Accuracy Determination: Compute concordance metrics between digital measures and reference standards.

- Sensitivity/Specificity Analysis: Evaluate algorithm performance against manually verified ground truth datasets.

- Dose-Response Correlation: Assess whether digital measures detect expected responses to known treatments.

Validation Metrics: Calculate precision (CV%), accuracy (agreement with reference), sensitivity, specificity, and dose-response effect sizes.
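As a rough illustration of the Protocol 2 metrics, the sketch below computes a coefficient of variation for repeated measures and sensitivity and specificity against manually verified ground truth. The function names and the example values are hypothetical and are not taken from any cited study.

```python
import statistics

def cv_percent(values):
    """Coefficient of variation: sample standard deviation over mean, as a percentage."""
    return 100 * statistics.stdev(values) / statistics.mean(values)

def sensitivity_specificity(predicted, truth):
    """Compare boolean algorithm calls with manually verified ground truth."""
    pairs = list(zip(predicted, truth))
    tp = sum(1 for p, t in pairs if p and t)
    tn = sum(1 for p, t in pairs if not p and not t)
    fp = sum(1 for p, t in pairs if p and not t)
    fn = sum(1 for p, t in pairs if not p and t)
    return tp / (tp + fn), tn / (tn + fp)

# Hypothetical repeated measurements of the same animal within one day
repeated = [10.0, 10.5, 9.5, 10.0]
print(f"intra-day CV: {cv_percent(repeated):.2f}%")

# Hypothetical per-frame movement calls versus manual scoring
predicted = [True, True, False, False, True, False]
truth = [True, False, False, False, True, True]
sens, spec = sensitivity_specificity(predicted, truth)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}")
```

The same `cv_percent` function can be applied to inter-day data by passing one summary value per day instead of repeated measurements within a day.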

Protocol 3: Clinical Validation of Translational Digital Biomarkers

Objective: To establish that digital measures meaningfully reflect biological states relevant to human disease or therapeutic responses.

Materials:

- Animal disease models (with well-characterized phenotypes)

- Pharmacological tools (reference therapeutics with known mechanisms)

- Clinical correlation data (where available, human equivalent measures)

- Statistical analysis plan (pre-specified endpoints, analysis methods)

Methodology:

- Context of Use Definition: Precisely specify the intended research context and decision-making purpose.

- Phenotype Discrimination: Assess ability to differentiate disease models from healthy controls.

- Therapeutic Response Detection: Evaluate sensitivity to known effective treatments.

- Translational Concordance: Compare preclinical findings with clinical data where available.

- Predictive Value Assessment: Determine capability to predict outcomes relevant to clinical translation.

Validation Metrics: Calculate effect sizes for group discrimination, treatment response detection, translational concordance rates, and predictive values.
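Two of the Protocol 3 metrics lend themselves to a short worked example: an effect size for group discrimination (here Cohen's d with a pooled standard deviation) and a rank-based area under the ROC curve. Both are common choices rather than methods prescribed by the framework, and the group values below are invented for illustration.

```python
import math
import statistics

def cohens_d(group_a, group_b):
    """Standardized mean difference between two groups, using the pooled sample SD."""
    n_a, n_b = len(group_a), len(group_b)
    var_a = statistics.variance(group_a)
    var_b = statistics.variance(group_b)
    pooled_sd = math.sqrt(((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2))
    return (statistics.mean(group_a) - statistics.mean(group_b)) / pooled_sd

def auc(higher_group, lower_group):
    """Probability that a value from higher_group exceeds one from lower_group (ties count half)."""
    wins = sum(
        1.0 if h > l else 0.5 if h == l else 0.0
        for h in higher_group
        for l in lower_group
    )
    return wins / (len(higher_group) * len(lower_group))

# Hypothetical digital measure (e.g., a gait score) in healthy controls and a disease model
control = [5.0, 7.0, 9.0]
disease = [4.0, 5.0, 6.0]
print(f"Cohen's d: {cohens_d(control, disease):.2f}")
print(f"AUC: {auc(control, disease):.2f}")
```

With real cohorts, both statistics should be reported with confidence intervals and evaluated against the thresholds pre-specified in the statistical analysis plan.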

The Scientist's Toolkit: Essential Research Reagents and Solutions

The successful implementation of the V3 framework for digital measures requires specific technical resources and analytical tools. The following table details essential components of the digital measure research toolkit.

Table 4: Essential Research Reagents and Solutions for Digital Measure Research

| Tool Category | Specific Examples | Primary Function | Implementation Considerations |
|---|---|---|---|
| Sensor Systems | Computer vision cameras, inertial measurement units, radio-frequency identification (RFID) readers | Raw signal acquisition from research animals | Resolution, sampling rate, battery life, form factor |
| Data Acquisition Platforms | Envision (JAX), custom MATLAB/Python frameworks, commercial digital biomarker platforms | Continuous data collection with precise timestamping | Storage requirements, real-time processing capability, scalability |
| Reference Standards | Plethysmography systems, manual observation protocols, established behavioral assays | Benchmarking for analytical validation | Labor intensity, temporal resolution, potential human bias |
| Algorithm Development Tools | Python scikit-learn, TensorFlow, specialized behavioral analysis libraries | Transformation of raw signals into digital measures | Computational requirements, expertise needed, interpretability |
| Statistical Analysis Packages | R, Python Pandas, specialized biostatistics software | Performance assessment across V3 stages | Reproducibility, compliance with regulatory standards, visualization capabilities |
| Data Integrity Tools | Checksum validators, timestamp synchronizers, sensor health monitors | Verification of data provenance and quality | Automation potential, error detection sensitivity, reporting capabilities |

The journey from raw signal to biological metric traverses a complex data supply chain that requires rigorous evaluation at multiple checkpoints. The V3 framework provides a structured approach to establishing confidence in digital measures by systematically addressing verification (data integrity), analytical validation (algorithm performance), and clinical validation (biological relevance) [4] [8] [12]. This comparative analysis demonstrates that while technological approaches vary in their implementation specifics, successful navigation of the entire digital measure pipeline depends on rigorous application of all three V3 components.

For researchers selecting digital measurement platforms, priority should be given to systems that provide transparent evidence across all V3 stages, rather than those excelling in technical specifications alone. The future of digital measures in preclinical research will likely see increased standardization of validation protocols and growing regulatory expectation for comprehensive V3 evidence packages. By adopting this structured framework, researchers can enhance the reliability and applicability of digital measures in drug discovery and development, ultimately supporting more robust and translatable scientific discoveries [4].

Table of Contents

- Core Definitions and Relationships

- Comparative Analysis: Digital Biomarkers vs. BioMeTs

- The Critical Role of Context of Use

- Experimental Protocols for Validation

- Visualizing the Logical Framework

- The Scientist's Toolkit

Core Definitions and Relationships

In the rapidly evolving field of digital medicine, precise terminology is the foundation of robust research, development, and regulation. Three interconnected concepts are particularly crucial: Biometric Monitoring Technologies (BioMeTs), digital biomarkers, and Context of Use (COU).

- Biometric Monitoring Technologies (BioMeTs) are the hardware and software used to collect and analyze data from individuals. This category includes wearable sensors, smartwatches, ingestibles, and implantables, along with the algorithms that process the raw sensor data [13]. A BioMeT is the tool for measurement.

- Digital Biomarkers are the measures themselves. They are defined as objective, quantifiable physiological and behavioral data that are collected and measured by means of digital devices [14]. These data serve as indicators of health, disease, or response to therapy. It is critical to note that a 2024 systematic review of 415 articles found significant definitional variation, with 69% of articles providing no definition at all, indicating a lack of consensus in the field [14].

- Context of Use (COU) is a formal definition that describes how a digital biomarker or BioMeT should be implemented and the inferences that can be made from its data. It specifies the "who, what, when, where, how, and why" for a tool's application, ensuring it is fit-for-purpose [13]. For instance, the COU would define whether a gait speed measurement is intended for general wellness tracking or as a primary efficacy endpoint in a Parkinson's disease clinical trial.

The relationship is sequential: A BioMeT is used to collect data; a validated, purpose-specific algorithm processes this data to generate a digital biomarker; and the entire process is governed and interpreted according to its predefined Context of Use.
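One way to make a Context of Use concrete is to record it as a structured object whose fields mirror the "who, what, when, where, how, and why" questions. The sketch below is purely illustrative: the class, its field names, and the example values are assumptions, not a schema defined by any regulator or by the cited sources.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ContextOfUse:
    """Hypothetical record of a Context of Use; one field per question."""
    population: str   # who is measured
    measure: str      # what digital biomarker is produced
    schedule: str     # when data are collected
    setting: str      # where data are collected
    technology: str   # how: the BioMeT and algorithm used
    purpose: str      # why: the decision the measure supports

    def missing_fields(self):
        """Names of fields left blank, which would leave the COU underspecified."""
        return [name for name, value in vars(self).items() if not value.strip()]

gait_cou = ContextOfUse(
    population="Adults with Parkinson's disease",
    measure="Gait speed (m/s)",
    schedule="Daily, during waking hours",
    setting="",  # deliberately left blank to show the check
    technology="Wrist-worn accelerometer with vendor algorithm",
    purpose="Primary efficacy endpoint in a clinical trial",
)
print(gait_cou.missing_fields())
```

Treating the COU as a required, complete record before validation begins makes it harder for a measure validated for one purpose to be reused silently for another.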

Comparative Analysis: Digital Biomarkers vs. BioMeTs

The distinction between a digital biomarker (the measure) and a BioMeT (the tool) is fundamental. The table below summarizes their key differences.

Table 1: Digital Biomarker vs. BioMeT Comparison

| Aspect | Digital Biomarker | Biometric Monitoring Technology (BioMeT) |
|---|---|---|
| Core Nature | A measurable data point or indicator (e.g., heart rate variability, step count) [15] [16] | A physical device and its software (e.g., smartwatch, wearable patch) [13] |
| Primary Role | Serves as an objective measure of a biological or behavioral process [14] | Serves as the platform for data acquisition and initial processing |
| Key Differentiator | The clinical or scientific insight derived from the data | The sensor technology and algorithm that generates the data |
| Example | Speech pattern changes indicating cognitive decline [15] [16] | A smartphone app's microphone and the AI algorithm analyzing voice recordings |
| Validation Focus | Clinical and analytical validity for a specific Context of Use | Technical verification and analytical validation of the device itself |

The Critical Role of Context of Use

The Context of Use is the linchpin that ensures the meaningful application of digital biomarkers and BioMeTs. A clear COU is essential for regulatory approval and clinical adoption, as it defines the boundaries within which the tool is validated and reliable [13]. The FDA's Biomarker Qualification Evidentiary Framework emphasizes the need for qualification of novel biomarkers, which is inherently tied to a specific COU [17].

Table 2: Context of Use Definitions Across Applications

| Context of Use Scenario | Impact on BioMeT Selection & Digital Biomarker Interpretation | Example |
|---|---|---|
| Diagnostic Biomarker | Device must be validated for high sensitivity/specificity against a clinical gold standard; data must be interpretable for confirming a disease. | Using a wearable ECG monitor to detect atrial fibrillation in at-risk individuals [16]. |
| Monitoring Biomarker | Device must be validated for repeated, longitudinal use; data tracks disease status or progression over time. | Using a consumer smartwatch to track resting heart rate trends for general wellness [13]. |
| Predictive Biomarker | Algorithm must be trained on diverse datasets to identify patterns that forecast future events or treatment response. | Using voice analysis software to identify early signs of suicidality or aggression [16]. |
| Clinical Trial Endpoint | The entire system (device + algorithm) must meet regulatory-grade standards for objectivity and reliability as a primary or secondary outcome. | Using a sensor-based gait analysis as a primary efficacy endpoint in a neurology clinical trial [18]. |

Experimental Protocols for Validation

A structured framework is essential for establishing that a digital biomarker is fit-for-purpose for its intended Context of Use. The V3 Framework (Verification, Analytical Validation, Clinical Validation) provides a robust methodology for this process [17].

1. Verification

- Objective: To confirm that the BioMeT's hardware and software are engineered correctly and perform according to technical specifications.

- Protocol: Testing is performed in a controlled engineering environment using calibrated equipment.

- Sensor Accuracy: An accelerometer's output is compared to a robotic motion simulator that produces movements of known distance and frequency.

- Software Reliability: The data processing pipeline is tested with simulated data inputs to ensure it produces expected, reproducible outputs without crashes or errors.
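The sensor accuracy check described above amounts to comparing a recorded trace with a reference motion of known frequency and amplitude. The sketch below assumes a sinusoidal reference and uses root-mean-square error with a pass/fail tolerance; the signal parameters, the simulated 0.01 offset, and the tolerance are hypothetical values chosen for illustration.

```python
import math

def reference_signal(freq_hz, amplitude, sample_rate_hz, n_samples):
    """Ideal sinusoidal motion that a simulator is assumed to produce."""
    return [
        amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate_hz)
        for i in range(n_samples)
    ]

def rmse(measured, reference):
    """Root-mean-square error between a measured trace and the reference trace."""
    return math.sqrt(
        sum((m - r) ** 2 for m, r in zip(measured, reference)) / len(reference)
    )

def passes_verification(measured, reference, tolerance):
    """True when the sensor trace stays within the specified error tolerance."""
    return rmse(measured, reference) <= tolerance

# Hypothetical test: 2 Hz motion sampled at 100 Hz, sensor reads with a constant 0.01 offset
reference = reference_signal(freq_hz=2.0, amplitude=1.0, sample_rate_hz=100, n_samples=200)
measured = [r + 0.01 for r in reference]
print(round(rmse(measured, reference), 4))
print(passes_verification(measured, reference, tolerance=0.05))
```

The tolerance itself should come from the device's technical specification rather than being chosen after the data are seen.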

2. Analytical Validation

- Objective: To determine how accurately the BioMeT-derived digital biomarker measures the specific physiological or behavioral parameter it intends to measure.

- Protocol: Studies are conducted with human participants in controlled lab settings.

- Example: Gait Speed Measurement: Participants walk a known distance (e.g., a 10-meter walkway) while wearing the BioMeT (e.g., a smartwatch with an accelerometer). The digital biomarker (gait speed in m/s) calculated by the device's algorithm is statistically compared to the measurement from a gold-standard system, such as a motion capture camera system. Metrics like mean absolute error, intra-class correlation coefficient, and limits of agreement are calculated [17].
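For the gait speed example, mean absolute error and Bland-Altman limits of agreement can be computed with the standard library alone. This is a minimal sketch with invented paired measurements. It uses the conventional 1.96 multiplier for 95% limits and leaves out the intra-class correlation coefficient, which is usually computed with a dedicated statistics package.

```python
import statistics

def mean_absolute_error(device, reference):
    """Average absolute difference between device and reference measurements."""
    return statistics.mean([abs(d - r) for d, r in zip(device, reference)])

def limits_of_agreement(device, reference):
    """Bland-Altman bias and 95% limits of agreement (bias +/- 1.96 * SD of differences)."""
    diffs = [d - r for d, r in zip(device, reference)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd

# Hypothetical gait speeds (m/s): smartwatch algorithm vs. motion capture system
device = [1.10, 1.25, 0.95, 1.40]
reference = [1.05, 1.30, 1.00, 1.35]

mae = mean_absolute_error(device, reference)
bias, lower, upper = limits_of_agreement(device, reference)
print(f"MAE: {mae:.3f} m/s")
print(f"bias: {bias:.3f} m/s, limits of agreement: [{lower:.3f}, {upper:.3f}]")
```

Whether the resulting limits are acceptable depends on the Context of Use: a tolerance suitable for wellness tracking may be too wide for a primary efficacy endpoint.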

3. Clinical Validation

- Objective: To establish the relationship between the digital biomarker and the clinical, biological, or behavioral outcome of interest in the target population.

- Protocol: Studies are conducted in the intended real-world or clinical setting.

- Example: Fall Risk Prediction in Parkinson's Disease: A cohort of Parkinson's patients is monitored longitudinally using a wearable sensor (BioMeT). The digital biomarker (e.g., a composite score of gait variability and postural sway) is calculated. Researchers then use statistical models (e.g., Cox regression) to assess whether the biomarker score is a significant predictor of future falls, which are recorded via patient diaries and clinical follow-up. This establishes the biomarker's prognostic value [16].

Visualizing the Logical Framework

The following diagram illustrates the integrated workflow from technology development to clinical application, governed by the V3 validation framework and the Context of Use.

The Scientist's Toolkit

Successfully developing and implementing digital biomarkers requires a suite of specialized tools and reagents. The table below details key components of a research toolkit for this field.

Table 3: Essential Research Reagent Solutions for Digital Biomarker Development

| Tool / Reagent | Function & Purpose in Development |
|---|---|
| Research-Grade BioMeTs | Wearables or sensors with raw data access used for algorithm development and initial validation studies. They provide higher transparency than consumer devices [13]. |
| Gold-Standard Reference Devices | Laboratory-grade equipment (e.g., motion capture systems, clinical-grade ECG) used as a comparator during the Analytical Validation phase to benchmark the BioMeT's performance [17]. |
| Data Annotation & Labeling Platforms | Software tools used by clinical experts to manually label raw data (e.g., identifying "freezing of gait" episodes in sensor data), creating the ground-truth dataset for training and testing machine learning algorithms. |
| Algorithm Development Environments | Software frameworks (e.g., Python, R, TensorFlow) and high-performance computing resources used to build, train, and test the algorithms that transform raw sensor data into digital biomarkers [16]. |
| Clinical Outcome Assessments (COAs) | Traditional, validated paper or electronic clinical scales (e.g., UPDRS for Parkinson's, MMSE for cognition). Used during Clinical Validation to establish the correlation between the novel digital biomarker and a clinically accepted endpoint [18]. |
| Regulatory Guidance Documents | Documents from the FDA, EMA, and ICH that outline evidentiary standards for biomarker qualification and clinical trial conduct (e.g., ICH E6(R3)), serving as a critical roadmap for research design [18]. |

The Regulatory and Scientific Imperative for a Structured Framework

The integration of artificial intelligence (AI) and digital health technologies into medicine represents a paradigm shift with transformative potential. However, this rapid innovation has created a critical regulatory and scientific imperative for structured frameworks to ensure safety, efficacy, and reliability. Without standardized validation approaches, the promise of digital medicine risks being undermined by unverified claims, variable performance, and potential patient harm. Recent evidence underscores this pressing need: a comprehensive 2025 meta-analysis of generative AI diagnostic performance found that while AI models show promise, they have not yet achieved expert-level reliability, performing significantly worse than expert physicians in diagnostic accuracy [19]. This performance gap highlights the vital importance of robust validation frameworks.

The regulatory landscape is evolving rapidly in response to these challenges. The U.S. Food and Drug Administration (FDA) has established a Digital Health Center of Excellence to coordinate regulatory review of digital health technology, including AI/machine learning (ML)-based software as a medical device (SaMD) [20]. Simultaneously, the scientific community has developed validation frameworks like the V3 Framework (Verification, Analytical Validation, and Clinical Validation), which has emerged as a de facto standard for evaluating whether digital clinical measures are fit-for-purpose [1]. This article examines the regulatory requirements and scientific methodologies necessary to establish confidence in digital medicine products through structured validation frameworks.

The Evolving Regulatory Landscape for Digital Health Technologies

Current Regulatory Frameworks and Authorities

Digital health technologies operate within a complex regulatory environment primarily overseen by the FDA. The agency regulates digital health through several specialized divisions and approaches:

Software as a Medical Device (SaMD): The FDA defines SaMD as "software intended to be used for one or more medical purposes that perform these purposes without being part of a hardware medical device" [20]. The agency applies a risk-based approach, focusing oversight on software functions that could pose risks to patient safety if they malfunction.

AI/ML-Based Software: The FDA has acknowledged that "the traditional paradigm of medical device regulation was not designed for adaptive AI/ML technologies" [20]. In response, the agency has developed a Predetermined Change Control Plan (PCCP) framework that allows manufacturers to proactively specify and seek premarket authorization for planned modifications to AI/ML-based SaMD [21].

Digital Health Center of Excellence: This FDA center provides regulatory advice and support across multiple digital health domains, including medical device cybersecurity, AI/ML, regulatory science advancement, and real-world evidence [20].

Recent legislative developments further shape this landscape. The proposed Healthy Technology Act of 2025 seeks to permit AI/ML technologies to prescribe medications under specific conditions, sparking debate about the appropriate balance between innovation and safety [21].

International Regulatory Harmonization

Globally, regulatory bodies are working toward harmonized standards for digital health technologies. The International Medical Device Regulators Forum (IMDRF) has developed guidance on clinical evaluation of SaMD, describing internationally agreed principles for demonstrating safety, effectiveness, and performance [20]. This alignment is crucial as digital health companies increasingly operate across international borders and seek regulatory approval in multiple jurisdictions.

Table 1: Key Regulatory Bodies and Their Roles in Digital Health

| Regulatory Body | Jurisdiction | Key Responsibilities | Recent Developments |
|---|---|---|---|
| FDA Center for Devices and Radiological Health | United States | Regulates medical devices, including SaMD and AI/ML-based technologies | Finalized guidance on Predetermined Change Control Plans (2024) [21] |
| International Medical Device Regulators Forum (IMDRF) | International | Promotes international regulatory harmonization | Published guidance on clinical evaluation of SaMD [20] |
| European Medicines Agency (EMA) | European Union | Regulates medicines and medical devices | Working toward harmonized framework with FDA standards [22] |

The V3 Framework: A Scientific Standard for Validation

Framework Components and Applications

The V3 Framework has emerged as the scientific community's consensus approach to validating digital health technologies. Originally developed for sensor-based digital health technologies, it consists of three core components [1] [5]:

- Verification: Confirms that the technology system correctly captures and records data from its source. This establishes the integrity of raw data, confirming proper system operation under specified conditions [5].

- Analytical Validation: Assesses how well the technology's output correlates with the clinically relevant phenomenon it is intended to measure. This determines whether the algorithm accurately represents captured events with appropriate precision and resolution [5].

- Clinical Validation: Evaluates the technology's ability to correctly identify or predict a clinically meaningful status or outcome in the intended population and context of use. This confirms the technology's capacity to provide biologically meaningful insights relevant to health or disease states [5].

The framework has been widely adopted, accessed over 30,000 times, cited more than 250 times in peer-reviewed literature, and leveraged by over 140 teams including major regulatory bodies and research institutions [1].

Adaptation for Preclinical Research

The V3 Framework has been successfully adapted for preclinical research through the In Vivo V3 Framework, which addresses the unique challenges of animal studies. For example, the Jackson Laboratory's Envision platform uses this adapted framework to validate digital measures of mouse behavior and physiology [5]:

- Verification includes ensuring proper illumination, maintaining contrast between animals and their background, and confirming correct data collection parameters.

- Analytical Validation employs a triangulation approach, integrating biological plausibility, comparison to reference standards, and direct observation.

- Clinical Validation establishes whether digital measures meaningfully represent an animal's health or disease status within specific research contexts.

This framework enables continuous, longitudinal, and non-invasive digital monitoring that captures validated measures while supporting animal welfare [5].

Figure 1: The V3 Framework for Digital Health Validation. This diagram illustrates the three-stage process for validating digital health technologies, from technical verification to clinical relevance assessment.

Performance Comparison: Structured Frameworks Versus Ad Hoc Approaches

Diagnostic Accuracy and Reliability

Recent comprehensive research demonstrates the critical importance of structured validation frameworks for AI-based diagnostic tools. A 2025 systematic review and meta-analysis of 83 studies comparing generative AI models with physicians revealed several key findings about diagnostic performance [19]:

- Overall diagnostic accuracy for generative AI models was 52.1% (95% CI: 47.0-57.1%)

- No significant performance difference was found between AI models and physicians overall (p=0.10) or non-expert physicians (p=0.93)

- AI models performed significantly worse than expert physicians (difference in accuracy: 15.8%, p=0.007)

- Several models (GPT-4, GPT-4o, Llama3 70B, Gemini 1.0 Pro, Gemini 1.5 Pro, Claude 3 Sonnet, Claude 3 Opus, and Perplexity) demonstrated slightly higher performance compared to non-experts, though differences were not statistically significant

Table 2: Diagnostic Performance Comparison Between AI Models and Physicians

| Performance Metric | Generative AI Models | Non-Expert Physicians | Expert Physicians |
|---|---|---|---|
| Overall Accuracy | 52.1% (95% CI: 47.0-57.1%) | Comparable to AI (0.6% higher, p=0.93) | Significantly higher than AI (15.8% higher, p=0.007) |
| Range by Model | Varied substantially across different AI architectures | Not specified in study | Not specified in study |
| Statistical Significance | Reference | No significant difference from AI | Significantly superior to AI |
| Key Models Evaluated | GPT-4, GPT-3.5, GPT-4V, PaLM, Llama 2, Claude models | Not specified | Not specified |

These findings underscore the necessity of rigorous validation frameworks, as AI diagnostic tools currently demonstrate variable performance that does not yet match expert clinical judgment.

Impact on Healthcare Outcomes and Implementation

The implementation of structured frameworks directly impacts healthcare outcomes across multiple domains:

Preventive Medicine: Digital health technologies enable a "left shift" toward preventive care, with genomics, AI, wearable devices, and telemedicine facilitating early intervention [23]. This approach is particularly valuable for managing chronic diseases, which are projected to affect 48% of adults over 50 by 2050 [23].

Cardiovascular Disease Prevention: Laboratory medicine plays a crucial role in cardiovascular prevention through precision diagnostics and risk-stratification models. The integration of real-time biometric data with personalized AI algorithms shows promise for refining risk predictions and optimizing intervention strategies [24].

Operational Efficiency: Hyperautomation and AI are enhancing operational efficiency, minimizing errors, and streamlining workflows in laboratory medicine [24]. These improvements are particularly valuable given healthcare's increasing cost pressures.

Experimental Protocols for Framework Validation

Verification Methodology

The verification stage employs rigorous technical protocols to ensure data integrity:

Sensor Verification: For computer vision systems, this includes assurance of proper illumination, maintaining contrast between subjects and background, and confirming that sensors record events from correct sources with precise timestamps [5].

Data Collection Protocols: Continuous quality assurance checks throughout data collection, confirming consistent, uncorrupted data within intended parameters and timeframes.

System Integrity Checks: Validation of proper system operation under specified conditions, including environmental factors, power stability, and data transmission reliability.
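The checks described above (correct timestamps, uncorrupted data within intended parameters, no unexpected dropouts) can be sketched as a simple pass over a recorded stream. This is a minimal illustration, not a protocol from the cited sources: the `Sample` type, the `check_stream` function, the 1.5x gap threshold, and the example readings are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    timestamp_s: float  # seconds since recording start (assumed unit)
    value: float        # sensor reading in its native unit


def check_stream(samples, expected_interval_s, valid_range, gap_factor=1.5):
    """Return (index, issue) tuples for out-of-range values and timing faults."""
    low, high = valid_range
    issues = []
    for i, s in enumerate(samples):
        # Value must fall inside the intended measurement parameters.
        if not (low <= s.value <= high):
            issues.append((i, "out_of_range"))
        if i == 0:
            continue
        dt = s.timestamp_s - samples[i - 1].timestamp_s
        if dt <= 0:
            # Timestamps must strictly increase.
            issues.append((i, "non_monotonic_timestamp"))
        elif dt > gap_factor * expected_interval_s:
            # A long interval suggests dropped samples.
            issues.append((i, "gap"))
    return issues


# Hypothetical 1 Hz temperature stream with three injected faults.
stream = [
    Sample(0.0, 36.8),
    Sample(1.0, 36.9),
    Sample(2.0, 55.0),  # implausible reading
    Sample(5.0, 36.9),  # 3 s gap at a nominal 1 s interval
    Sample(4.5, 37.0),  # timestamp goes backwards
]
issues = check_stream(stream, expected_interval_s=1.0, valid_range=(30.0, 45.0))
for index, issue in issues:
    print(index, issue)
```

In practice the valid range and gap threshold would come from the sensor's technical specification rather than from fixed constants like these.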

Analytical Validation Methodology

Analytical validation employs multiple approaches to assess algorithm performance:

Reference Standard Comparison: Comparing digital measures against established reference standards. For example, comparing computer vision-derived respiratory rates with plethysmography data [5].

Triangulation Approach: Integrating multiple lines of evidence including biological plausibility, comparison to reference standards, and direct observation of measurable outputs [5].

Precision and Resolution Assessment: Evaluating the temporal and quantitative precision of digital measures, which in some cases can exceed that of traditional "gold standard" methods.
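One common way to quantify agreement with a reference standard is a Bland-Altman analysis, which reports the mean difference (bias) and 95% limits of agreement between paired measurements. The source does not name a specific statistic, so the choice of Bland-Altman is an assumption here. The function name `bland_altman` and the respiratory-rate values are hypothetical.

```python
from statistics import mean, stdev


def bland_altman(digital, reference):
    """Return bias and 95% limits of agreement for paired measurements."""
    if len(digital) != len(reference) or len(digital) < 2:
        raise ValueError("need at least two paired measurements")
    diffs = [d - r for d, r in zip(digital, reference)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the paired differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical respiratory rates (breaths/min), not data from the source.
cv_rate = [82.0, 95.0, 110.0, 101.0, 88.0, 120.0]     # computer vision
pleth_rate = [80.0, 96.0, 108.0, 103.0, 87.0, 118.0]  # plethysmography

bias, lower, upper = bland_altman(cv_rate, pleth_rate)
print(f"bias={bias:.2f}, limits of agreement=({lower:.2f}, {upper:.2f})")
```

Whether the resulting limits are acceptable depends on the context of use; the acceptance threshold should be pre-specified rather than chosen after seeing the data.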

Clinical Validation Methodology

Clinical validation establishes real-world relevance through:

Context-Specific Validation: Determining whether a digital measure is biologically meaningful within specific research or clinical contexts [5].

Correlation with Health Outcomes: Establishing relationships between digital measures and clinically meaningful statuses or outcomes.

Cross-Species Translation: For preclinical tools, validating measures across species to establish translational relevance.
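As an illustration of relating a digital measure to a health outcome, the sketch below computes a Spearman rank correlation using only the standard library. Spearman is an assumed choice here, since the source does not prescribe a statistic. The helper names and the activity and severity values are hypothetical.

```python
from statistics import mean


def average_ranks(values):
    """Rank values from 1 to n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    num = sum((a - mx) * (b - my) for a, b in zip(x, y))
    den = (sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y)) ** 0.5
    return num / den


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical data: daily activity counts and an ordinal severity score.
activity = [120, 340, 200, 510, 430]
severity = [5, 3, 4, 1, 2]
rho = spearman(activity, severity)
print(f"Spearman rho = {rho:.2f}")
```

A strong correlation alone does not establish clinical validity; it is one line of evidence to weigh alongside biological plausibility and the specific context of use.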

Figure 2: Experimental Validation Workflow. This diagram outlines the comprehensive methodological approach for validating digital health technologies across technical and clinical domains.

Essential Research Reagent Solutions for Digital Medicine Validation

The validation of digital medicine products requires specialized tools and platforms. The following research reagent solutions are essential for implementing comprehensive validation frameworks:

Table 3: Essential Research Reagents and Platforms for Digital Medicine Validation

| Research Reagent/Platform | Type | Primary Function | Validation Role |
|---|---|---|---|
| Digital Validation Platforms (ValGenesis, Kneat Gx, Veeva Quality Vault) | Software | Automated validation document control and workflow management | Streamlines verification protocols, ensures regulatory compliance, maintains audit trails [22] |
| Computer Vision Sensors | Hardware | Non-invasive monitoring of subject behavior and physiology | Enables continuous data collection for verification and analytical validation [5] |
| Reference Standard Instruments (Plethysmography) | Hardware | Established measurement of physiological parameters | Serves as comparator for analytical validation of digital measures [5] |
| AI/ML Model Validation Tools | Software | Validation of algorithm reliability and performance | Supports analytical validation, model drift detection, bias identification [22] |
| Digital Twins | Software | Virtual simulation of physical systems | Enables predictive validation and testing under varied conditions [22] |
| Cloud Data Analytics Platforms | Software | Secure data storage, sharing, and analysis | Facilitates continuous verification and remote audit capabilities [22] |

The regulatory and scientific imperative for structured frameworks in digital medicine is clear and urgent. As AI and digital health technologies continue their rapid advancement, robust validation approaches like the V3 Framework provide the necessary foundation for ensuring safety, efficacy, and reliability. The evidence reviewed here indicates that, while digital health technologies show significant promise, generative AI diagnostic models do not yet match expert physician performance [19].

The path forward requires continued collaboration between researchers, regulatory bodies, healthcare providers, and technology developers. This includes further refinement of validation frameworks, development of standardized performance metrics, and creation of transparent reporting standards. Additionally, as digital health technologies evolve toward greater adaptability and autonomy, validation frameworks must similarly advance to address challenges like AI model drift, continuous learning systems, and personalized algorithms.

Ultimately, structured validation frameworks are not barriers to innovation but rather essential enablers of responsible, effective digital medicine. By implementing rigorous, standardized approaches to verification, analytical validation, and clinical validation, the field can realize the full potential of digital health technologies while maintaining the trust of patients, clinicians, and regulators.

From Theory to Practice: Implementing V3 in Preclinical and Clinical Development

In the development of digital medicine products, verification serves as the critical first pillar, ensuring that the hardware sensors performing data acquisition function correctly and reliably. Within the established V3 framework—which encompasses verification, analytical validation, and clinical validation—verification specifically addresses the fundamental question: does the sensor or technology perform as specified under defined operating conditions? [1] [2] For researchers and drug development professionals, rigorous sensor verification is not optional; it is the foundational step that determines whether subsequently collected data can be trusted for scientific and clinical decision-making [25].

The growing reliance on sensor-based digital health technologies (sDHTs) in clinical trials and healthcare delivery underscores the critical importance of this process. These technologies enable the capture of high-resolution, real-world data from participants in remote settings, offering significant potential to accelerate drug development timelines and decrease clinical trial costs [25]. However, this potential can only be realized if the integrity of the raw sensor data is unimpeachable. This article provides a practical, comparative guide to methodologies and experimental protocols for verifying hardware and sensor data integrity, framed within the broader context of the V3 framework for digital medicine products.

The Verification Framework: From Principles to Practice

Core Principles of Sensor Verification

Sensor verification is distinct from, and prerequisite to, analytical and clinical validation. Where verification asks "Was the data measured correctly?", analytical validation asks "Does the algorithm process the data correctly?" and clinical validation asks "Does the output measure something clinically meaningful?" [2] [25] The verification process evaluates sensor performance against a pre-specified set of technical criteria, focusing on the accurate translation of physical phenomena into digital signals [2].

The core principles of data integrity—accuracy, consistency, and reliability—form the bedrock of verification activities [26]. In practical terms, this means ensuring that a sensor's output consistently reflects the true physiological signal it is designed to capture, across all intended use environments and populations.

The Expanding Framework: From V3 to V3+

The original V3 framework has been extended to V3+, which incorporates usability validation as an additional critical component [2]. This extension recognizes that technical performance alone is insufficient; sensors must also demonstrate acceptable user experience and ease of use to ensure reliable data collection in real-world settings. For hardware and sensors, usability flaws can directly compromise data integrity through inadvertent user errors such as incorrect device placement or accidental deactivation of permissions [2].

The V3+ framework emphasizes that verification considerations should be integrated throughout the entire development lifecycle, from early technical specifications through post-market surveillance [27]. This integrated approach aligns with regulatory expectations, including the FDA's guidance on Digital Health Technologies for Remote Data Acquisition, which establishes comprehensive standards for verification, validation, and usability evaluation [27].

Comparative Analysis of Verification Methodologies

A robust verification strategy employs multiple complementary methodologies to assess different aspects of sensor performance. The table below summarizes the key approaches, their applications, and implementation considerations.

Table 1: Comparative Analysis of Sensor Verification Methodologies

| Methodology | Primary Application | Key Performance Indicators | Implementation Considerations |
|---|---|---|---|
| Technical Bench Testing | Laboratory verification against reference instruments | Accuracy, precision, resolution, range | Requires calibrated reference standards; controls environmental variables |
| Algorithmic Verification | Data integrity checksums and hashing | Data completeness, corruption detection | SHA-256, MD5 algorithms; confirms data unchanged during storage/transmission [26] |
| Controlled Human Use Testing | Usability and reliability in controlled settings | Failure rates, adherence, user error frequency | Conducted with prototypes; identifies use-related risks before deployment [2] |
| Use-Related Risk Analysis | Foreseeable error identification and mitigation | Risk severity, occurrence likelihood, detectability | Mandatory for regulated devices; focuses on inherent safety by design [2] |
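The algorithmic verification row can be illustrated with a short checksum routine: a digest is recorded when the file is written, then recomputed after storage or transmission and compared. This is a minimal sketch using Python's standard `hashlib`. The function name `sha256_of_file`, the file name, and the simulated corruption are assumptions for demonstration.

```python
import hashlib
import os
import tempfile


def sha256_of_file(path, chunk_size=65536):
    """Stream a file through SHA-256 and return the hex digest."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


# A temporary file stands in for a sensor data export.
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "sensor_export.bin")  # hypothetical file name
    with open(path, "wb") as f:
        f.write(b"\x01\x02\x03\x04" * 1000)

    recorded = sha256_of_file(path)  # digest stored at acquisition time
    unchanged = sha256_of_file(path) == recorded

    with open(path, "r+b") as f:     # simulate one corrupted byte
        f.seek(10)
        f.write(b"\xff")
    corrupted_detected = sha256_of_file(path) != recorded

print("intact file verified:", unchanged)
print("corruption detected:", corrupted_detected)
```

MD5 also detects accidental corruption, but it is not collision-resistant, so SHA-256 is the safer default where deliberate tampering is a concern.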

Quantitative Performance Benchmarks

Establishing quantitative performance benchmarks is essential for objective verification. The following table illustrates example tolerance ranges for common sensor types used in digital medicine applications.

Table 2: Example Performance Tolerance Ranges for Common Sensor Types

| Sensor Type | Parameter Verified | Acceptable Tolerance Range | Testing Conditions |
|---|---|---|---|
| Accelerometer | Dynamic accuracy (step count) | ±5% against manual count | Treadmill (1-5 km/h), free-living simulation |
| Photoplethysmography (PPG) | Heart rate accuracy | ±3 BPM vs. ECG gold standard | Rest, controlled activity, postural changes |
| Electrodermal Activity | Amplitude response | ±5% against calibrated resistance source | Controlled chamber (temperature/humidity) |
| Temperature Sensor | Absolute accuracy | ±0.1°C against NIST-traceable standard | Range: 35°C-42°C; various ambient conditions |
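The tolerances in the table are of two kinds: absolute (±3 BPM, ±0.1°C) and relative (±5%). A sketch of how each might be checked against a reference reading follows. The function names and the measured values are hypothetical, and the tolerance figures are the example values from the table rather than regulatory limits.

```python
def within_absolute(measured, reference, tol):
    """True if the measurement is within +/- tol of the reference."""
    return abs(measured - reference) <= tol


def within_relative(measured, reference, tol_fraction):
    """True if the measurement is within +/- tol_fraction of the reference."""
    return abs(measured - reference) <= tol_fraction * abs(reference)


# Hypothetical paired readings (device under test, reference).
checks = {
    # PPG heart rate vs. ECG, tolerance +/-3 BPM
    "ppg_heart_rate": within_absolute(72.0, 74.0, tol=3.0),
    # Accelerometer step count vs. manual count, tolerance +/-5%
    "step_count": within_relative(1040, 1000, tol_fraction=0.05),
    "step_count_fail": within_relative(1060, 1000, tol_fraction=0.05),
    # Temperature vs. traceable standard, tolerance +/-0.1 degC
    "temperature_fail": within_absolute(37.25, 37.10, tol=0.1),
}
for name, passed in checks.items():
    print(name, "PASS" if passed else "FAIL")
```

A single pass or fail per reading is only a starting point; a verification report would normally summarize results across the full set of testing conditions listed in the table.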

Experimental Protocols for Sensor Verification

Protocol 1: Technical Performance Verification

Objective: To verify that a sensor meets its specified technical performance characteristics under controlled laboratory conditions.

Materials:

- Device Under Test (DUT) - the sensor or sDHT being verified

- Reference Measurement System (calibrated, traceable to recognized standards)

- Environmental Chamber (for controlling temperature, humidity)

- Vibration-isolated test platform

- Data acquisition and analysis software

Procedure:

- Stabilization: Place DUT and reference system in environmental chamber. Allow sufficient time for stabilization at each test condition (typically ≥30 minutes).

- Static Point Verification: Apply known, constant input signals across the sensor's specified measurement range. Record minimum 10 samples per point with appropriate settling time between measurements.

- Dynamic Response Testing: Apply time-varying input signals (sine waves, ramps) to characterize frequency response, hysteresis, and response time.

- Environmental Testing: Repeat static point verification at temperature and humidity extremes within the specified operating range.

- Data Integrity Checks: Implement checksum verification (e.g., SHA-256) on data files to confirm absence of corruption during storage and transmission [26].

- Analysis: Calculate accuracy (mean difference from reference), precision (standard deviation), linearity (R² of fit), and signal-to-noise ratio.
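The metrics named in the analysis step can be computed with the standard library alone, as sketched below. The function names and sample readings are hypothetical. The signal-to-noise definition used here (squared mean over variance for a constant input, in dB) is one of several conventions and is an assumption.

```python
import math
from statistics import mean, stdev


def accuracy_and_precision(readings, reference_value):
    """Bias (mean difference from reference) and precision (sample SD)."""
    return mean(readings) - reference_value, stdev(readings)


def linearity_r2(reference_points, sensor_means):
    """R^2 of a least-squares line through sensor means vs. reference."""
    mx, my = mean(reference_points), mean(sensor_means)
    sxx = sum((x - mx) ** 2 for x in reference_points)
    sxy = sum((x - mx) * (y - my)
              for x, y in zip(reference_points, sensor_means))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((y - (intercept + slope * x)) ** 2
                 for x, y in zip(reference_points, sensor_means))
    ss_tot = sum((y - my) ** 2 for y in sensor_means)
    return 1.0 - ss_res / ss_tot


def snr_db(readings):
    """SNR in dB for a constant input: mean squared over variance."""
    return 10.0 * math.log10(mean(readings) ** 2 / stdev(readings) ** 2)


# Hypothetical: 10 temperature readings at a 37.00 degC reference point.
readings = [37.02, 36.98, 37.01, 37.00, 36.99,
            37.03, 36.97, 37.00, 37.01, 36.99]
bias, precision = accuracy_and_precision(readings, reference_value=37.00)

# Hypothetical per-point sensor means across the measurement range.
r2 = linearity_r2([35.0, 37.0, 39.0, 41.0], [35.05, 37.00, 39.02, 40.96])

print(f"bias={bias:+.4f} degC, precision={precision:.4f} degC")
print(f"R^2={r2:.5f}, SNR={snr_db(readings):.1f} dB")
```

Each result would then be compared against the pre-specified acceptance criteria for the sensor, such as the example tolerances given earlier.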