Validating Biometric Monitoring Technologies: A 2025 Regulatory Framework for Clinical Research and Drug Development

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive guide to the evolving regulatory landscape for Biometric Monitoring Technologies (BioMeTs) in 2025. It explores the foundational V3 validation framework, details methodologies for integrating AI and multimodal systems, addresses critical challenges in data privacy and algorithmic bias, and establishes best practices for performance benchmarking. The content synthesizes current regulatory standards, technological trends, and strategic imperatives to ensure that biometric technologies are fit-for-purpose in clinical trials and biomedical research.

The Evolving Regulatory Landscape for BioMeTs in 2025

Defining Biometric Monitoring Technologies (BioMeTs) and Their Role in Modern Clinical Trials

Biometric Monitoring Technologies (BioMeTs) represent a category of technologies, primarily wearable devices, that digitally capture and measure physiological and behavioral data in a structured manner. They are defined as "devices that can be worn on human skin to continuously and closely monitor an individual’s activities, without interrupting or limiting the user’s motions" [1]. In the context of clinical research, BioMeTs facilitate the continuous, remote monitoring of patient outcomes outside traditional hospital settings, unlocking new dimensions of objective, real-world data [1]. The global wearable electronics market, estimated at $32.5 billion in 2022 and projected to reach $173.7 billion by 2030, underscores the rapid growth and adoption of these technologies [1]. Their application in clinical trials is driven by the need for more ecologically valid data, the ability to capture rare events, and the potential to reduce the frequency of site visits, thereby lowering patient burden and trial costs.

Categorization and Comparison of Major BioMeTs

BioMeTs can be categorized based on their form factor, physiological parameters measured, and their application in clinical trials. The table below summarizes the key technologies, their data modalities, and primary clinical use cases.

Table 1: Comparison of Major Biometric Monitoring Technologies in Clinical Research

| Technology Category | Measured Parameters/Data Modalities | Common Clinical Trial Applications | Key Strengths |
|---|---|---|---|
| Electroencephalography (EEG) | Neuronal electrical activity (brain rhythms) [2] | Motor imagery decoding for Brain-Computer Interfaces (BCIs), monitoring neurological disorders [2] | High temporal resolution (milliseconds) [3] |
| Functional Near-Infrared Spectroscopy (fNIRS) | Hemodynamic responses (changes in oxygenated/deoxygenated hemoglobin) [2] | Motor imagery BCIs, cognitive workload assessment, neurorehabilitation [2] [3] | Better spatial resolution than EEG; robust to motion artifacts [3] |
| Multimodal EEG-fNIRS | Combined neuro-electrical and hemodynamic activity [4] | Advanced BCIs, comprehensive brain state decoding in naturalistic settings [4] [3] | Complementary information enhances spatiotemporal resolution and classification performance [4] |
| Consumer Wearables (e.g., Smartwatches) | Heart rate, physical activity, oxygen saturation, sleep patterns [1] | Monitoring chronic diseases (cardiac, respiratory, neurodegenerative), detecting disease onset (e.g., arrhythmias) [1] | High patient acceptability, continuous data collection in real-world settings |

The convergence of these technologies with artificial intelligence (AI) and big data analytics is transforming their utility. AI algorithms can analyze vast amounts of biometric data to identify potential safety concerns, predict drug efficacy, and optimize trial operations [5] [6]. Furthermore, the rise of "risk-based everything" in clinical data management encourages sponsors to focus monitoring efforts on the most critical biometric data points, enhancing trial quality and efficiency [7].

Experimental Data and Performance Comparison

The validation of BioMeTs relies on rigorous experiments demonstrating their technical performance and clinical utility. The following section details key experimental protocols and outcomes for prominent BioMeT categories.

Multimodal Brain-Computer Interface (BCI) Decoding

Experimental Protocol: A landmark study creating a multimodal EEG-fNIRS dataset involved 18 subjects performing eight distinct motor imagery (MI) tasks related to hand, wrist, elbow, and shoulder movements [2]. Each subject completed 320 trials, resulting in a total of 5,760 trials of simultaneously recorded EEG and fNIRS data [2]. The protocol for each trial was as follows: a 2-second rest period with a fixation cross, a 2-second visual and text cue indicating the task, a 4-second motor imagery period, and a final 10-12 second rest period to allow fNIRS hemodynamic responses to return to baseline [2]. EEG was recorded using a 64-channel cap with a sampling frequency of 1000 Hz, while fNIRS was collected with a system using 8 sources and 8 detectors, resulting in 24 channels at a sampling rate of 7.8125 Hz [2].
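The timing and sampling figures in this protocol can be cross-checked with a few lines of arithmetic. The sketch below uses only the numbers quoted above; the constant and function names are illustrative, and the per-subject recording time ignores any breaks between trials, which the protocol description does not specify.

```python
# Values are taken from the protocol description; nothing is measured here.
REST_S = 2.0                  # fixation cross
CUE_S = 2.0                   # visual and text cue
IMAGERY_S = 4.0               # motor imagery period
FINAL_REST_S = (10.0, 12.0)   # closing rest period (min, max)

SUBJECTS = 18
TRIALS_PER_SUBJECT = 320
EEG_HZ = 1000.0
FNIRS_HZ = 7.8125


def trial_duration_range():
    """Return (shortest, longest) duration of one trial in seconds."""
    fixed = REST_S + CUE_S + IMAGERY_S
    return fixed + FINAL_REST_S[0], fixed + FINAL_REST_S[1]


def samples_in_window(rate_hz, seconds):
    """Number of samples a sensor produces in a window of the given length."""
    return rate_hz * seconds


total_trials = SUBJECTS * TRIALS_PER_SUBJECT
shortest, longest = trial_duration_range()
# Pure trial time per subject in minutes, excluding breaks between trials.
recording_minutes = tuple(TRIALS_PER_SUBJECT * d / 60 for d in (shortest, longest))

print(total_trials)                            # 5760
print(shortest, longest)                       # 18.0 20.0
print(samples_in_window(EEG_HZ, IMAGERY_S))    # 4000.0
print(samples_in_window(FNIRS_HZ, IMAGERY_S))  # 31.25
```

The roughly 128-fold difference in samples per imagery window (4,000 EEG samples against about 31 fNIRS samples) is one reason fusion pipelines have to resample or summarize one modality before combining the two.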

Performance Outcomes: When this dataset was used to train a typical deep learning model (ShallowConvNet) with data augmentation, the highest classification accuracy of 65.49% was achieved for distinguishing between two complex tasks: hand open/close and shoulder pronation/supination using EEG data [2]. This demonstrates the feasibility of decoding fine-grained motor intentions from the same limb, a significant advancement over traditional left/right hand MI paradigms.

Representation Learning for Few-Shot Classification

Experimental Protocol: To address the challenge of limited labeled data, a novel multimodal EEG–fNIRS Representation-learning Model (EFRM) was developed [4]. This model employs a two-stage process: a pre-training stage that learns both modality-specific and shared representations from large-scale unlabeled data, followed by a transfer learning stage where the model is adapted to specific tasks with minimal labeled samples [4]. The pre-training leveraged approximately 1,250 hours of brain signal recordings from 918 participants [4]. The model uses a Masked Autoencoder (MAE) to learn modality-specific features and contrastive learning to align the shared representations between EEG and fNIRS [4].
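To make the contrastive alignment step more concrete, the following is a minimal sketch of a generic InfoNCE-style loss between paired EEG and fNIRS embeddings. It is not the EFRM implementation: the function names, the temperature value, and the toy two-dimensional embeddings are assumptions made for illustration, and the exact loss used in [4] may differ.

```python
import math


def l2_normalize(vector):
    """Scale a non-zero vector to unit length so dot products are cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def info_nce(eeg_embeddings, fnirs_embeddings, temperature=0.1):
    """One-directional InfoNCE loss.

    Each EEG embedding should be most similar to the fNIRS embedding
    recorded in the same trial (same list index) and less similar to
    the fNIRS embeddings of all other trials in the batch.
    """
    eeg = [l2_normalize(v) for v in eeg_embeddings]
    fnirs = [l2_normalize(v) for v in fnirs_embeddings]
    losses = []
    for i, e in enumerate(eeg):
        # Scaled cosine similarity of EEG sample i to every fNIRS sample.
        logits = [sum(a * b for a, b in zip(e, f)) / temperature for f in fnirs]
        # Numerically stable log-sum-exp over the batch.
        top = max(logits)
        log_denominator = top + math.log(sum(math.exp(z - top) for z in logits))
        losses.append(log_denominator - logits[i])
    return sum(losses) / len(losses)


# Toy embeddings: correctly paired trials versus deliberately swapped pairs.
aligned = info_nce([[1.0, 0.0], [0.0, 1.0]], [[0.9, 0.1], [0.1, 0.9]])
swapped = info_nce([[1.0, 0.0], [0.0, 1.0]], [[0.1, 0.9], [0.9, 0.1]])
print(aligned < swapped)  # True
```

Minimizing a loss of this kind pulls same-trial EEG and fNIRS representations together, which is the general mechanism by which shared representations can be learned without labels.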

Performance Outcomes: The EFRM model demonstrated competitive performance compared to state-of-the-art supervised learning models, even with very few labeled samples [4]. It showed significant improvements in fNIRS classification performance by leveraging the shared domain knowledge learned from the multimodal pre-training. This approach provides a robust framework for building accurate BioMeT classifiers without the need for massive, expensively labeled datasets [4].

Table 2: Quantitative Performance of BioMeT Classification Models

| Model/Algorithm | Data Modality | Classification Task | Reported Performance |
|---|---|---|---|
| ShallowConvNet [2] | EEG | Hand MI vs. Shoulder MI | 65.49% Accuracy |
| Multimodal EFRM [4] | EEG-fNIRS | Few-shot brain-signal classification | Competitive with supervised models; significant gains for fNIRS |
| AI for Clinical Trial Risk [6] | Diverse (EHR, genomic, etc.) | Adverse Event Prediction | AUROC up to 96% |

The workflow for developing and validating such a multimodal model can be summarized as follows:

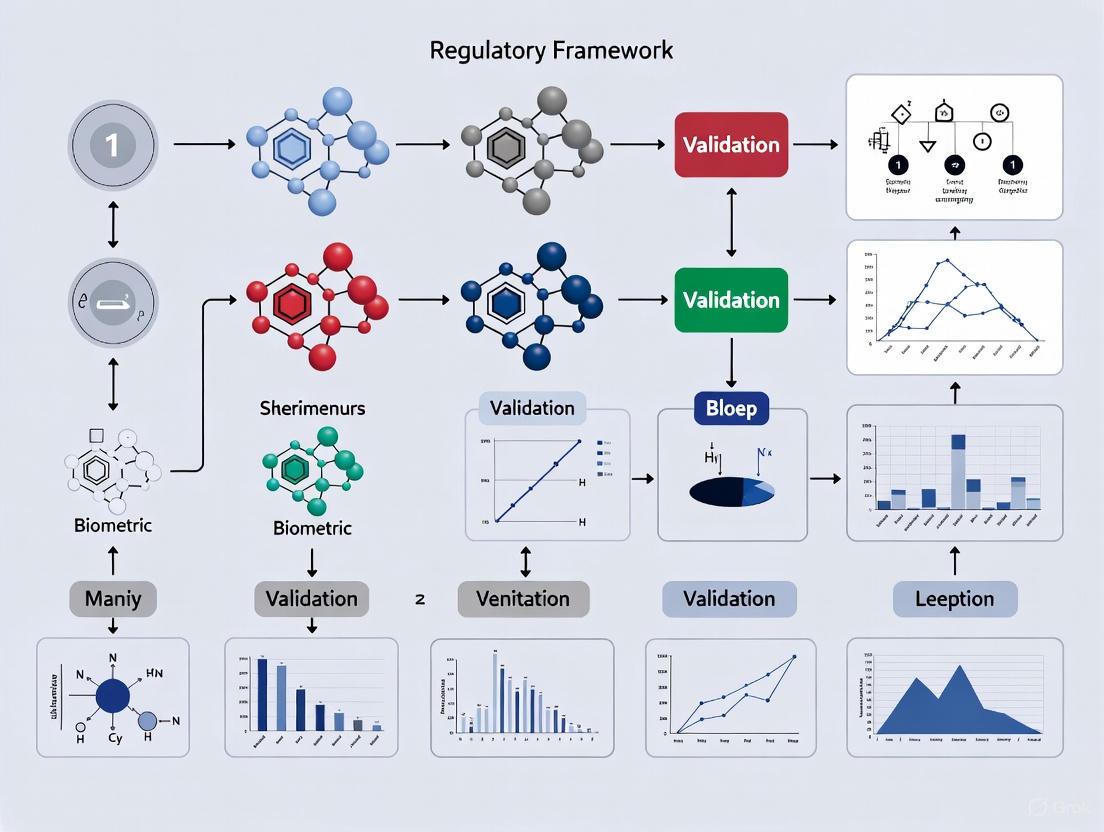

Diagram 1: Workflow for multimodal representation learning with EEG and fNIRS signals, enabling few-shot learning for classification tasks [4].

Detailed Methodologies and Signaling Pathways

A deep understanding of the underlying physiology and data processing workflows is critical for the valid application of BioMeTs.

The Neurovascular Coupling Pathway

The synergistic value of multimodal EEG-fNIRS is rooted in Neurovascular Coupling (NVC), the biological process that links neural activity to subsequent changes in cerebral blood flow [4]. This relationship forms the foundation for correlating electrical and hemodynamic brain signals.

Diagram 2: The neurovascular coupling pathway, linking neural activity measured by EEG to the hemodynamic response measured by fNIRS [4] [3].

Experimental Workflow for a Multimodal BCI Study

A standardized experimental protocol is essential for generating high-quality, reproducible data. The following workflow, derived from a public dataset creation study, outlines the key steps [2].

Diagram 3: Standardized experimental workflow for a multimodal EEG-fNIRS motor imagery study [2].

The Scientist's Toolkit: Key Research Reagent Solutions

The successful implementation of BioMeT studies requires a suite of specialized hardware, software, and data resources. The following table details essential components of a modern BioMeT research toolkit.

Table 3: Essential Research Reagent Solutions for BioMeT Studies

| Tool / Resource | Function / Description | Example Use Case |
|---|---|---|
| 64-channel EEG System (e.g., Neuroscan SynAmps2) | Records electrical brain activity with high temporal resolution from the whole scalp [2]. | Capturing event-related potentials (ERPs) or motor imagery-related oscillations [2]. |
| fNIRS System (e.g., NIRScout) | Measures hemodynamic responses (changes in HbO/HbR) using near-infrared light [2]. | Localizing brain activation associated with cognitive or motor tasks [2] [3]. |
| Multimodal EEG-fNIRS Caps | Integrated headgear allowing simultaneous, co-located recording of both modalities. | Ensuring data is spatially and temporally aligned for fusion algorithms [2] [4]. |
| Public BioMeT Datasets | Curated, annotated datasets for benchmarking algorithms (e.g., the 8-task MI dataset) [2]. | Training and validating machine learning models without primary data collection [2]. |
| Pre-trained Models (e.g., EFRM, BENDR) | Foundation models pre-trained on large-scale brain signal data [8] [4]. | Enabling few-shot or transfer learning for new tasks with limited labeled data [4]. |
| Data Fusion & ML Platforms | Software tools for artifact removal, feature extraction, and multimodal classification [3]. | Implementing advanced analysis pipelines, such as source decomposition or deep learning [3]. |

Biometric Monitoring Technologies are fundamentally reshaping the landscape of clinical trials by providing continuous, objective, and multidimensional data from patients in their natural environments. From consumer wearables tracking vital signs to sophisticated multimodal brain imaging systems like EEG-fNIRS, BioMeTs offer unprecedented insights into disease progression and treatment efficacy. The integration of these technologies with advanced AI and data-driven methodologies, such as risk-based monitoring and representation learning, is enhancing the efficiency, cost-effectiveness, and predictive power of clinical research. As the field matures, the continued validation of BioMeTs within robust regulatory frameworks will be paramount to fully realizing their potential in delivering better clinical solutions and accelerating drug development.

Biometric data, derived from the precise measurement of an individual's unique physical, physiological, or behavioral characteristics, represents a particularly sensitive category of personal information. Unlike passwords or identification cards, biometric identifiers—such as fingerprints, facial patterns, iris structures, and voiceprints—are inherently linked to an individual and are fundamentally immutable. This permanence and uniqueness make biometric data highly valuable for authentication and identification purposes in research and commercial applications, but also magnify the privacy and security risks associated with its processing. A data breach involving biometric information carries consequences far more severe than one involving traditional data, as individuals cannot change their fingerprints or facial structure once compromised.

The escalating integration of biometric monitoring technologies into research environments, particularly in clinical trials and pharmaceutical development, necessitates a rigorous understanding of the complex regulatory landscape governing its use. Researchers and organizations operating globally must navigate a fragmented framework of regulations that apply different standards, requirements, and protections. The European Union's General Data Protection Regulation (GDPR) establishes a stringent, rights-based approach, while the United States' Health Insurance Portability and Accountability Act (HIPAA) provides a sector-specific rule for health information. Concurrently, state-level laws like the California Consumer Privacy Act (CCPA), as amended by the CPRA, create a patchwork of requirements within the U.S. This guide provides a detailed comparative analysis of these core regulatory pillars, offering researchers and drug development professionals the essential knowledge for ensuring compliant and ethical handling of biometric data in a global context.

Core Regulatory Frameworks: A Detailed Analysis

The General Data Protection Regulation (GDPR)

The GDPR is a comprehensive data privacy law that applies to all processing of personal data of individuals in the European Economic Area (EEA), regardless of where the processing organization is located [9]. For researchers using biometric data, the most critical provision is its classification of biometric data specifically used for the purpose of uniquely identifying a person as a "special category of data" (akin to health data or religious beliefs) [10]. This classification triggers the highest level of protection under the regulation.

Lawful Basis for Processing: Processing biometric data under the GDPR requires identifying both a lawful basis for general processing (e.g., consent, public interest) and an additional, specific condition for processing special category data [11]. Explicit consent is the most common and often the most appropriate condition for research contexts [10]. This consent must be a freely given, specific, informed, and unambiguous indication of the data subject's wishes, demonstrated by a clear affirmative statement or action [11] [9]. The GDPR also mandates adherence to core principles, including purpose limitation, data minimization, and storage limitation, meaning researchers can only collect biometric data for specified, explicit, and legitimate purposes and retain it for no longer than necessary [9].

Data Subject Rights: The GDPR grants individuals robust rights over their data, including the right to access their biometric data, the right to rectification of inaccurate data, and the powerful right to erasure ('right to be forgotten') [10] [12]. Researchers must implement processes to facilitate these rights within the timelines stipulated by the regulation.

Security and Accountability: Organizations must implement appropriate technical and organizational measures to ensure a level of security appropriate to the high risk presented by biometric data processing, potentially including encryption, access controls, and regular security testing [10]. A Data Protection Impact Assessment (DPIA) is mandatory for processing operations that are likely to result in a high risk to individuals' rights and freedoms, a category that typically includes the systematic processing of biometric data [10]. The accountability principle requires organizations to be able to demonstrate their compliance with all these principles [9].

The Health Insurance Portability and Accountability Act (HIPAA)

HIPAA is a U.S. federal law that establishes standards for the protection of certain health information. Its scope is narrower than the GDPR, as it applies specifically to "covered entities" (healthcare providers, health plans, healthcare clearinghouses) and their "business associates" (contractors that handle protected health information on their behalf) [13] [12].

Protected Health Information (PHI): The core of HIPAA is the protection of Protected Health Information (PHI), which is individually identifiable health information that is created, received, or maintained by a covered entity [12]. Biometric data, such as a fingerprint used to identify a patient in a hospital, would be considered PHI if it is linked to health or payment information.

The Security Rule: The HIPAA Security Rule establishes national standards for securing electronic PHI (ePHI) [14]. It requires the implementation of administrative, physical, and technical safeguards to ensure the confidentiality, integrity, and availability of ePHI. The rule is flexible and scalable, allowing organizations to implement measures appropriate to their size and complexity. In response to rising cyber threats, 2025 proposed updates to the HIPAA Security Rule seek to strengthen these requirements by mandating specific controls like multi-factor authentication (MFA) for all access to ePHI, enhanced data encryption protocols (both at rest and in transit), and regular vulnerability scanning and penetration testing [14] [15].

Patient Rights: Under HIPAA, patients have rights to access their own health information, request amendments, and receive an accounting of disclosures [12]. However, these rights are generally less extensive than those under the GDPR; for instance, HIPAA does not provide a broad "right to be forgotten" that would allow a patient to demand the deletion of their medical records from a covered entity.

The California Consumer Privacy Act (CCPA/CPRA)

The CCPA, as amended by the CPRA, is a comprehensive state-level privacy law that grants California residents significant control over their personal information. It applies to for-profit businesses that operate in California and meet specific revenue or data processing thresholds [16].

Biometric Information as Sensitive Personal Information: The CCPA defines "personal information" broadly. While it does not single out biometric data as a "special category" in the same way as the GDPR, it classifies biometric information as a form of "sensitive personal information" [16]. This classification gives consumers the right to direct businesses to limit the use and disclosure of their sensitive personal information to that which is necessary to provide the requested goods or services.

Consumer Rights and Opt-Out Model: The CCPA is founded on an opt-out model, contrasting with the GDPR's opt-in default [17]. It grants California consumers the right to know what personal information is collected about them, the right to delete it, the right to correct inaccurate information, and the right to opt-out of the "sale" or "sharing" of their personal information [16]. Businesses must honor user-enabled global privacy controls, like the Global Privacy Control (GPC), as a valid opt-out request [17] [16].
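As an illustration of honoring a browser-level opt-out, the sketch below checks an incoming request for the Global Privacy Control signal. The GPC proposal defines a `Sec-GPC` request header whose value is `1` when the user has enabled the control; the function names and the plain-dictionary representation of request headers are assumptions for this example, and a real deployment would also need to cover other opt-out channels.

```python
def has_gpc_opt_out(headers):
    """Return True when the request carries a Global Privacy Control signal.

    `headers` is a plain dict of request header names to values. Header
    names are matched case-insensitively, as HTTP requires.
    """
    for name, value in headers.items():
        if name.lower() == "sec-gpc":
            return value.strip() == "1"
    return False


def allowed_to_sell_or_share(headers, account_opted_out=False):
    """Treat either a stored account-level opt-out or a GPC signal as an opt-out."""
    return not (account_opted_out or has_gpc_opt_out(headers))


print(allowed_to_sell_or_share({"Sec-GPC": "1"}))         # False
print(allowed_to_sell_or_share({"User-Agent": "demo"}))   # True
```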

2025 Regulatory Updates: New CCPA regulations approved in 2025 introduce significant new obligations for businesses, including requirements for cybersecurity audits and risk assessments for processing that presents significant risk to consumers [18]. These audits, with deadlines starting in 2028, must assess policies on MFA, encryption, access controls, and more. Furthermore, the regulations now specifically include "neural data" in the definition of sensitive personal information, reflecting the evolving nature of biometric monitoring technologies [18].

Comparative Analysis of Regulatory Pillars

The following tables provide a structured comparison of the core regulatory frameworks governing biometric data, highlighting their key differences and overlaps to aid in compliance strategy development.

Table 1: Core Definitions, Scope, and Legal Basis Across Regulatory Frameworks

| Aspect | GDPR | HIPAA | CCPA/CPRA |
|---|---|---|---|
| Geographic Scope | Applies to processing of EU/EEA residents' data, regardless of entity location [9]. | Primarily applies to U.S. covered entities and business associates [12]. | Applies to businesses collecting California residents' data, with extraterritorial effect [17]. |
| Definition of Biometric Data | "Personal data resulting from specific technical processing... relating to physical, physiological, or behavioural characteristics... which allow or confirm the unique identification of that natural person" [10]. | Not explicitly defined; falls under PHI if it is an identifier linked to health information (e.g., fingerprint for patient ID) [13]. | "Biometric information" is defined and classified as "sensitive personal information" [16]. |
| Primary Legal Basis for Research | Explicit consent (for special category data) [10] [11]. | Permitted for research with patient authorization or as part of limited preparatory activities [13]. | Consent not required by default; consumers have right to limit use of sensitive information [17] [16]. |
| Default Consent Model | Opt-In [17]. | N/A (Authorization required for specific uses). | Opt-Out [17]. |

Table 2: Security, Rights, and Enforcement Mechanisms

| Aspect | GDPR | HIPAA | CCPA/CPRA |
|---|---|---|---|
| Core Security Mandates | "Appropriate technical and organisational measures" [9]. DPIA mandatory for high-risk processing [10]. | Administrative, Physical, and Technical Safeguards. 2025 updates propose mandatory MFA, encryption, vulnerability scans [14]. | Cybersecurity audits required for high-risk processors per 2025 rules; must cover MFA, encryption, access controls [18]. |
| Key Data Subject Rights | Right to Access, Rectification, Erasure ('Right to be Forgotten'), Portability [10] [12]. | Right to Access, Request Amendment, Accounting of Disclosures [12]. | Right to Know, Delete, Correct, Opt-Out of Sale/Sharing, Limit Use of Sensitive Information [16]. |
| Penalty Structure | Up to €20 million or 4% of global annual turnover, whichever is higher [13] [9]. | Tiered fines from $100 to $1.5 million per violation category [13]. | Civil penalties up to $7,500 per intentional violation [13]. |
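The "whichever is higher" rule in the GDPR penalty row is easy to misread, so a short worked calculation may help. The sketch below computes only the upper bounds quoted in the table; the function names and the example turnover figures are hypothetical, and actual fines are set case by case by regulators.

```python
def gdpr_max_fine_eur(global_annual_turnover_eur):
    """Upper bound quoted above: EUR 20 million or 4% of turnover, whichever is higher."""
    return max(20_000_000, global_annual_turnover_eur * 4 / 100)


def ccpa_max_intentional_penalty_usd(intentional_violations):
    """Upper bound quoted above: USD 7,500 per intentional violation."""
    return 7_500 * intentional_violations


# Hypothetical sponsor with EUR 100 million turnover: the fixed amount applies.
print(gdpr_max_fine_eur(100_000_000))       # 20000000
# Hypothetical sponsor with EUR 1 billion turnover: the 4% figure applies.
print(gdpr_max_fine_eur(1_000_000_000))     # 40000000.0
print(ccpa_max_intentional_penalty_usd(3))  # 22500
```

The two GDPR ceilings coincide at a turnover of EUR 500 million; above that level the percentage-based ceiling is the binding one.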

Experimental Protocols for Regulatory Compliance

Validating research protocols against regulatory frameworks requires a systematic approach. The following experimental workflows and methodologies are designed to ensure compliant handling of biometric data.

Protocol 1: Lawful Basis Establishment for Biometric Processing

This protocol provides a step-by-step methodology for determining a lawful basis for processing biometric data under the GDPR, which requires both a general lawful basis and a specific condition for processing special category data.

Methodology Summary: The decision sequence follows the stringent requirements of GDPR Article 9. The primary and most straightforward path is securing explicit consent, which requires a clear statement from the data subject and must be accompanied by a viable, non-biometric alternative (e.g., a PIN code for system access) to ensure the choice is truly free [11]. If consent is not feasible, two alternative grounds may be considered: substantial public interest, which must be rooted in EU or member state law, and vital interests, which apply strictly to scenarios where processing is necessary to protect someone's life. If none of these conditions can be met, the processing of special category biometric data is not permitted under the GDPR.
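The decision sequence described in this protocol can be written down as a small function. This is a sketch of the three conditions named above only; the class, field, and function names are hypothetical, the general lawful basis for processing still has to be established separately, and the output is a documentation aid rather than a legal determination.

```python
from dataclasses import dataclass


@dataclass
class ProcessingContext:
    """Facts a study team would record before processing biometric data."""
    explicit_consent_obtained: bool = False
    non_biometric_alternative_offered: bool = False
    public_interest_basis_in_law: bool = False   # rooted in EU or member state law
    necessary_to_protect_life: bool = False


def special_category_condition(ctx):
    """Return the first applicable condition from the protocol, or None."""
    # Consent only counts as free when a non-biometric alternative exists.
    if ctx.explicit_consent_obtained and ctx.non_biometric_alternative_offered:
        return "explicit consent"
    if ctx.public_interest_basis_in_law:
        return "substantial public interest"
    if ctx.necessary_to_protect_life:
        return "vital interests"
    return None  # processing is not permitted under this protocol


consent_only = ProcessingContext(explicit_consent_obtained=True)
print(special_category_condition(consent_only))  # None
with_alternative = ProcessingContext(
    explicit_consent_obtained=True, non_biometric_alternative_offered=True
)
print(special_category_condition(with_alternative))  # explicit consent
```

The first example shows the point made in the summary: consent collected without a viable alternative does not satisfy the condition.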

Protocol 2: Cross-Border Research Compliance Workflow

For research institutions operating internationally, managing data flows and compliance across multiple jurisdictions is a critical function. This workflow outlines the key steps.

Methodology Summary: This workflow emphasizes a sequential compliance strategy. The process begins with a Jurisdiction Assessment to identify all applicable laws based on the data subjects' locations and the research entity's operations [17] [9]. This is followed by Data Residency & Transfer Mapping to understand where data is stored and transferred, ensuring GDPR restrictions on international transfers are respected. The core of the protocol is the Dual Compliance Check, where researchers must implement both GDPR requirements (like DPIAs and data subject rights procedures) and HIPAA mandates (like Security Rule safeguards and BAAs) for projects involving EU and US patient data [12]. Finally, a State Law Analysis is required to comply with specific statutes like the CCPA, particularly its opt-out and sensitive information rules [17] [18]. This entire process must be underpinned by unified technical safeguards (e.g., encryption, MFA) and meticulous documentation for accountability and audit purposes [10] [14].
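A first pass at the Jurisdiction Assessment and the resulting checklist might look like the sketch below. The region tags (`"EEA"`, `"US-CA"`), the boolean inputs, and the obligation lists are simplified assumptions drawn from the steps named in this workflow; they are not a complete statement of any framework's applicability tests.

```python
# Obligations named in the workflow above, grouped by framework.
OBLIGATIONS = {
    "GDPR": ["DPIA", "data subject rights procedures", "international transfer mapping"],
    "HIPAA": ["Security Rule safeguards", "business associate agreements"],
    "CCPA/CPRA": ["opt-out handling", "limits on sensitive information use"],
}

# Safeguards the workflow applies regardless of jurisdiction.
SHARED_SAFEGUARDS = ["encryption", "MFA", "documentation"]


def applicable_frameworks(subject_regions, is_hipaa_covered, meets_ccpa_thresholds):
    """Map a study's footprint to the frameworks discussed in this guide.

    subject_regions: set of hypothetical region tags such as "EEA" or "US-CA".
    """
    frameworks = []
    if "EEA" in subject_regions:
        frameworks.append("GDPR")
    if is_hipaa_covered:
        frameworks.append("HIPAA")
    if "US-CA" in subject_regions and meets_ccpa_thresholds:
        frameworks.append("CCPA/CPRA")
    return frameworks


def compliance_checklist(frameworks):
    """Combine shared safeguards with framework-specific obligations."""
    if not frameworks:
        return []
    checklist = list(SHARED_SAFEGUARDS)
    for framework in frameworks:
        checklist.extend(OBLIGATIONS[framework])
    return checklist


study = applicable_frameworks({"EEA", "US-CA"}, is_hipaa_covered=True, meets_ccpa_thresholds=True)
print(study)  # ['GDPR', 'HIPAA', 'CCPA/CPRA']
print(compliance_checklist(study))
```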

The Scientist's Toolkit: Essential Research Reagent Solutions

Navigating the complex regulatory environment requires a set of procedural and technical "reagents." The following table details essential components for a compliant biometric data research framework.

Table 3: Essential Compliance and Security Solutions for Biometric Data Research

| Research Reagent Solution | Function & Purpose | Applicable Regulatory Framework |
|---|---|---|
| Data Protection Impact Assessment (DPIA) Template | A structured tool to systematically identify and mitigate risks of data processing activities before they begin. | GDPR (Mandatory for high-risk processing) [10]. |
| Multi-Factor Authentication (MFA) | A technical security control that requires multiple verification methods to access data, drastically reducing risk of unauthorized access. | HIPAA (Proposed 2025 Mandate) [14], CCPA Cybersecurity Audits [18]. |
| Global Privacy Control (GPC) Signal Recognition | Technical capability to detect and honor a user's browser-level opt-out request for data sale/sharing. | CCPA/CPRA (Legally recognized) [17] [16]. |
| Encryption Protocols (Data at Rest & in Transit) | Cryptographic methods to render data unreadable without a key, protecting confidentiality and integrity. | GDPR (Appropriate security) [9], HIPAA (Proposed 2025 Mandate) [14]. |
| Business Associate Agreement (BAA) / Data Processing Agreement (DPA) | A legally binding contract that ensures third-party vendors (processors) provide sufficient data protection guarantees. | HIPAA (Required for Business Associates) [12], GDPR (Required for Data Processors) [9]. |
| Consent Management Platform (CMP) | A software tool to obtain, manage, and document user consent preferences, ensuring they are specific and withdrawable. | GDPR (Explicit Consent) [10] [11]. |

The field of digital medicine, propelled by advances in sensor technology and data analytics, has given rise to Biometric Monitoring Technologies (BioMeTs). These connected technologies process data from mobile sensors to generate measures of behavioral and physiological function [19]. However, the interdisciplinary nature of digital medicine—drawing from engineering, clinical science, data science, and regulatory science—has led to fragmented terminology and evaluation standards, creating a critical need for a unified framework to ensure these technologies are fit-for-purpose in clinical research and practice [19] [20]. The V3 framework (Verification, Analytical Validation, and Clinical Validation) emerged to address this gap, providing a structured approach to evaluating digital measures across technical, analytical, and clinical dimensions [19].

This framework has become the de facto standard for assessing sensor-based digital health technologies (sDHTs), with the original framework accessed over 30,000 times and cited in more than 250 peer-reviewed publications since its 2020 introduction [21]. Its adoption by regulatory bodies, including the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA), underscores its importance in the regulatory validation of BioMeTs for clinical trials and healthcare applications [21] [22].

The Core Components of the V3 Framework

The V3 framework comprises three distinct but interconnected components that form a comprehensive evidence-generation process for BioMeTs [19].

Verification

Verification constitutes the foundational layer, focusing on the technical performance of the sensors themselves. This process involves systematic evaluation by hardware manufacturers to ensure that sample-level sensor outputs meet pre-specified criteria [19] [22]. Verification occurs computationally (in silico) and at the bench (in vitro), confirming that the raw data captured by the sensor has integrity and that the source is correctly identified [19] [23].

In practice, verification includes checks throughout data collection. For computer vision sensors, this might involve ensuring proper illumination, maintaining contrast between subjects and their background, and confirming that recordings come from the correct sources with precise timestamps [23]. This stage serves as a quality assurance process, verifying consistent and uncorrupted data collection from initiation to completion of a study [23].
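One of the simplest verification checks mentioned here, confirming precise timestamps, can be automated. The sketch below flags non-increasing timestamps and gaps larger than expected for a stated sampling rate; the function name and the 50% tolerance are illustrative assumptions, and real acceptance criteria would come from the sensor's pre-specified requirements.

```python
def check_timestamps(timestamps_s, expected_rate_hz, tolerance=0.5):
    """Return (index, issue) pairs for suspicious sample timestamps.

    A sample is flagged as "non-increasing" when it does not come after
    its predecessor, and as "gap" when the interval to its predecessor
    exceeds the expected sampling interval by more than `tolerance`
    (0.5 means 50% longer than expected).
    """
    expected_gap = 1.0 / expected_rate_hz
    issues = []
    for i in range(1, len(timestamps_s)):
        gap = timestamps_s[i] - timestamps_s[i - 1]
        if gap <= 0:
            issues.append((i, "non-increasing"))
        elif gap > expected_gap * (1 + tolerance):
            issues.append((i, "gap"))
    return issues


clean = [0.0, 0.1, 0.2, 0.3]
corrupted = [0.0, 0.1, 0.5, 0.4]
print(check_timestamps(clean, expected_rate_hz=10))      # []
print(check_timestamps(corrupted, expected_rate_hz=10))  # [(2, 'gap'), (3, 'non-increasing')]
```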

Analytical Validation

Analytical validation assesses the performance of algorithms that transform raw sensor data into meaningful physiological or behavioral metrics [19] [22]. This component bridges engineering and clinical expertise, translating evaluation procedures from bench settings to in vivo contexts [19]. Analytical validation determines whether the quantitative metrics generated by an algorithm accurately represent the captured events with appropriate precision and resolution [23].

A key challenge in analytical validation is that digital technologies often measure biological events with greater temporal precision than traditional "gold standard" methods, and for novel endpoints, no direct comparator may exist [23]. To address this, researchers employ a triangulation approach, integrating multiple lines of evidence including biological plausibility, comparison to reference standards where available, and direct observation of measurable outputs [23]. Successful analytical validation requires collaboration between machine learning scientists and biologists to establish clear definitions ensuring digital measures accurately reflect biological phenomena [23].
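Where a reference standard is available, one common way to quantify agreement is to compute the bias, mean absolute error, and 95% limits of agreement between the algorithm output and the reference. The source does not prescribe this particular analysis; the sketch below is an illustration under that assumption, with a hypothetical function name and made-up heart-rate values.

```python
from statistics import mean, stdev


def agreement_summary(device_values, reference_values):
    """Summarize agreement between paired device and reference measurements."""
    if len(device_values) != len(reference_values):
        raise ValueError("measurements must be paired")
    if len(device_values) < 2:
        raise ValueError("at least two pairs are needed")
    differences = [d - r for d, r in zip(device_values, reference_values)]
    bias = mean(differences)
    spread = stdev(differences)  # sample standard deviation of the differences
    return {
        "bias": bias,
        "mean_absolute_error": mean(abs(x) for x in differences),
        "limits_of_agreement": (bias - 1.96 * spread, bias + 1.96 * spread),
    }


# Made-up paired heart-rate readings (beats per minute).
device = [61, 72, 80, 95]
reference = [60, 70, 81, 94]
summary = agreement_summary(device, reference)
print(summary["bias"])                 # 0.75
print(summary["mean_absolute_error"])  # 1.25
```

For novel endpoints with no comparator, a numerical summary like this covers only one leg of the triangulation; biological plausibility and direct observation still have to be argued separately.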

Clinical Validation

Clinical validation establishes whether a digital measure acceptably identifies, measures, or predicts a meaningful clinical, biological, physical, functional state, or experience in a specified context of use [19] [22]. Typically performed by clinical trial sponsors, this validation demonstrates that the BioMeT-derived measure is biologically meaningful and relevant to health or disease states within a specific research context [19] [23].

This component builds upon analytical validation by demonstrating that digital measures provide insights that are both interpretable and actionable within the intended research or clinical setting [23]. For example, in a toxicology study, clinically validated locomotor activity data may serve as a relevant biomarker for assessing drug-induced central nervous system effects [23]. Clinical validation is typically performed on cohorts of patients with and without the phenotype of interest to establish clinical relevance [19].
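When clinical validation compares cohorts with and without the phenotype of interest, sensitivity and specificity are a natural first summary of how well a digital measure separates them. The sketch below assumes the measure has already been reduced to a yes/no flag per participant; the function name and the example data are hypothetical, and the choice of performance metrics for a given context of use is a study design decision.

```python
def sensitivity_specificity(measure_positive, has_phenotype):
    """Compute sensitivity and specificity from paired boolean lists.

    measure_positive[i]: whether the digital measure flagged participant i.
    has_phenotype[i]:    whether participant i truly has the phenotype.
    """
    tp = fn = tn = fp = 0
    for flagged, truth in zip(measure_positive, has_phenotype):
        if truth and flagged:
            tp += 1
        elif truth:
            fn += 1
        elif flagged:
            fp += 1
        else:
            tn += 1
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("both cohorts must contain at least one participant")
    return tp / (tp + fn), tn / (tn + fp)


# Three participants with the phenotype, three without (made-up data).
flagged = [True, True, False, False, True, False]
truth = [True, True, True, False, False, False]
sensitivity, specificity = sensitivity_specificity(flagged, truth)
print(round(sensitivity, 3), round(specificity, 3))  # 0.667 0.667
```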

Table 1: Core Components of the V3 Framework

| Component | Primary Focus | Key Activities | Typical Responsible Party |
|---|---|---|---|
| Verification | Sensor performance and data integrity | Sample-level sensor evaluation; in silico and in vitro testing; data quality checks | Hardware manufacturers |
| Analytical Validation | Algorithm performance | Assessment of data processing algorithms; precision and accuracy testing; triangulation with reference standards | Algorithm developer (vendor or clinical trial sponsor) |
| Clinical Validation | Clinical relevance | Evaluation in target population; assessment of ability to identify/predict clinical states; determination of biological meaning | Clinical trial sponsor |

The V3+ Extension: Integrating Usability Validation

As clinical research sponsors and healthcare organizations implemented sDHTs at scale, challenges related to user-centricity and real-world implementation emerged [22]. In response, the V3 framework was extended to V3+ through the addition of a fourth component: usability validation [22].

The Need for Usability Validation

Real-world examples highlighted limitations in the original V3 framework. In the Wearable Assessment in the Clinic and at Home in Parkinson's Disease study, tremor classification data were missing for 50% of participants due to inadvertent deactivation of device permissions [22]. Similarly, the FDA recalled a specific blood glucose monitor because the product could inadvertently switch units of measure when batteries were inserted during normal use [22]. These examples underscored that even technically sound devices could fail due to usability issues, necessitating an expanded framework.

Components of Usability Validation

The usability validation component of V3+ comprises four key activities [22]:

- Develop the use specification: Creating a comprehensive description of intended user groups, their interactions with the sDHT, and their motivations.

- Conduct a use-related risk analysis: Identifying foreseeable risks associated with sDHT use, including use-errors and potential harms from missing data.

- Conduct iterative formative evaluation: Testing sDHT prototypes with users to identify use-errors and inform design improvements.

- Conduct summative evaluation: Formal testing to demonstrate that the sDHT can be used without serious use-errors for critical tasks.

Usability validation ensures that sDHTs can be used optimally at scale by diverse users, paving the way for more inclusive, reliable, and trustworthy digital measures within clinical research and care [22].

Experimental Protocols and Methodologies

Implementing the V3 framework requires rigorous experimental methodologies at each validation stage. Below, we detail protocols for generating evidence for each V3 component.

Verification Protocols

Verification focuses on the data supply chain, ensuring integrity from hardware sensors through data storage [19]. For a computer vision-based monitoring system, verification would include [23]:

- Illumination verification: Confirm consistent, adequate lighting conditions across all recording environments using calibrated light meters. Document minimum and maximum lux values during acquisition periods.

- Subject-background contrast validation: Quantify contrast ratios between subject and background across all possible environments. Establish minimum acceptable contrast ratio threshold (e.g., ≥3:1).

- Source identification testing: Implement automated checks confirming recordings originate from correct sources with proper animal/patient identification and precise timestamps.

- Data integrity checks: Establish protocols for verifying consistent, uncorrupted data collection throughout intended study period, including checks for data gaps or corruption.
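The data integrity check in the list above can be sketched in a few lines. This is a minimal illustration, not a prescribed method: the function names `find_gaps` and `completeness`, the 50% tolerance, and the 1 Hz example recording are all assumptions chosen for demonstration.

```python
def find_gaps(timestamps, expected_interval, tolerance=0.5):
    """Return (gap_start, gap_end) pairs where consecutive samples are
    further apart than expected_interval * (1 + tolerance).
    The tolerance value is an illustrative assumption."""
    limit = expected_interval * (1 + tolerance)
    return [(a, b) for a, b in zip(timestamps, timestamps[1:]) if b - a > limit]


def completeness(timestamps, expected_interval):
    """Fraction of expected samples actually present over the recording span."""
    if len(timestamps) < 2:
        return 0.0
    expected = round((timestamps[-1] - timestamps[0]) / expected_interval) + 1
    return min(1.0, len(timestamps) / expected)


# Hypothetical 1 Hz recording (seconds) with samples missing between t=3 and t=7.
ts = [0.0, 1.0, 2.0, 3.0, 7.0, 8.0, 9.0]
gaps = find_gaps(ts, expected_interval=1.0)
print(gaps)                    # [(3.0, 7.0)]
print(completeness(ts, 1.0))   # 0.7
```

In practice the completeness value would be compared against a quality threshold fixed before data collection begins.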

Analytical Validation Protocols

Analytical validation employs a triangulation approach when traditional gold standards are inadequate [23]. For validating a digital locomotion measure:

- Reference standard comparison: Compare digital locomotion measures against manually scored video observations by trained experts. Calculate agreement statistics (e.g., intraclass correlation coefficients, Cohen's kappa).

- Biological plausibility assessment: Examine whether digital measures demonstrate expected responses to known stimuli or interventions. For example, assess if locomotor activity appropriately decreases following sedative administration.

- Precision evaluation: Conduct test-retest reliability studies under consistent conditions to establish within-subject and between-subject variability.

- Cross-method correlation: Compare digital measures with established but imperfect methods (e.g., photobeam breaks) to identify consistent response patterns despite absolute value differences.
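For the reference standard comparison above, Cohen's kappa quantifies agreement between categorical labels (for example, per-epoch "move" versus "rest") after correcting for chance agreement. The sketch below is a plain-Python illustration; the label names and the ten-epoch dataset are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two equal-length sequences of categorical labels."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(rater_a)
    # Observed proportion of epochs on which the two sources agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each source's label frequencies.
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    labels = set(count_a) | set(count_b)
    expected = sum(count_a[l] * count_b[l] for l in labels) / n ** 2
    if expected == 1.0:
        return 1.0  # both sources used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical per-epoch labels: expert video scoring vs. the digital measure.
manual = ["move", "move", "rest", "rest", "move", "rest", "rest", "move", "rest", "rest"]
digital = ["move", "move", "rest", "rest", "rest", "rest", "rest", "move", "rest", "move"]
kappa = cohens_kappa(manual, digital)
print(round(kappa, 3))  # 0.583
```

Here raw agreement is 80%, but kappa is lower because roughly half of that agreement would be expected by chance given the label frequencies.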

Clinical Validation Protocols

Clinical validation establishes biological meaningfulness and context relevance [23]. For validating a digital measure of respiratory rate in a toxicology study:

- Population stratification: Enroll animals or patients with and without the condition of interest (e.g., drug-induced respiratory depression vs. healthy controls).

- Clinical reference standard testing: Compare digital respiratory measures against clinically accepted standards (e.g., plethysmography) using Bland-Altman analysis and correlation coefficients.

- Intervention response assessment: Evaluate whether digital measures detect expected changes following interventions with known effects (e.g., respiratory stimulants or depressants).

- Dose-response characterization: For toxicology studies, establish whether the digital measure demonstrates dose-dependent responses to toxicant exposure.

- Outcome prediction validation: Assess whether the digital measure predicts clinically relevant outcomes (e.g., need for medical intervention, survival).
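The Bland-Altman analysis mentioned in the clinical reference standard step reduces to a mean difference (bias) and limits of agreement. The following is a minimal sketch using only the Python standard library; the respiratory rate values are invented for illustration, and the 1.96 multiplier assumes approximately normally distributed differences.

```python
from statistics import mean, stdev


def bland_altman(test, reference):
    """Return (bias, lower_limit, upper_limit) for paired measurements.
    Limits of agreement are bias +/- 1.96 * SD of the paired differences."""
    if len(test) != len(reference) or len(test) < 2:
        raise ValueError("need at least two paired measurements")
    diffs = [t - r for t, r in zip(test, reference)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical respiratory rates (breaths/min): digital measure vs. plethysmography.
digital_rr = [14.0, 16.0, 18.0, 21.0, 25.0]
pleth_rr = [15.0, 16.0, 17.0, 20.0, 24.0]
bias, lower, upper = bland_altman(digital_rr, pleth_rr)
print(f"bias={bias:.2f}, limits of agreement=({lower:.2f}, {upper:.2f})")
```

Whether the resulting limits are acceptable depends on the context of use and should be judged against a pre-specified clinically tolerable difference.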

Table 2: Methodological Approaches Across V3 Components

| V3 Component | Primary Methodologies | Key Outcome Measures | Acceptance Criteria |
|---|---|---|---|
| Verification | Technical specification testing; data integrity checks; environmental testing | Data completeness; signal-to-noise ratio; adherence to technical specifications | Meeting all pre-specified technical performance criteria |
| Analytical Validation | Comparison to reference standards; triangulation; precision studies; reliability testing | Agreement statistics (ICC, kappa); correlation coefficients; sensitivity; specificity | Sufficient accuracy and precision for intended measurement purpose |
| Clinical Validation | Cohort studies; intervention studies; outcome prediction; dose-response studies | Clinical accuracy; predictive values; effect sizes; clinical outcome correlations | Statistically significant association with clinical states or outcomes |

Framework Adaptations and Applications

The V3 framework has demonstrated remarkable adaptability across domains, with tailored implementations emerging for specific applications.

Preclinical In Vivo Adaptation

The In Vivo V3 Framework adapts the original clinical framework for preclinical animal research [23] [24]. This adaptation addresses unique challenges including sensor verification in variable environments, and analytical validation ensuring data outputs accurately reflect intended physiological or behavioral constructs in animal models [24].

In preclinical applications, the framework must account for species-specific behaviors, the need for non-invasive monitoring, and requirements for continuous data collection in home cage environments [23] [24]. The framework emphasizes replicability across species and experimental setups—an aspect critical due to inherent variability in animal models [24].

Regulatory Alignment

The V3 framework aligns with regulatory expectations for fit-for-purpose evaluation of digital health technologies [19] [22]. In the United States, regulators evaluate claims made for a product rather than the product's capabilities per se, making the framework's structured evidence generation particularly valuable for regulatory submissions [19].

The framework has been referenced by both the FDA and EMA in discussions of digital measure validation [21] [22]. This regulatory recognition positions V3 as a valuable tool for sponsors seeking qualification of digital measures as drug development tools or for regulatory endorsement of digital endpoints [22].

Comparative Analysis: V3 Versus Traditional Validation Approaches

The V3 framework addresses critical limitations of traditional validation approaches for digital measures.

Limitations of Traditional Approaches

Traditional validation methods for medical devices often employ siloed practices with discipline-specific terminology and standards [19]. This fragmentation creates confusion and inefficiency in evaluating digital technologies that inherently span multiple disciplines [19]. Additionally, traditional approaches often fail to distinguish between the technical validation of sensors and algorithms versus the clinical validation of derived measures [19].

For digital measures that capture novel constructs or provide higher temporal resolution than existing methods, traditional validation against potentially suboptimal "gold standards" can be particularly challenging [23]. The lack of a structured framework for evaluating multimodal and composite digital measures further limits traditional approaches [20].

Advantages of the V3 Framework

The V3 framework provides a comprehensive, structured approach that explicitly addresses the multi-layered nature of digital measure validation [19]. By separating verification, analytical validation, and clinical validation, the framework enables appropriate expertise to be applied at each stage while maintaining an integrated view of the overall evidence generation process [19].

The framework's common vocabulary bridges disciplinary divides, facilitating more effective communication and collaboration across engineering, data science, clinical, and regulatory domains [19]. This shared language enables generation of a common and meaningful evidence base for BioMeTs [19].

For novel measures, the framework's recognition of triangulation approaches to analytical validation provides methodological flexibility when direct comparison to gold standards is impossible or inappropriate [23]. This is particularly valuable for digital measures capturing previously unmeasurable aspects of physiology or behavior.

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing the V3 framework requires specific methodological tools and approaches at each validation stage. The table below details key "research reagent solutions" essential for executing V3 evaluations.

Table 3: Essential Research Reagents and Tools for V3 Implementation

| Tool Category | Specific Examples | Function in V3 Implementation | Key Considerations |
|---|---|---|---|
| Reference Standard Technologies | Plethysmography systems; manual video observation protocols; clinical grade lab equipment | Provides comparator measures for analytical and clinical validation | Select based on measurement accuracy, feasibility, and relevance to target construct |
| Data Quality Assessment Tools | Signal-to-noise calculation algorithms; data completeness dashboards; outlier detection scripts | Enables verification of data integrity throughout collection pipeline | Should be implemented proactively with pre-specified quality thresholds |
| Statistical Analysis Packages | Agreement statistics (ICC, kappa); Bland-Altman analysis; correlation analyses; mixed effects models | Supports quantitative assessment across all V3 components | Selection should align with research questions and data characteristics |
| Sensor Testing Equipment | Light meters; temperature chambers; motion simulators; signal generators | Facilitates technical verification under controlled conditions | Should reflect intended use environment conditions |
| Usability Testing Frameworks | Formative evaluation protocols; use-error categorization systems; task success metrics | Supports usability validation in V3+ implementation | Should involve representative users from target population |

The V3 framework represents a significant advancement in the systematic evaluation of Biometric Monitoring Technologies, providing a structured approach to establishing whether digital measures are fit-for-purpose [19]. Its core components—verification, analytical validation, and clinical validation—address the multi-layered evidence needs for digital measures across technical, analytical, and clinical dimensions [19].

The framework's evolution to V3+ through the incorporation of usability validation demonstrates its responsiveness to real-world implementation challenges [22]. This extension ensures that technologies are not only technically sound and clinically relevant but also user-centric and scalable across diverse populations and settings [22].

As digital measures continue to gain acceptance as primary endpoints in clinical trials and find broader application in clinical practice, the V3 framework provides a foundational methodology for generating the evidence necessary to support regulatory, clinical, and payer decision-making [19] [22]. The framework's adaptability across clinical and preclinical contexts further enhances its utility in the translational pipeline [23] [24].

For researchers, scientists, and drug development professionals, understanding and implementing the V3 framework is increasingly essential for successfully developing and deploying digital measures that are trustworthy, meaningful, and ultimately beneficial to patients.

V3 Framework Validation Workflow

Digital Measure Evidence Generation

In 2025, the field of biometric monitoring technologies (BioMeTs) is defined by a critical imperative: establishing robust validation standards that ensure data quality, clinical relevance, and regulatory compliance. As connected digital medicine products that process sensor data to generate measures of physiological function, BioMeTs represent a revolutionary tool for clinical research and patient care [25]. However, their rapid proliferation has created a validation landscape reminiscent of laboratory biomarkers two decades ago—lacking standardized frameworks, common terminology, and widely accepted performance characteristics [25]. This guide examines how major funding entities—including the National Institutes of Health (NIH), the Advanced Research Projects Agency for Health (ARPA-H), and private grant-making organizations—are strategically directing resources to address these gaps through specific validation requirements and support for standardized frameworks.

The alignment between funding priorities and validation standards represents a pivotal shift in the BioMeTs ecosystem. Funders are increasingly mandating rigorous evaluation frameworks as a precondition for support, thereby accelerating the adoption of practices that ensure BioMeTs are "fit-for-purpose" for specific research contexts and clinical applications [25] [19]. This convergence is particularly evident in areas such as AI-powered diagnostics, remote patient monitoring, and digital biomarker development, where funders are prioritizing projects that demonstrate adherence to evolving validation paradigms [26].

Foundational Validation Framework: The V3 Model

The evaluation of BioMeTs requires a structured, multi-stage process to establish their reliability and clinical relevance. The Verification, Analytical Validation, and Clinical Validation (V3) framework has emerged as the foundational model for this purpose, providing a standardized approach to determining whether a BioMeT is "fit-for-purpose" [19].

V3 Stage Definitions and Requirements

Verification: A systematic evaluation process conducted by hardware manufacturers to confirm that sample-level sensor outputs meet specified technical requirements. This stage occurs computationally (in silico) and at the bench (in vitro), focusing on the fundamental technical performance of the sensor hardware itself [19].

Analytical Validation: Conducted at the intersection of engineering and clinical expertise, this stage evaluates the data processing algorithms that convert sample-level sensor measurements into physiological metrics. Analytical validation translates the evaluation procedure from the bench to in vivo settings, typically performed by the entity that created the algorithm (vendor or clinical trial sponsor) [19].

Clinical Validation: Performed by clinical trial sponsors to demonstrate that the BioMeT acceptably identifies, measures, or predicts a clinical, biological, physical, functional state, or experience in the defined context of use. This stage establishes the relationship between the BioMeT-derived metric and clinically meaningful endpoints, typically evaluated on cohorts of patients with and without the phenotype of interest [19].

Funding Landscape and Strategic Imperatives for 2025

Agency-Specific Funding Priorities and Validation Requirements

Table 1: 2025 Federal Funding Priorities for BioMeTs and Associated Validation Requirements

| Funding Agency | 2025 Budget Authority/Request | Primary BioMeT Focus Areas | Key Validation Requirements |
|---|---|---|---|
| National Institutes of Health (NIH) | $50.1 billion requested [26] | • All of Us Research Program (precision medicine) • AI-powered diagnostics • Digital biomarker development | • Adherence to V3 framework • Demonstration of clinical utility • Interoperability with EHR systems • Data standardization across platforms |
| Advanced Research Projects Agency for Health (ARPA-H) | $1.5 billion requested [26] | • Real-time biometric data collection • Privacy-enhancing technologies • Autonomous diagnostic systems [27] | • Human factors testing • Cybersecurity protocols • Bench-to-human testing phases • Algorithm transparency |
| National Institute of Standards and Technology (NIST) | Ongoing program funding [26] | • Biometric standards development • Interoperability testing • Performance benchmarking | • Technical performance standards • Cross-platform compatibility • Reference materials and protocols |

Private and Philanthropic Funding Priorities

Private and philanthropic funders are playing an increasingly important role in advancing BioMeT validation, particularly through support for decentralized clinical trials and digital biomarker development [26]. Notable contributors include:

- Gates Foundation: Focusing on global health applications, particularly resource-limited settings, with emphasis on practical implementation and accessibility of BioMeTs [26].

- Leducq Foundation: Supporting cardiovascular research that integrates biometric data, with requirements for robust statistical validation and clinical correlation [26].

Private sector funding is increasingly directed toward wearable sensors, remote monitoring platforms, and AI-driven diagnostics, with a strong emphasis on generating evidence that supports regulatory submissions and clinical adoption [26].

Experimental Protocols for BioMeT Validation

Protocol 1: Analytical Validation of a Novel Digital Biomarker

Objective: To establish the analytical validity of a novel digital biomarker for monitoring cardiovascular function via a wearable patch.

Materials and Equipment:

- BioMeT device (wearable patch with accelerometer and gyroscope)

- Reference standard (12-lead ECG with synchronized timestamping)

- Controlled motion platform for simulating human movement

- Data acquisition system with secure storage

- Statistical analysis software (R, Python with pandas, scikit-learn)

Methodology:

- Device Verification: Confirm sensor specifications per manufacturer claims using calibrated input signals across operational range (e.g., 0-10 Hz for motion sensors) [19].

- Reference Standard Synchronization: Implement precise time-synchronization (±10 ms) between BioMeT and reference standard data streams.

- Controlled Environment Testing: Collect data from 30 healthy volunteers performing standardized movements (rest, walking, running) while wearing both BioMeT and reference systems.

- Algorithm Performance Assessment: Evaluate the accuracy, precision, sensitivity, and specificity of the algorithm for detecting specific cardiovascular parameters against the reference standard.

- Statistical Analysis: Calculate intraclass correlation coefficients (ICC) for test-retest reliability, Bland-Altman plots for agreement analysis, and receiver operating characteristic (ROC) curves for classification performance.
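The test-retest ICC named in the statistical analysis step can be computed directly from a subjects-by-sessions table. The sketch below implements ICC(2,1) (two-way random effects, absolute agreement, single measurement), which is one common choice; the protocol does not specify which ICC form is intended, so this selection and the example heart rate data are assumptions.

```python
def icc_2_1(data):
    """ICC(2,1) for a list of rows, one row per subject and one column
    per session or rater. Requires at least 2 subjects and 2 columns."""
    n, k = len(data), len(data[0])
    grand = sum(sum(row) for row in data) / (n * k)
    row_means = [sum(row) / k for row in data]
    col_means = [sum(row[j] for row in data) / n for j in range(k)]
    # Two-way ANOVA sums of squares: subjects, sessions, residual.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in data for x in row)
    ss_err = ss_total - ss_rows - ss_cols
    msr = ss_rows / (n - 1)
    msc = ss_cols / (k - 1)
    mse = ss_err / ((n - 1) * (k - 1))
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)


# Hypothetical resting heart rate (bpm) from five volunteers, two sessions each.
sessions = [
    [62.0, 63.0],
    [71.0, 70.0],
    [58.0, 60.0],
    [80.0, 79.0],
    [66.0, 66.0],
]
print(f"ICC(2,1) = {icc_2_1(sessions):.3f}")
```

A statistical package should be preferred for reporting, since it will also provide confidence intervals, which this sketch omits.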

Acceptance Criteria: ICC > 0.8, sensitivity and specificity > 0.9 for detecting target physiological states, mean absolute percentage error < 5% for continuous parameters [19].
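The acceptance criteria above can be encoded as an explicit, pre-specified check so that pass/fail decisions are reproducible. This is an illustrative sketch only: the function names and the example values are hypothetical, while the thresholds are taken from the criteria as stated.

```python
def mape(predicted, reference):
    """Mean absolute percentage error, in percent (reference values must be non-zero)."""
    errors = [abs(p - r) / abs(r) for p, r in zip(predicted, reference)]
    return 100 * sum(errors) / len(errors)


def meets_acceptance(icc, sensitivity, specificity, mape_pct):
    """Apply the protocol thresholds; returns (overall_pass, per_criterion_results)."""
    checks = {
        "icc": icc > 0.8,
        "sensitivity": sensitivity > 0.9,
        "specificity": specificity > 0.9,
        "mape": mape_pct < 5.0,
    }
    return all(checks.values()), checks


# Hypothetical heart rate estimates (bpm) vs. ECG-derived reference values.
error_pct = mape([61.0, 72.0, 88.0], [60.0, 75.0, 90.0])
passed, detail = meets_acceptance(icc=0.85, sensitivity=0.93,
                                  specificity=0.95, mape_pct=error_pct)
print(round(error_pct, 2), passed)  # 2.63 True
```

Returning the per-criterion results alongside the overall decision makes it easier to document which criterion failed when a device does not pass.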

Protocol 2: Clinical Validation of a Remote Monitoring BioMeT

Objective: To clinically validate a wrist-worn BioMeT for detecting exacerbations in patients with chronic respiratory disease.

Study Design: Prospective observational cohort study with 200 patients followed for 90 days.

Methodology:

- Participant Recruitment: Enroll adults with confirmed diagnosis of moderate-to-severe chronic obstructive pulmonary disease.

- Device Deployment: Provide participants with wrist-worn BioMeT and train on proper use, charging, and data synchronization.

- Data Collection: BioMeT continuously collects motion, heart rate, and respiratory rate data; participants complete daily symptom diaries.

- Event Detection: Algorithm identifies potential exacerbations based on predefined biometric signatures; these are compared with patient-reported events and healthcare utilization records.

- Clinical Correlation: Assess sensitivity, specificity, positive predictive value, and negative predictive value of BioMeT-detected events against clinician-adjudicated exacerbations.

Endpoint Comparison: Compare time to detection between BioMeT algorithm and patient self-report, with statistical analysis using Cox proportional hazards models [25].
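The clinical correlation metrics in this protocol all derive from a 2×2 table of BioMeT-detected events against clinician-adjudicated exacerbations. The sketch below shows the arithmetic; the counts are hypothetical, and the unit of analysis (for example, patient-days or monitoring windows) is an assumption that a real study would need to define in advance.

```python
def diagnostic_metrics(tp, fp, fn, tn):
    """Sensitivity, specificity, PPV, and NPV from confusion-matrix counts.
    Returns NaN for any metric whose denominator is zero."""
    def ratio(num, den):
        return num / den if den else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),  # adjudicated events that were detected
        "specificity": ratio(tn, tn + fp),  # non-events correctly left unflagged
        "ppv": ratio(tp, tp + fp),          # detections that were true events
        "npv": ratio(tn, tn + fn),          # unflagged windows that were truly event-free
    }


# Hypothetical counts over monitoring windows.
metrics = diagnostic_metrics(tp=45, fp=15, fn=5, tn=135)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Because PPV and NPV depend on how common exacerbations are in the cohort, they should be reported together with the observed event rate.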

The Scientist's Toolkit: Essential Research Reagents for BioMeT Validation

Table 2: Essential Research Reagents and Resources for BioMeT Validation Studies

| Reagent/Resource | Function in Validation | Example Applications | Critical Specifications |
|---|---|---|---|
| Reference Standard Devices | Provides gold-standard measurement for comparison | ECG for cardiovascular BioMeTs, polysomnography for sleep BioMeTs, motion capture for activity BioMeTs | • Validation against primary standards • Measurement uncertainty quantification • Appropriate sampling frequency |
| Data Synchronization Systems | Ensures temporal alignment between BioMeT and reference data | Hardware triggers, network time protocol, custom timestamping | • Sub-100 ms synchronization accuracy • Minimal jitter • Robust failure recovery |
| Controlled Testing Environments | Enables verification under known conditions | Motion simulators, environmental chambers, signal generators | • Repeatable testing protocols • Comprehensive parameter sweeps • Real-world condition simulation |
| Open-Source Analysis Libraries | Facilitates standardized data processing and statistical analysis | Python BioSPPy, R signal, MATLAB toolboxes | • Peer-reviewed algorithms • Documentation and examples • Community support and maintenance |
| Validation Data Repositories | Provides benchmark datasets for algorithm development and testing | PhysioNet, Biometric Evaluation datasets | • Expert annotation • Diverse participant demographics • Comprehensive metadata |

Strategic Imperatives for Research Teams

Navigating the 2025 Funding Landscape

Research teams seeking funding for BioMeT development must align their validation strategies with funder priorities and requirements. Key strategic imperatives include:

- Embrace the V3 Framework: Implement verification, analytical validation, and clinical validation as distinct but connected phases of BioMeT evaluation, with documented evidence at each stage [19].

- Address Human Factors Early: Incorporate user-centered design and human factors testing throughout development, as required by ARPA-H and other funders [25] [27].

- Prioritize Data Standards: Ensure interoperability with EHR systems and adherence to data format standards, particularly for NIH-funded projects [26].

- Demonstrate Clinical Utility: Move beyond technical performance to show how BioMeT-derived measures impact clinical decision-making and patient outcomes [19].

The year 2025 represents an inflection point for validation standards in biometric monitoring technologies. Through strategic funding initiatives, NIH, ARPA-H, and private grant-makers are creating an ecosystem where rigorous validation is not just encouraged but required. The adoption of frameworks like V3 provides a common language and methodology for establishing that BioMeTs are truly "fit-for-purpose" for specific research and clinical contexts.

For research teams, success in this evolving landscape requires a proactive approach to validation—one that integrates regulatory science, clinical expertise, and engineering excellence throughout the development process. By aligning with funder priorities and embracing standardized validation frameworks, researchers can accelerate the development of high-quality, clinically valuable BioMeTs that transform healthcare and advance precision medicine.

The fields of wearable sensors and AI-powered diagnostics are rapidly converging to create a new paradigm in biometric monitoring and medical diagnostics. This transformation is primarily driven by the growing demand for remote patient monitoring, the need for more personalized medicine, and advancements in artificial intelligence that can interpret complex physiological data [28] [29]. For researchers, scientists, and drug development professionals, understanding this landscape is crucial for developing valid, reliable, and regulatory-compliant digital measures. These technologies are increasingly being incorporated into clinical trials and pharmaceutical development to provide continuous, objective data on patient outcomes, moving beyond traditional episodic measurements to richer, real-world evidence [24]. The global wearable sensors market, valued at $1.9 billion in 2024 and projected to reach $13.2 billion by 2034, underscores the significant investment and growth in this sector [28]. This article provides a comparative analysis of key technologies and players, framed within the essential context of validation frameworks required for regulatory and scientific acceptance.

The wearable sensors market is segmented by type, application, and end-user, with accelerometers currently dominating the market share by type [28]. Wristwear, such as smartwatches and fitness trackers, represents the largest application segment, while the consumer sector is the largest end-user, followed by healthcare [28]. Regionally, Asia-Pacific held the largest market share in 2024, exceeding 40%, but Europe is expected to witness the highest CAGR during the forecast period [28].

Leading Companies in Wearable Sensors and Digital Health

The competitive landscape includes established semiconductor companies, specialized sensor manufacturers, and emerging digital health technology firms. Key players identified in the wearable sensors market include STMicroelectronics, Panasonic Corporation, Infineon Technologies, Knowles Electronics, NXP Semiconductors, ROHM Semiconductor, TE Connectivity, MEMSIC, Analog Devices, and Murata [28]. These companies primarily compete through product launches and strategic acquisitions to expand their technological capabilities and market reach.

In the broader healthcare technology space, several companies are leading the integration of these sensors into diagnostic and therapeutic applications. Notable companies recognized among the top 50 healthcare technology companies of 2025 include [30]:

- Natera: A leader in cell-free DNA testing for oncology, women's health, and organ health.

- Spring Health: Provides a precision mental health platform for employers and payers.

- Komodo Health: Offers an AI-powered healthcare intelligence platform that tracks de-identified patient journeys.

- CareDx: Specializes in molecular diagnostics and digital tools for transplant patient monitoring.

- Qualifacts: Provides electronic health record solutions for behavioral health organizations.

Comparative Analysis of Wearable Sensor Technologies

Wearable sensors form the foundational layer for biometric monitoring, capturing raw physiological and movement data. The performance characteristics of these sensors directly impact the quality and reliability of the digital measures derived from them. The table below provides a structured comparison of primary wearable sensor technologies based on key parameters critical for research applications.

Table 1: Performance Comparison of Key Wearable Sensor Technologies

| Sensor Technology | Primary Measured Biometrics | Common Form Factors | Key Strengths | Key Limitations / Validation Challenges |
|---|---|---|---|---|
| Inertial Measurement Units (Accelerometers, Gyroscopes) [28] [31] | Movement, acceleration, step count, posture, gait. | Wristwear, Footwear, Bodywear | Compact, low power consumption, well-established for activity profiling. | Data can be noisy; requires complex algorithms for specific movement classification; accuracy varies with placement. |
| Optical Sensors (e.g., PPG) [32] | Heart rate, blood oxygen saturation (SpO₂), potentially blood pressure. | Wristwear, Smart Rings | Non-invasive, enables continuous vital sign monitoring. | Signal susceptible to motion artifacts; skin pigmentation and body hair can affect accuracy; calibration challenges for advanced metrics like blood pressure. |
| Electrodes (Wet, Dry, Microneedle) [32] | Electrical activity of heart (ECG), brain (EEG), muscles (EMG). | Chest Patches, Headbands, Smart Clothing | Provides clinical-grade electrical biosignals; high accuracy for specific physiological events. | Skin contact impedance can affect signal quality (especially dry electrodes); comfort and long-term wearability issues for some designs. |
| Chemical Sensors (e.g., for Interstitial Fluid) [32] | Glucose, lactate, alcohol, electrolytes. | Skin Patches, Smart Watches (emerging) | Potential for continuous, non-invasive monitoring of metabolites. | Maturity varies significantly; calibration and specificity are major hurdles; limited commercial availability for non-glucose analytes. |

Supporting Experimental Data and Validation

The validity of data generated from these sensors is paramount for research use. A 2024 systematic review of wearable devices in field hockey offers experimental data on the performance of GPS units and heart rate monitors in a real-world, high-mobility setting. The review reported "reasonably high between-trial ICCs ranging from 0.77 to 0.99," indicating good to excellent reliability [31]. It also illustrates the challenges of wearable sensor data, noting that "discrepancies in sampling rates and performance bands makes it arduous to draw comparisons between studies" [31]. This underscores the need for standardized experimental protocols, even within a single sport.

Frameworks for Validating Digital Measures in Research

For digital measures to be accepted in pharmaceutical research and development, they must undergo a rigorous validation process. The V3 Framework, developed by the Digital Medicine Society (DiMe) and adapted for preclinical research, provides a structured approach [24]. This framework is essential for establishing the reliability and relevance of digital measures, ensuring they are fit for their intended use in drug discovery and development.

The V3 Validation Framework

The framework breaks down validation into three distinct but connected stages [24]:

- Verification: Confirms that the digital technology accurately captures and stores the intended raw data. This involves ensuring sensors and data acquisition systems function correctly in the intended environment.

- Analytical Validation: Assesses the precision and accuracy of the algorithms that transform the raw sensor data into a meaningful digital measure (e.g., converting raw accelerometer data into a "step count" or "gait score").

- Clinical Validation: Establishes that the digital measure accurately reflects the specific biological, functional, or behavioral state it is intended to measure within its Context of Use (COU).

The following workflow diagram illustrates the application of this framework from technology development to a qualified digital biomarker.

Diagram 1: The V3 Framework for Digital Measure Validation
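To make the analytical validation stage more concrete, the sketch below counts steps from an accelerometer magnitude signal using a simple peak-picking rule and compares the result with a reference count. It is an illustrative toy, not an algorithm taken from the V3 literature: the function name `count_steps`, the threshold, the minimum step interval, the synthetic 2 Hz signal, and the reference count are all assumptions chosen for the example.

```python
import math


def count_steps(accel_magnitude, fs_hz, threshold, min_step_interval_s=0.3):
    """Count local maxima above `threshold` that are at least
    `min_step_interval_s` apart (hypothetical, simplified step detector)."""
    min_gap = max(1, round(min_step_interval_s * fs_hz))
    steps = 0
    last_peak = -min_gap  # allows a peak at the very start of the record
    for i in range(1, len(accel_magnitude) - 1):
        prev_v, cur_v, next_v = accel_magnitude[i - 1 : i + 2]
        is_peak = prev_v < cur_v >= next_v
        if is_peak and cur_v > threshold and i - last_peak >= min_gap:
            steps += 1
            last_peak = i
    return steps


# Synthetic raw data (assumption): 10 s of walking at 2 steps per second,
# sampled at 50 Hz, expressed as acceleration magnitude in g.
FS_HZ = 50
signal = [1.0 + 0.5 * math.sin(2 * math.pi * 2.0 * (i / FS_HZ)) for i in range(500)]

detected_steps = count_steps(signal, FS_HZ, threshold=1.2)

# Reference count (assumption): what a reference method would report
# for the same 10 s window.
reference_steps = 20
percent_error = 100.0 * (detected_steps - reference_steps) / reference_steps

print(f"detected={detected_steps}, reference={reference_steps}, error={percent_error:.1f}%")
```

In a real study the reference count would come from an accepted standard, and the comparison would be repeated across participants, activities, and wear locations rather than on a single clean signal.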

Experimental Protocol for Sensor Validation

Researchers can implement the V3 framework through a structured experimental protocol. The methodology below is adapted from principles outlined in the validation literature and systematic reviews on wearable technology [31] [24].

- Aim: To determine the validity and reliability of a specific digital measure (e.g., heart rate from a PPG sensor) against an accepted reference standard.

- Experimental Design: A controlled laboratory study with a cross-comparison design.

- Participants: A cohort representative of the intended population (e.g., healthy adults, patients with a specific condition), with sample size justified by a power calculation.

- Protocol:

  - Simultaneous Data Collection: Participants wear the test wearable device (e.g., smartwatch) while simultaneously being connected to the reference standard (e.g., 12-lead ECG for heart rate).

  - Protocolized Activities: Participants perform a series of activities in a controlled sequence to test the sensor across a range of physiological states. This typically includes:

    - Resting (seated, supine)

    - Controlled breathing

    - Postural changes (e.g., sit-to-stand)

    - Steady-state walking/running on a treadmill at varying intensities

    - Recovery period

  - Data Synchronization: Precise time-synchronization between the test device and the reference standard is critical.

- Data Analysis:

  - Statistical Comparison: Use Bland-Altman analysis to assess agreement (bias and limits of agreement) and intraclass correlation coefficients (ICC) for reliability between the test device and the reference standard.

  - Error Analysis: Investigate the root cause of any significant discrepancies (e.g., motion artifacts, poor skin contact).
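The statistical comparison step can be sketched in a few lines of Python. The example below computes the Bland-Altman bias and 95% limits of agreement, plus one common ICC form, ICC(2,1) (two-way random effects, absolute agreement, single measurement). The choice of ICC form, the function names, and the paired heart rate values are assumptions made for illustration; a real analysis should select the ICC model that matches the study design.

```python
from statistics import mean, stdev


def bland_altman(test, reference):
    """Return (bias, lower_loa, upper_loa) for paired measurements."""
    if len(test) != len(reference) or len(test) < 2:
        raise ValueError("need two equal-length series with at least 2 pairs")
    diffs = [t - r for t, r in zip(test, reference)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation (n - 1 denominator)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


def icc_2_1(test, reference):
    """ICC(2,1) from a two-way ANOVA decomposition with k = 2 'raters'
    (the test device and the reference standard)."""
    rows = list(zip(test, reference))
    n, k = len(rows), 2
    grand = mean(v for row in rows for v in row)
    row_means = [mean(row) for row in rows]
    col_means = [mean(col) for col in zip(*rows)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((v - grand) ** 2 for row in rows for v in row)
    ss_err = ss_total - ss_rows - ss_cols
    msr = ss_rows / (n - 1)               # between-subject mean square
    msc = ss_cols / (k - 1)               # between-method mean square
    mse = ss_err / ((n - 1) * (k - 1))    # residual mean square
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)


# Hypothetical paired heart rates (bpm) across the protocolized activities.
watch_hr = [62, 75, 88, 101, 120, 143]   # test device (assumed values)
ecg_hr = [60, 74, 90, 100, 118, 145]     # reference standard (assumed values)

bias, lower_loa, upper_loa = bland_altman(watch_hr, ecg_hr)
icc = icc_2_1(watch_hr, ecg_hr)
print(f"bias={bias:.2f} bpm, LoA=[{lower_loa:.2f}, {upper_loa:.2f}], ICC(2,1)={icc:.4f}")
```

Reporting the bias and limits of agreement alongside the ICC is useful because a high ICC can coexist with a systematic offset that only the Bland-Altman analysis makes visible.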

AI-Powered Diagnostics and Clinical Decision Support

The data from wearable sensors serves as a key input for AI-powered diagnostic tools. Artificial intelligence, particularly machine learning and deep learning, is transforming diagnostics by analyzing complex datasets—including medical images, biosignals, and electronic health records—to identify patterns that may elude human observation [29]. The AI diagnostic market is evolving rapidly, with trends pointing toward Explainable AI (XAI), General AI (GAI), and even exploratory Quantum AI (QAI) to enhance accuracy, speed, and trust in these systems [29].

The Emerging Workflow of AI-Assisted Diagnosis

The integration of AI is fundamentally changing clinical workflows. A 2025 qualitative study on stroke care provides a compelling model. Traditionally, diagnosis is an iterative process where a clinician gathers data, forms a hypothesis, and refines it until a diagnostic label is reached. With AI, this process is being transformed [33].

In advanced stroke hubs, the diagnostic journey now often begins with an AI system that processes MRI/CT images and distributes a preliminary diagnosis (e.g., "large vessel occlusion detected") to the entire stroke team within minutes. The clinical team's role then shifts to verifying the AI's claim against other evidence and clinical findings, a process that can trigger early activation of treatment pathways like thrombectomy [33]. This "AI-as-first-reader" model, where the algorithmic output precedes the clinician's diagnosis, represents a significant shift in clinical agency and workflow.

The following diagram contrasts the traditional diagnostic process with the emerging AI-assisted model.

Diagram 2: Traditional vs. AI-Assisted Diagnostic Workflow

Key Considerations for AI Diagnostics in Research

For drug development professionals, several factors are critical when evaluating AI-powered diagnostic tools for use in clinical trials [29] [33]:

- Data Quality and Bias: AI algorithms require large, high-quality, and representative datasets. Biased training data can lead to incorrect diagnoses and unfair outcomes for underrepresented populations.

- Algorithmic Transparency and Explainability: The "black box" nature of some complex AI models poses a challenge for regulatory approval and clinical trust. Explainable AI (XAI) is crucial for understanding the rationale behind an AI's diagnosis.

- Regulatory and Ethical Compliance: Issues of data privacy, security, and informed consent are amplified when using AI. Clear accountability for decisions made with AI assistance must be established.
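One simple way to probe the data quality and bias concern above is to report a performance metric separately for each demographic subgroup rather than only in aggregate. The sketch below computes sensitivity per group from labeled predictions. The record layout, the group labels, and the data are hypothetical, and sensitivity is only one of several metrics that would normally be examined.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """records: iterable of (group, truth, prediction), with truth and
    prediction as booleans. Returns {group: sensitivity} for every group
    that has at least one true positive case."""
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    for group, truth, prediction in records:
        if truth:
            if prediction:
                true_pos[group] += 1
            else:
                false_neg[group] += 1
    groups = set(true_pos) | set(false_neg)
    return {g: true_pos[g] / (true_pos[g] + false_neg[g]) for g in groups}


# Hypothetical evaluation set: (subgroup, condition present, AI flagged it).
records = [
    ("A", True, True), ("A", True, True), ("A", True, True), ("A", True, True),
    ("A", False, False),
    ("B", True, True), ("B", True, False), ("B", True, True), ("B", True, False),
    ("B", False, False),
]

rates = sensitivity_by_group(records)
gap = max(rates.values()) - min(rates.values())
for group in sorted(rates):
    print(f"group {group}: sensitivity={rates[group]:.2f}")
print(f"largest sensitivity gap between groups: {gap:.2f}")
```

A large gap between subgroups does not by itself prove biased training data, but it is a signal that the tool's performance should be examined more closely before it is used as a trial endpoint or enrollment criterion.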

The Scientist's Toolkit: Essential Research Reagents and Materials

For researchers designing studies involving wearable sensors and digital biomarkers, the following toolkit outlines essential components and their functions, derived from the cited technologies and validation frameworks.

Table 2: Essential Research Toolkit for Digital Biomarker Development

| Tool/Component | Function in Research | Example Specifics |

|---|---|---|

| Multi-Modal Sensor Platform | Captures raw physiological and behavioral data (e.g., acceleration, heart rate, ECG). | Research-grade devices with raw data access (e.g., from ActiGraph, GENEActiv, Empatica) [31]. |

| Reference Standard Instruments | Provide gold-standard measurements for validating digital measures (Analytical Validation). | 12-lead ECG, metabolic cart for energy expenditure, clinical-grade spirometer, lab-based blood analyzers [31] [24]. |

| Data Synchronization System | Precisely time-aligns data from multiple sensors and reference systems. | Dedicated synchronization hardware, middleware such as Lab Streaming Layer (LSL), or software-based timestamping with high precision. |

| Algorithm Development Environment | Platform for building and testing algorithms that convert raw sensor data into digital measures. | Python/R with signal processing and machine learning libraries (e.g., SciPy, TensorFlow, PyTorch) for custom feature extraction and model training [29]. |

| V3 Framework Checklist | Guides the structured validation of the digital measure from sensor to clinical relevance. | A protocol checklist based on Verification, Analytical Validation, and Clinical Validation principles [24]. |

| Regulatory Guidance Documents | Informs study design to meet standards for regulatory submission. | FDA's "Bioanalytical Method Validation Guidance," DiMe's V3 Framework publications, and ICH guidelines [24]. |

The integration of wearable sensors and AI-powered diagnostics presents a transformative opportunity for medical research and drug development. A thorough understanding of the performance characteristics, limitations, and validation requirements of these technologies is fundamental to their successful application. As the field evolves, the rigorous application of frameworks like V3 will be paramount in ensuring that digital measures are reliable, clinically meaningful, and ultimately acceptable to regulators. This will enable researchers to robustly capture the patient experience through continuous, objective data, accelerating the development of new therapeutics and personalized medicine approaches.

Implementing Robust Validation Methodologies and Clinical Applications

Integrating AI and Machine Learning for Enhanced Accuracy and Liveness Detection

The increasing reliance on biometric verification across sectors such as financial services, healthcare, and border security has made robust liveness detection a critical component of identity systems. This technology determines whether a biometric sample comes from a live person present at the time of capture, thereby preventing spoofing attempts using photos, videos, or deepfakes [34]. For researchers and professionals validating these technologies against emerging regulatory frameworks, understanding the integration of AI and machine learning is paramount. These technologies are not merely enhancements but fundamental requirements for achieving the accuracy and reliability demanded by the international standards and regulations that govern the validation of biometric technologies [35] [36]. This section compares the performance of leading liveness detection and accuracy-enhancing methods and provides the experimental data and protocols needed for rigorous technological assessment.

The Critical Role of AI and ML in Modern Biometrics

Artificial Intelligence (AI), particularly deep learning models, and Machine Learning (ML) have transformed biometrics from a static verification tool into a dynamic, adaptive security layer. These technologies directly address two core challenges: maximizing accuracy in diverse real-world conditions and ensuring robust liveness detection against evolving spoofing attacks.

AI-driven systems, such as Convolutional Neural Networks (CNNs) and the emerging Capsule Networks, analyze facial features with unprecedented detail, identifying subtle characteristics that are imperceptible to the human eye or traditional algorithms [35] [37]. This capability is crucial for maintaining high accuracy across varied demographics and environmental conditions, a key metric for regulatory validation.

Simultaneously, ML models are the foundation of modern liveness detection. They are trained on massive, diverse datasets of real human features and known spoofing artifacts (such as printed photos, digital screens, and masks) to learn the minute cues, including skin texture, blood flow patterns, and micro-movements, that distinguish a live human from an inanimate spoof [34]. This continuous learning process is essential for defending against new, AI-generated deepfakes, whose sophistication is growing rapidly [8] [34].

Comparative Analysis of Liveness Detection Modalities

Liveness detection methodologies are broadly categorized into two approaches, each with distinct mechanisms, strengths, and ideal applications. The following table provides a structured comparison, crucial for evaluating their suitability for specific regulatory and use-case requirements.

Table 1: Comparative Analysis of Active vs. Passive Liveness Detection

| Feature | Active Liveness Detection | Passive Liveness Detection |

|---|---|---|

| User Interaction | Required (e.g., blinking, head turns) [34] | None required; works in the background [34] |

| Detection Method | Motion analysis and response to prompts [34] | AI-based image analysis (texture, depth, micro-expressions) [36] [34] |

| User Experience | More intrusive; may cause friction [34] | Seamless and frictionless [34] |

| Spoofing Resistance | High, depending on implementation [34] | Very high, especially against advanced deepfakes [34] |

| Processing Speed | Slightly slower due to user prompts [34] | Generally faster [34] |

| Best Use Cases | High-risk or high-security environments [34] | Scalable onboarding, mobile-first user experiences [34] |

Experimental Protocols for Liveness Detection

To validate the performance claims of liveness detection systems, researchers employ standardized experimental protocols. These methodologies are designed to simulate real-world spoofing attacks and measure the system's resilience.

Presentation Attack Detection (PAD) Evaluation:

- Objective: To determine the system's ability to correctly reject Presentation Attacks (PAs), such as photos, videos, masks, and deepfakes.

- Protocol: A dataset containing both bona fide (live) samples and attack (spoof) samples is used. The system processes each sample, and the outcomes are tallied to calculate two key metrics in line with the ISO/IEC 30107-3 standard [34]: the Attack Presentation Classification Error Rate (APCER), the proportion of attack presentations wrongly classified as bona fide, and the Bona Fide Presentation Classification Error Rate (BPCER), the proportion of bona fide presentations wrongly classified as attacks.

- Deepfake-Specific Testing: Given the surge in AI-generated fraud, a dedicated protocol involves challenging the system with a dataset of hyper-realistic deepfakes. The model analyzes pixel-level inconsistencies, unnatural eye blinking patterns, and AI-generated artifacts that are not present in live human videos [34]. The quadrupling of deepfake usage from 2023 to 2024 makes this a critical test [34].
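The tallying step of the PAD protocol can be sketched as follows. The example computes APCER separately for each attack type, then takes the worst case as a summary, and computes a single BPCER over the live samples. Reporting per attack type reflects common practice in PAD evaluations; the function name, the tuple layout, the attack type labels, and the sample outcomes are assumptions made for illustration.

```python
from collections import defaultdict


def pad_error_rates(samples):
    """samples: iterable of (label, attack_type, decision).
    label and decision are "attack" or "bona_fide"; attack_type is a
    string for attacks and None for bona fide samples.
    Returns ({attack_type: APCER}, BPCER)."""
    attack_total = defaultdict(int)
    attack_accepted = defaultdict(int)
    bona_total = 0
    bona_rejected = 0
    for label, attack_type, decision in samples:
        if label == "attack":
            attack_total[attack_type] += 1
            if decision == "bona_fide":   # attack wrongly accepted
                attack_accepted[attack_type] += 1
        else:
            bona_total += 1
            if decision == "attack":      # live user wrongly rejected
                bona_rejected += 1
    apcer = {t: attack_accepted[t] / attack_total[t] for t in attack_total}
    bpcer = bona_rejected / bona_total if bona_total else float("nan")
    return apcer, bpcer


# Hypothetical outcomes from a small evaluation run.
samples = [
    ("attack", "print", "attack"), ("attack", "print", "attack"),
    ("attack", "print", "attack"), ("attack", "print", "bona_fide"),
    ("attack", "deepfake", "attack"), ("attack", "deepfake", "bona_fide"),
    ("bona_fide", None, "bona_fide"), ("bona_fide", None, "bona_fide"),
    ("bona_fide", None, "bona_fide"), ("bona_fide", None, "bona_fide"),
    ("bona_fide", None, "attack"),
]

apcer, bpcer = pad_error_rates(samples)
worst_apcer = max(apcer.values())
print(f"APCER by attack type: {apcer}")
print(f"worst-case APCER={worst_apcer:.2f}, BPCER={bpcer:.2f}")
```

Keeping the attack types separate matters because a system can look strong on average while remaining weak against one specific class of attack, such as deepfakes.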

User Experience and Performance Benchmarking:

- Objective: To measure the impact of liveness detection on system throughput and user acceptance.

- Protocol: For active liveness, researchers measure the total task completion time and the error rate (how often users fail the challenge). For passive liveness, the focus is on the marginal increase in processing time compared to a baseline facial recognition step. Both methods are evaluated through user satisfaction surveys to quantify perceived friction.

Diagram 1: Liveness Detection Workflow Comparison. This diagram illustrates the divergent user journeys for active (red) and passive (blue) liveness detection methodologies.

Quantitative Performance of AI-Enhanced Facial Recognition

The integration of AI has dramatically elevated the performance benchmarks for facial recognition technology. Under controlled laboratory conditions, top-performing algorithms now demonstrate accuracy rates exceeding 99.5%, with some verification algorithms reaching as high as 99.97%—a performance level that rivals leading iris recognition systems [35]. However, for regulatory validation, it is critical to examine performance across diverse scenarios and demographic groups.

Table 2: AI-Enhanced Facial Recognition Performance Metrics (2024-2025)

| Performance Metric | Laboratory / Optimal Conditions | Real-World / Challenging Conditions | Notes & Context |

|---|---|---|---|

| Top Verification Accuracy | 99.97% [35] | Not directly comparable | Ideal lighting, front-facing, high-resolution images. |

| General Identification Accuracy | >99.5% [35] | Varies significantly | 45 of 105 NIST-tested algorithms were >99% accurate on high-quality images [35]. |

| False Negative Identification Rate (FNIR) | <0.15% [35] | Can increase to 9.3% [35] | Measured at a False Positive Identification Rate (FPIR) of 0.001. "In the wild" factors cause the performance drop. |